
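Text2vec: Text to Vector, Get Sentence Embeddings. Text vectorization: represent text (words, sentences, and paragraphs) as vectors.

text2vec implements several text representation and text similarity models, including Word2Vec, RankBM25, BERT, Sentence-BERT, and CoSENT, and compares their results on the text semantic matching (similarity computation) task.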

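[2023/09/20] v1.2.9: Added multi-device inference (multi-process inference across multiple GPUs or CPUs) and a command-line tool (CLI) that runs batch text vectorization from scripts. See Release-v1.2.9.

[2023/09/03] v1.2.4: Added support for training FlagEmbedding models and released the Chinese matching model shibing624/text2vec-bge-large-chinese. It is trained with the supervised CoSENT method, starting from BAAI/bge-large-zh-noinstruct, on a Chinese matching dataset. On the Chinese test sets it improves over the original model, with a clear gain in discriminating short texts. See Release-v1.2.4.

[2023/07/17] v1.2.2: Added multi-GPU training and released the multilingual matching model shibing624/text2vec-base-multilingual. It is trained with the CoSENT method, starting from sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2, on the manually curated multilingual STS dataset shibing624/nli-zh-all/text2vec-base-multilingual-dataset. On the Chinese and English test sets it improves over the original model. See Release-v1.2.2.

[2023/06/19] v1.2.1: Replaced the Chinese matching model shibing624/text2vec-base-chinese-nli with the new shibing624/text2vec-base-chinese-sentence. Because the CoSENT loss is sensitive to ranking, a high-quality STS dataset with relevance ordering, shibing624/nli-zh-all/text2vec-base-chinese-sentence-dataset, was manually selected and curated; the new model improves on every evaluation set. Also released shibing624/text2vec-base-chinese-paraphrase, a Chinese matching model suited to s2p. See Release-v1.2.1.

[2023/06/15] v1.2.0: Released the Chinese matching model shibing624/text2vec-base-chinese-nli, a CoSENT text matching model based on nghuyong/ernie-3.0-base-zh and trained on the full corpus of the Chinese NLI dataset shibing624/nli_zh. It improves clearly on every evaluation set. See Release-v1.2.0.

[2022/03/12] v1.1.4: Released the Chinese matching model shibing624/text2vec-base-chinese, a CoSENT matching model trained on the Chinese STS training set. See Release-v1.1.4.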

Guide

For details on text vector representation methods, see the wiki: Text Vector Representation Methods

Text Matching

| Arch | BaseModel | Model | English-STS-B |
|---|---|---|---|
| GloVe | glove | Avg_word_embeddings_glove_6B_300d | 61.77 |
| BERT | bert-base-uncased | BERT-base-cls | 20.29 |
| BERT | bert-base-uncased | BERT-base-first_last_avg | 59.04 |
| BERT | bert-base-uncased | BERT-base-first_last_avg-whiten(NLI) | 63.65 |
| SBERT | sentence-transformers/bert-base-nli-mean-tokens | SBERT-base-nli-cls | 73.65 |
| SBERT | sentence-transformers/bert-base-nli-mean-tokens | SBERT-base-nli-first_last_avg | 77.96 |
| CoSENT | bert-base-uncased | CoSENT-base-first_last_avg | 69.93 |
| CoSENT | sentence-transformers/bert-base-nli-mean-tokens | CoSENT-base-nli-first_last_avg | 79.68 |
| CoSENT | sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 | shibing624/text2vec-base-multilingual | 80.12 |

| Arch | BaseModel | Model | ATEC | BQ | LCQMC | PAWSX | STS-B | Avg |
|---|---|---|---|---|---|---|---|---|
| SBERT | bert-base-chinese | SBERT-bert-base | 46.36 | 70.36 | 78.72 | 46.86 | 66.41 | 61.74 |
| SBERT | hfl/chinese-macbert-base | SBERT-macbert-base | 47.28 | 68.63 | 79.42 | 55.59 | 64.82 | 63.15 |
| SBERT | hfl/chinese-roberta-wwm-ext | SBERT-roberta-ext | 48.29 | 69.99 | 79.22 | 44.10 | 72.42 | 62.80 |
| CoSENT | bert-base-chinese | CoSENT-bert-base | 49.74 | 72.38 | 78.69 | 60.00 | 79.27 | 68.01 |
| CoSENT | hfl/chinese-macbert-base | CoSENT-macbert-base | 50.39 | 72.93 | 79.17 | 60.86 | 79.30 | 68.53 |
| CoSENT | hfl/chinese-roberta-wwm-ext | CoSENT-roberta-ext | 50.81 | 71.45 | 79.31 | 61.56 | 79.96 | 68.61 |

Notes:

- The SBERT-macbert-base model is trained with the SBERT method; run examples/training_sup_text_matching_model.py to train it.
- The sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 model is trained with SBERT. It is the multilingual version of paraphrase-MiniLM-L12-v2 and supports Chinese, English, and other languages.

| Arch | BaseModel | Model | ATEC | BQ | LCQMC | PAWSX | STS-B | SOHU-dd | SOHU-dc | Avg | QPS |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Word2Vec | word2vec | w2v-light-tencent-chinese | 20.00 | 31.49 | 59.46 | 2.57 | 55.78 | 55.04 | 20.70 | 35.03 | 23769 |
| SBERT | xlm-roberta-base | sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 | 18.42 | 38.52 | 63.96 | 10.14 | 78.90 | 63.01 | 52.28 | 46.46 | 3138 |
| CoSENT | hfl/chinese-macbert-base | shibing624/text2vec-base-chinese | 31.93 | 42.67 | 70.16 | 17.21 | 79.30 | 70.27 | 50.42 | 51.61 | 3008 |
| CoSENT | hfl/chinese-lert-large | GanymedeNil/text2vec-large-chinese | 32.61 | 44.59 | 69.30 | 14.51 | 79.44 | 73.01 | 59.04 | 53.12 | 2092 |
| CoSENT | nghuyong/ernie-3.0-base-zh | shibing624/text2vec-base-chinese-sentence | 43.37 | 61.43 | 73.48 | 38.90 | 78.25 | 70.60 | 53.08 | 59.87 | 3089 |
| CoSENT | nghuyong/ernie-3.0-base-zh | shibing624/text2vec-base-chinese-paraphrase | 44.89 | 63.58 | 74.24 | 40.90 | 78.93 | 76.70 | 63.30 | 63.08 | 3066 |
| CoSENT | sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 | shibing624/text2vec-base-multilingual | 32.39 | 50.33 | 65.64 | 32.56 | 74.45 | 68.88 | 51.17 | 53.67 | 3138 |
| CoSENT | BAAI/bge-large-zh-noinstruct | shibing624/text2vec-bge-large-chinese | 38.41 | 61.34 | 71.72 | 35.15 | 76.44 | 71.81 | 63.15 | 59.72 | 844 |

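Notes:

- shibing624/text2vec-base-chinese is trained with the CoSENT method, starting from hfl/chinese-macbert-base, on the Chinese STS-B data, and achieves good results on the Chinese STS-B test set. Run examples/training_sup_text_matching_model.py to train it. The model files are uploaded to the HF model hub. Recommended for general Chinese semantic matching tasks.
- shibing624/text2vec-base-chinese-sentence is trained with the CoSENT method, starting from nghuyong/ernie-3.0-base-zh, on the manually curated Chinese STS dataset shibing624/nli-zh-all/text2vec-base-chinese-sentence-dataset, and achieves good results on the Chinese NLI test sets. Run examples/training_sup_text_matching_model_jsonl_data.py to train it. The model files are uploaded to the HF model hub. Recommended for Chinese s2s (sentence vs sentence) semantic matching tasks.
- shibing624/text2vec-base-chinese-paraphrase is trained with the CoSENT method, starting from nghuyong/ernie-3.0-base-zh, on the manually curated Chinese STS dataset shibing624/nli-zh-all/text2vec-base-chinese-paraphrase-dataset. Compared with shibing624/nli-zh-all/text2vec-base-chinese-sentence-dataset, this dataset adds s2p (sentence to paraphrase) data, which strengthens the model's representation of long texts; the model reaches SOTA on the Chinese NLI test sets. Run examples/training_sup_text_matching_model_jsonl_data.py to train it. The model files are uploaded to the HF model hub. Recommended for Chinese s2p (sentence vs paragraph) semantic matching tasks.
- shibing624/text2vec-base-multilingual is trained with the CoSENT method, starting from sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2, on the manually curated multilingual STS dataset shibing624/nli-zh-all/text2vec-base-multilingual-dataset, and improves over the original model on the Chinese and English test sets. Run examples/training_sup_text_matching_model_jsonl_data.py to train it. The model files are uploaded to the HF model hub. Recommended for multilingual semantic matching tasks.
- shibing624/text2vec-bge-large-chinese is trained with the CoSENT method, starting from BAAI/bge-large-zh-noinstruct, on the manually curated Chinese STS dataset shibing624/nli-zh-all/text2vec-base-chinese-paraphrase-dataset. It improves over the original model on the Chinese test sets, with a clear gain in discriminating short texts. Run examples/training_sup_text_matching_model_jsonl_data.py to train it. The model files are uploaded to the HF model hub. Recommended for Chinese s2s (sentence vs sentence) semantic matching tasks.
- w2v-light-tencent-chinese is a Word2Vec model built from the Tencent word vectors. It loads on CPU and suits Chinese literal (surface-form) matching tasks and cold-start situations where data is scarce.
- Base models are selected with --model_name, for example --model_name hfl/chinese-macbert-base, or a RoBERTa model: --model_name uer/roberta-medium-wwm-chinese-cluecorpussmall
- EncoderType.FIRST_LAST_AVG and EncoderType.MEAN: the two differ very little in prediction quality.
- examples/data: run tests/model_spearman.py to reproduce the evaluation results.
- Model training experiment report: Experiment Report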
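The reproduction script is named tests/model_spearman.py, which suggests that the scores in the tables above are Spearman rank correlations between predicted similarities and gold labels. That reading, and the 0 to 100 scaling, are assumptions. A minimal, dependency-free sketch of the metric follows; the function names are illustrative and are not part of text2vec.

```python
def rank(values):
    """Return 1-based average ranks (tied values share the mean rank)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(pred_scores, gold_labels):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(rank(pred_scores), rank(gold_labels))


gold = [2, 3, 5, 0]              # toy human similarity labels
pred = [0.41, 0.55, 0.93, 0.10]  # toy predicted cosine scores
print(round(spearman(pred, gold), 4))  # 1.0, the orderings agree exactly
```

Because only the ordering matters, a model can score well here even if its raw cosine values are not calibrated to the label scale.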

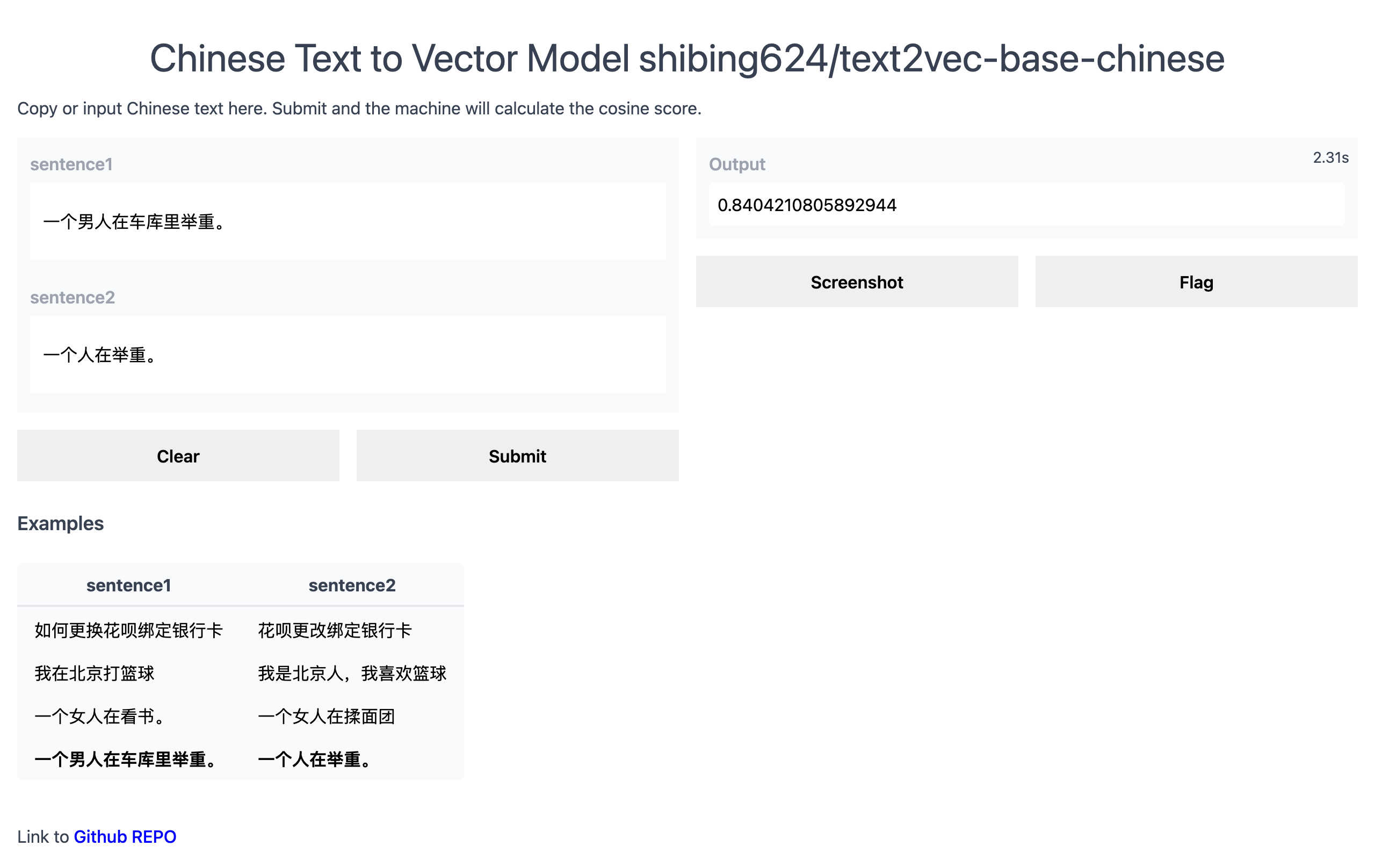

Official Demo: https://www.mulanai.com/product/short_text_sim/

HuggingFace Demo: https://huggingface.co/spaces/shibing624/text2vec

run example: examples/gradio_demo.py to see the demo:

    python examples/gradio_demo.py

Install:

    pip install torch # conda install pytorch
    pip install -U text2vec

or

    pip install torch # conda install pytorch
    pip install -r requirements.txt

    git clone https://github.com/shibing624/text2vec.git
    cd text2vec
    pip install --no-deps .

Compute text vectors with a pretrained model:

    >>> from text2vec import SentenceModel
    >>> m = SentenceModel()
    >>> m.encode("如何更换花呗绑定银行卡")
    Embedding shape: (768,)

example: examples/computing_embeddings_demo.py

    import sys

    sys.path.append('..')
    from text2vec import SentenceModel
    from text2vec import Word2Vec


    def compute_emb(model):
        # Embed a list of sentences
        sentences = [
            '卡',
            '银行卡',
            '如何更换花呗绑定银行卡',
            '花呗更改绑定银行卡',
            'This framework generates embeddings for each input sentence',
            'Sentences are passed as a list of string.',
            'The quick brown fox jumps over the lazy dog.'
        ]
        sentence_embeddings = model.encode(sentences)
        print(type(sentence_embeddings), sentence_embeddings.shape)

        # The result is a list of sentence embeddings as numpy arrays
        for sentence, embedding in zip(sentences, sentence_embeddings):
            print("Sentence:", sentence)
            print("Embedding shape:", embedding.shape)
            print("Embedding head:", embedding[:10])
            print()


    if __name__ == "__main__":
        # Chinese sentence embedding model (CoSENT), recommended for Chinese
        # semantic matching; supports further fine-tuning
        t2v_model = SentenceModel("shibing624/text2vec-base-chinese")
        compute_emb(t2v_model)

        # Multilingual sentence embedding model (CoSENT), recommended for
        # multilingual (including Chinese and English) semantic matching;
        # supports further fine-tuning
        sbert_model = SentenceModel("shibing624/text2vec-base-multilingual")
        compute_emb(sbert_model)

        # Chinese word vector model (word2vec), suited to Chinese literal
        # matching tasks and cold starts
        w2v_model = Word2Vec("w2v-light-tencent-chinese")
        compute_emb(w2v_model)

output:

    <class 'numpy.ndarray'> (7, 768)
    Sentence: 卡
    Embedding shape: (768,)

    Sentence: 银行卡
    Embedding shape: (768,)
    ...

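The embeddings are a numpy.ndarray of shape (sentences_size, model_embedding_size). Any one of the three models can be used; the first is recommended.

- shibing624/text2vec-base-chinese was trained with the CoSENT method on the Chinese STS-B dataset and is published on the HuggingFace model hub as shibing624/text2vec-base-chinese. It is the default model of text2vec.SentenceModel. It can be called as in the example above, or through the transformers library as shown below. The model is downloaded automatically to the local path ~/.cache/huggingface/transformers
- w2v-light-tencent-chinese is a Word2Vec model loaded with gensim. It uses the Tencent word vectors Tencent_AILab_ChineseEmbedding.tar.gz to compute a vector for each word, and a sentence vector is the average of its word vectors. The model is downloaded automatically to the local path ~/.text2vec/datasets/light_Tencent_AILab_ChineseEmbedding.bin
- text2vec supports multi-GPU inference (computing text vectors): examples/computing_embeddings_multi_gpu_demo.py

Without text2vec, you can use the model like this: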

First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.
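To make the pooling step concrete, here is a minimal pure-Python sketch of attention-masked mean pooling on toy numbers. The values and the helper name are made up for illustration; real code operates on torch tensors, as in the example that follows.

```python
def masked_mean_pool(token_embeddings, attention_mask):
    """Average token vectors, skipping padded positions (mask == 0)."""
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    for vec, keep in zip(token_embeddings, attention_mask):
        if keep:
            for d in range(dim):
                summed[d] += vec[d]
    # Guard against an all-padding sequence (same idea as clamping the
    # denominator to a small positive minimum)
    count = max(sum(attention_mask), 1e-9)
    return [s / count for s in summed]


# Two real tokens followed by one padding token
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]
print(masked_mean_pool(tokens, mask))  # [2.0, 3.0]
```

The padding vector is ignored, so the sentence vector does not depend on how much padding the batch added.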

example: examples/use_origin_transformers_demo.py

    import os
    import torch
    from transformers import AutoTokenizer, AutoModel

    os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"


    # Mean Pooling - Take attention mask into account for correct averaging
    def mean_pooling(model_output, attention_mask):
        token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
        input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
        return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


    # Load model from HuggingFace Hub
    tokenizer = AutoTokenizer.from_pretrained('shibing624/text2vec-base-chinese')
    model = AutoModel.from_pretrained('shibing624/text2vec-base-chinese')
    sentences = ['如何更换花呗绑定银行卡', '花呗更改绑定银行卡']
    # Tokenize sentences
    encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

    # Compute token embeddings
    with torch.no_grad():
        model_output = model(**encoded_input)
    # Perform pooling. In this case, mean pooling.
    sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])
    print("Sentence embeddings:")
    print(sentence_embeddings)

sentence-transformers is a popular library to compute dense vector representations for sentences.

Install sentence-transformers:

    pip install -U sentence-transformers

Then load model and predict:

    from sentence_transformers import SentenceTransformer

    m = SentenceTransformer("shibing624/text2vec-base-chinese")
    sentences = ['如何更换花呗绑定银行卡', '花呗更改绑定银行卡']

    sentence_embeddings = m.encode(sentences)
    print("Sentence embeddings:")
    print(sentence_embeddings)

Word2Vec word vectors

Two sets of Word2Vec word vectors are provided; choose either one:

- ~/.text2vec/datasets/light_Tencent_AILab_ChineseEmbedding.bin
- ~/.text2vec/datasets/Tencent_AILab_ChineseEmbedding.txt. Tencent word vectors homepage: https://ai.tencent.com/ailab/nlp/zh/index.html Download page: https://ai.tencent.com/ailab/nlp/en/download.html For more, see the wiki page introducing the Tencent word vectors.

Batch retrieval of text vectors is supported.

code: cli.py

    > text2vec -h
    usage: text2vec [-h] --input_file INPUT_FILE [--output_file OUTPUT_FILE] [--model_type MODEL_TYPE] [--model_name MODEL_NAME] [--encoder_type ENCODER_TYPE]
                    [--batch_size BATCH_SIZE] [--max_seq_length MAX_SEQ_LENGTH] [--chunk_size CHUNK_SIZE] [--device DEVICE]
                    [--show_progress_bar SHOW_PROGRESS_BAR] [--normalize_embeddings NORMALIZE_EMBEDDINGS]

    text2vec cli

    optional arguments:
      -h, --help            show this help message and exit
      --input_file INPUT_FILE
                            input file path, text file, required
      --output_file OUTPUT_FILE
                            output file path, output csv file, default text_embs.csv
      --model_type MODEL_TYPE
                            model type: sentencemodel, word2vec, default sentencemodel
      --model_name MODEL_NAME
                            model name or path, default shibing624/text2vec-base-chinese
      --encoder_type ENCODER_TYPE
                            encoder type: MEAN, CLS, POOLER, FIRST_LAST_AVG, LAST_AVG, default MEAN
      --batch_size BATCH_SIZE
                            batch size, default 32
      --max_seq_length MAX_SEQ_LENGTH
                            max sequence length, default 256
      --chunk_size CHUNK_SIZE
                            chunk size to save partial results, default 1000
      --device DEVICE       device: cpu, cuda, default None
      --show_progress_bar SHOW_PROGRESS_BAR
                            show progress bar, default True
      --normalize_embeddings NORMALIZE_EMBEDDINGS
                            normalize embeddings, default False
      --multi_gpu MULTI_GPU
                            multi gpu, default False

run:

    pip install text2vec -U
    text2vec --input_file input.txt --output_file out.csv --batch_size 128 --multi_gpu True

Input file (required): input.txt. Format: plain text with one sentence per line.

example: examples/semantic_text_similarity_demo.py

    import sys

    sys.path.append('..')
    from text2vec import Similarity

    # Two lists of sentences
    sentences1 = ['如何更换花呗绑定银行卡',
                  'The cat sits outside',
                  'A man is playing guitar',
                  'The new movie is awesome']

    sentences2 = ['花呗更改绑定银行卡',
                  'The dog plays in the garden',
                  'A woman watches TV',
                  'The new movie is so great']

    sim_model = Similarity()
    # Score every sentence in sentences1 against every sentence in sentences2
    for i in range(len(sentences1)):
        for j in range(len(sentences2)):
            score = sim_model.get_score(sentences1[i], sentences2[j])
            print("{} \t\t {} \t\t Score: {:.4f}".format(sentences1[i], sentences2[j], score))

output:

    如何更换花呗绑定银行卡    花呗更改绑定银行卡    Score: 0.9477
    如何更换花呗绑定银行卡    The dog plays in the garden    Score: -0.1748
    如何更换花呗绑定银行卡    A woman watches TV    Score: -0.0839
    如何更换花呗绑定银行卡    The new movie is so great    Score: -0.0044
    The cat sits outside    花呗更改绑定银行卡    Score: -0.0097
    The cat sits outside    The dog plays in the garden    Score: 0.1908
    The cat sits outside    A woman watches TV    Score: -0.0203
    The cat sits outside    The new movie is so great    Score: 0.0302
    A man is playing guitar    花呗更改绑定银行卡    Score: -0.0010
    A man is playing guitar    The dog plays in the garden    Score: 0.1062
    A man is playing guitar    A woman watches TV    Score: 0.0055
    A man is playing guitar    The new movie is so great    Score: 0.0097
    The new movie is awesome    花呗更改绑定银行卡    Score: 0.0302
    The new movie is awesome    The dog plays in the garden    Score: -0.0160
    The new movie is awesome    A woman watches TV    Score: 0.1321
    The new movie is awesome    The new movie is so great    Score: 0.9591

The score is the cosine similarity of the two sentence vectors. It ranges over [-1, 1]; the larger the value, the more similar the sentences.
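As a quick illustration of why the score stays within [-1, 1], here is a minimal pure-Python sketch of cosine similarity on toy two-dimensional vectors. The helper is illustrative only and is not the text2vec implementation.

```python
import math


def cosine_similarity(a, b):
    """Dot product divided by the product of the vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


print(cosine_similarity([1.0, 0.0], [2.0, 0.0]))   # 1.0  (same direction)
print(cosine_similarity([1.0, 0.0], [0.0, 3.0]))   # 0.0  (orthogonal)
print(cosine_similarity([1.0, 0.0], [-4.0, 0.0]))  # -1.0 (opposite direction)
```

Vector length cancels out, so only the direction of the embeddings affects the score.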

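Semantic search finds the texts in a candidate document set that are most similar to a query. It is commonly used for matching similar questions in QA scenarios and for similar-text retrieval.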
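The idea can be sketched as a brute-force search: score every corpus vector against the query and keep the best few. The helper below is a hypothetical stand-in written in pure Python; its return shape (dicts with corpus_id and score) mirrors what the text2vec example reads from its hits, but the implementation itself is an assumption, not the library's code.

```python
import math


def cos_sim(a, b):
    """Cosine similarity of two plain-list vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k_search(query_vec, corpus_vecs, top_k=2):
    """Score every corpus vector against the query and keep the best top_k."""
    hits = [{'corpus_id': i, 'score': cos_sim(query_vec, vec)}
            for i, vec in enumerate(corpus_vecs)]
    hits.sort(key=lambda hit: hit['score'], reverse=True)
    return hits[:top_k]


# Toy 2-d "embeddings" standing in for encoded corpus sentences
corpus_vecs = [[1.0, 0.0], [0.6, 0.8], [0.0, 1.0]]
hits = top_k_search([1.0, 0.1], corpus_vecs, top_k=2)
print([hit['corpus_id'] for hit in hits])  # [0, 1]
```

Brute force is linear in corpus size per query; for large corpora an approximate nearest-neighbour index is the usual replacement.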

example: examples/semantic_search_demo.py

    import sys

    sys.path.append('..')
    from text2vec import SentenceModel, cos_sim, semantic_search

    embedder = SentenceModel()

    # Corpus with example sentences
    corpus = [
        '花呗更改绑定银行卡',
        '我什么时候开通了花呗',
        'A man is eating food.',
        'A man is eating a piece of bread.',
        'The girl is carrying a baby.',
        'A man is riding a horse.',
        'A woman is playing violin.',
        'Two men pushed carts through the woods.',
        'A man is riding a white horse on an enclosed ground.',
        'A monkey is playing drums.',
        'A cheetah is running behind its prey.'
    ]
    corpus_embeddings = embedder.encode(corpus)

    # Query sentences:
    queries = [
        '如何更换花呗绑定银行卡',
        'A man is eating pasta.',
        'Someone in a gorilla costume is playing a set of drums.',
        'A cheetah chases prey on across a field.']

    for query in queries:
        query_embedding = embedder.encode(query)
        hits = semantic_search(query_embedding, corpus_embeddings, top_k=5)
        print("\n\n======================\n\n")
        print("Query:", query)
        print("\nTop 5 most similar sentences in corpus:")
        hits = hits[0]  # Get the hits for the first query
        for hit in hits:
            print(corpus[hit['corpus_id']], "(Score: {:.4f})".format(hit['score']))

output:

    Query: 如何更换花呗绑定银行卡

    Top 5 most similar sentences in corpus:
    花呗更改绑定银行卡 (Score: 0.9477)
    我什么时候开通了花呗 (Score: 0.3635)
    A man is eating food. (Score: 0.0321)
    A man is riding a horse. (Score: 0.0228)
    Two men pushed carts through the woods. (Score: 0.0090)

    ======================

    Query: A man is eating pasta.

    Top 5 most similar sentences in corpus:
    A man is eating food. (Score: 0.6734)
    A man is eating a piece of bread. (Score: 0.4269)
    A man is riding a horse. (Score: 0.2086)
    A man is riding a white horse on an enclosed ground. (Score: 0.1020)
    A cheetah is running behind its prey. (Score: 0.0566)

    ======================

    Query: Someone in a gorilla costume is playing a set of drums.

    Top 5 most similar sentences in corpus:
    A monkey is playing drums. (Score: 0.8167)
    A cheetah is running behind its prey. (Score: 0.2720)
    A woman is playing violin. (Score: 0.1721)
    A man is riding a horse. (Score: 0.1291)
    A man is riding a white horse on an enclosed ground. (Score: 0.1213)

    ======================

    Query: A cheetah chases prey on across a field.

    Top 5 most similar sentences in corpus:
    A cheetah is running behind its prey. (Score: 0.9147)
    A monkey is playing drums. (Score: 0.2655)
    A man is riding a horse. (Score: 0.1933)
    A man is riding a white horse on an enclosed ground. (Score: 0.1733)
    A man is eating food. (Score: 0.0329)

similarities library [recommended]

文本相似度計算和文本匹配搜索任務,推薦使用similarities庫,兼容本項目release的Word2vec、SBERT、Cosent類語義匹配模型,還支持億級圖文搜索,支持文本語義去重,圖片去重等功能。

安裝: pip install -U similarities

句子相似度計算:

from similarities import BertSimilarity

m = BertSimilarity ()

r = m . similarity ( '如何更换花呗绑定银行卡' , '花呗更改绑定银行卡' )

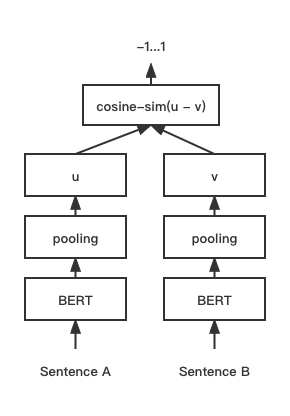

print ( f"similarity score: { float ( r ) } " ) # similarity score: 0.855146050453186 CoSENT(Cosine Sentence)文本匹配模型,在Sentence-BERT上改進了CosineRankLoss的句向量方案
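For intuition about the ranking loss, here is a minimal pure-Python sketch of the commonly cited CoSENT formulation: for every two sentence pairs where one has a higher label than the other, the loss penalizes the lower-labelled pair having the higher cosine score. The scale value of 20 and the function name are assumptions for illustration; text2vec's own implementation may differ in details.

```python
import math


def cosent_loss(cos_scores, labels, scale=20.0):
    """log(1 + sum(exp(scale * (cos_low - cos_high)))) over all combinations
    where the 'high' sample has a larger label than the 'low' sample."""
    total = 0.0
    for cos_high, label_high in zip(cos_scores, labels):
        for cos_low, label_low in zip(cos_scores, labels):
            if label_high > label_low:
                total += math.exp(scale * (cos_low - cos_high))
    return math.log1p(total)


labels = [5, 3, 0]  # toy similarity labels for three sentence pairs
well_ranked = cosent_loss([0.9, 0.5, 0.1], labels)   # cosines follow the labels
badly_ranked = cosent_loss([0.1, 0.5, 0.9], labels)  # cosines reverse the labels
print(well_ranked < badly_ranked)  # True
```

Only the relative order of the cosine scores is constrained, which is consistent with the changelog's remark that the CoSENT loss is sensitive to ranking.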

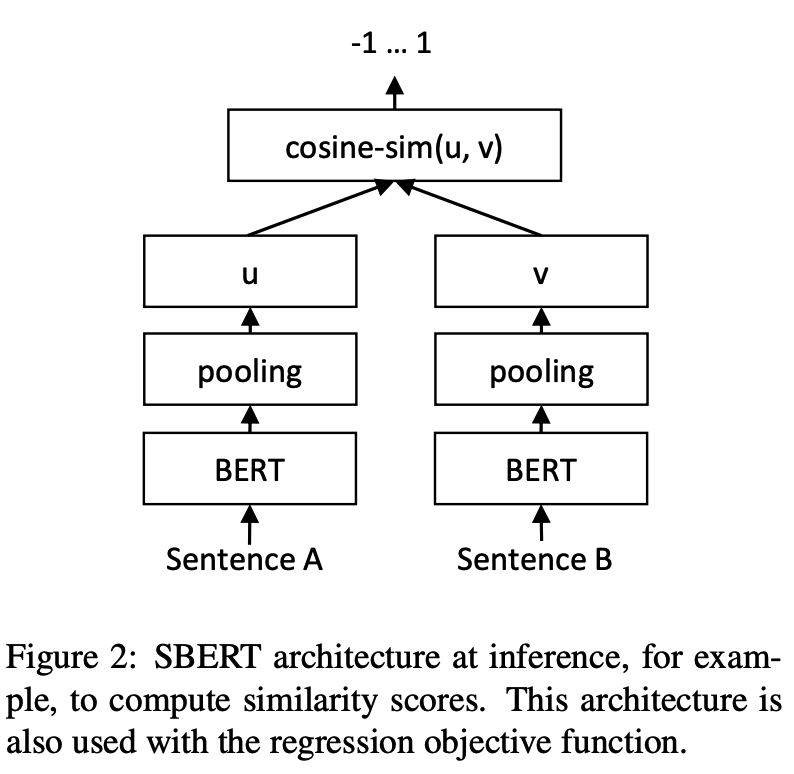

Network structure:

Training:

Inference:

Training and predicting with the CoSENT model:

CoSENT model example: examples/training_sup_text_matching_model.py

    cd examples
    python training_sup_text_matching_model.py --model_arch cosent --do_train --do_predict --num_epochs 10 --model_name hfl/chinese-macbert-base --output_dir ./outputs/STS-B-cosent

The CoSENT model supports these Chinese matching datasets: 'ATEC', 'STS-B', 'BQ', 'LCQMC', 'PAWSX'. For details, see HuggingFace datasets https://huggingface.co/datasets/shibing624/nli_zh

    python training_sup_text_matching_model.py --task_name ATEC --model_arch cosent --do_train --do_predict --num_epochs 10 --model_name hfl/chinese-macbert-base --output_dir ./outputs/ATEC-cosent

example: examples/training_sup_text_matching_model_mydata.py

Single-GPU training:

    CUDA_VISIBLE_DEVICES=0 python training_sup_text_matching_model_mydata.py --do_train --do_predict

Multi-GPU training:

    CUDA_VISIBLE_DEVICES=0,1 torchrun --nproc_per_node 2 training_sup_text_matching_model_mydata.py --do_train --do_predict --output_dir outputs/STS-B-text2vec-macbert-v1 --batch_size 64 --bf16 --data_parallel

For the training set format, see examples/data/STS-B/STS-B.valid.data

    sentence1    sentence2    label
    一个女孩在给她的头发做发型。    一个女孩在梳头。    2
    一群男人在海滩上踢足球。    一群男孩在海滩上踢足球。    3
    一个女人在测量另一个女人的脚踝。    女人测量另一个女人的脚踝。    5

The label can be a 0/1 label, where 0 means the two sentences are not similar and 1 means they are similar. It can also be a score from 0 to 5, where a higher score means the two sentences are more similar. The model supports both.
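A minimal sketch of reading data in this three-column layout, assuming the fields are tab-separated and that any header row should be skipped. Both are assumptions about the file, and parse_pairs is a hypothetical helper rather than a text2vec function.

```python
def parse_pairs(lines):
    """Parse 'sentence1<TAB>sentence2<TAB>label' lines into tuples."""
    pairs = []
    for line in lines:
        parts = line.rstrip('\n').split('\t')
        if len(parts) != 3:
            continue  # skip blank or malformed lines
        sentence1, sentence2, label = parts
        try:
            pairs.append((sentence1, sentence2, int(label)))
        except ValueError:
            continue  # skip rows whose label is not an integer, e.g. a header
    return pairs


sample = [
    '一个女孩在给她的头发做发型。\t一个女孩在梳头。\t2\n',
    '一群男人在海滩上踢足球。\t一群男孩在海滩上踢足球。\t3\n',
]
for s1, s2, label in parse_pairs(sample):
    print(label, s1, s2)
```

The same parser handles both labelling schemes described above, since 0/1 labels and 0 to 5 scores are both integers.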

CoSENT模型example: examples/training_sup_text_matching_model_en.py

cd examples

python training_sup_text_matching_model_en.py --model_arch cosent --do_train --do_predict --num_epochs 10 --model_name bert-base-uncased --output_dir ./outputs/STS-B-en-cosentCoSENT模型,在STS-B測試集評估效果example: examples/training_unsup_text_matching_model_en.py

cd examples

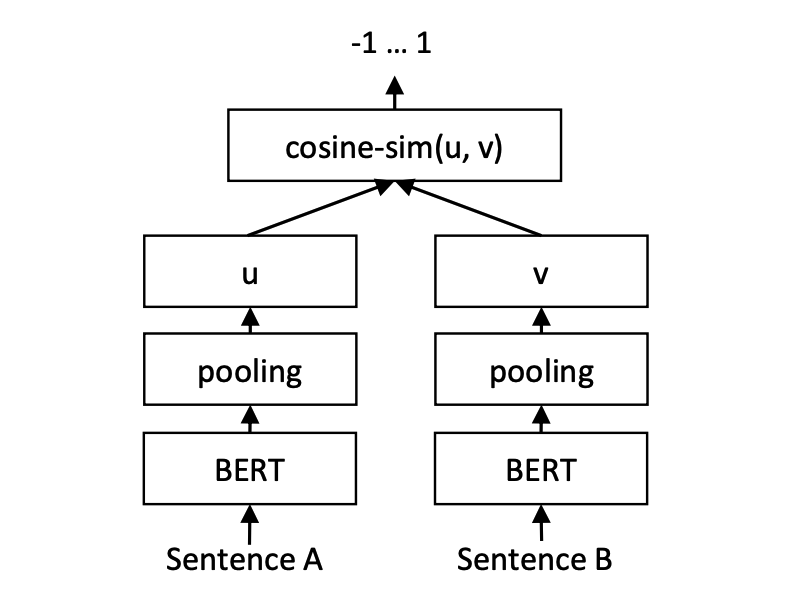

python training_unsup_text_matching_model_en.py --model_arch cosent --do_train --do_predict --num_epochs 10 --model_name bert-base-uncased --output_dir ./outputs/STS-B-en-unsup-cosentSentence-BERT文本匹配模型,表徵式句向量表示方案

Network structure:

Training:

Inference:

SBERT模型example: examples/training_sup_text_matching_model.py

cd examples

python training_sup_text_matching_model.py --model_arch sentencebert --do_train --do_predict --num_epochs 10 --model_name hfl/chinese-macbert-base --output_dir ./outputs/STS-B-sbertSBERT模型example: examples/training_sup_text_matching_model_en.py

cd examples

python training_sup_text_matching_model_en.py --model_arch sentencebert --do_train --do_predict --num_epochs 10 --model_name bert-base-uncased --output_dir ./outputs/STS-B-en-sbertSBERT模型,在STS-B測試集評估效果example: examples/training_unsup_text_matching_model_en.py

cd examples

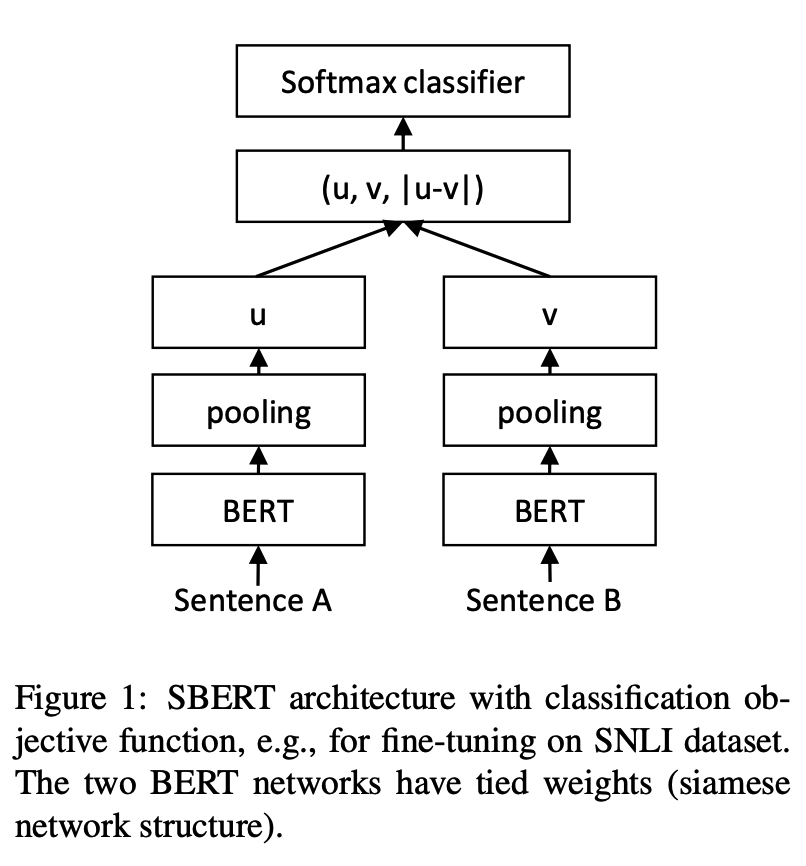

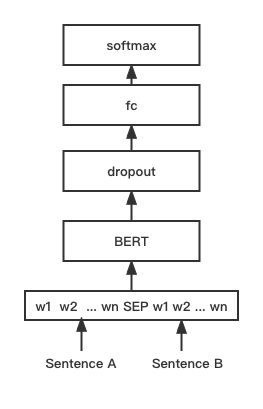

python training_unsup_text_matching_model_en.py --model_arch sentencebert --do_train --do_predict --num_epochs 10 --model_name bert-base-uncased --output_dir ./outputs/STS-B-en-unsup-sbertBERT文本匹配模型,原生BERT匹配網絡結構,交互式句向量匹配模型

Network structure:

Training and inference:

The training script is the same as above: examples/training_sup_text_matching_model.py.

BGE model example: examples/training_bge_model_mydata.py

    cd examples
    python training_bge_model_mydata.py --model_arch bge --do_train --do_predict --num_epochs 4 --output_dir ./outputs/STS-B-bge-v1 --batch_size 4 --save_model_every_epoch --bf16

BGE model fine-tuning uses contrastive learning. The input data format is a triplet (query, positive, negative).

    cd examples/data
    python build_zh_bge_dataset.py
    python hard_negatives_mine.py

build_zh_bge_dataset.py generates a triplet training set from the Chinese STS-B data, in the following format:

    {"query": "一个男人正在往锅里倒油。", "pos": ["一个男人正在往锅里倒油。"], "neg": ["亲俄军队进入克里米亚乌克兰海军基地", "配有木制家具的优雅餐厅。", "马雅瓦蒂要求总统统治查谟和克什米尔", "非典还夺去了多伦多地区44人的生命,其中包括两名护士和一名医生。", "在一次采访中,身为犯罪学家的希利说,这里和全国各地的许多议员都对死刑抱有戒心。", "豚鼠吃胡萝卜。", "狗嘴里叼着一根棍子在水中游泳。", "拉里·佩奇说Android很重要,不是关键", "法国、比利时、德国、瑞典、意大利和英国为印度计划向缅甸出售的先进轻型直升机提供零部件和技术。", "巴林赛马会在动乱中进行"]}

hard_negatives_mine.py uses faiss similarity matching to mine hard negatives.

Because models trained with text2vec can be loaded by the sentence-transformers library, its model distillation method (distillation) is reused here.
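To illustrate the triplet record format used for BGE fine-tuning (keys query, pos, and neg), here is a minimal sketch that writes one such JSON line and expands it into (query, positive, negative) tuples. Both helpers are hypothetical, and whether the training script expands records this way is an assumption.

```python
import json


def make_triplet_line(query, positives, negatives):
    """Serialize one training example as a JSON line with query/pos/neg keys."""
    record = {'query': query, 'pos': list(positives), 'neg': list(negatives)}
    return json.dumps(record, ensure_ascii=False)


def expand_triplets(json_line):
    """Yield one (query, positive, negative) tuple per pos/neg combination."""
    record = json.loads(json_line)
    for pos in record['pos']:
        for neg in record['neg']:
            yield record['query'], pos, neg


line = make_triplet_line(
    '一个男人正在往锅里倒油。',
    ['一个男人正在往锅里倒油。'],
    ['豚鼠吃胡萝卜。', '巴林赛马会在动乱中进行'],
)
triplets = list(expand_triplets(line))
print(len(triplets))  # 2
```

Passing ensure_ascii=False keeps the Chinese text readable in the output file instead of escaping it.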

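Two ways to deploy the model and set up a service are provided: 1) a gRPC service built on Jina [recommended]; 2) a native HTTP service built on FastAPI.

The Jina option builds a high-performance service in client/server mode. It supports cloud-native Docker deployment, gRPC/HTTP/WebSocket, simultaneous prediction with multiple models, and multi-GPU processing.

Install: pip install jina

Start the service:

example: examples/jina_server_demo.py

    from jina import Flow

    port = 50001
    f = Flow(port=port).add(
        uses='jinahub://Text2vecEncoder',
        uses_with={'model_name': 'shibing624/text2vec-base-chinese'}
    )

    with f:
        # backend server forever
        f.block()

The model's prediction method (executor) has been uploaded to JinaHub, which includes Docker and k8s deployment instructions.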

    from jina import Client
    from docarray import Document, DocumentArray

    port = 50001

    c = Client(port=port)

    data = ['如何更换花呗绑定银行卡',
            '花呗更改绑定银行卡']
    print("data:", data)
    print('data embs:')
    r = c.post('/', inputs=DocumentArray([Document(text='如何更换花呗绑定银行卡'), Document(text='花呗更改绑定银行卡')]))
    print(r.embeddings)

For batch calls, see example: examples/jina_client_demo.py

Install: pip install fastapi uvicorn

Start the service:

example: examples/fastapi_server_demo.py

    cd examples
    python fastapi_server_demo.py

    curl -X 'GET' \
      'http://0.0.0.0:8001/emb?q=hello' \
      -H 'accept: application/json'

| Dataset | Description | Download Link |
|---|---|---|
| shibing624/nli-zh-all | Chinese semantic matching collection: 8.2 million high-quality examples drawn from text inference, similarity, summarization, question answering, and instruction tuning tasks, converted to a matching format | https://huggingface.co/datasets/shibing624/nli-zh-all |
| shibing624/snli-zh | Chinese SNLI and MultiNLI datasets, translated from the English SNLI and MultiNLI | https://huggingface.co/datasets/shibing624/snli-zh |
| shibing624/nli_zh | Chinese semantic matching dataset combining five tasks: ATEC, BQ, LCQMC, PAWSX, and STS-B | https://huggingface.co/datasets/shibing624/nli_zh or Baidu Netdisk (extraction code: qkt6) or github |
| shibing624/sts-sohu2021 | Chinese semantic matching dataset from the 2021 Sohu Campus Text Matching Algorithm Competition | https://huggingface.co/datasets/shibing624/sts-sohu2021 |
| ATEC | Chinese ATEC dataset, the Ant Financial Q-Q pair dataset | ATEC |
| BQ | Chinese BQ (Bank Question) dataset, a banking Q-Q pair dataset | BQ |
| LCQMC | Chinese LCQMC (large-scale Chinese question matching corpus) dataset, a Q-Q pair dataset | LCQMC |
| PAWSX | Chinese PAWS (Paraphrase Adversaries from Word Scrambling) dataset, a Q-Q pair dataset | PAWSX |
| STS-B | Chinese STS-B dataset, a Chinese natural language inference dataset translated from the English STS-B | STS-B |

Commonly used English matching datasets:

Dataset usage example:

    pip install datasets

    from datasets import load_dataset

    dataset = load_dataset("shibing624/nli_zh", "STS-B")  # ATEC or BQ or LCQMC or PAWSX or STS-B
    print(dataset)
    print(dataset['test'][0])

output:

    DatasetDict({
        train: Dataset({
            features: ['sentence1', 'sentence2', 'label'],
            num_rows: 5231
        })
        validation: Dataset({
            features: ['sentence1', 'sentence2', 'label'],
            num_rows: 1458
        })
        test: Dataset({
            features: ['sentence1', 'sentence2', 'label'],
            num_rows: 1361
        })
    })
    {'sentence1': '一个女孩在给她的头发做发型。', 'sentence2': '一个女孩在梳头。', 'label': 2}

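If you use text2vec in your research, please cite it in the following format:

APA:

    Xu, M. Text2vec: Text to vector toolkit (Version 1.1.2) [Computer software]. https://github.com/shibing624/text2vec

BibTeX:

    @misc{Text2vec,
      author = {Ming Xu},
      title = {Text2vec: Text to vector toolkit},
      year = {2023},
      publisher = {GitHub},
      journal = {GitHub repository},
      howpublished = {\url{https://github.com/shibing624/text2vec}},
    }

The license is The Apache License 2.0, which permits free commercial use. Please include a link to text2vec and the license in your product description.

The project code is still rough. If you improve the code, you are welcome to contribute it back to this project. Before submitting, note the following two points:

- Add corresponding unit tests in tests.
- Run all unit tests with python -m pytest -v and make sure they all pass.

After that, you can submit a PR.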