mlm scoring

1.0.0

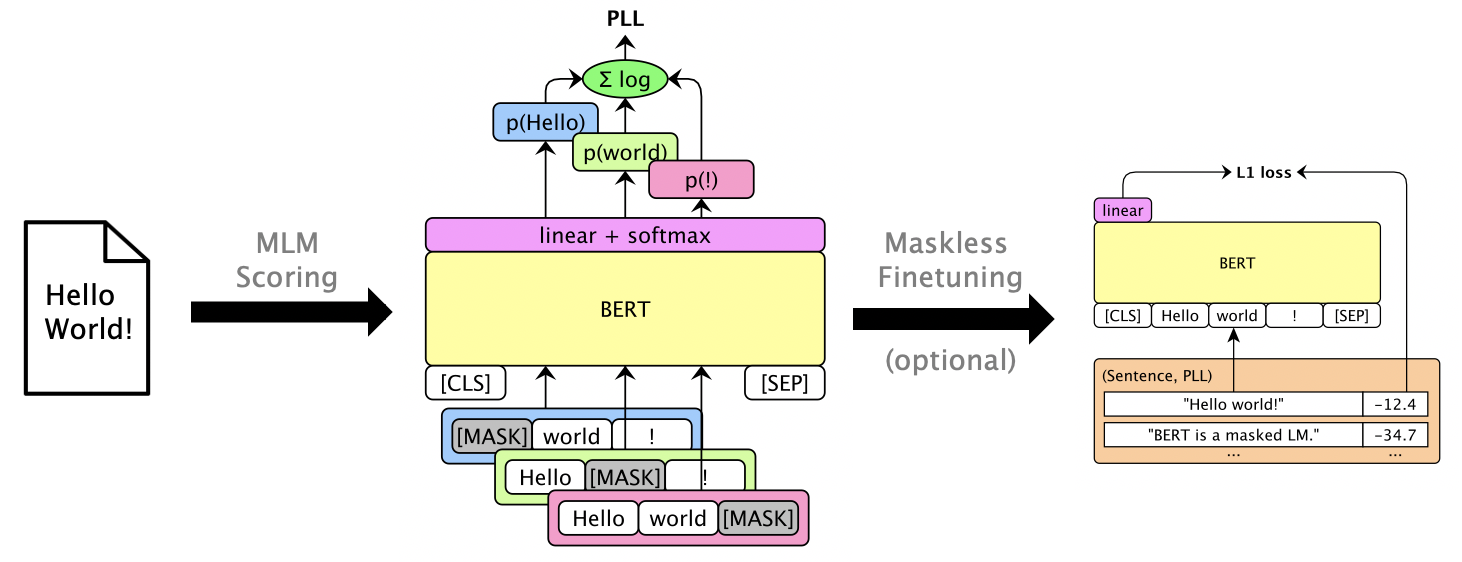

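This package uses masked LMs such as BERT, RoBERTa, and XLM to score sentences and rescore n-best lists via pseudo-log-likelihood (PLL) scores, which are computed by masking individual words. We also support autoregressive LMs such as GPT-2.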
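As a reading aid, the pseudo-log-likelihood of a sentence can be written down compactly. The notation here is an assumption made for illustration: $W = (w_1, \dots, w_{|W|})$ is the tokenized sentence, $W_{\setminus t}$ is the same sentence with position $t$ replaced by the mask token, and $\Theta$ stands for the model parameters.

```latex
\mathrm{PLL}(W) = \sum_{t=1}^{|W|} \log P_{\mathrm{MLM}}\left(w_t \mid W_{\setminus t};\, \Theta\right)
```

Each term needs its own forward pass with one position masked, so scoring a sentence of $|W|$ tokens takes $|W|$ masked copies of it.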

Paper: Julian Salazar, Davis Liang, Toan, "Masked Language Model Scoring", ACL 2020.

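Python 3.6+ is required. Clone this repository and install:

    pip install -e .
    pip install torch mxnet-cu102mkl  # Replace w/ your CUDA version; mxnet-mkl if CPU only.

Some models are provided via GluonNLP and others via Hugging Face Transformers, so for now both MXNet and PyTorch are required. You can now import the library directly: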

    from mlm.scorers import MLMScorer, MLMScorerPT, LMScorer
    from mlm.models import get_pretrained
    import mxnet as mx

    ctxs = [mx.cpu()]  # or, e.g., [mx.gpu(0), mx.gpu(1)]

    # MXNet MLMs (use names from mlm.models.SUPPORTED_MLMS)
    model, vocab, tokenizer = get_pretrained(ctxs, 'bert-base-en-cased')
    scorer = MLMScorer(model, vocab, tokenizer, ctxs)
    print(scorer.score_sentences(["Hello world!"]))
    # >> [-12.410664200782776]
    print(scorer.score_sentences(["Hello world!"], per_token=True))
    # >> [[None, -6.126736640930176, -5.501412391662598, -0.7825151681900024, None]]

    # EXPERIMENTAL: PyTorch MLMs (use names from https://huggingface.co/transformers/pretrained_models.html)
    model, vocab, tokenizer = get_pretrained(ctxs, 'bert-base-cased')
    scorer = MLMScorerPT(model, vocab, tokenizer, ctxs)
    print(scorer.score_sentences(["Hello world!"]))
    # >> [-12.411025047302246]
    print(scorer.score_sentences(["Hello world!"], per_token=True))
    # >> [[None, -6.126738548278809, -5.501765727996826, -0.782496988773346, None]]

    # MXNet LMs (use names from mlm.models.SUPPORTED_LMS)
    model, vocab, tokenizer = get_pretrained(ctxs, 'gpt2-117m-en-cased')
    scorer = LMScorer(model, vocab, tokenizer, ctxs)
    print(scorer.score_sentences(["Hello world!"]))
    # >> [-15.995375633239746]
    print(scorer.score_sentences(["Hello world!"], per_token=True))
    # >> [[-8.293947219848633, -6.387561798095703, -1.3138668537139893]]

(The MXNet and PyTorch interfaces will be unified soon!)
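The sentence-level and per-token outputs above are consistent with each other: summing the per-token values reproduces the sentence score. The short check below uses only the numbers printed in the MXNet BERT example and does not need the library. That the `None` entries mark positions which receive no score (presumably special tokens added by the tokenizer) is an assumption, and `sentence_score` is a helper written for this illustration, not part of `mlm`.

```python
# Per-token scores copied from the MXNet BERT example output above.
per_token = [None, -6.126736640930176, -5.501412391662598, -0.7825151681900024, None]

# Sentence-level score printed by the same example.
reported_total = -12.410664200782776


def sentence_score(token_scores):
    """Sum per-token log scores, skipping positions that have no score (None)."""
    return sum(s for s in token_scores if s is not None)


total = sentence_score(per_token)
print(total, reported_total)
```

The same relationship holds for the GPT-2 output up to floating-point rounding.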

Run `mlm score --help` to see supported models, etc. See `examples/demo/format.json` for the file format. For inputs, "score" is optional. Outputs will add "score" fields containing PLL scores.
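To make the PLL computation concrete, here is a minimal sketch of the masking loop, assuming a model that returns a probability distribution for a masked position. It does not use the `mlm` package: `toy_predict` is a hypothetical stand-in that returns a uniform distribution over a four-word vocabulary, and `MASK` and `pseudo_log_likelihood` are names chosen for this illustration.

```python
import math
from typing import Callable, Dict, List

MASK = "[MASK]"  # placeholder mask symbol for this sketch


def pseudo_log_likelihood(
    tokens: List[str],
    predict: Callable[[List[str], int], Dict[str, float]],
) -> List[float]:
    """Return one log-probability per token, each computed with that token masked."""
    per_token = []
    for i, target in enumerate(tokens):
        # Mask exactly one position and ask the model for a distribution there.
        masked = tokens[:i] + [MASK] + tokens[i + 1:]
        probs = predict(masked, i)
        per_token.append(math.log(probs[target]))
    return per_token


def toy_predict(masked_tokens: List[str], position: int) -> Dict[str, float]:
    """Hypothetical model: uniform over a tiny vocabulary, ignoring context."""
    vocab = ["hello", "world", "!", "there"]
    return {word: 1.0 / len(vocab) for word in vocab}


scores = pseudo_log_likelihood(["hello", "world", "!"], toy_predict)
pll = sum(scores)  # the sentence-level PLL is the sum of the per-token terms
print(scores, pll)
```

With a real masked LM, `predict` would run the network on the masked sentence and read off the softmax at the masked position.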

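There are three score types, depending on the model; the `--no-mask` flag selects scoring without masking.

We score hypotheses for 3 utterances of LibriSpeech `dev-other` on GPU 0 using BERT base (uncased):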

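    mlm score \
        --mode hyp \
        --model bert-base-en-uncased \
        --max-utts 3 \
        --gpus 0 \
        examples/asr-librispeech-espnet/data/dev-other.am.json \
        > examples/demo/dev-other-3.lm.json

One can rescore n-best lists via log-linear interpolation. Run `mlm rescore --help` to see all options. The first input is a file with the original scores; the second is the scores produced by `mlm score`.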
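The idea behind log-linear interpolation can be sketched in a few lines. This is an illustration under stated assumptions, not the implementation behind `mlm rescore`: it assumes both scores are log-domain values and that the combined score is `original + weight * lm`. The hypotheses and numbers are made up for the example.

```python
from typing import List, Tuple

# Each hypothesis: (text, original_score, lm_score). Values are hypothetical.
Hypothesis = Tuple[str, float, float]


def rescore(hyps: List[Hypothesis], lm_weight: float) -> List[Tuple[str, float]]:
    """Combine scores log-linearly and return hypotheses sorted best-first."""
    combined = [(text, orig + lm_weight * lm) for text, orig, lm in hyps]
    return sorted(combined, key=lambda item: item[1], reverse=True)


nbest = [
    ("hypothesis a", -10.0, -30.0),  # better original score, worse LM score
    ("hypothesis b", -11.0, -20.0),  # worse original score, better LM score
]

best_without_lm = rescore(nbest, lm_weight=0.0)[0]
best_with_lm = rescore(nbest, lm_weight=0.5)[0]
print(best_without_lm, best_with_lm)
```

With a weight of 0 the original ranking is kept; with a weight of 0.5 the LM score is enough to promote the second hypothesis.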

We rescore the acoustic scores (from `dev-other.am.json`) using BERT's scores (from the previous section) under different LM weights:

    for weight in 0 0.5; do
        echo "lambda=${weight}"
        mlm rescore \
            --model bert-base-en-uncased \
            --weight ${weight} \
            examples/asr-librispeech-espnet/data/dev-other.am.json \
            examples/demo/dev-other-3.lm.json \
            > examples/demo/dev-other-3.lambda-${weight}.json
    done

The original WER is 12.2%, while the rescored WER is 8.5%.
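For readers unfamiliar with the metric, word error rate (WER) is the word-level edit distance between hypothesis and reference, divided by the number of reference words. The sketch below is a generic per-utterance implementation written for this illustration and is not the tool that produced the figures above. Corpus-level WER is usually computed from total errors over total reference words. The example sentences are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the ref prefix so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        cur = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cur.append(min(
                prev[j] + 1,                          # deletion
                cur[j - 1] + 1,                       # insertion
                prev[j - 1] + (ref_word != hyp_word)  # substitution or match
            ))
        prev = cur
    return prev[-1] / len(ref)


wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(wer)

# Relative WER reduction implied by the two figures reported above.
relative_reduction = (12.2 - 8.5) / 12.2
print(relative_reduction)
```

Going from 12.2% to 8.5% is an absolute reduction of 3.7 points, or roughly 30% relative.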

One can finetune masked LMs to give usable PLL scores without masking. See LibriSpeech maskless finetuning.

Run `pip install -e .[dev]` to install extra testing packages. Then:

    pytest --cov=src/mlm