Effortlessly run LLM backends, APIs, frontends, and services with one command.

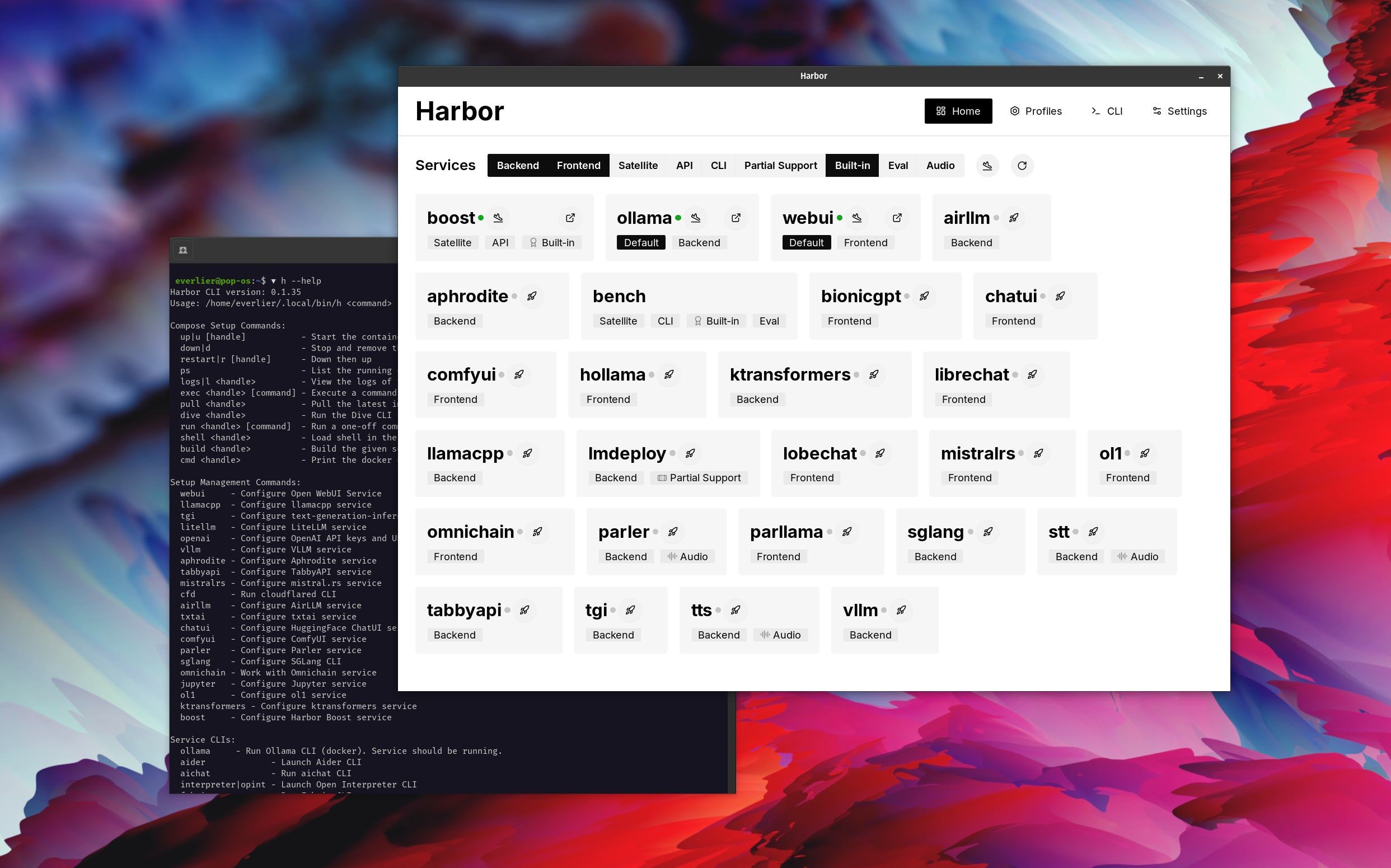

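Harbor is a containerized LLM toolkit that lets you run LLMs and many additional services. It consists of a CLI and a companion App for managing and running AI services with ease.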

Open WebUI ⦁︎ ComfyUI ⦁︎ LibreChat ⦁︎ HuggingFace ChatUI ⦁︎ Lobe Chat ⦁︎ Hollama

Ollama ⦁︎ llama.cpp ⦁︎ vLLM ⦁︎ TabbyAPI ⦁︎ Aphrodite Engine ⦁︎ mistral.rs ⦁︎ openedai-speech ⦁︎ faster-whisper-server ⦁︎ Parler ⦁︎ text-generation-inference ⦁︎ LMDeploy ⦁︎ AirLLM ⦁︎ SGLang ⦁︎ KTransformers ⦁︎ Nexa SDK

Harbor Bench ⦁︎ Harbor Boost ⦁︎ SearXNG ⦁︎ Perplexica ⦁︎ Dify ⦁︎ Plandex ⦁︎ LiteLLM ⦁︎ LangFuse ⦁︎ Open Interpreter ⦁︎ cloudflared ⦁︎ cmdh ⦁︎ fabric ⦁︎ txtai RAG ⦁︎ TextGrad ⦁︎ omnichain ⦁︎ lm-evaluation-harness ⦁︎ JupyterLab ⦁︎ ol1 ⦁︎ OpenHands ⦁︎ LitLytics ⦁︎ Repopack ⦁︎ n8n ⦁︎ Bolt.new ⦁︎ Open WebUI Pipelines ⦁︎ Qdrant ⦁︎ K6

See the services documentation for a brief overview of each.

# Run Harbor with default services:

# Open WebUI and Ollama

harbor up

# Run Harbor with additional services

# Running SearXNG automatically enables Web RAG in Open WebUI

harbor up searxng

# Run additional/alternative LLM Inference backends

# Open WebUI is automatically connected to them.

harbor up llamacpp tgi litellm vllm tabbyapi aphrodite sglang ktransformers

# Run different Frontends

harbor up librechat chatui bionicgpt hollama

# Get a free quality boost with

# built-in optimizing proxy

harbor up boost

# Use FLUX in Open WebUI in one command

harbor up comfyui

# Use custom models for supported backends

harbor llamacpp model https://huggingface.co/user/repo/model.gguf

# Shortcut to HF Hub to find the models

harbor hf find gguf gemma-2

# Use HFDownloader and official HF CLI to download models

harbor hf dl -m google/gemma-2-2b-it -c 10 -s ./hf

harbor hf download google/gemma-2-2b-it

# Where possible, cache is shared between the services

harbor tgi model google/gemma-2-2b-it

harbor vllm model google/gemma-2-2b-it

harbor aphrodite model google/gemma-2-2b-it

harbor tabbyapi model google/gemma-2-2b-it-exl2

harbor mistralrs model google/gemma-2-2b-it

harbor opint model google/gemma-2-2b-it

harbor sglang model google/gemma-2-2b-it

# Convenience tools for docker setup

harbor logs llamacpp

harbor exec llamacpp ./scripts/llama-bench --help

harbor shell vllm

# Tell your shell exactly what you think about it

harbor opint

harbor aider

harbor aichat

harbor cmdh

# Use fabric to LLM-ify your linux pipes

cat ./file.md | harbor fabric --pattern extract_extraordinary_claims | grep " LK99 "

# Access service CLIs without installing them

harbor hf scan-cache

harbor ollama list

# Open services from the CLI

harbor open webui

harbor open llamacpp

# Print yourself a QR to quickly open the

# service on your phone

harbor qr

# Feeling adventurous? Expose your harbor

# to the internet

harbor tunnel

# Config management

harbor config list

harbor config set webui.host.port 8080

# Create and manage config profiles

harbor profile save l370b

harbor profile use default

# Lookup recently used harbor commands

harbor history

# Eject from Harbor into a standalone Docker Compose setup

# Will export related services and variables into a standalone file.

harbor eject searxng llamacpp > docker-compose.harbor.yml

# Run a built-in LLM benchmark with

# your own tasks

harbor bench run

# Gimmick/Fun Area

# Argument scrambling, below commands are all the same as above

# Harbor doesn't care if it's "vllm model" or "model vllm", it'll

# figure it out.

harbor model vllm

harbor vllm model

harbor config get webui.name

harbor get config webui_name

harbor tabbyapi shell

harbor shell tabbyapi

# 50% gimmick, 50% useful

# Ask harbor about itself

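harbor how to ping ollama container from the webui?

In the demo, Harbor App is used to launch a default stack with the Ollama and Open WebUI services. Later, SearXNG is started as well, and the WebUI connects to it for Web RAG. After that, Harbor Boost is started and automatically connected to the WebUI to induce more creative outputs. As a final step, the Harbor config is adjusted in the App for the klmbr module in Harbor Boost, which makes the output unparseable for the LLM (yet still understandable for humans).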
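The argument scrambling shown in the command list above (`harbor vllm model` versus `harbor model vllm`) can be pictured with a small shell sketch. This is only an illustration of the idea, not Harbor's actual implementation; `KNOWN_SERVICES`, `is_service`, and `normalize_args` are hypothetical names introduced here.

```shell
#!/usr/bin/env bash
# Illustrative sketch only: NOT Harbor's real code.
# KNOWN_SERVICES, is_service and normalize_args are hypothetical names.

KNOWN_SERVICES="vllm tabbyapi llamacpp ollama webui"

# Succeed if $1 is one of the known service names.
is_service() {
  local candidate="$1" svc
  for svc in $KNOWN_SERVICES; do
    [ "$svc" = "$candidate" ] && return 0
  done
  return 1
}

# Print the two arguments in canonical "<service> <action>" order,
# regardless of the order they were given in.
normalize_args() {
  local first="$1" second="$2"
  if is_service "$first"; then
    echo "$first $second"
  elif is_service "$second"; then
    echo "$second $first"
  else
    echo "unknown service: $first $second" >&2
    return 1
  fi
}

normalize_args model vllm      # prints: vllm model
normalize_args vllm model      # prints: vllm model
normalize_args shell tabbyapi  # prints: tabbyapi shell
```

Checking each argument against a list of known services keeps the dispatch order-insensitive without guessing: if neither argument is a service, the function fails instead of picking one.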

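If you are comfortable with Docker and Linux administration, you likely don't need Harbor itself to manage your local LLM environment. However, you are also likely to eventually arrive at a similar solution. I know this for a fact, since I was running a very similar setup myself, just without all the bells and whistles.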

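Harbor is not designed as a deployment solution, but rather as a helper for a local LLM development environment. It is a good starting point for experimenting with LLMs and related services.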

You can later eject from Harbor and use the services in your own setup, or continue using Harbor as a base for your own configuration.
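As a rough sketch of that path, the snippet below exports two services with `harbor eject` (the same command as in the list above) and then starts the exported file with plain Docker Compose. It assumes the ejected file can be passed to `docker compose -f` as-is. `DRY_RUN`, `eject_services`, and `start_standalone` are helpers invented for this example, and by default the script only prints the commands rather than running them.

```shell
#!/usr/bin/env bash
# Sketch: move from Harbor to a standalone Docker Compose setup.
# DRY_RUN, eject_services and start_standalone are hypothetical helpers
# for this example; they are not part of Harbor.
DRY_RUN="${DRY_RUN:-1}"              # 1 = only print commands (default)
EJECT_FILE="docker-compose.harbor.yml"

# Export the given services into a standalone compose file.
eject_services() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ harbor eject $* > $EJECT_FILE"
  else
    harbor eject "$@" > "$EJECT_FILE"
  fi
}

# Start the exported services without Harbor (assumes the file is
# usable directly by Docker Compose).
start_standalone() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ docker compose -f $EJECT_FILE up -d"
  else
    docker compose -f "$EJECT_FILE" up -d
  fi
}

eject_services searxng llamacpp
start_standalone
```

Set `DRY_RUN=0` to actually execute the commands once Harbor and Docker are installed.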

This project consists of a fairly large shell CLI, a fairly small .env file, and an enormous (for one repository) number of docker-compose files.
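To give a feel for how a shell CLI can tie one `.env` file and many compose files together, here is a minimal sketch that builds a single `docker compose` invocation from a list of service names. The file names (`compose.yml`, `compose.<service>.yml`) and the `compose_command` function are assumptions made for illustration and may not match Harbor's actual layout or logic.

```shell
#!/usr/bin/env bash
# Sketch: combine a base compose file with one file per requested service.
# File names and compose_command are hypothetical, not taken from Harbor.

compose_command() {
  local cmd="docker compose --env-file .env -f compose.yml" svc
  for svc in "$@"; do
    # Each service contributes its own compose file via an extra -f flag.
    cmd="$cmd -f compose.$svc.yml"
  done
  echo "$cmd up -d"
}

compose_command ollama webui
# prints: docker compose --env-file .env -f compose.yml -f compose.ollama.yml -f compose.webui.yml up -d
```

Docker Compose merges the files given by repeated `-f` flags in order, so each service can live in its own small file and be switched on simply by being named.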

… `hf`, `ollama`, etc.) … running `harbor eject`