A PyTorch implementation of the paper Efficiently Trainable Text-to-Speech System Based on Deep Convolutional Networks with Guided Attention.

Thanks to Kyubyong/dc_tts, which helped me overcome several difficulties.

I have tuned the hyperparameters and trained a model on The LJ Speech Dataset. The hyperparameters may not be optimal and differ slightly from those used in the original paper.

To train a model yourself with The LJ Speech Dataset:

Review the settings in pkg/hyper.py, then preprocess the dataset:

python3 main.py --action preprocess

Adjust the hyperparameters in pkg/hyper.py if needed, then train the two modules in turn:

python3 main.py --action train --module Text2Mel

python3 main.py --action train --module SuperRes
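The three commands above can be collected in a small driver script. The following is only a sketch: the helper names `build_commands` and `run_pipeline` are hypothetical and not part of this repository, and it assumes each `main.py` invocation exits on its own when it finishes, which may not hold for training runs that you stop manually.

```python
import subprocess

# Arguments taken from the commands listed in this README.
STEPS = [
    ["--action", "preprocess"],
    ["--action", "train", "--module", "Text2Mel"],
    ["--action", "train", "--module", "SuperRes"],
]


def build_commands(python="python3", script="main.py"):
    """Return the full command line for each step, in order."""
    return [[python, script, *args] for args in STEPS]


def run_pipeline(dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in build_commands():
        print(" ".join(cmd))
        if not dry_run:
            # check=True stops the pipeline at the first failing step.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run_pipeline(dry_run=True)
```

By default the script only prints the commands. Call `run_pipeline(dry_run=False)` from the repository root to actually run them.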

Some synthesized samples are in the synthesis directory. The corresponding sentences are listed in sentences.txt. The pre-trained Text2Mel and SuperRes models (auto-saved during training at logdir/text2mel/pkg/trained.pkg and logdir/superres/pkg/trained.pkg) are loaded when synthesizing.

You can synthesize the sentences listed in sentences.txt with

python3 main.py --action synthesis
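Since synthesis loads both saved models, it can help to confirm that the two checkpoint files exist before running the command. The sketch below uses the paths given in this README; the function name `missing_checkpoints` is hypothetical and not part of the repository, and the check assumes you run it from the repository root (or pass that directory as `root`).

```python
from pathlib import Path

# Checkpoint locations as stated in this README, relative to the repo root.
CHECKPOINTS = {
    "Text2Mel": Path("logdir/text2mel/pkg/trained.pkg"),
    "SuperRes": Path("logdir/superres/pkg/trained.pkg"),
}


def missing_checkpoints(root="."):
    """Return the names of the modules whose checkpoint file is absent."""
    root = Path(root)
    return [name for name, rel in CHECKPOINTS.items()
            if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_checkpoints()
    if missing:
        print("Missing checkpoints for:", ", ".join(missing))
    else:
        print("Both checkpoints found.")
```

If a module is reported as missing, either train it first or place the downloaded pre-trained file at the listed path.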

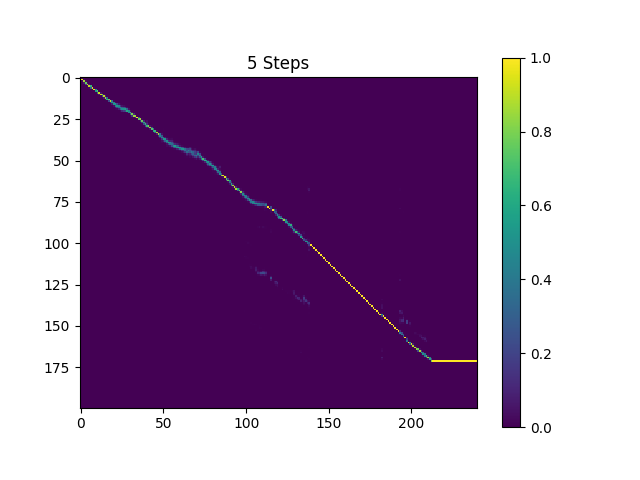

The samples in the synthesis directory were generated with Text2Mel trained for 410k batches and SuperRes trained for 190k batches.

The current results are not very satisfying; specifically, some vowels are skipped. I hope someone can find better hyperparameters and train better models. Please let me know if you manage to get a great model.

You can download the current pre-trained model from my Dropbox.

TensorFlow implementation: Kyubyong/dc_tts

Please email me or open an issue if you have any questions or suggestions.