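"Deep learning currently splits into two camps. The academic camp studies powerful, complex model architectures and experimental methods in pursuit of higher performance. The engineering camp aims to deploy algorithms on hardware platforms more stably and efficiently, with efficiency as its goal. Complex models do perform better, but their heavy storage and compute requirements are a major reason they are hard to deploy effectively on hardware platforms. The growing size of deep neural networks therefore poses a major challenge for deploying deep learning on mobile devices, and model compression and deployment has become a key research area for both academia and industry."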

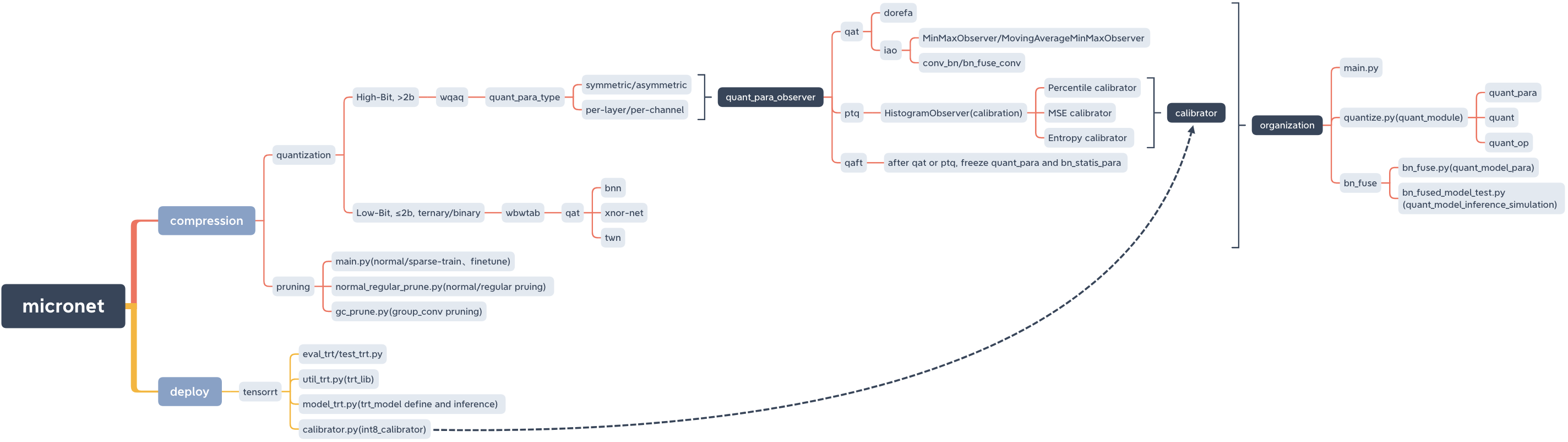

micronet, a model compression and deployment library.

    micronet
    ├── __init__.py
    ├── base_module
    │   ├── __init__.py
    │   └── op.py
    ├── compression
    │   ├── README.md
    │   ├── __init__.py
    │   ├── pruning
    │   │   ├── README.md
    │   │   ├── __init__.py
    │   │   ├── gc_prune.py
    │   │   ├── main.py
    │   │   ├── models_save
    │   │   │   └── models_save.txt
    │   │   └── normal_regular_prune.py
    │   └── quantization
    │       ├── README.md
    │       ├── __init__.py
    │       ├── wbwtab
    │       │   ├── __init__.py
    │       │   ├── bn_fuse
    │       │   │   ├── bn_fuse.py
    │       │   │   ├── bn_fused_model_test.py
    │       │   │   └── models_save
    │       │   │       └── models_save.txt
    │       │   ├── main.py
    │       │   ├── models_save
    │       │   │   └── models_save.txt
    │       │   └── quantize.py
    │       └── wqaq
    │           ├── __init__.py
    │           ├── dorefa
    │           │   ├── __init__.py
    │           │   ├── main.py
    │           │   ├── models_save
    │           │   │   └── models_save.txt
    │           │   ├── quant_model_test
    │           │   │   ├── models_save
    │           │   │   │   └── models_save.txt
    │           │   │   ├── quant_model_para.py
    │           │   │   └── quant_model_test.py
    │           │   └── quantize.py
    │           └── iao
    │               ├── __init__.py
    │               ├── bn_fuse
    │               │   ├── bn_fuse.py
    │               │   ├── bn_fused_model_test.py
    │               │   └── models_save
    │               │       └── models_save.txt
    │               ├── main.py
    │               ├── models_save
    │               │   └── models_save.txt
    │               └── quantize.py
    ├── data
    │   └── data.txt
    ├── deploy
    │   ├── README.md
    │   ├── __init__.py
    │   └── tensorrt
    │       ├── README.md
    │       ├── __init__.py
    │       ├── calibrator.py
    │       ├── eval_trt.py
    │       ├── models
    │       │   ├── __init__.py
    │       │   └── models_trt.py
    │       ├── models_save
    │       │   └── calibration_seg.cache
    │       ├── test_trt.py
    │       └── util_trt.py
    ├── models
    │   ├── __init__.py
    │   ├── nin.py
    │   ├── nin_gc.py
    │   └── resnet.py
    └── readme_imgs
        ├── code_structure.jpg
        └── micronet.xmind

PyPI

    pip install micronet -i https://pypi.org/simple

GitHub

    git clone https://github.com/666DZY666/micronet.git
    cd micronet
    python setup.py install

Verify

    python -c "import micronet; print(micronet.__version__)"

The commands below assume an install from GitHub.

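--refine, load pretrained floating-point model parameters and quantize on top of them

--W --A, quantization values for the weights (W) and activations (A)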
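As a rough illustration of what two-valued and three-valued weights look like, the sketch below binarizes and ternarizes a small weight list in plain Python. It assumes `--W 2` selects binary and `--W 3` ternary weights. The mean-absolute-value scale and the 0.7 × mean|w| ternary threshold are common choices in the literature, not something confirmed for micronet's `quantize.py`, and the function names are hypothetical.

```python
def binarize(weights):
    """Map each weight to +alpha or -alpha, with alpha = mean(|w|)."""
    alpha = sum(abs(w) for w in weights) / len(weights)
    return [alpha if w >= 0 else -alpha for w in weights]


def ternarize(weights, ratio=0.7):
    """Map each weight to one of {-alpha, 0, +alpha}.

    Weights with |w| <= ratio * mean(|w|) become 0; alpha is the mean
    magnitude of the remaining weights (an assumed heuristic).
    """
    threshold = ratio * sum(abs(w) for w in weights) / len(weights)
    kept = [abs(w) for w in weights if abs(w) > threshold]
    alpha = sum(kept) / len(kept) if kept else 0.0
    return [0.0 if abs(w) <= threshold else (alpha if w > 0 else -alpha)
            for w in weights]


w = [0.8, -0.1, 0.05, -0.9]
print(binarize(w))   # every entry has the same magnitude
print(ternarize(w))  # small weights are zeroed out
```

Only the forward mapping is shown; training such weights also needs a gradient approximation, which is outside this sketch.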

    cd micronet/compression/quantization/wbwtab
    python main.py --W 2 --A 2
    python main.py --W 2 --A 32
    python main.py --W 3 --A 2
    python main.py --W 3 --A 32

--w_bits --a_bits, quantization bit widths for the weights (W) and activations (A)

    cd micronet/compression/quantization/wqaq/dorefa
    python main.py --w_bits 16 --a_bits 16
    python main.py --w_bits 8 --a_bits 8
    python main.py --w_bits 4 --a_bits 4

    cd micronet/compression/quantization/wqaq/iao

Bit-width options are the same as for dorefa.
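For intuition about what `--w_bits` controls, here is a sketch of the k-bit weight quantizer described in the DoReFa-Net paper, written in plain Python. It is not taken from micronet's `quantize.py`, whose details may differ, and the function names are illustrative.

```python
import math


def quantize_k(x, k):
    """Uniformly quantize x in [0, 1] onto a grid with 2**k - 1 steps."""
    n = 2 ** k - 1
    return round(x * n) / n


def dorefa_weights(weights, k):
    """DoReFa-style k-bit weights in [-1, 1] (forward pass only)."""
    t = [math.tanh(w) for w in weights]
    # Fall back to 1.0 so an all-zero weight list does not divide by zero.
    max_abs = max(abs(v) for v in t) or 1.0
    # Squash to [0, 1], quantize, then map back to [-1, 1].
    return [2 * quantize_k(v / (2 * max_abs) + 0.5, k) - 1 for v in t]


print(dorefa_weights([0.5, -0.2, 0.0, -0.5], k=2))
```

With k bits, every output lies on a grid of 2**k evenly spaced values between -1 and 1, so lowering `k` coarsens the grid.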

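Single GPU

QAT/PTQ -> QAFT

! Note: run QAFT only after QAT/PTQ !

--q_type, quantization type (0: symmetric, 1: asymmetric)

--q_level, weight quantization level (0: per-channel, 1: per-layer)

--weight_observer, weight observer (0: MinMaxObserver, 1: MovingAverageMinMaxObserver)

--bn_fuse, flag for BN fusion during quantization

--bn_fuse_calib, flag for BN-fusion calibration during quantization

--pretrained_model, pretrained floating-point model

--qaft, QAFT flag

--ptq, ptq_observer

--ptq_control, ptq_control

--ptq_batch, number of batches used for PTQ

--percentile, percentile used for PTQ calibration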
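To make the `--q_type` choice concrete, the sketch below computes quantization parameters for one value range in both modes. The grid conventions (signed integers for symmetric, unsigned for asymmetric) and the function names are assumptions for illustration; micronet's IAO implementation may use different conventions.

```python
def symmetric_params(x_min, x_max, bits=8):
    """Scale for a signed symmetric grid; the zero point is fixed at 0."""
    q_max = 2 ** (bits - 1) - 1          # 127 for 8 bits
    scale = max(abs(x_min), abs(x_max)) / q_max
    return scale, 0


def asymmetric_params(x_min, x_max, bits=8):
    """Scale and zero point for an unsigned asymmetric grid."""
    q_max = 2 ** bits - 1                # 255 for 8 bits
    # Widen the range so that 0.0 is always exactly representable.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / q_max
    zero_point = round(-x_min / scale)
    return scale, zero_point


def fake_quantize(x, scale, zero_point, q_min, q_max):
    """Quantize a float to the integer grid, then dequantize it."""
    q = min(max(round(x / scale) + zero_point, q_min), q_max)
    return (q - zero_point) * scale


# A skewed range such as [-1, 3] wastes part of the symmetric grid.
print(symmetric_params(-1.0, 3.0))
print(asymmetric_params(-1.0, 3.0))
```

The sketch assumes `x_max > x_min`; a degenerate range would give a zero scale.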

QAT

    python main.py --q_type 0 --q_level 0 --weight_observer 0
    python main.py --q_type 0 --q_level 0 --weight_observer 1
    python main.py --q_type 0 --q_level 1
    python main.py --q_type 1 --q_level 0
    python main.py --q_type 1 --q_level 1
    python main.py --q_type 0 --q_level 0 --bn_fuse
    python main.py --q_type 0 --q_level 1 --bn_fuse
    python main.py --q_type 1 --q_level 0 --bn_fuse
    python main.py --q_type 1 --q_level 1 --bn_fuse
    python main.py --q_type 0 --q_level 0 --bn_fuse --bn_fuse_calib

PTQ

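A pretrained floating-point model must be loaded; in this project it can be obtained from the normal training step of the pruning workflow.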
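PTQ derives value ranges from calibration data instead of training. The sketch below shows one way a `--percentile` value could turn collected samples into a clipping range that ignores rare outliers. Dropping an equal tail on each side is an assumption; the source does not say how micronet applies the percentile, and `percentile_range` is a hypothetical helper.

```python
def percentile_range(values, percentile=0.9999):
    """Return (low, high) clipping bounds that keep the central mass.

    Assumed behaviour: discard the (1 - percentile) tail on each side.
    """
    ordered = sorted(values)
    last = len(ordered) - 1
    hi_idx = min(last, int(round(percentile * last)))
    lo_idx = last - hi_idx
    return ordered[lo_idx], ordered[hi_idx]


samples = [float(i) for i in range(100)] + [1000.0]  # one large outlier
print(percentile_range(samples, percentile=0.99))
print(percentile_range(samples, percentile=1.0))     # plain min/max
```

A percentile of 1.0 reduces to min/max calibration, which lets a single outlier stretch the whole quantization grid.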

    python main.py --refine ../../../pruning/models_save/nin_gc.pth --q_level 0 --bn_fuse --pretrained_model --ptq_control --ptq --batch_size 32 --ptq_batch 200 --percentile 0.999999

QAFT

! Note: run QAFT only after QAT/PTQ !

QAT -> QAFT

    python main.py --resume models_save/nin_gc_bn_fused.pth --q_type 0 --q_level 0 --bn_fuse --qaft --lr 0.00001

PTQ -> QAFT

    python main.py --resume models_save/nin_gc_bn_fused.pth --q_level 0 --bn_fuse --qaft --lr 0.00001 --ptq

Sparse training -> pruning -> fine-tuning

    cd micronet/compression/pruning

-sr, sparsity flag

--s, sparsity rate (tune it for the dataset and model)

--model_type, model type (0: nin, 1: nin_gc)
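The source does not say how the sparsity term is applied. A common scheme, assumed here, is the network-slimming approach: an L1 penalty with strength `s` on the BN scale factors, whose subgradient is added to the normal gradient at every step. The sketch uses plain lists in place of tensors, and the function names are hypothetical.

```python
def sign(x):
    """Return -1, 0, or 1 according to the sign of x."""
    return (x > 0) - (x < 0)


def add_sparsity_grad(bn_scales, grads, s):
    """Add the subgradient of s * sum(|gamma|) to the existing gradients."""
    return [g + s * sign(gamma) for gamma, g in zip(bn_scales, grads)]


gammas = [0.5, -0.2, 0.0]      # BN scale factors of three channels
grads = [0.01, 0.01, 0.01]     # gradients from the task loss
print(add_sparsity_grad(gammas, grads, s=0.001))
```

The extra term pushes every scale factor toward zero at a constant rate, so channels the task loss does not defend end up with small scales and become candidates for pruning. A larger `--s` prunes more aggressively at the cost of accuracy.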

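    python main.py -sr --s 0.0001 --model_type 0
    python main.py -sr --s 0.001 --model_type 1

--percent, pruning rate

--normal_regular, flag selecting normal or regular pruning, and the base for regular pruning (if set to N, the number of filters in each layer after pruning is a multiple of N)

--model, path to the model after sparse training

--save, path where the pruned model is saved (a default is provided; change it as needed)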
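The interaction between `--percent` and `--normal_regular` can be sketched as follows: pick one global threshold from all channel scores, keep the channels above it, then pad each layer's kept count to a multiple of N. Rounding up (rather than down), the use of a single global threshold, and the name `channels_to_keep` are assumptions; `normal_regular_prune.py` may differ.

```python
def channels_to_keep(layer_scales, percent, base=0):
    """Return, per layer, the sorted indices of the channels to keep.

    layer_scales: list of lists of per-channel importance scores.
    percent: fraction of all channels to prune, via one global threshold.
    base: if > 0, round each layer's kept count up to a multiple of base,
          capped at the layer width (rounding up is an assumption).
    """
    scores = sorted(abs(s) for layer in layer_scales for s in layer)
    cut = min(int(len(scores) * percent), len(scores) - 1)
    threshold = scores[cut]
    kept = []
    for layer in layer_scales:
        # Channel indices ordered from most to least important.
        order = sorted(range(len(layer)), key=lambda i: -abs(layer[i]))
        n_keep = max(1, sum(abs(s) >= threshold for s in layer))
        if base > 0:
            n_keep = min(len(layer), -(-n_keep // base) * base)
        kept.append(sorted(order[:n_keep]))
    return kept


layers = [[0.9, 0.1, 0.8, 0.2], [0.05, 0.7, 0.6, 0.3]]
print(channels_to_keep(layers, percent=0.5))
print(channels_to_keep(layers, percent=0.5, base=4))
```

Keeping filter counts at multiples of 8 or 16 generally maps better onto hardware, at the cost of pruning slightly less than `--percent` asks for.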

    python normal_regular_prune.py --percent 0.5 --model models_save/nin_sparse.pth --save models_save/nin_prune.pth
    python normal_regular_prune.py --percent 0.5 --normal_regular 8 --model models_save/nin_sparse.pth --save models_save/nin_prune.pth

or

    python normal_regular_prune.py --percent 0.5 --normal_regular 16 --model models_save/nin_sparse.pth --save models_save/nin_prune.pth
    python gc_prune.py --percent 0.4 --model models_save/nin_gc_sparse.pth

--prune_refine, path to the pruned model (fine-tuning starts from it)

    python main.py --model_type 0 --prune_refine models_save/nin_prune.pth

The cfg of the new model obtained after pruning must be passed in, for example:

    python main.py --model_type 1 --gc_prune_refine 154 162 144 304 320 320 608 584

Load the pruned floating-point model, then quantize

    cd micronet/compression/quantization/wqaq/dorefa
    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_quant ../../../pruning/models_save/nin_finetune.pth
    python main.py --w_bits 8 --a_bits 8 --model_type 1 --prune_quant ../../../pruning/models_save/nin_gc_retrain.pth

    cd micronet/compression/quantization/wqaq/iao

QAT/PTQ -> QAFT

! Note: run QAFT only after QAT/PTQ !

QAT

Without BN fusion

    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_quant ../../../pruning/models_save/nin_finetune.pth --lr 0.001
    python main.py --w_bits 8 --a_bits 8 --model_type 1 --prune_quant ../../../pruning/models_save/nin_gc_retrain.pth --lr 0.001

With BN fusion

    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_quant ../../../pruning/models_save/nin_finetune.pth --bn_fuse --pretrained_model --lr 0.001
    python main.py --w_bits 8 --a_bits 8 --model_type 1 --prune_quant ../../../pruning/models_save/nin_gc_retrain.pth --bn_fuse --pretrained_model --lr 0.001

PTQ

    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_quant ../../../pruning/models_save/nin_finetune.pth --bn_fuse --pretrained_model --ptq_control --ptq --batch_size 32 --ptq_batch 200 --percentile 0.999999

QAFT

! Note: run QAFT only after QAT/PTQ !

QAT -> QAFT

Without BN fusion

    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_qaft models_save/nin.pth --qaft --lr 0.00001
    python main.py --w_bits 8 --a_bits 8 --model_type 1 --prune_qaft models_save/nin_gc.pth --qaft --lr 0.00001

With BN fusion

    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_qaft models_save/nin_bn_fused.pth --bn_fuse --qaft --lr 0.00001
    python main.py --w_bits 8 --a_bits 8 --model_type 1 --prune_qaft models_save/nin_gc_bn_fused.pth --bn_fuse --qaft --lr 0.00001

PTQ -> QAFT

Without BN fusion

    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_qaft models_save/nin.pth --qaft --lr 0.00001 --ptq
    python main.py --w_bits 8 --a_bits 8 --model_type 1 --prune_qaft models_save/nin_gc.pth --qaft --lr 0.00001 --ptq

With BN fusion

    python main.py --w_bits 8 --a_bits 8 --model_type 0 --prune_qaft models_save/nin_bn_fused.pth --bn_fuse --qaft --lr 0.00001 --ptq
    python main.py --w_bits 8 --a_bits 8 --model_type 1 --prune_qaft models_save/nin_gc_bn_fused.pth --bn_fuse --qaft --lr 0.00001 --ptq

    cd micronet/compression/quantization/wbwtab
    python main.py --W 2 --A 2 --model_type 0 --prune_quant ../../pruning/models_save/nin_finetune.pth
    python main.py --W 2 --A 2 --model_type 1 --prune_quant ../../pruning/models_save/nin_gc_retrain.pth

    cd micronet/compression/quantization/wbwtab/bn_fuse

--model_type, 1: nin_gc (with grouped convolutions); 0: nin (standard convolutions)

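--prune_quant, flag for a pruned-then-quantized model

--W, weight quantization value

All of these must match the settings used during quantization training; the defaults can be used directly.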
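For background, BN fusion folds the batch-norm statistics into the preceding layer so that inference runs one operation instead of two. The sketch below shows the standard folding formula for a single output channel and checks it against running the two steps separately. It illustrates the general technique only; how `bn_fuse.py` handles quantized weights is not shown in the source, and the function names are illustrative.

```python
import math


def fuse_bn(weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Fold BN statistics into one output channel's weights and bias."""
    factor = gamma / math.sqrt(var + eps)
    fused_weight = [w * factor for w in weight]
    fused_bias = (bias - mean) * factor + beta
    return fused_weight, fused_bias


def conv_then_bn(x, weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Reference: a dot product (one conv output) followed by BN."""
    y = sum(w * v for w, v in zip(weight, x)) + bias
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


x = [1.0, -2.0, 0.5]
weight, bias = [0.3, 0.1, -0.4], 0.05
bn = dict(gamma=1.2, beta=-0.1, mean=0.2, var=0.9)

fw, fb = fuse_bn(weight, bias, **bn)
fused_out = sum(w * v for w, v in zip(fw, x)) + fb
print(fused_out, conv_then_bn(x, weight, bias, **bn))
```

The two printed values agree up to floating-point rounding, which is why the fused model can replace the original at inference time.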

    python bn_fuse.py --model_type 1 --W 2
    python bn_fuse.py --model_type 1 --prune_quant --W 2
    python bn_fuse.py --model_type 1 --W 3
    python bn_fuse.py --model_type 0 --W 2
    python bn_fused_model_test.py

    cd micronet/compression/quantization/wqaq/dorefa/quant_model_test

--model_type, 1: nin_gc (with grouped convolutions); 0: nin (standard convolutions)

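--prune_quant, flag for a pruned-then-quantized model

--w_bits, weight quantization bit width; --a_bits, activation quantization bit width

All of these must match the settings used during quantization training; the defaults can be used directly.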

    python quant_model_para.py --model_type 1 --w_bits 8 --a_bits 8
    python quant_model_para.py --model_type 1 --prune_quant --w_bits 8 --a_bits 8
    python quant_model_para.py --model_type 0 --w_bits 8 --a_bits 8
    python quant_model_test.py

Note: --bn_fuse must be set to True during quantization training.

    cd micronet/compression/quantization/wqaq/iao/bn_fuse

--model_type, 1: nin_gc (with grouped convolutions); 0: nin (standard convolutions)

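--prune_quant, flag for a pruned-then-quantized model

--w_bits, weight quantization bit width; --a_bits, activation quantization bit width

--q_type, 0: symmetric; 1: asymmetric

--q_level, 0: per-channel; 1: per-layer

All of these must match the settings used during quantization training; the defaults can be used directly.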
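The difference between the two `--q_level` settings is easiest to see on a weight matrix whose output channels have very different magnitudes. The sketch below computes symmetric scales both ways; the signed 8-bit grid and the function names are assumptions for illustration, not micronet's code.

```python
def per_layer_scale(weight, bits=8):
    """One symmetric scale shared by every output channel."""
    q_max = 2 ** (bits - 1) - 1
    return max(abs(w) for channel in weight for w in channel) / q_max


def per_channel_scales(weight, bits=8):
    """One symmetric scale per output channel (per row of `weight`)."""
    q_max = 2 ** (bits - 1) - 1
    return [max(abs(w) for w in channel) / q_max for channel in weight]


weight = [[0.02, -0.01], [1.5, -2.0]]   # two output channels
print(per_layer_scale(weight))
print(per_channel_scales(weight))
```

With a single per-layer scale, the small first channel is quantized with a step sized for the large second channel and loses most of its resolution; per-channel scales avoid that at the cost of storing one scale per channel.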

    python bn_fuse.py --model_type 1 --w_bits 8 --a_bits 8
    python bn_fuse.py --model_type 1 --prune_quant --w_bits 8 --a_bits 8
    python bn_fuse.py --model_type 0 --w_bits 8 --a_bits 8
    python bn_fuse.py --model_type 0 --w_bits 8 --a_bits 8 --q_type 1 --q_level 1
    python bn_fused_model_test.py

CPU and GPU (single or multiple GPUs) are currently supported.

--cpu uses the CPU; --gpu_id uses the GPU and selects which device(s)

    python main.py --cpu
    python main.py --gpu_id 0

or

    python main.py --gpu_id 1
    python main.py --gpu_id 0,1

or

    python main.py --gpu_id 0,1,2

By default, all GPUs on the server are used.
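One plausible way to implement flags like these is to translate them into the `CUDA_VISIBLE_DEVICES` environment variable before the framework initializes CUDA. The sketch below does that with `argparse`; it is a guess at the mechanism, not a copy of micronet's `main.py`, and `configure_devices` is a hypothetical helper.

```python
import argparse
import os


def configure_devices(argv=None):
    """Parse --cpu / --gpu_id and return the visible-device string.

    Returns "" for CPU, the id list (e.g. "0,1") for selected GPUs, or
    None when neither flag is given, which leaves every GPU visible.
    """
    parser = argparse.ArgumentParser()
    parser.add_argument("--cpu", action="store_true", help="run on CPU")
    parser.add_argument("--gpu_id", default=None, help="e.g. 0 or 0,1")
    args = parser.parse_args(argv)
    visible = "" if args.cpu else args.gpu_id
    if visible is not None:
        # Must be set before the deep learning framework touches CUDA.
        os.environ["CUDA_VISIBLE_DEVICES"] = visible
    return visible


print(configure_devices(["--gpu_id", "0,1"]))
```

An empty `CUDA_VISIBLE_DEVICES` hides every GPU, which forces CPU execution; leaving the variable untouched matches the "all GPUs by default" behaviour described above.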

Only the core module code is provided for now; a complete runnable demo will be added later.

A model can be quantized (High-Bit (>2b), Low-Bit (≤2b)/Ternary and Binary) by simply replacing op with quant_op.

    import torch.nn as nn
    import torch.nn.functional as F

    # some base_op, such as ``Add``, ``Concat``
    from micronet.base_module.op import *

    # ``quantize`` is quant_module; ``QuantConv2d``, ``QuantLinear``, ``QuantMaxPool2d``, ``QuantReLU`` are quant_op
    from micronet.compression.quantization.wbwtab.quantize import (
        QuantConv2d as quant_conv_wbwtab,
    )
    from micronet.compression.quantization.wbwtab.quantize import (
        ActivationQuantizer as quant_relu_wbwtab,
    )
    from micronet.compression.quantization.wqaq.dorefa.quantize import (
        QuantConv2d as quant_conv_dorefa,
    )
    from micronet.compression.quantization.wqaq.dorefa.quantize import (
        QuantLinear as quant_linear_dorefa,
    )
    from micronet.compression.quantization.wqaq.iao.quantize import (
        QuantConv2d as quant_conv_iao,
    )
    from micronet.compression.quantization.wqaq.iao.quantize import (
        QuantLinear as quant_linear_iao,
    )
    from micronet.compression.quantization.wqaq.iao.quantize import (
        QuantMaxPool2d as quant_max_pool_iao,
    )
    from micronet.compression.quantization.wqaq.iao.quantize import (
        QuantReLU as quant_relu_iao,
    )


    class LeNet(nn.Module):
        def __init__(self):
            super(LeNet, self).__init__()
            self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
            self.conv2 = nn.Conv2d(10, 20, kernel_size=5)
            self.fc1 = nn.Linear(320, 50)
            self.fc2 = nn.Linear(50, 10)
            self.max_pool = nn.MaxPool2d(kernel_size=2)
            self.relu = nn.ReLU(inplace=True)

        def forward(self, x):
            x = self.relu(self.max_pool(self.conv1(x)))
            x = self.relu(self.max_pool(self.conv2(x)))
            x = x.view(-1, 320)
            x = self.relu(self.fc1(x))
            x = F.dropout(x, training=self.training)
            x = self.fc2(x)
            return F.log_softmax(x, dim=1)


    class QuantLeNetWbWtAb(nn.Module):
        def __init__(self):
            super(QuantLeNetWbWtAb, self).__init__()
            self.conv1 = quant_conv_wbwtab(1, 10, kernel_size=5)
            self.conv2 = quant_conv_wbwtab(10, 20, kernel_size=5)
            self.fc1 = nn.Linear(320, 50)
            self.fc2 = nn.Linear(50, 10)
            self.max_pool = nn.MaxPool2d(kernel_size=2)
            self.relu = quant_relu_wbwtab()

        def forward(self, x):
            x = self.relu(self.max_pool(self.conv1(x)))
            x = self.relu(self.max_pool(self.conv2(x)))
            x = x.view(-1, 320)
            x = self.relu(self.fc1(x))
            x = F.dropout(x, training=self.training)
            x = self.fc2(x)
            return F.log_softmax(x, dim=1)


    class QuantLeNetDoReFa(nn.Module):
        def __init__(self):
            super(QuantLeNetDoReFa, self).__init__()
            self.conv1 = quant_conv_dorefa(1, 10, kernel_size=5)
            self.conv2 = quant_conv_dorefa(10, 20, kernel_size=5)
            self.fc1 = quant_linear_dorefa(320, 50)
            self.fc2 = quant_linear_dorefa(50, 10)
            self.max_pool = nn.MaxPool2d(kernel_size=2)
            self.relu = nn.ReLU(inplace=True)

        def forward(self, x):
            x = self.relu(self.max_pool(self.conv1(x)))
            x = self.relu(self.max_pool(self.conv2(x)))
            x = x.view(-1, 320)
            x = self.relu(self.fc1(x))
            x = F.dropout(x, training=self.training)
            x = self.fc2(x)
            return F.log_softmax(x, dim=1)


    class QuantLeNetIAO(nn.Module):
        def __init__(self):
            super(QuantLeNetIAO, self).__init__()
            self.conv1 = quant_conv_iao(1, 10, kernel_size=5)
            self.conv2 = quant_conv_iao(10, 20, kernel_size=5)
            self.fc1 = quant_linear_iao(320, 50)
            self.fc2 = quant_linear_iao(50, 10)
            self.max_pool = quant_max_pool_iao(kernel_size=2)
            self.relu = nn.ReLU(inplace=True)

        def forward(self, x):
            x = self.relu(self.max_pool(self.conv1(x)))
            x = self.relu(self.max_pool(self.conv2(x)))
            x = x.view(-1, 320)
            x = self.relu(self.fc1(x))
            x = F.dropout(x, training=self.training)
            x = self.fc2(x)
            return F.log_softmax(x, dim=1)


    lenet = LeNet()
    quant_lenet_wbwtab = QuantLeNetWbWtAb()
    quant_lenet_dorefa = QuantLeNetDoReFa()
    quant_lenet_iao = QuantLeNetIAO()

    print("***ori_model***\n", lenet)
    print("\n***quant_model_wbwtab***\n", quant_lenet_wbwtab)
    print("\n***quant_model_dorefa***\n", quant_lenet_dorefa)
    print("\n***quant_model_iao***\n", quant_lenet_iao)

    print("\nquant_model is ready")
    print("micronet is ready")

A model can be quantized (High-Bit (>2b), Low-Bit (≤2b)/Ternary and Binary) by simply using micronet.compression.quantization.quantize.prepare(model).

    import torch.nn as nn
    import torch.nn.functional as F

    # some base_op, such as ``Add``, ``Concat``
    from micronet.base_module.op import *
    import micronet.compression.quantization.wqaq.dorefa.quantize as quant_dorefa
    import micronet.compression.quantization.wqaq.iao.quantize as quant_iao


    class LeNet(nn.Module):
        def __init__(self):
            super(LeNet, self).__init__()
            self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
            self.conv2 = nn.Conv2d(10, 20, kernel_size=5)
            self.fc1 = nn.Linear(320, 50)
            self.fc2 = nn.Linear(50, 10)
            self.max_pool = nn.MaxPool2d(kernel_size=2)
            self.relu = nn.ReLU(inplace=True)

        def forward(self, x):
            x = self.relu(self.max_pool(self.conv1(x)))
            x = self.relu(self.max_pool(self.conv2(x)))
            x = x.view(-1, 320)
            x = self.relu(self.fc1(x))
            x = F.dropout(x, training=self.training)
            x = self.fc2(x)
            return F.log_softmax(x, dim=1)


    """
    --w_bits --a_bits, quantization bit widths for the weights (W) and activations (A)
    --q_type, quantization type (0: symmetric, 1: asymmetric)
    --q_level, weight quantization level (0: per-channel, 1: per-layer)
    --weight_observer, weight observer (0: MinMaxObserver, 1: MovingAverageMinMaxObserver)
    --bn_fuse, flag for BN fusion during quantization
    --bn_fuse_calib, flag for BN-fusion calibration during quantization
    --pretrained_model, pretrained floating-point model
    --qaft, QAFT flag
    --ptq, PTQ flag
    --percentile, percentile used for PTQ calibration
    """

    lenet = LeNet()
    quant_lenet_dorefa = quant_dorefa.prepare(lenet, inplace=False, a_bits=8, w_bits=8)
    quant_lenet_iao = quant_iao.prepare(
        lenet,
        inplace=False,
        a_bits=8,
        w_bits=8,
        q_type=0,
        q_level=0,
        weight_observer=0,
        bn_fuse=False,
        bn_fuse_calib=False,
        pretrained_model=False,
        qaft=False,
        ptq=False,
        percentile=0.9999,
    )

    # if ptq == False, do qat/qaft, need train
    # if ptq == True, do ptq, don't need train
    # you can refer to micronet/compression/quantization/wqaq/iao/main.py

    print("***ori_model***\n", lenet)
    print("\n***quant_model_dorefa***\n", quant_lenet_dorefa)
    print("\n***quant_model_iao***\n", quant_lenet_iao)

    print("\nquant_model is ready")
    print("micronet is ready")

Test:

    python -c "import micronet; micronet.quant_test_manual()"
    python -c "import micronet; micronet.quant_test_auto()"

When "quant_model is ready" is printed, micronet is ready.

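See "BN fusion and quantized-inference simulation tests".

The following is a CIFAR-10 example; other combinations of compression methods can be tried on more redundant models and larger datasets.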

| Type | W (bits) | A (bits) | Acc | GFLOPs | Params (M) | Size (MB) | Compression rate | Accuracy loss |
|---|---|---|---|---|---|---|---|---|
| Original model (nin) | FP32 | FP32 | 91.01% | 0.15 | 0.67 | 2.68 | *** | *** |
| With grouped convolutions (nin_gc) | FP32 | FP32 | 91.04% | 0.15 | 0.58 | 2.32 | 13.43% | -0.03% |
| Pruning | FP32 | FP32 | 90.26% | 0.09 | 0.32 | 1.28 | 52.24% | 0.75% |
| Quantization | 1 | FP32 | 90.93% | *** | 0.58 | 0.204 | 92.39% | 0.08% |
| Quantization | 1.5 | FP32 | 91% | *** | 0.58 | 0.272 | 89.85% | 0.01% |
| Quantization | 1 | 1 | 86.23% | *** | 0.58 | 0.204 | 92.39% | 4.78% |
| Quantization | 1.5 | 1 | 86.48% | *** | 0.58 | 0.272 | 89.85% | 4.53% |
| Quantization (DoReFa) | 8 | 8 | 91.03% | *** | 0.58 | 0.596 | 77.76% | -0.02% |
| Quantization (IAO, full quantization, symmetric/per-channel/bn_fuse) | 8 | 8 | 90.99% | *** | 0.58 | 0.596 | 77.76% | 0.02% |
| Grouped convolutions + pruning + quantization | 1.5 | 1 | 86.13% | *** | 0.32 | 0.19 | 92.91% | 4.88% |
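The compression-rate and loss columns can be reproduced from the other columns: the rate is the fraction of the original model's size that was removed, and the loss is the original accuracy minus the compressed accuracy. The helper names below are illustrative. The weights-only size estimate matches the FP32 rows; the quantized rows report somewhat larger sizes than that estimate, so for those rows the sizes are taken from the table.

```python
def model_size_mb(params_millions, bits_per_weight):
    """Storage for the weights alone, in MB (1 MB = 10**6 bytes)."""
    return params_millions * bits_per_weight / 8


def compression_rate(baseline_mb, compressed_mb):
    """Fraction of the baseline size that was removed."""
    return 1 - compressed_mb / baseline_mb


baseline_mb = model_size_mb(0.67, 32)                  # nin, FP32
print(round(baseline_mb, 2))                           # size of the original model
print(f"{compression_rate(baseline_mb, 1.28):.2%}")    # pruned model
print(f"{compression_rate(baseline_mb, 0.204):.2%}")   # 1-bit weights
print(f"{91.01 - 90.26:.2f}%")                         # accuracy loss of pruning
```

A negative loss, as in the nin_gc and DoReFa rows, means the compressed model scored slightly higher than the original.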

--train_batch_size 256, single GPU

Binarized Neural Networks: Training Neural Networks with Weights and Activations Constrained to +1 or −1

XNOR-Net: ImageNet Classification Using Binary Convolutional Neural Networks

An Empirical Study of Binary Neural Networks' Optimisation

A Review of Binarized Neural Networks