LiLT

1.0.0

[2022/10] LiLT has been added to HuggingFace/Transformers.

[2022/03] Initial model and code release.

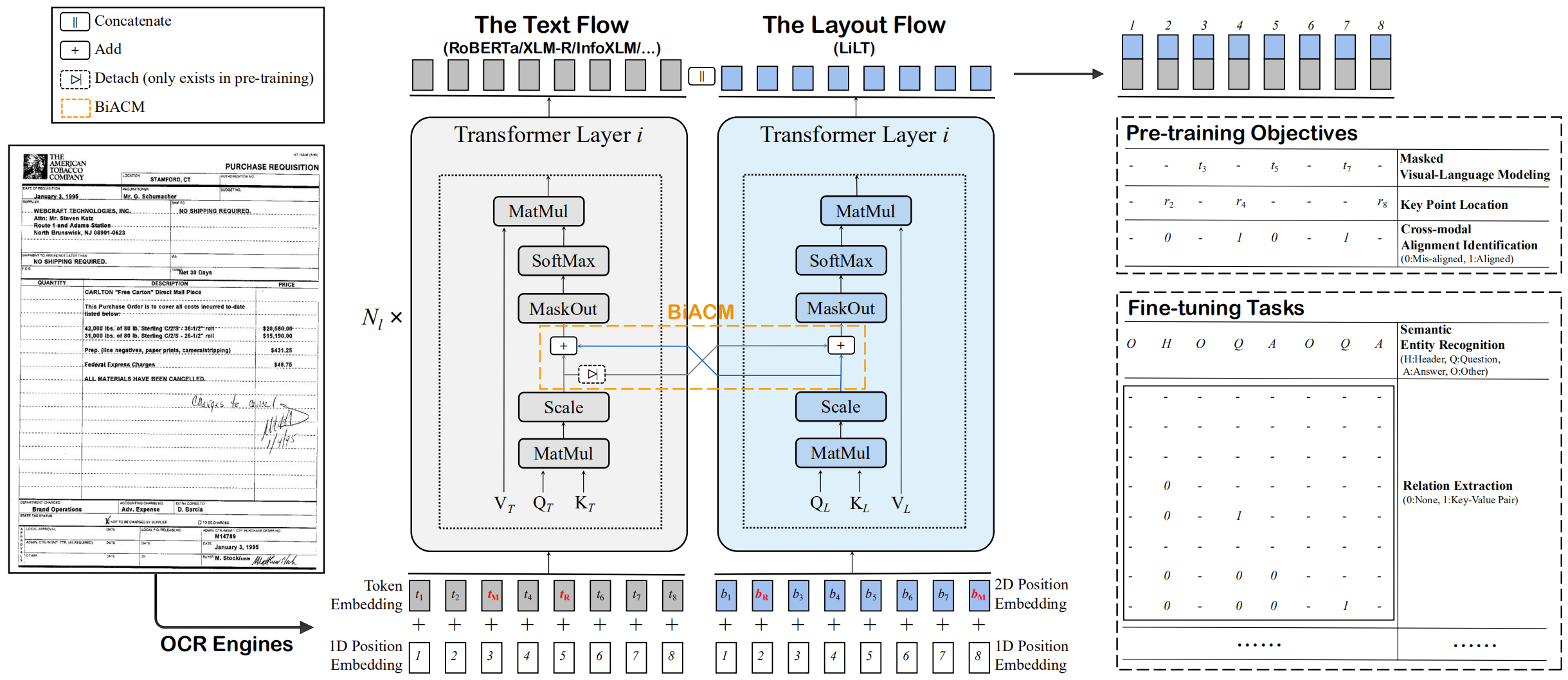

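This is the official PyTorch implementation of the ACL 2022 paper "LiLT: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding". [official] [arXiv]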

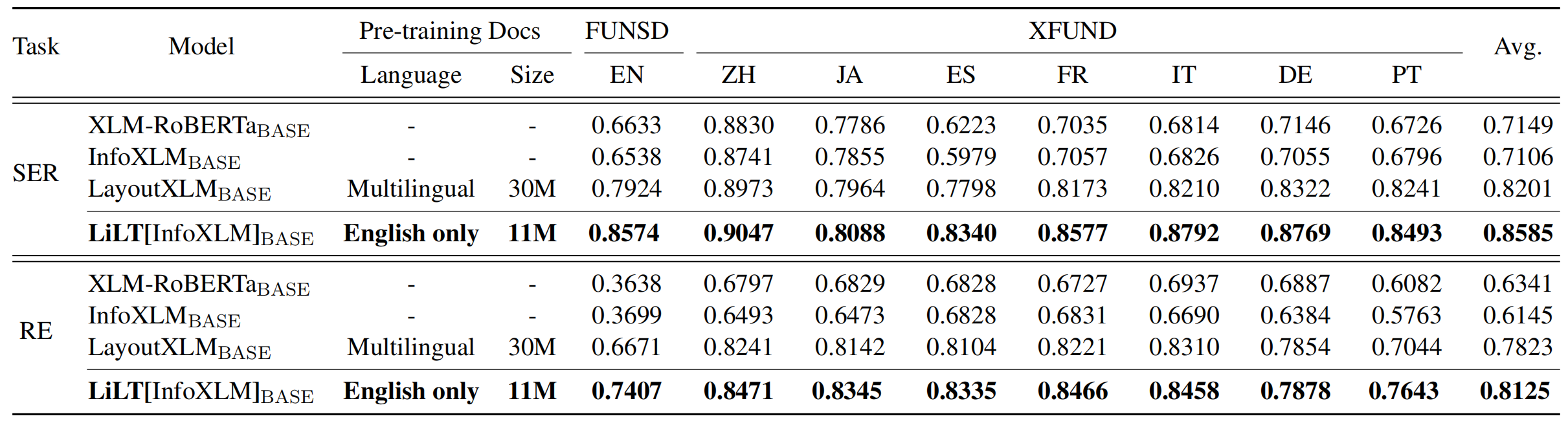

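LiLT is pre-trained on visually-rich documents of a single language (English) and can be directly fine-tuned on other languages with the corresponding off-the-shelf monolingual or multilingual pre-trained textual models. We hope the public availability of this work can help document intelligence research.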

For CUDA 11.X:

    conda create -n liltfinetune python=3.7
    conda activate liltfinetune
    conda install pytorch==1.7.1 torchvision==0.8.2 cudatoolkit=11.0 -c pytorch
    python -m pip install detectron2==0.5 -f https://dl.fbaipublicfiles.com/detectron2/wheels/cu110/torch1.7/index.html
    git clone https://github.com/jpWang/LiLT
    cd LiLT
    pip install -r requirements.txt
    pip install -e .

Or check your Detectron2/PyTorch versions and modify the command lines accordingly.
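When adapting the install commands to another CUDA or PyTorch version, the part that usually has to change is the Detectron2 wheel index URL. The sketch below rebuilds that URL from the two version strings. It assumes the URL layout seen in the command above (`cu<CUDA digits>/torch<major.minor>`); the helper name `detectron2_wheel_index` is hypothetical, and a prebuilt wheel is not guaranteed to exist for every combination.

```python
def detectron2_wheel_index(cuda_version: str, torch_version: str) -> str:
    """Build a Detectron2 wheel index URL for a CUDA / PyTorch pair.

    Assumption: the URL follows the layout used in the install command,
    i.e. .../wheels/cu<CUDA digits>/torch<major.minor>/index.html
    """
    # "11.0" -> "cu110"
    cuda_tag = "cu" + cuda_version.replace(".", "")
    # "1.7.1" -> "torch1.7" (only major.minor is used in the path)
    torch_tag = "torch" + ".".join(torch_version.split(".")[:2])
    return (
        "https://dl.fbaipublicfiles.com/detectron2/wheels/"
        f"{cuda_tag}/{torch_tag}/index.html"
    )


if __name__ == "__main__":
    # Reproduces the URL used in the CUDA 11.X instructions.
    print(detectron2_wheel_index("11.0", "1.7.1"))
```

The resulting string can be passed to `pip install detectron2==<version> -f <url>`; check that the index page actually exists before relying on it.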

In this repository, we provide the fine-tuning code for FUNSD and XFUND.

You can download our pre-processed data (~1.2GB) and put the unzipped `xfund&funsd/` directory under `LiLT/`.

| Model | Language | Size | Download |
|---|---|---|---|
| lilt-roberta-en-base | en | 293MB | OneDrive |
| lilt-infoxlm-base | mul | 846MB | OneDrive |
| lilt-only-base | none | 21MB | OneDrive |

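If you want to combine the pre-trained LiLT with a RoBERTa-like model for another language, download `lilt-only-base` and use `gen_weight_roberta_like.py` to generate your own pre-trained checkpoint.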
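Conceptually, this step joins two sets of parameters: the text weights from a RoBERTa-like model and the layout weights from `lilt-only-base`. The toy sketch below illustrates that idea with plain dictionaries. It is not the code of `gen_weight_roberta_like.py`: the function name, the parameter names, the `roberta.` to `lilt.` prefix rename, and the choice to fail on a name collision are all assumptions made for illustration.

```python
def merge_checkpoints(text_weights, layout_weights,
                      text_prefix="roberta.", target_prefix="lilt."):
    """Combine text and layout parameters into a single dict.

    Hypothetical helper: prefixes and collision handling are assumptions
    of this sketch, not documented behaviour of the repository script.
    """
    merged = {}
    for name, value in text_weights.items():
        # Rename text parameters so they live under the combined model's prefix.
        if name.startswith(text_prefix):
            name = target_prefix + name[len(text_prefix):]
        merged[name] = value

    # Refuse to silently overwrite a parameter that exists on both sides.
    overlap = sorted(set(merged) & set(layout_weights))
    if overlap:
        raise ValueError(f"parameters defined twice: {overlap}")

    merged.update(layout_weights)
    return merged


# Toy stand-ins for real tensors; all parameter names are hypothetical.
text = {
    "roberta.embeddings.word_embeddings.weight": [0.1, 0.2],
    "roberta.encoder.layer.0.attention.self.query.weight": [0.3],
}
layout = {"lilt.layout_embeddings.x_position_embeddings.weight": [0.5]}

merged = merge_checkpoints(text, layout)
for name in sorted(merged):
    print(name)
```

With real checkpoints the values would be tensors loaded from each `pytorch_model.bin`; use the provided script for actual conversion.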

For example, to combine `lilt-only-base` with the English `roberta-base`:

    mkdir roberta-en-base
    wget https://huggingface.co/roberta-base/resolve/main/config.json -O roberta-en-base/config.json
    wget https://huggingface.co/roberta-base/resolve/main/pytorch_model.bin -O roberta-en-base/pytorch_model.bin
    python gen_weight_roberta_like.py \
        --lilt lilt-only-base/pytorch_model.bin \
        --text roberta-en-base/pytorch_model.bin \
        --config roberta-en-base/config.json \
        --out lilt-roberta-en-base

Or to combine `lilt-only-base` with `microsoft/infoxlm-base`:

    mkdir infoxlm-base
    wget https://huggingface.co/microsoft/infoxlm-base/resolve/main/config.json -O infoxlm-base/config.json
    wget https://huggingface.co/microsoft/infoxlm-base/resolve/main/pytorch_model.bin -O infoxlm-base/pytorch_model.bin
    python gen_weight_roberta_like.py \
        --lilt lilt-only-base/pytorch_model.bin \
        --text infoxlm-base/pytorch_model.bin \
        --config infoxlm-base/config.json \
        --out lilt-infoxlm-base

Semantic entity recognition (SER) on FUNSD:

    CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 examples/run_funsd.py \
        --model_name_or_path lilt-roberta-en-base \
        --tokenizer_name roberta-base \
        --output_dir ser_funsd_lilt-roberta-en-base \
        --do_train \
        --do_predict \
        --max_steps 2000 \
        --per_device_train_batch_size 8 \
        --warmup_ratio 0.1 \
        --fp16
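The flags in the FUNSD command determine the overall training budget. The short calculation below works it out, assuming no gradient accumulation (the command does not set any) and one process per GPU. The `RunBudget` class is a hypothetical helper for this illustration only.

```python
from dataclasses import dataclass


@dataclass
class RunBudget:
    """Training budget implied by the launch flags (hypothetical helper)."""
    num_gpus: int                # --nproc_per_node
    per_device_batch_size: int   # --per_device_train_batch_size
    max_steps: int               # --max_steps
    warmup_ratio: float          # --warmup_ratio

    @property
    def global_batch_size(self) -> int:
        # Each GPU processes its own batch at every optimizer step.
        return self.num_gpus * self.per_device_batch_size

    @property
    def warmup_steps(self) -> int:
        # Fraction of the run spent warming up the learning rate.
        return round(self.max_steps * self.warmup_ratio)

    @property
    def samples_seen(self) -> int:
        return self.global_batch_size * self.max_steps


funsd = RunBudget(num_gpus=4, per_device_batch_size=8,
                  max_steps=2000, warmup_ratio=0.1)
print(funsd.global_batch_size)  # 32
print(funsd.warmup_steps)       # 200
print(funsd.samples_seen)       # 64000
```

If you train on fewer GPUs, the global batch size shrinks proportionally unless you raise the per-device batch size, which may affect the results.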

Language-specific (for example, ZH) semantic entity recognition on XFUND:

    CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 examples/run_xfun_ser.py \
        --model_name_or_path lilt-infoxlm-base \
        --tokenizer_name xlm-roberta-base \
        --output_dir ls_ser_xfund_zh_lilt-infoxlm-base \
        --do_train \
        --do_eval \
        --lang zh \
        --max_steps 2000 \
        --per_device_train_batch_size 16 \
        --warmup_ratio 0.1 \
        --fp16
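To run the same language-specific experiment for other XFUND languages, only `--lang` and `--output_dir` need to change. The sketch below prints one command per language. It assumes the script accepts the seven XFUND language codes listed in `XFUND_LANGS` and that the same hyperparameters are reasonable for every language; `build_ser_command` is a hypothetical helper, not part of the repository.

```python
import shlex

# The seven languages covered by XFUND (assumed to be valid --lang values).
XFUND_LANGS = ["zh", "ja", "es", "fr", "it", "de", "pt"]


def build_ser_command(lang: str, num_gpus: int = 4) -> str:
    """Return the language-specific SER launch command as one shell line."""
    devices = ",".join(str(i) for i in range(num_gpus))
    argv = [
        "python", "-m", "torch.distributed.launch",
        f"--nproc_per_node={num_gpus}",
        "examples/run_xfun_ser.py",
        "--model_name_or_path", "lilt-infoxlm-base",
        "--tokenizer_name", "xlm-roberta-base",
        "--output_dir", f"ls_ser_xfund_{lang}_lilt-infoxlm-base",
        "--do_train",
        "--do_eval",
        "--lang", lang,
        "--max_steps", "2000",
        "--per_device_train_batch_size", "16",
        "--warmup_ratio", "0.1",
        "--fp16",
    ]
    # Quote each argument so the line is safe to paste into a shell.
    quoted = " ".join(shlex.quote(arg) for arg in argv)
    return f"CUDA_VISIBLE_DEVICES={devices} {quoted}"


for lang in XFUND_LANGS:
    print(build_ser_command(lang))
```

Printing the commands instead of executing them makes it easy to review them or paste them into a job scheduler.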

Language-specific (for example, ZH) relation extraction (RE) on XFUND:

    CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 examples/run_xfun_re.py \
        --model_name_or_path lilt-infoxlm-base \
        --tokenizer_name xlm-roberta-base \
        --output_dir ls_re_xfund_zh_lilt-infoxlm-base \
        --do_train \
        --do_eval \
        --lang zh \
        --max_steps 5000 \
        --per_device_train_batch_size 8 \
        --learning_rate 6.25e-6 \
        --warmup_ratio 0.1 \
        --fp16
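The combination of `--learning_rate`, `--warmup_ratio`, and `--max_steps` shapes the learning-rate curve over the run. The sketch below computes that curve under the assumption that the training script uses a linear warmup followed by a linear decay to zero (the default schedule of the HuggingFace `Trainer`); the README does not state which schedule is used, so treat this as an illustration only.

```python
def linear_warmup_decay_lr(step: int,
                           base_lr: float = 6.25e-6,
                           max_steps: int = 5000,
                           warmup_ratio: float = 0.1) -> float:
    """Learning rate at a given step, assuming linear warmup then linear decay."""
    warmup_steps = round(max_steps * warmup_ratio)
    if warmup_steps > 0 and step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup.
        return base_lr * step / warmup_steps
    # Decay linearly from base_lr to 0 over the remaining steps.
    remaining = max(0, max_steps - step)
    return base_lr * remaining / max(1, max_steps - warmup_steps)


# Sample the curve at the start, mid-warmup, the peak, mid-decay, and the end.
for step in (0, 250, 500, 2750, 5000):
    print(step, linear_warmup_decay_lr(step))
```

With the RE flags above, warmup covers the first 500 of 5000 steps, and the peak rate of 6.25e-6 is reached at step 500.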

Multi-task semantic entity recognition on XFUND:

    CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 examples/run_xfun_ser.py \
        --model_name_or_path lilt-infoxlm-base \
        --tokenizer_name xlm-roberta-base \
        --output_dir mt_ser_xfund_all_lilt-infoxlm-base \
        --do_train \
        --additional_langs all \
        --max_steps 16000 \
        --per_device_train_batch_size 16 \
        --warmup_ratio 0.1 \
        --fp16

Multi-task relation extraction on XFUND:

    CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 examples/run_xfun_re.py \
        --model_name_or_path lilt-infoxlm-base \
        --tokenizer_name xlm-roberta-base \
        --output_dir mt_re_xfund_all_lilt-infoxlm-base \
        --do_train \
        --additional_langs all \
        --max_steps 40000 \
        --per_device_train_batch_size 8 \
        --learning_rate 6.25e-6 \
        --warmup_ratio 0.1 \
        --fp16

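This repository benefits greatly from unilm/layoutlmft. Many thanks for their excellent work.

If our paper helps your research, please cite it in your publication(s):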

    @inproceedings{wang-etal-2022-lilt,
        title = "{L}i{LT}: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding",
        author = {Wang, Jiapeng and Jin, Lianwen and Ding, Kai},
        booktitle = "Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",
        month = may,
        year = "2022",
        publisher = "Association for Computational Linguistics",
        url = "https://aclanthology.org/2022.acl-long.534",
        doi = "10.18653/v1/2022.acl-long.534",
        pages = "7747--7757",
    }

Suggestions and discussions are very welcome. Please contact the authors by email at [email protected].