AgentOps helps developers build, evaluate, and monitor AI agents, from prototype to production.

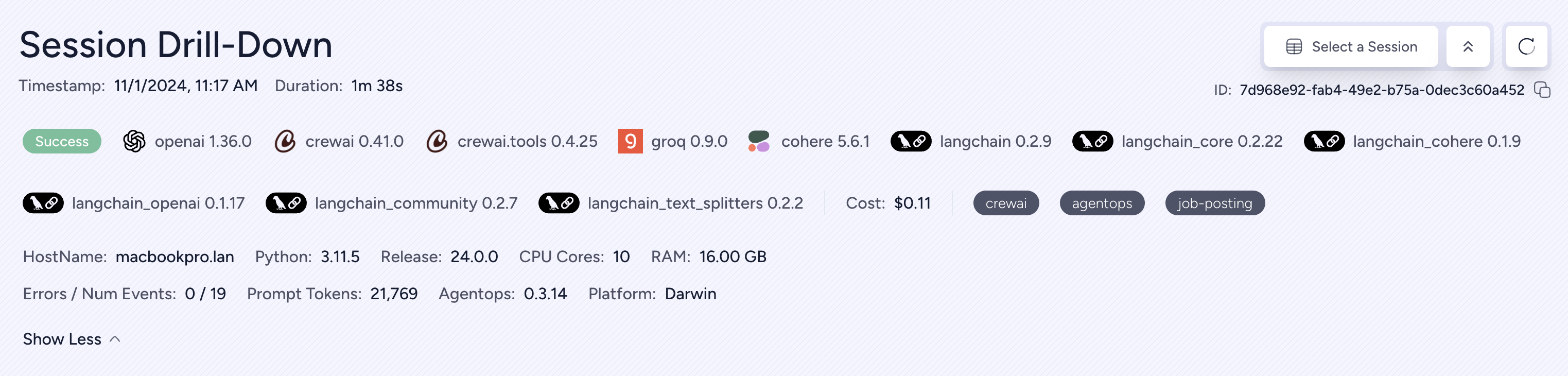

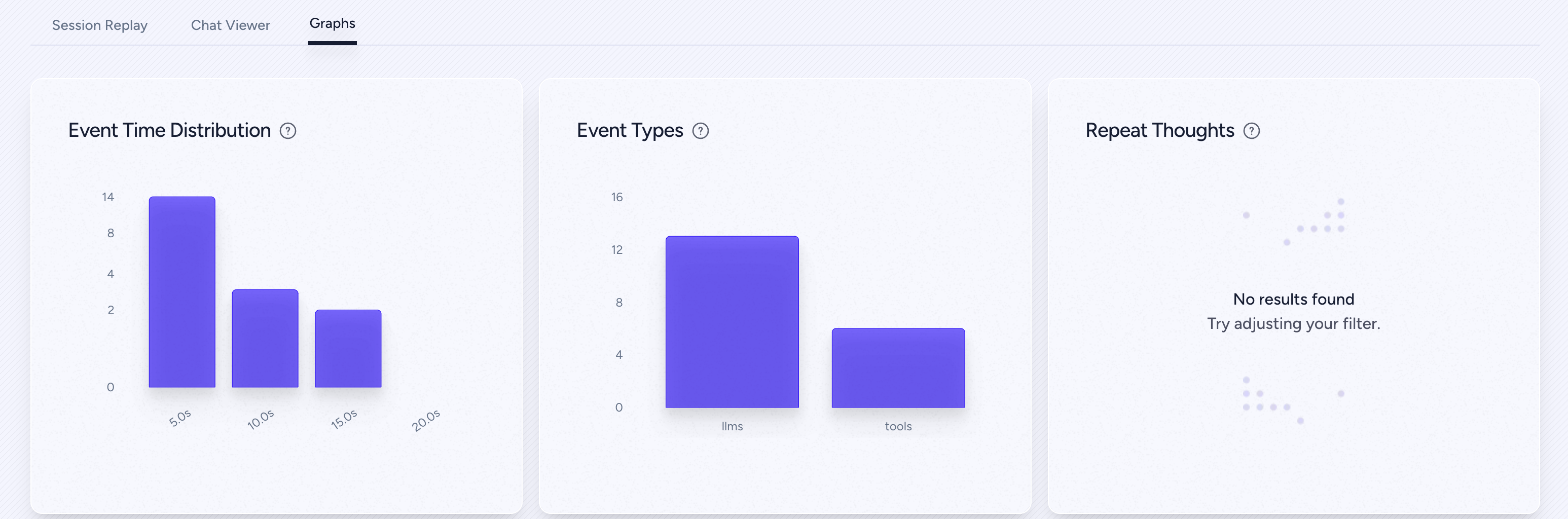

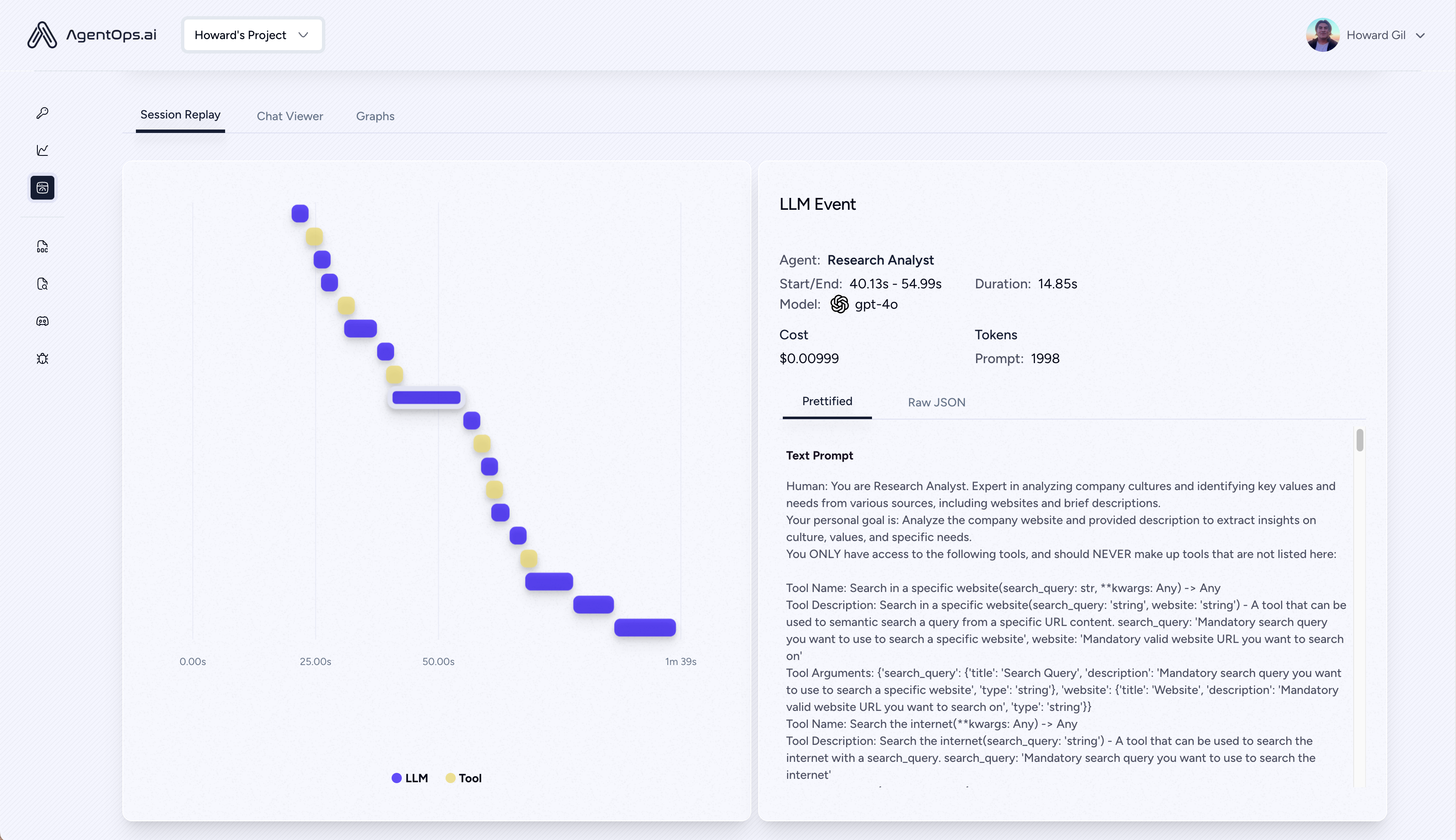

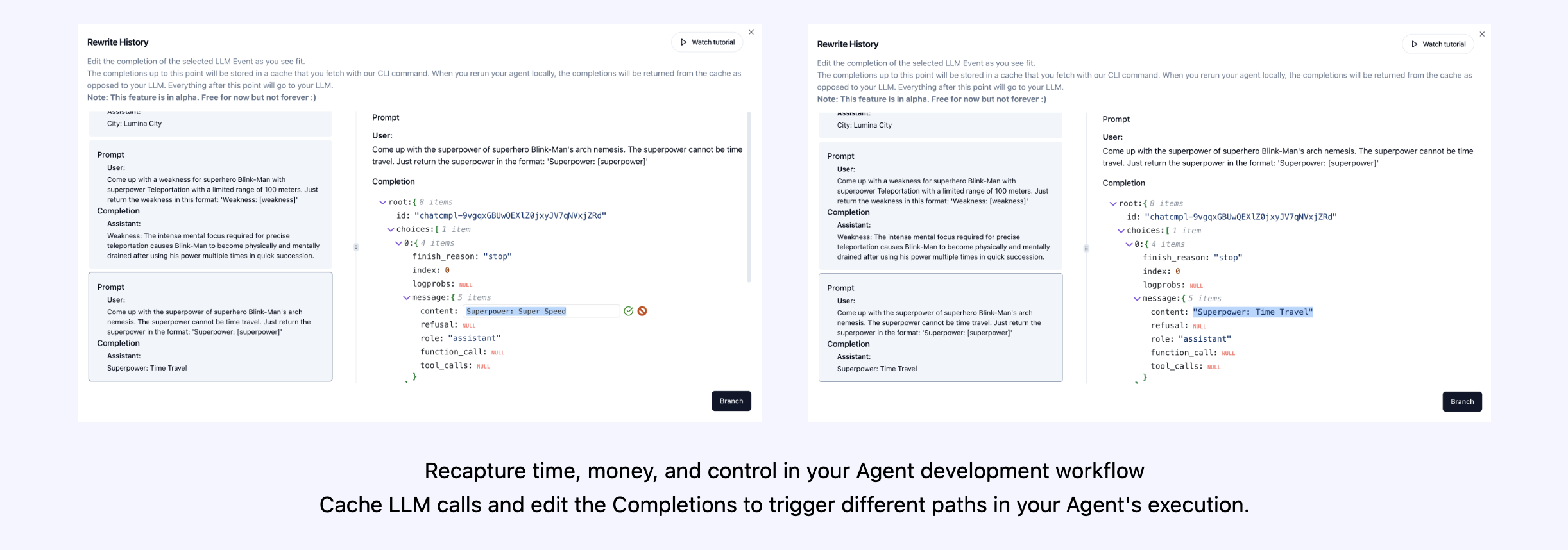

| 重播分析和调试 | 分步代理执行图 |

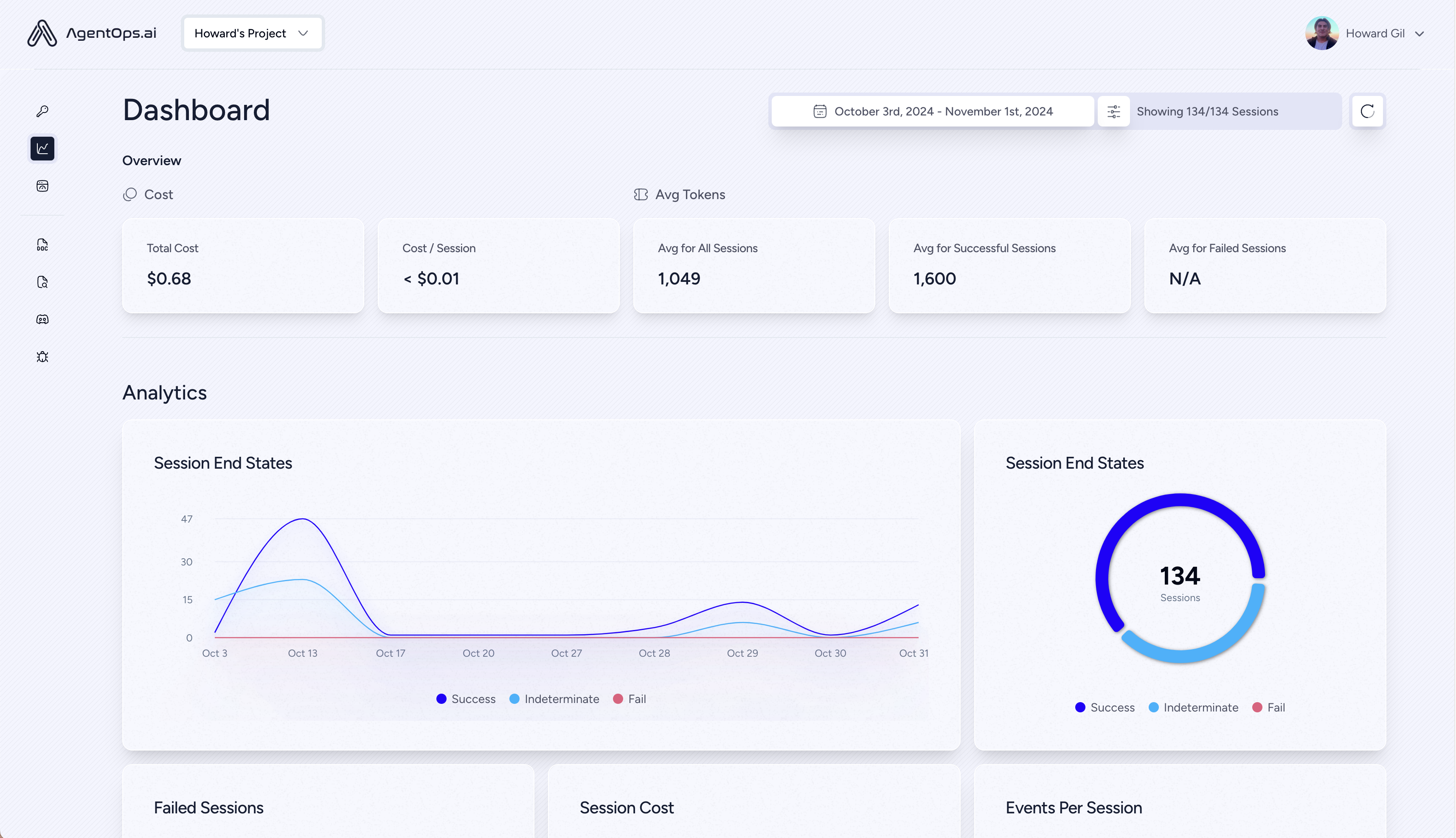

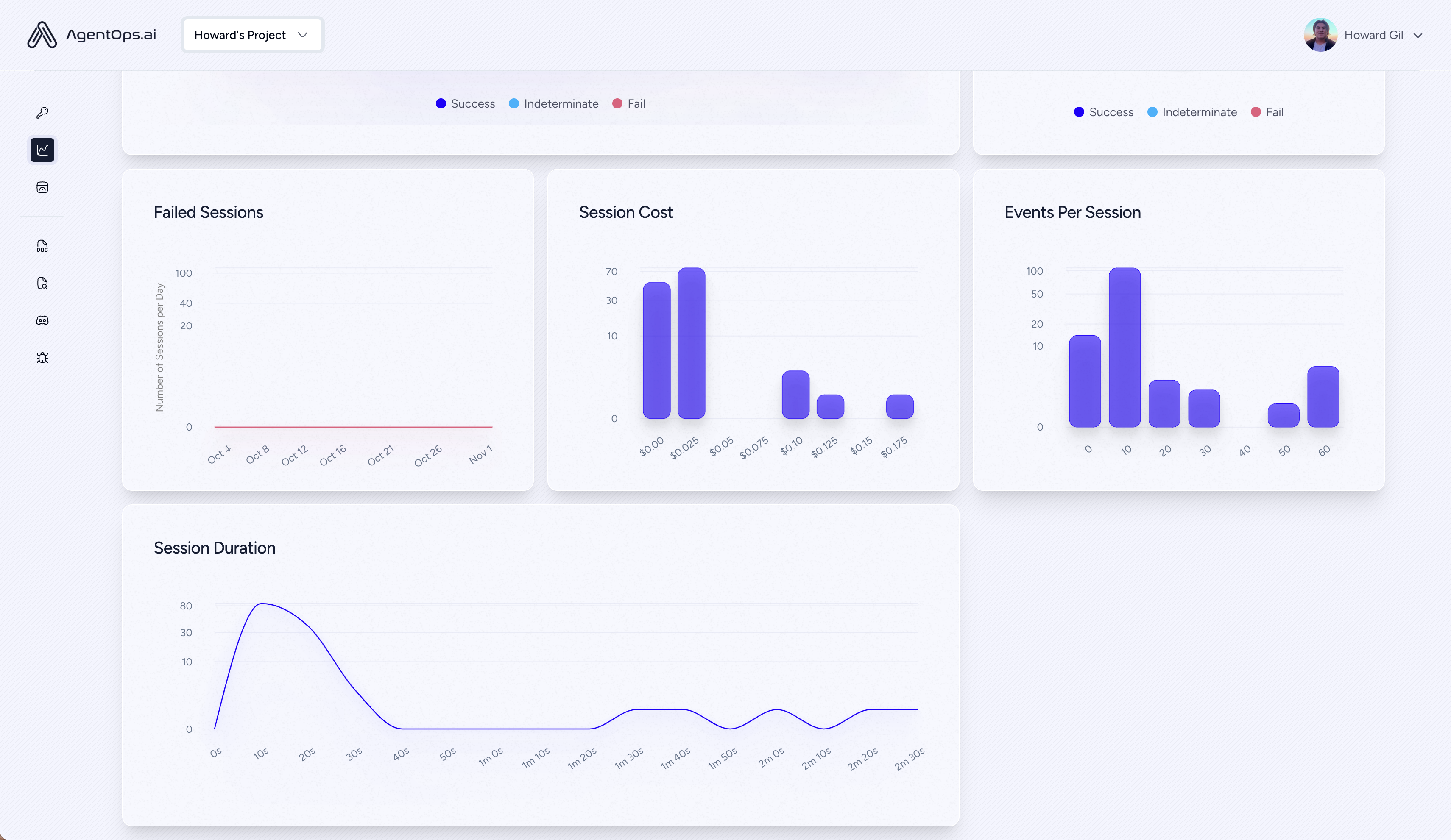

| ? LLM成本管理 | LLM基金会模型提供商的踪迹支出 |

| ?代理基准测试 | 测试您的代理商针对1,000多个Evals |

| ?合规性和安全性 | 检测常见的提示注射和数据渗透漏洞 |

| ?框架集成 | 与Crewai,Autogen和Langchain的本地集成 |

`pip install agentops`

Initialize the AgentOps client and automatically get analytics on all your LLM calls.

Get an API key

    import agentops

    # Beginning of your program (i.e. main.py, __init__.py)
    agentops.init("<INSERT YOUR API KEY HERE>")

    ...

    # End of program
    agentops.end_session('Success')

All your sessions can be viewed on the AgentOps dashboard.
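If the program raises partway through, the `end_session` call at the end never runs. The following is a minimal sketch, not part of the AgentOps SDK, of a context manager that always reports an end state. The name `tracked_session` is hypothetical, and the `'Fail'` end state is an assumption (this README only shows `'Success'`). The reporting function is passed in as an argument so the sketch runs without AgentOps installed.

```python
from contextlib import contextmanager
from typing import Callable, Iterator


@contextmanager
def tracked_session(end_session: Callable[[str], None]) -> Iterator[None]:
    """Report 'Success' on normal exit and 'Fail' if an exception escapes.

    `end_session` is any callable that accepts an end-state string,
    for example `agentops.end_session` (assumed here to accept 'Fail').
    """
    try:
        yield
    except Exception:
        end_session("Fail")
        raise
    else:
        end_session("Success")


if __name__ == "__main__":
    # Stand-in for agentops.end_session so the sketch runs on its own.
    reported = []
    with tracked_session(reported.append):
        pass  # your agent code goes here
    print(reported)
```

With AgentOps installed, the intended use would be `with tracked_session(agentops.end_session): ...` after calling `agentops.init(...)`.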

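Add powerful observability to your agents, tools, and functions with as little code as possible: one line at a time.

Refer to our documentation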

    # Automatically associate all Events with the agent that originated them
    from agentops import track_agent

    @track_agent(name='SomeCustomName')
    class MyAgent:
        ...

    # Automatically create ToolEvents for tools that agents will use
    from agentops import record_tool

    @record_tool('SampleToolName')
    def sample_tool(...):
        ...

    # Automatically create ActionEvents for other functions.
    from agentops import record_action

    @record_action('sample function being record')
    def sample_function(...):
        ...

    # Manually record any other Events
    from agentops import record, ActionEvent

    record(ActionEvent("received_user_input"))

Build Crew agents with observability in just 2 lines of code. Simply set an `AGENTOPS_API_KEY` in your environment, and your crews will get automatic monitoring on the AgentOps dashboard.

`pip install 'crewai[agentops]'`

With only two lines of code, add full observability and monitoring to AutoGen agents. Set an `AGENTOPS_API_KEY` in your environment and call `agentops.init()`.
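Both integrations above depend on `AGENTOPS_API_KEY` being present in the environment. Below is a small sketch of a fail-fast check that could run before `agentops.init()`. The helper name `require_api_key` is hypothetical and not part of AgentOps.

```python
import os
from typing import Mapping, Optional


def require_api_key(
    name: str = "AGENTOPS_API_KEY",
    env: Optional[Mapping[str, str]] = None,
) -> str:
    """Return the named environment variable, or raise a clear error."""
    env = os.environ if env is None else env
    value = env.get(name, "").strip()
    if not value:
        raise RuntimeError(f"{name} is not set; export it before starting the agent")
    return value


if __name__ == "__main__":
    try:
        require_api_key()
        print("AGENTOPS_API_KEY found")
    except RuntimeError as err:
        print(err)
```

Failing early with an explicit message tends to be easier to debug than a run that starts and then sends no data to the dashboard.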

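AgentOps works seamlessly with applications built using LangChain. To use the handler, install LangChain as an optional dependency:

`pip install agentops[langchain]`

To use the handler, import and set it up: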

    import os
    from langchain.chat_models import ChatOpenAI
    from langchain.agents import initialize_agent, AgentType
    from agentops.partners.langchain_callback_handler import LangchainCallbackHandler

    AGENTOPS_API_KEY = os.environ['AGENTOPS_API_KEY']
    OPENAI_API_KEY = os.environ['OPENAI_API_KEY']  # read the OpenAI key from the environment as well

    handler = LangchainCallbackHandler(api_key=AGENTOPS_API_KEY, tags=['Langchain Example'])

    llm = ChatOpenAI(openai_api_key=OPENAI_API_KEY,
                     callbacks=[handler],
                     model='gpt-3.5-turbo')

    # `tools` is your list of LangChain tools, defined elsewhere
    agent = initialize_agent(tools,
                             llm,
                             agent=AgentType.CHAT_ZERO_SHOT_REACT_DESCRIPTION,
                             verbose=True,
                             callbacks=[handler],  # You must pass in a callback handler to record your agent
                             handle_parsing_errors=True)

Check out the LangChain examples notebook for more details, including async handlers.
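The comment "You must pass in a callback handler to record your agent" reflects how callback-based instrumentation generally works: the framework calls hooks on every handler it was given, and a component that received no handler produces no records. The toy illustration below uses hypothetical classes (`RecordingHandler`, `ToyLLM`). It mirrors neither LangChain's nor AgentOps's actual classes.

```python
from typing import List, Optional


class RecordingHandler:
    """Hypothetical handler that stores every event it is notified about."""

    def __init__(self) -> None:
        self.events: List[str] = []

    def on_llm_start(self, prompt: str) -> None:
        self.events.append(f"start:{prompt}")

    def on_llm_end(self, output: str) -> None:
        self.events.append(f"end:{output}")


class ToyLLM:
    """Hypothetical model wrapper that notifies only the handlers it was given."""

    def __init__(self, callbacks: Optional[List[RecordingHandler]] = None) -> None:
        self.callbacks = callbacks or []

    def invoke(self, prompt: str) -> str:
        for cb in self.callbacks:
            cb.on_llm_start(prompt)
        output = prompt.upper()  # placeholder for a real model call
        for cb in self.callbacks:
            cb.on_llm_end(output)
        return output


if __name__ == "__main__":
    handler = RecordingHandler()
    ToyLLM(callbacks=[handler]).invoke("hi")  # recorded
    ToyLLM().invoke("unseen")                 # no handler, nothing recorded
    print(handler.events)
```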

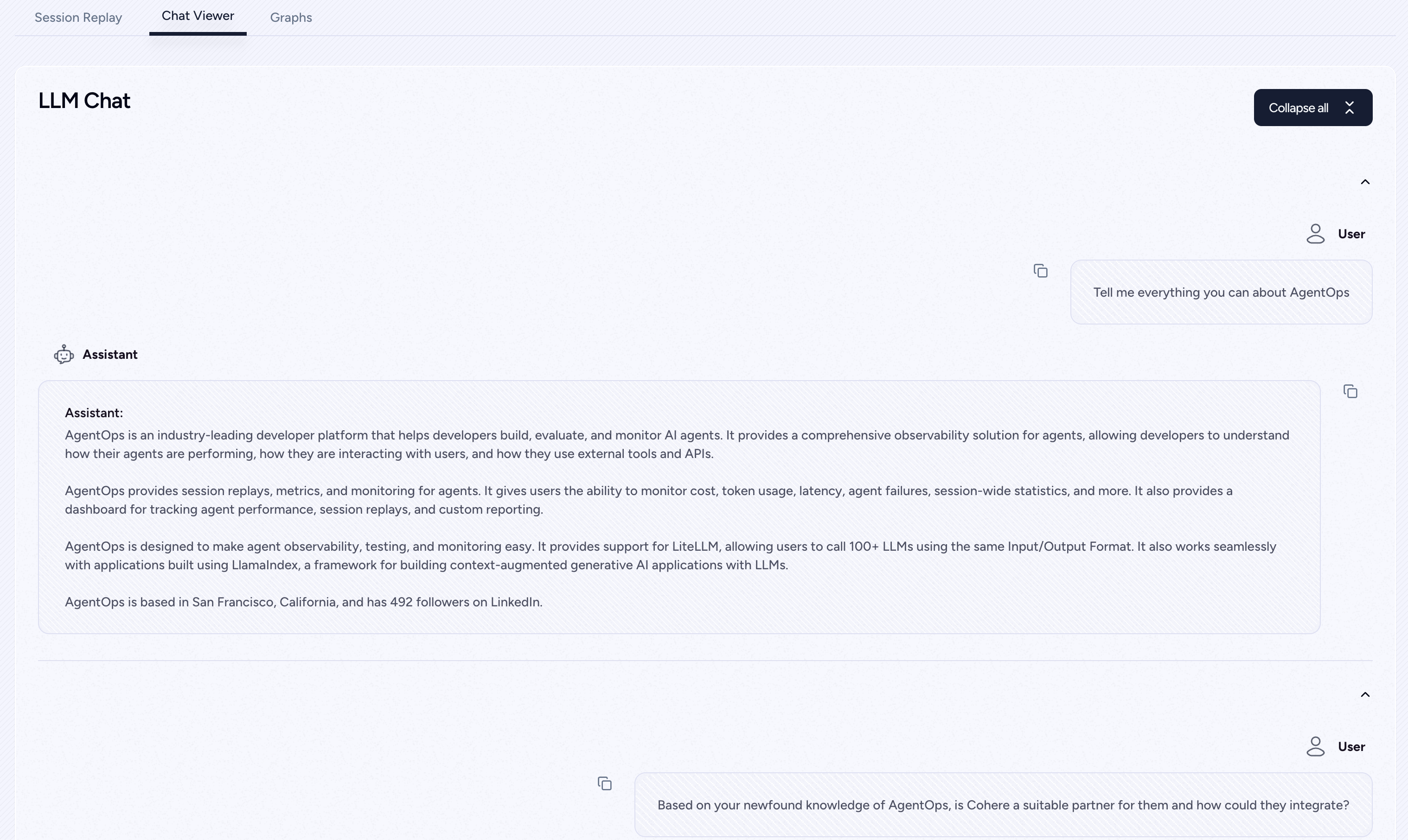

First-class support for Cohere (>=5.4.0). This is a living integration; should you need any added functionality, please message us on Discord!

`pip install cohere`

    import cohere
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init("<INSERT YOUR API KEY HERE>")
    co = cohere.Client()

    chat = co.chat(
        message="Is it pronounced ceaux-hear or co-hehray?"
    )

    print(chat)

    agentops.end_session('Success')

Streaming

    import cohere
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init("<INSERT YOUR API KEY HERE>")

    co = cohere.Client()

    stream = co.chat_stream(
        message="Write me a haiku about the synergies between Cohere and AgentOps"
    )

    for event in stream:
        if event.event_type == "text-generation":
            print(event.text, end='')

    agentops.end_session('Success')

Track agents built with the Anthropic Python SDK (>=0.32.0).

`pip install anthropic`

    import os

    import anthropic
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init("<INSERT YOUR API KEY HERE>")

    client = anthropic.Anthropic(
        # This is the default and can be omitted
        api_key=os.environ.get("ANTHROPIC_API_KEY"),
    )

    message = client.messages.create(
        max_tokens=1024,
        messages=[
            {
                "role": "user",
                "content": "Tell me a cool fact about AgentOps",
            }
        ],
        model="claude-3-opus-20240229",
    )
    print(message.content)

    agentops.end_session('Success')

Streaming

    import os

    import anthropic
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init("<INSERT YOUR API KEY HERE>")

    client = anthropic.Anthropic(
        # This is the default and can be omitted
        api_key=os.environ.get("ANTHROPIC_API_KEY"),
    )

    stream = client.messages.create(
        max_tokens=1024,
        model="claude-3-opus-20240229",
        messages=[
            {
                "role": "user",
                "content": "Tell me something cool about streaming agents",
            }
        ],
        stream=True,
    )

    # Accumulate text deltas and print the full response once the stream ends
    response = ""
    for event in stream:
        if event.type == "content_block_delta":
            response += event.delta.text
        elif event.type == "message_stop":
            print("\n")
            print(response)
            print("\n")

Async

    import asyncio
    import os

    from anthropic import AsyncAnthropic

    client = AsyncAnthropic(
        # This is the default and can be omitted
        api_key=os.environ.get("ANTHROPIC_API_KEY"),
    )


    async def main() -> None:
        message = await client.messages.create(
            max_tokens=1024,
            messages=[
                {
                    "role": "user",
                    "content": "Tell me something interesting about async agents",
                }
            ],
            model="claude-3-opus-20240229",
        )
        print(message.content)


    # Run the coroutine from a regular script; in a notebook, use `await main()` instead
    asyncio.run(main())

Track agents built with the Mistral Python SDK (`mistralai`).

`pip install mistralai`

Sync

    import os

    from mistralai import Mistral
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init("<INSERT YOUR API KEY HERE>")

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )

    message = client.chat.complete(
        messages=[
            {
                "role": "user",
                "content": "Tell me a cool fact about AgentOps",
            }
        ],
        model="open-mistral-nemo",
    )
    print(message.choices[0].message.content)

    agentops.end_session('Success')

Streaming

    import os

    from mistralai import Mistral
    import agentops

    # Beginning of program's code (i.e. main.py, __init__.py)
    agentops.init("<INSERT YOUR API KEY HERE>")

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )

    message = client.chat.stream(
        messages=[
            {
                "role": "user",
                "content": "Tell me something cool about streaming agents",
            }
        ],
        model="open-mistral-nemo",
    )

    # Accumulate text deltas and print the full response once the stream ends
    response = ""
    for event in message:
        if event.data.choices[0].finish_reason == "stop":
            print("\n")
            print(response)
            print("\n")
        else:
            response += event.data.choices[0].delta.content

    agentops.end_session('Success')

Async

    import asyncio
    import os

    from mistralai import Mistral

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )


    async def main() -> None:
        message = await client.chat.complete_async(
            messages=[
                {
                    "role": "user",
                    "content": "Tell me something interesting about async agents",
                }
            ],
            model="open-mistral-nemo",
        )
        print(message.choices[0].message.content)


    # Run the coroutine from a regular script; in a notebook, use `await main()` instead
    asyncio.run(main())

Async streaming

    import asyncio
    import os

    from mistralai import Mistral

    client = Mistral(
        # This is the default and can be omitted
        api_key=os.environ.get("MISTRAL_API_KEY"),
    )


    async def main() -> None:
        message = await client.chat.stream_async(
            messages=[
                {
                    "role": "user",
                    "content": "Tell me something interesting about async streaming agents",
                }
            ],
            model="open-mistral-nemo",
        )

        # Accumulate text deltas and print the full response once the stream ends
        response = ""
        async for event in message:
            if event.data.choices[0].finish_reason == "stop":
                print("\n")
                print(response)
                print("\n")
            else:
                response += event.data.choices[0].delta.content


    # Run the coroutine from a regular script; in a notebook, use `await main()` instead
    asyncio.run(main())

AgentOps provides support for LiteLLM (>=1.3.1), allowing you to call 100+ LLMs using the same input/output format.

`pip install litellm`

    # Do not use LiteLLM like this
    # from litellm import completion
    # ...
    # response = completion(model="claude-3", messages=messages)

    # Use LiteLLM like this
    import litellm
    ...
    response = litellm.completion(model="claude-3", messages=messages)
    # or
    response = await litellm.acompletion(model="claude-3", messages=messages)

AgentOps works seamlessly with applications built using LlamaIndex, a framework for building context-augmented generative AI applications with LLMs.

`pip install llama-index-instrumentation-agentops`

To use the handler, import and set it up:

    from llama_index.core import set_global_handler

    # NOTE: Feel free to set your AgentOps environment variables (e.g., 'AGENTOPS_API_KEY')
    # as outlined in the AgentOps documentation, or pass the equivalent keyword arguments
    # anticipated by AgentOps' AOClient as **eval_params in set_global_handler.

    set_global_handler("agentops")

Check out the LlamaIndex docs for more details.
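Returning to the LiteLLM note earlier (call `litellm.completion` rather than importing `completion` directly): the README does not give the reason. One plausible explanation, stated here as an assumption, is that instrumentation replaces the attribute on the module, while a name imported earlier with `from ... import` keeps pointing at the original function. The sketch below reproduces that effect with a throwaway module named `fakellm` (hypothetical). It does not use LiteLLM or AgentOps.

```python
import sys
import types

# Build a throwaway module that stands in for an LLM client library.
fakellm = types.ModuleType("fakellm")
fakellm.completion = lambda prompt: f"reply to {prompt}"
sys.modules["fakellm"] = fakellm

# Binding taken BEFORE instrumentation, like `from litellm import completion`.
from fakellm import completion as early_binding

calls = []  # what the instrumentation manages to observe


def instrument(module: types.ModuleType) -> None:
    """Wrap module.completion so that every call through the module is recorded."""
    original = module.completion

    def wrapped(prompt: str) -> str:
        calls.append(prompt)
        return original(prompt)

    module.completion = wrapped


instrument(fakellm)

fakellm.completion("tracked")  # attribute lookup finds the wrapper
early_binding("untracked")     # still the original function, so it is not recorded

if __name__ == "__main__":
    print(calls)
```

Only the call made through the module attribute reaches the wrapper, which is consistent with the advice to write `litellm.completion(...)`.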

Try it out!

(Coming soon!)

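| Platform | Dashboard | Evals |
|---|---|---|
| Python SDK | ✅ Multi-session and cross-session metrics | ✅ Custom eval metrics |
| Evaluation builder API | ✅ Custom event tag tracking | Agent scorecards |
| ✅ JavaScript/TypeScript SDK | ✅ Session replays | Evaluation playground + leaderboard |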

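| Performance testing | Environments | LLM testing | Reasoning and execution testing |
|---|---|---|---|
| ✅ Event latency analysis | Non-stationary environment testing | LLM non-deterministic function detection | Infinite loops and recursive thought detection |
| ✅ Agent workflow execution pricing | Multi-modal environments | Token limit overflow flags | Faulty reasoning detection |
| Success validators (external) | Execution containers | Context limit overflow flags | Generative code validators |
| Agent controllers/skill tests | ✅ Honeypot and prompt injection detection | API bill tracking | Error breakpoint analysis |
| Information context constraint testing | Anti-agent roadblocks (i.e. Captchas) | CI/CD integration checks | |
| Regression testing | Multi-agent framework visualization | | |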

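Without the right tools, AI agents are slow, expensive, and unreliable. Our mission is to bring your agent from prototype to production. Here's why AgentOps stands out:

AgentOps is designed to make agent observability, testing, and monitoring easy.

Check out our growth in the community: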

| Repository | Stars |
|---|---|
| geekan / MetaGPT | 42787 |
| run-llama / llama_index | 34446 |
| crewAIInc / crewAI | 18287 |
| camel-ai / camel | 5166 |
| superagent-ai / superagent | 5050 |
| iyaja / llama-fs | 4713 |
| BasedHardware / Omi | 2723 |
| MervinPraison / PraisonAI | 2007 |
| AgentOps-AI / Jaiqu | 272 |
| strnad / CrewAI-Studio | 134 |
| alejandro-ao / exa-crewai | 55 |
| tonykipkemboi / youtube_yapper_trapper | 47 |
| sethcoast / cover-letter-builder | 27 |
| bhancockio / chatgpt4o-analysis | 19 |
| breakstring / Agentic_Story_Book_Workflow | 14 |
| MULTI-ON / multion-python | 13 |

Generated using github-dependents-info, by Nicolas Vuillamy