AgentOps helps developers build, evaluate, and monitor AI agents, from prototype to production.

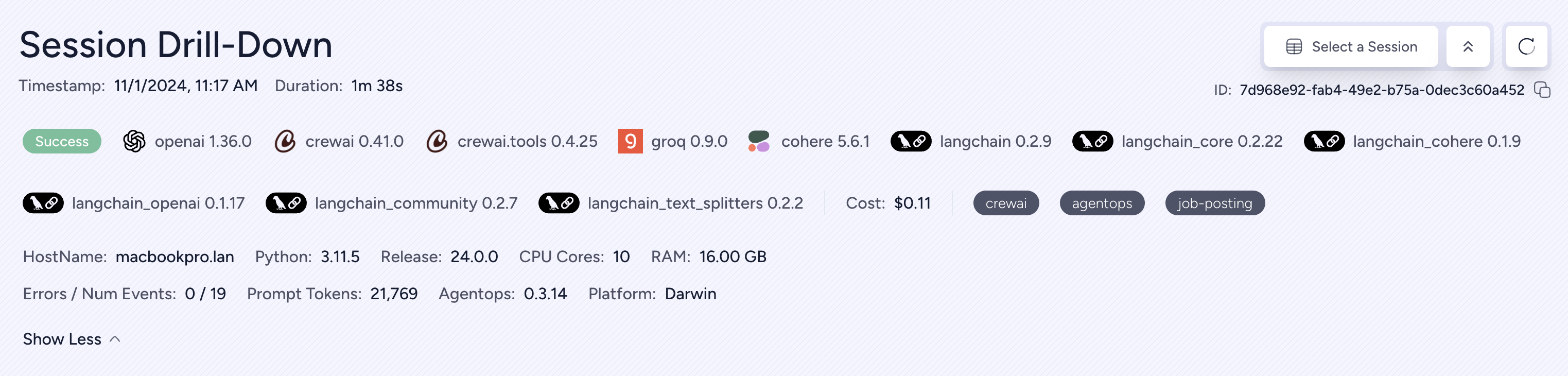

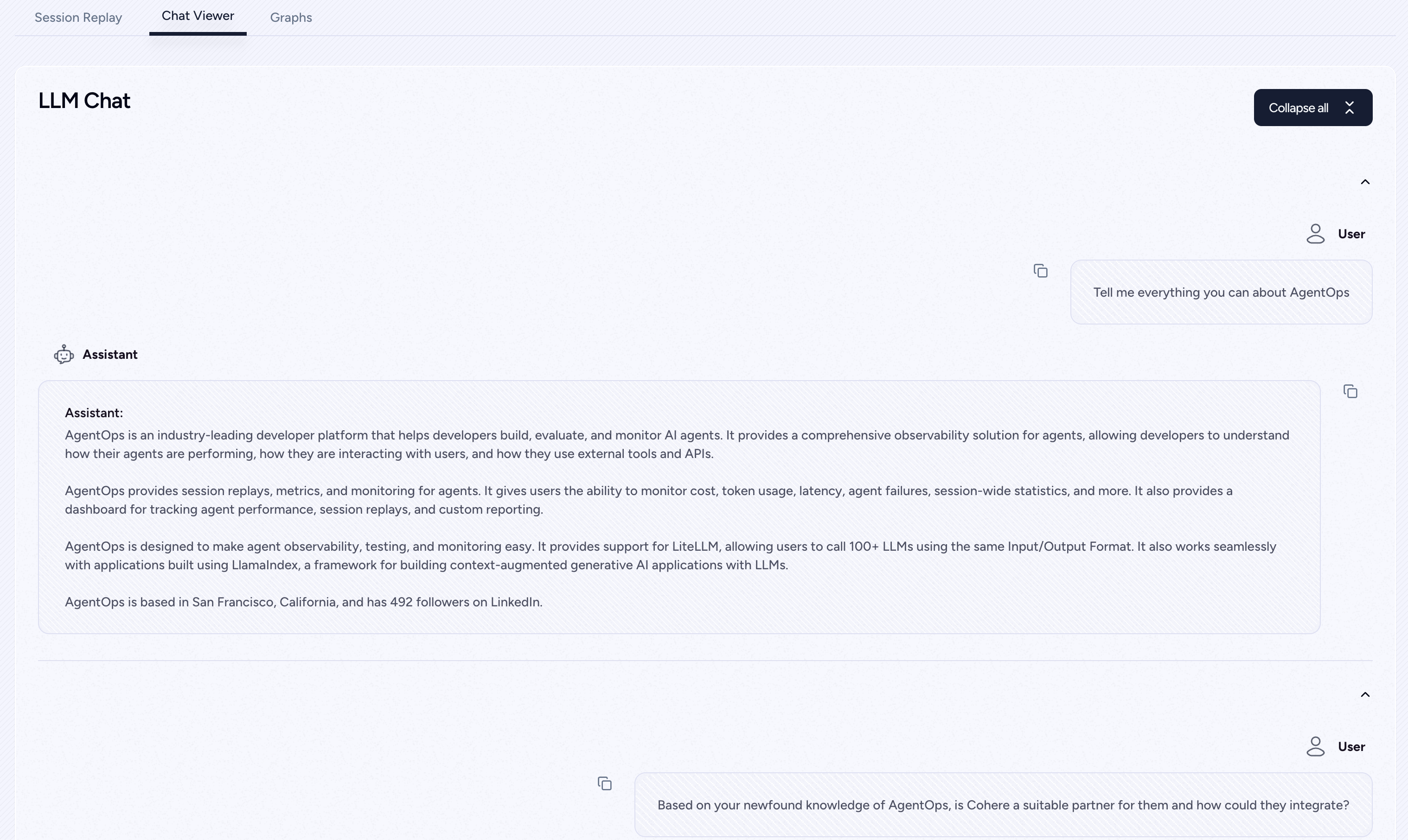

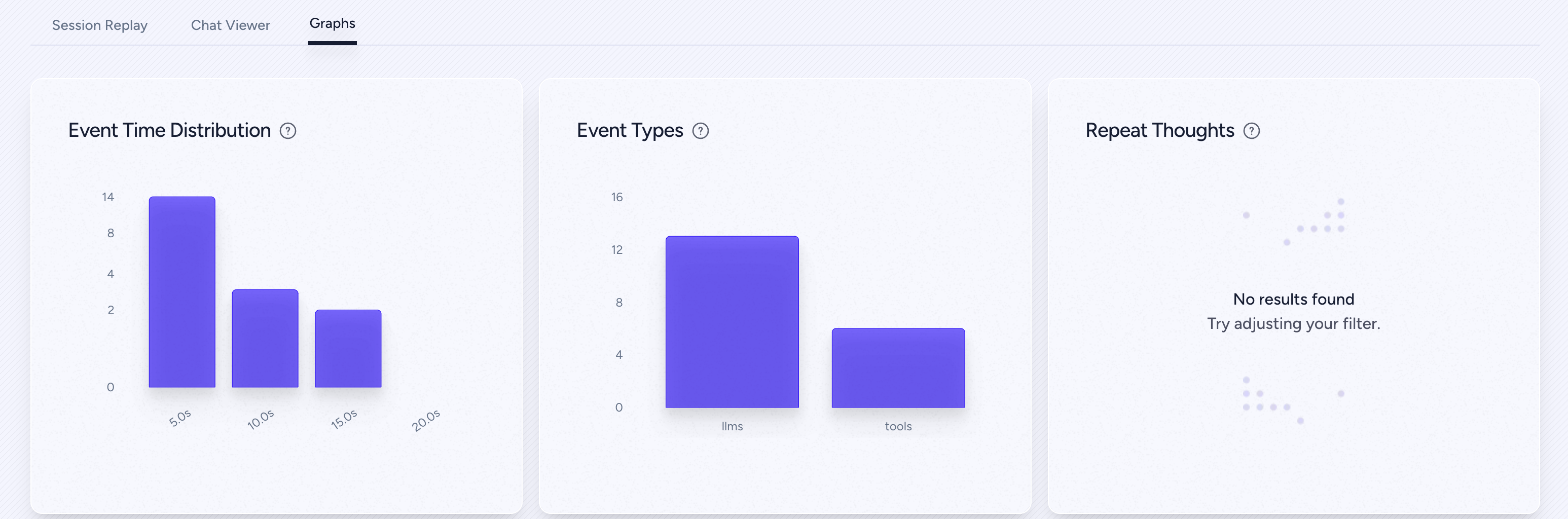

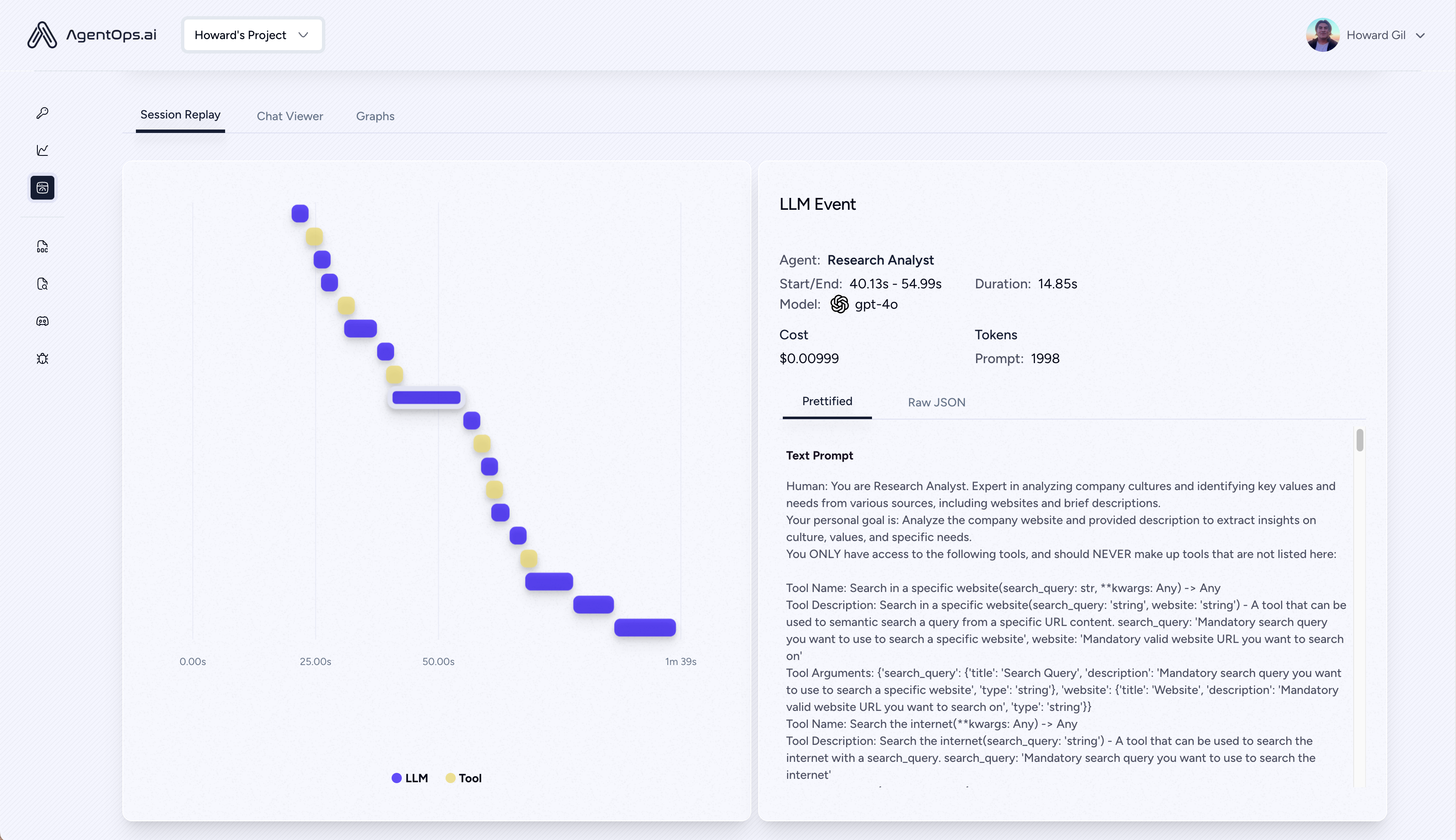

| Replay analytics and debugging | Step-by-step agent execution graphs |

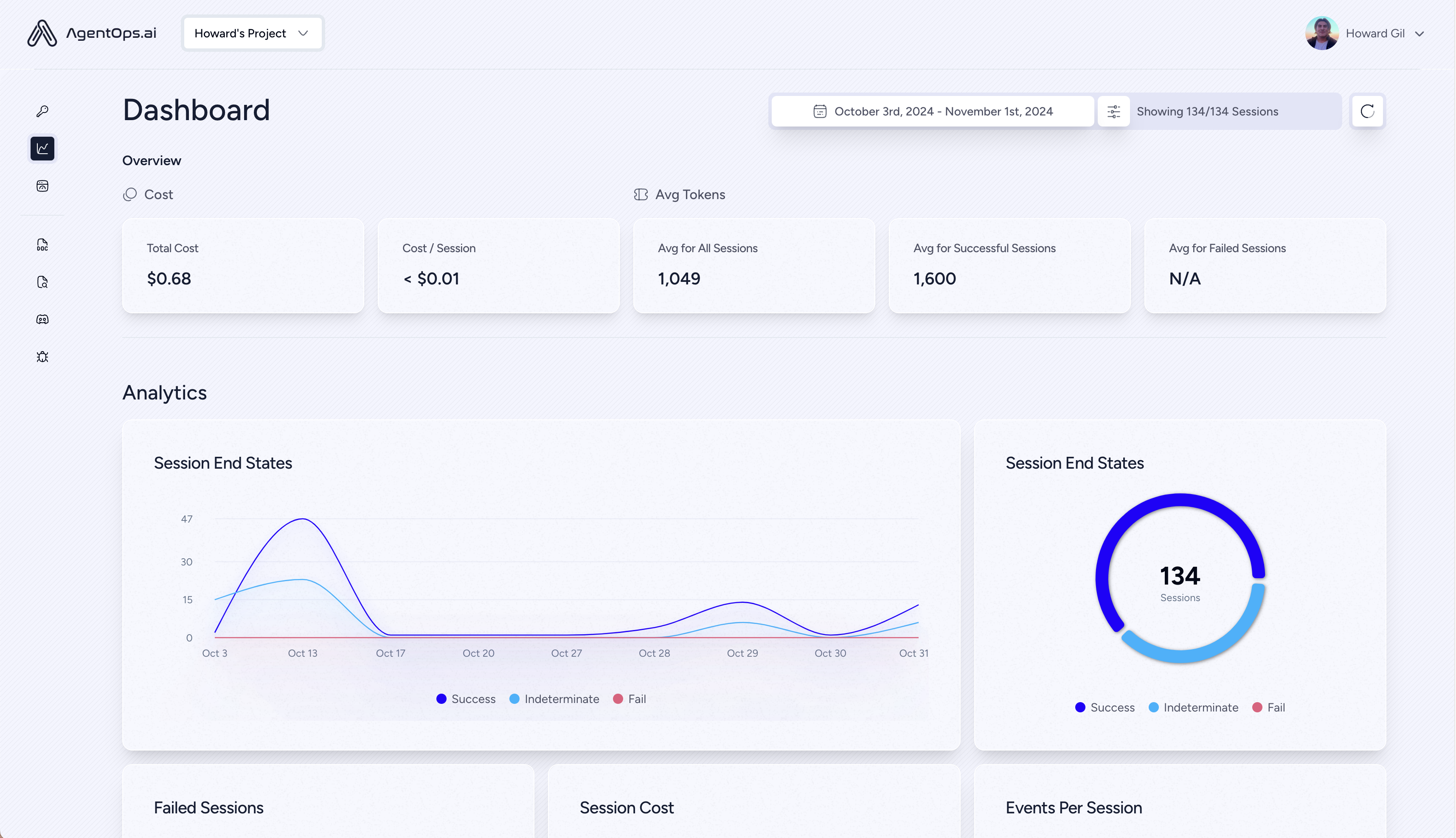

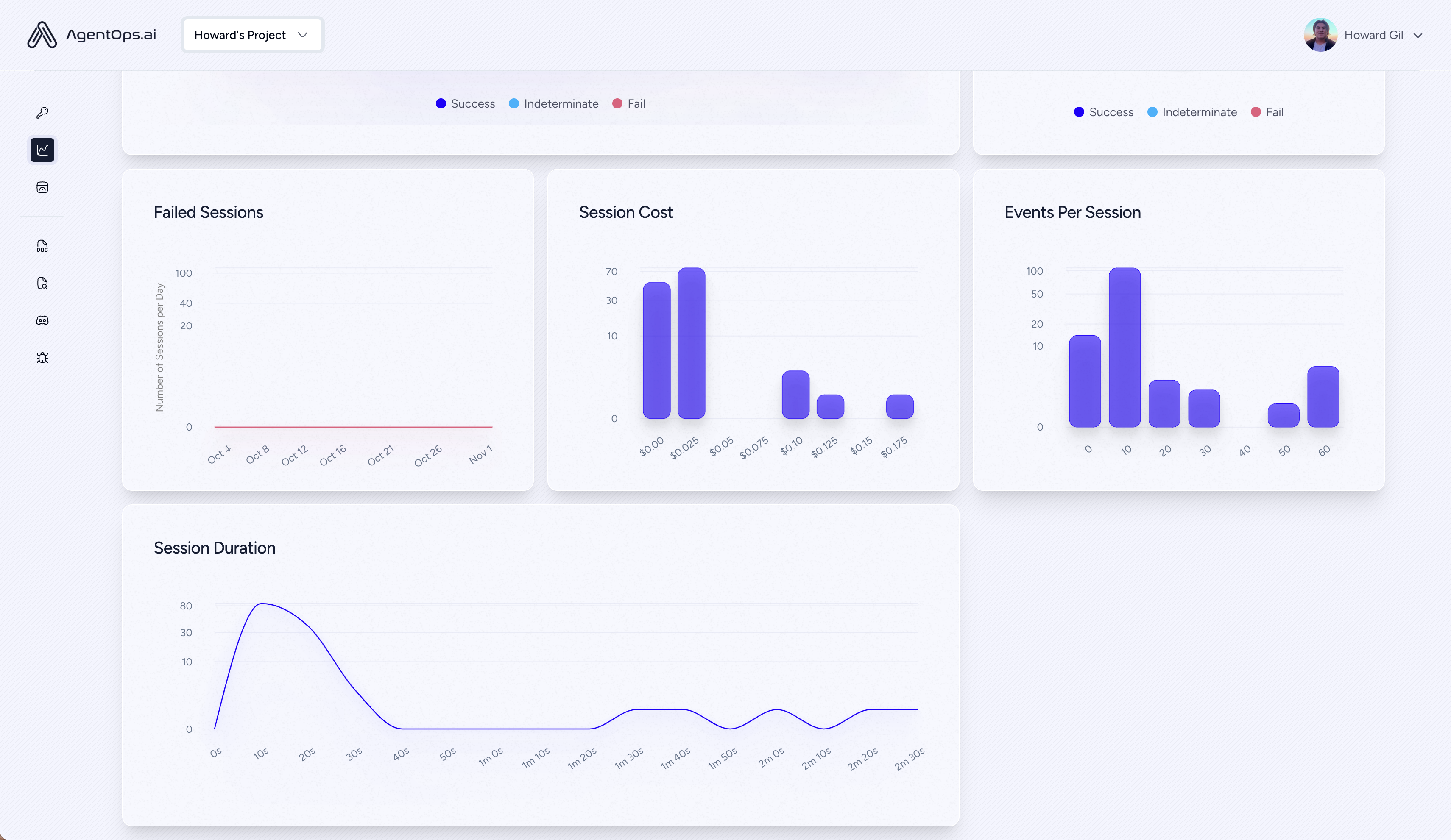

| LLM cost management | Track spend with LLM foundation model providers |

| Agent benchmarking | Test your agents against 1,000+ evals |

| Compliance and security | Detect common prompt injection and data exfiltration exploits |

| Framework integrations | Native integrations with CrewAI, AutoGen, & LangChain |

```bash
pip install agentops
```

Initialize the AgentOps client and automatically get analytics on all your LLM calls.

Get an API key

```python
import agentops

# Beginning of your program (e.g. main.py, __init__.py)
agentops.init("<INSERT YOUR API KEY HERE>")

...

# End of program
agentops.end_session('Success')
```

All your sessions can be viewed on the AgentOps dashboard.
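If your program can raise, it is worth making sure the session is always closed and that its end state reflects what actually happened. The context manager below is a minimal sketch of that pattern, not part of the AgentOps API: `tracked_session` is a hypothetical helper, the init and end functions are passed in so the sketch can be exercised without the SDK, and it assumes `'Fail'` is accepted as an end state alongside `'Success'`.

```python
from contextlib import contextmanager
from typing import Callable, Iterator


@contextmanager
def tracked_session(
    init: Callable[[], object],
    end_session: Callable[[str], object],
) -> Iterator[None]:
    """Hypothetical helper: start a session and always end it with a matching state."""
    init()
    try:
        yield
    except Exception:
        # Record the failure (assumed 'Fail' end state), then re-raise to the caller.
        end_session("Fail")
        raise
    else:
        end_session("Success")


# Possible usage with the real SDK (assumes AGENTOPS_API_KEY is set in the environment):
# import agentops
# with tracked_session(agentops.init, agentops.end_session):
#     run_my_agent()  # hypothetical entry point
```

Injecting the two functions keeps the helper independent of the SDK, so the same wrapper can be tested with simple fakes.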

Add powerful observability to your agents, tools, and functions with as little code as possible: one line at a time.
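As a rough illustration of how a recording decorator can work, here is a self-contained sketch that appends an event to an in-memory list every time the decorated function runs. `record_tool_sketch` and `EVENTS` are hypothetical names; the real `record_tool` on this page creates ToolEvents for AgentOps rather than storing them locally.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# Hypothetical in-memory event log standing in for the AgentOps backend.
EVENTS: List[Dict[str, Any]] = []


def record_tool_sketch(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Hypothetical decorator: log name, arguments, result, and duration of each call."""
    def decorator(func: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(func)  # keep the wrapped function's name and docstring
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.time()
            # Exceptions propagate without being logged in this sketch.
            result = func(*args, **kwargs)
            EVENTS.append({
                "name": name,
                "params": {"args": args, "kwargs": kwargs},
                "returns": result,
                "duration_s": time.time() - start,
            })
            return result
        return wrapper
    return decorator


@record_tool_sketch("Adder")
def add(a: int, b: int) -> int:
    return a + b


add(2, 3)
print(EVENTS[0]["name"], EVENTS[0]["returns"])  # prints: Adder 5
```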

See our documentation

```python
# Automatically associate all Events with the agent that originated them
from agentops import track_agent

@track_agent(name='SomeCustomName')
class MyAgent:
    ...

# Automatically create ToolEvents for tools that agents will use
from agentops import record_tool

@record_tool('SampleToolName')
def sample_tool(*args, **kwargs):
    ...

# Automatically create ActionEvents for other functions
from agentops import record_action

@record_action('sample function being recorded')
def sample_function(*args, **kwargs):
    ...

# Manually record any other Events
from agentops import record, ActionEvent

record(ActionEvent("received_user_input"))
```

Build Crew agents with observability in just 2 lines of code. Simply set an `AGENTOPS_API_KEY` in your environment, and your crews will get automatic monitoring on the AgentOps dashboard.

```bash
pip install 'crewai[agentops]'
```

With only two lines of code, add full observability and monitoring to AutoGen agents. Set an `AGENTOPS_API_KEY` in your environment and call `agentops.init()`.

AgentOps works seamlessly with applications built using LangChain. To use the handler, install LangChain as an optional dependency:

```bash
pip install agentops[langchain]
```

To use the handler, import and set it:

```python
import os

from langchain.chat_models import ChatOpenAI
from langchain.agents import initialize_agent, AgentType
from agentops.partners.langchain_callback_handler import LangchainCallbackHandler

AGENTOPS_API_KEY = os.environ['AGENTOPS_API_KEY']
OPENAI_API_KEY = os.environ['OPENAI_API_KEY']

handler = LangchainCallbackHandler(api_key=AGENTOPS_API_KEY, tags=['Langchain Example'])

llm = ChatOpenAI(openai_api_key=OPENAI_API_KEY,
                 callbacks=[handler],
                 model='gpt-3.5-turbo')

tools = []  # replace with the LangChain tools your agent should use

agent = initialize_agent(tools,
                         llm,
                         agent=AgentType.CHAT_ZERO_SHOT_REACT_DESCRIPTION,
                         verbose=True,
                         callbacks=[handler],  # You must pass in a callback handler to record your agent
                         handle_parsing_errors=True)
```

Check out the LangChain notebook for more details, including async handlers.

First-class support for Cohere (>=5.4.0). This is a living integration; if you need additional functionality, please message us on Discord!

```bash
pip install cohere
```

```python
import cohere
import agentops

# Beginning of program's code (e.g. main.py, __init__.py)
agentops.init("<INSERT YOUR API KEY HERE>")

co = cohere.Client()

chat = co.chat(
    message="Is it pronounced ceaux-hear or co-hehray?"
)

print(chat)

agentops.end_session('Success')
```

Streaming

```python
import cohere
import agentops

# Beginning of program's code (e.g. main.py, __init__.py)
agentops.init("<INSERT YOUR API KEY HERE>")

co = cohere.Client()

stream = co.chat_stream(
    message="Write me a haiku about the synergies between Cohere and AgentOps"
)

for event in stream:
    if event.event_type == "text-generation":
        print(event.text, end='')

agentops.end_session('Success')
```

Track agents built with the Anthropic Python SDK (>=0.32.0).

```bash
pip install anthropic
```

```python
import os

import anthropic
import agentops

# Beginning of program's code (e.g. main.py, __init__.py)
agentops.init("<INSERT YOUR API KEY HERE>")

client = anthropic.Anthropic(
    # This is the default and can be omitted
    api_key=os.environ.get("ANTHROPIC_API_KEY"),
)

message = client.messages.create(
    max_tokens=1024,
    messages=[
        {
            "role": "user",
            "content": "Tell me a cool fact about AgentOps",
        }
    ],
    model="claude-3-opus-20240229",
)

print(message.content)

agentops.end_session('Success')
```

Streaming

```python
import os

import anthropic
import agentops

# Beginning of program's code (e.g. main.py, __init__.py)
agentops.init("<INSERT YOUR API KEY HERE>")

client = anthropic.Anthropic(
    # This is the default and can be omitted
    api_key=os.environ.get("ANTHROPIC_API_KEY"),
)

stream = client.messages.create(
    max_tokens=1024,
    model="claude-3-opus-20240229",
    messages=[
        {
            "role": "user",
            "content": "Tell me something cool about streaming agents",
        }
    ],
    stream=True,
)

response = ""

for event in stream:
    if event.type == "content_block_delta":
        response += event.delta.text
    elif event.type == "message_stop":
        print("\n")
        print(response)
        print("\n")

agentops.end_session('Success')
```

Async

```python
import asyncio
import os

from anthropic import AsyncAnthropic

client = AsyncAnthropic(
    # This is the default and can be omitted
    api_key=os.environ.get("ANTHROPIC_API_KEY"),
)


async def main() -> None:
    message = await client.messages.create(
        max_tokens=1024,
        messages=[
            {
                "role": "user",
                "content": "Tell me something interesting about async agents",
            }
        ],
        model="claude-3-opus-20240229",
    )
    print(message.content)


asyncio.run(main())
```

Track agents built with the Mistral Python SDK.

```bash
pip install mistralai
```

Sync

```python
import os

from mistralai import Mistral
import agentops

# Beginning of program's code (e.g. main.py, __init__.py)
agentops.init("<INSERT YOUR API KEY HERE>")

client = Mistral(
    # This is the default and can be omitted
    api_key=os.environ.get("MISTRAL_API_KEY"),
)

message = client.chat.complete(
    messages=[
        {
            "role": "user",
            "content": "Tell me a cool fact about AgentOps",
        }
    ],
    model="open-mistral-nemo",
)

print(message.choices[0].message.content)

agentops.end_session('Success')
```

Streaming
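Streamed completions arrive as a sequence of chunks that the caller has to stitch back together. The sketch below shows that accumulation logic without calling the SDK. The `Delta`, `Choice`, `Chunk`, and `Event` dataclasses are hypothetical stand-ins shaped after Mistral's stream events (`event.data.choices[0].delta.content` and `finish_reason`), so check the shape against the `mistralai` version you use.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass
class Delta:
    content: Optional[str]


@dataclass
class Choice:
    delta: Delta
    finish_reason: Optional[str] = None


@dataclass
class Chunk:
    choices: List[Choice]


@dataclass
class Event:
    data: Chunk


def collect_stream(events: Iterable[Event]) -> str:
    """Concatenate streamed text until a chunk reports finish_reason == "stop"."""
    response = ""
    for event in events:
        choice = event.data.choices[0]
        # Append before checking for "stop", in case the last chunk also carries text.
        if choice.delta.content:
            response += choice.delta.content
        if choice.finish_reason == "stop":
            break
    return response


fake_stream = [
    Event(Chunk([Choice(Delta("Hello, "))])),
    Event(Chunk([Choice(Delta("streaming "))])),
    Event(Chunk([Choice(Delta("world"), finish_reason="stop")])),
]
print(collect_stream(fake_stream))  # prints: Hello, streaming world
```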

```python
import os

from mistralai import Mistral
import agentops

# Beginning of program's code (e.g. main.py, __init__.py)
agentops.init("<INSERT YOUR API KEY HERE>")

client = Mistral(
    # This is the default and can be omitted
    api_key=os.environ.get("MISTRAL_API_KEY"),
)

stream = client.chat.stream(
    messages=[
        {
            "role": "user",
            "content": "Tell me something cool about streaming agents",
        }
    ],
    model="open-mistral-nemo",
)

response = ""

for event in stream:
    choice = event.data.choices[0]
    # Each chunk carries a partial delta; append it before checking for the end of the stream
    if choice.delta.content:
        response += choice.delta.content
    if choice.finish_reason == "stop":
        print("\n")
        print(response)
        print("\n")

agentops.end_session('Success')
```

Async

```python
import asyncio
import os

from mistralai import Mistral

client = Mistral(
    # This is the default and can be omitted
    api_key=os.environ.get("MISTRAL_API_KEY"),
)


async def main() -> None:
    message = await client.chat.complete_async(
        messages=[
            {
                "role": "user",
                "content": "Tell me something interesting about async agents",
            }
        ],
        model="open-mistral-nemo",
    )
    print(message.choices[0].message.content)


asyncio.run(main())
```

Streaming async

```python
import asyncio
import os

from mistralai import Mistral

client = Mistral(
    # This is the default and can be omitted
    api_key=os.environ.get("MISTRAL_API_KEY"),
)


async def main() -> None:
    stream = await client.chat.stream_async(
        messages=[
            {
                "role": "user",
                "content": "Tell me something interesting about async streaming agents",
            }
        ],
        model="open-mistral-nemo",
    )

    response = ""
    async for event in stream:
        choice = event.data.choices[0]
        # Append each partial delta, then print once the stream reports it is done
        if choice.delta.content:
            response += choice.delta.content
        if choice.finish_reason == "stop":
            print("\n")
            print(response)
            print("\n")


asyncio.run(main())
```

AgentOps provides support for LiteLLM (>=1.3.1), allowing you to call 100+ LLMs using the same input/output format.

```bash
pip install litellm
```

```python
# Do not use LiteLLM like this
# from litellm import completion
# ...
# response = completion(model="claude-3", messages=messages)

# Use LiteLLM like this
import litellm

messages = [{"role": "user", "content": "Hello!"}]  # example input

response = litellm.completion(model="claude-3", messages=messages)

# or, inside an async function:
# response = await litellm.acompletion(model="claude-3", messages=messages)
```

AgentOps works seamlessly with applications built using LlamaIndex, a framework for building context-augmented generative AI applications with LLMs.

```bash
pip install llama-index-instrumentation-agentops
```

To use the handler, import and set it:

```python
from llama_index.core import set_global_handler

# NOTE: Feel free to set your AgentOps environment variables (e.g., 'AGENTOPS_API_KEY')
# as outlined in the AgentOps documentation, or pass the equivalent keyword arguments
# anticipated by AgentOps' AOClient as **eval_params in set_global_handler.
set_global_handler("agentops")
```

Check out the LlamaIndex docs for more details.

Try it out!

(coming soon!)

| Platform | Dashboard | Evals |
|---|---|---|
| ✅ Python SDK | ✅ Multi-session and cross-session metrics | ✅ Custom eval metrics |
| Evaluation builder API | ✅ Custom event tag tracking | Agent scorecards |
| ✅ JavaScript/TypeScript SDK | ✅ Session replays | Evaluation playground + leaderboard |

| Performance testing | Environments | LLM testing | Reasoning and execution testing |
|---|---|---|---|
| ✅ Event latency analysis | Non-stationary environment testing | LLM non-deterministic function detection | Infinite loop and recursive thought detection |
| ✅ Agent workflow execution pricing | Multi-modal environments | Token limit overflow flags | Faulty reasoning detection |
| Success validators (external) | Execution containers | Context limit overflow flags | Generative code validators |
| Agent controllers/skill tests | ✅ Honeypot and prompt injection detection (PromptArmor) | API bill tracking | Error breakpoint analysis |
| Information context constraint testing | Anti-agent roadblocks (e.g., CAPTCHAs) | CI/CD integration checks | |
| Regression testing | Multi-agent framework visualization | | |

Without the right tools, AI agents are slow, expensive, and unreliable. Our mission is to bring your agents from prototype to production. Here's why AgentOps stands out:

AgentOps is designed to make agent observability, testing, and monitoring easy.

Check out our growth in the community:

| Repository | Stars |
|---|---|
| geekan / MetaGPT | 42787 |
| run-llama / llama_index | 34446 |
| crewAIInc / crewAI | 18287 |
| camel-ai / camel | 5166 |
| superagent-ai / superagent | 5050 |
| iyaja / llama-fs | 4713 |
| BasedHardware / omi | 2723 |
| MervinPraison / PraisonAI | 2007 |
| AgentOps-AI / Jaiqu | 272 |
| strnad / CrewAI-Studio | 134 |
| alejandro-ao / exa-crewai | 55 |
| tonykipkemboi / youtube_yapper_trapper | 47 |
| sethcoast / cover-letter-builder | 27 |
| bhancockio / chatgpt4o-analysis | 19 |
| breakstring / Agentic_Story_Book_Workflow | 14 |
| MULTI-ON / multion-python | 13 |

Generated using github-dependents-info, by Nicolas Vuillamy