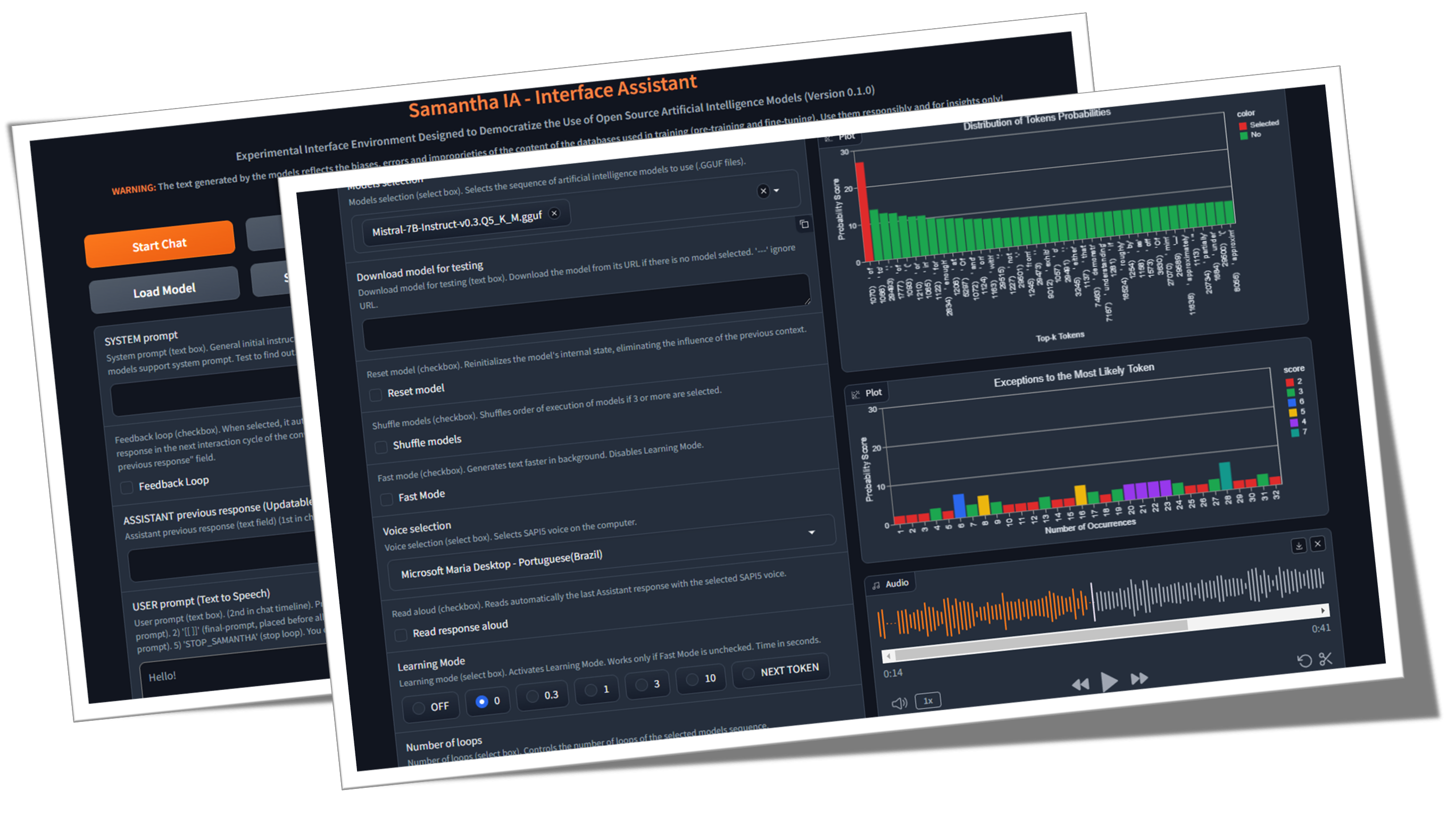

Samanthaは、オープンサイエンスの原則(オープン方法論、オープンソース、オープンデータ、オープンアクセス、オープンピアレビュー、オープン教育リソース)および一般的なWindowsコンピューターでの使用ライセンス(GPUなし)で開発されたオープンソーステキストの人工知能モデルの単純なインターフェイスアシスタントです。このプログラムは、GGUFモデル(GPTが生成されたユニファイドフォーマットのGGUFの略)をダウンロードする場合、またはモデルによって作成されたコードによって作成されたコードの実行で必要な場合(データ分析用のデータセットをダウンロードするために)を除き、インターネット接続を必要とせずに、無料で無制限にLLMをローカルに実行します。その目的は、AIの使用に関する知識を民主化し、適切な手法を使用して、小さなモデルでさえ、より大きなモデルと同様の応答を生成できることを実証することです。彼女の使命は、AIモデルを開いている(本当に)境界を探るのを助けることです。

オープンソースAIとは何ですか(opensource.org)

LLMサイズは重要ですか? (ゲイリーは説明します)

人工知能紙(arxiv.org)

Samanthaは、管理機関に対する市民の信頼の喪失を増やすという心配な現在のシナリオを考慮して、行政の社会的および制度的支配の行使を支援するために開発されています。その機能により、オープンソースの人工知能モデル、特にPythonプログラマーやデータサイエンティストの探索に関心のある人が使用できます。このプロジェクトは、MPC-ESチームがLLMモデルによってトークンを生成するプロセスを理解できるようにするシステムを開発する必要性に由来しています。

♾️このシステムにより、プロンプト(プロンプトチェーン)とモデル(モデルチェーン)のリストの順次ロード、メモリを保存するための1つのモデル、およびハイパーパラメーターの調整が可能になり、以前のモデルによって生成された応答がフィードバックされ、その後のモデルによってフィードバックされて分析され、次の応答(フィードバックループ機能)を生成するために分析します。モデルは、直前のモデルによって提供される回答と対話できます。そのため、新しい応答はそれぞれ前のモデルに置き換えられます。また、1つのモデルのみを使用して、無制限の数のテキスト生成サイクルで以前の応答と対話することもできます。想像力を使用して、モデル、プロンプト、機能を組み合わせてください!

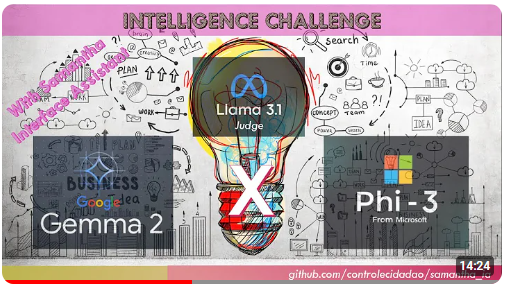

このビデオは、サマンサのコピーアンドペーストLLM機能を使用してモデルとプロンプトをチェーンすることにより、人間の介入なしのモデル間の相互作用の例を示しています。 Microsoft Phi 3.5およびGoogle Gemma 2モデル(Bartowskiによる)の量子化バージョンは、メタラマ3.1モデル(NousResearch)によって作成された人間性に関する質問に答えることに挑戦しています。応答は、METAモデルによっても評価されます。

インテリジェンスチャレンジ:Gemma 2 vs Phi 3.5 with llama 3.1 as Judge

?サマンサの応答フィードバックループ機能を使用せずにいくつかのチェーン例:

(Model_1)応答(PROMPT_1)X応答数:学習モード機能の助けを借りてモデルの決定論的および確率的動作を分析し、確率設定で複数の多様な応答を生成するために使用されます(ビデオ)。

(Model_1)応答(PROMPT_1、PROMPT_2、PROMPT_N):同じモデル(プロンプトチェーン)(ビデオ)で順次命令を実行するために使用されます。

(Model_1、Model_2、Model_n)Respons(Prompt_1):同じ単一プロンプト(モデルチェーン)のモデルの応答を比較するために使用されます。同じモデルの量子化されたバージョンと同様に、異なるモデルを比較するのに役立ちます。

(Model_1、Model_2、Model_n)Respons(Prompt_1、Prompt_2、Prompt_n):プロンプトのリストのモデルの応答を比較するために使用され、主観的なモデル(モデルとプロンプトチェーン)を使用して一連の命令を実行するために使用されます。各モデルはすべてのプロンプトに応答します。次に、モデルごとの単一の応答を使用する場合、各モデルは特定のプロンプトのみに応答します。

?サマンサの応答フィードバックループ機能を使用したいくつかのチェーン例:

(Model_1)応答(PROMPT_1)X応答数:同じモデルを使用した固定ユーザー命令を介してモデルの以前の応答を改善または補完するために使用し、単一のモデル(ビデオ)を使用して2 AI間の無限の会話をシミュレートするために使用されます。

(Model_1)応答(PROMPT_1、PROMPT_2、PROMPT_N):同じモデル(プロンプトチェーン)を使用して、複数のユーザー命令を使用してモデルの以前の応答を改善するために使用されます。各プロンプトは、以前の応答を改善または完了するために使用され、インクリメンタルコーディング(ビデオ)で探索データ分析(EDA)を実行するなど、以前の応答に依存する一連のプロンプトを実行するために使用されます。

(Model_1、Model_2、Model_n)Respons(Prompt_1):不可欠なモデル(モデルチェーン)を使用した以前のモデルの応答を改善し、異なるモデル間のダイアログを生成するために使用されます。

(Model_1、Model_2、Model_n)Respons(Prompt_1、Prompt_2、Prompt_n):モデル機能ごとの不可欠なモデル(モデルとプロンプトチェーン)と単一の応答を使用して一連の命令を実行するために使用されます。

これらの各モデルとプロンプトシーケンスは、ループ機能の数を介して複数回実行できます。

サマンサのチェーンシーケンステンプレート:

([モデルリスト] - >応答 - >([ユーザープロンプトリスト] X応答数)Xループ数

しかし、GPTとは何ですか?トランスフォーマーへの視覚的イントロ(3Blue1brown)

トランスの注意、視覚的に説明されている(3blue1brown)

変圧器の説明(ポロクラブ)

?プロンプトとモデルのシーケンスにより、ユーザーの入力命令を分割することにより、長い応答を生成できます。すべての部分的な応答は、モデルトレーニングプロセスで定義されているモデルの応答長に適合します。

? AIモデル間の自動自己相互作用のためのオープンソースツールとして、Samantha Interface Assistantは、自己改善フィードバックループを使用して逆プロンプトエンジニアリングを探索するように設計されました。この手法は、小さな大規模な言語モデル(LLM)がモデルに転送することにより、より正確な応答を生成するのに役立ちます。ユーザーの最初の不正確な命令に基づいて最終的なプロンプトと対応する応答を作成し、プロンプト構築プロセスに中間層を追加します。サマンサには、独自のモデルのように、隠されたシステムプロンプトがありません。すべての指示はユーザーによって制御されます。人類システムのプロンプトを参照してください。

?トレーニングテキストから抽出された一般化パターンから生じる緊急行動のおかげで、適切なプロンプトで適切なハイパーパラメーター構成により、小さなモデルでも一緒に作業することで大きな応答が生成されます!

人間の種の知性は、単一の知的存在に基づいているのではなく、集合的な知性に基づいています。個別に、私たちは実際にはそれほどインテリジェントでも能力もありません。私たちの社会と経済システムは、さまざまな専門化と専門知識を持つ多様な個人で構成される膨大な範囲の機関を持つことに基づいています。この広大な集団知性は、私たちが個人として誰であるかを形作り、私たち一人一人が人生の私たち自身の道をたどり、ユニークな個人になり、その結果、絶えず拡大する集団知能の一部として種として貢献します。人工知能の開発は、同様の集合的な道をたどると信じています。 AIの未来は、訓練、走行、維持に膨大なエネルギーを必要とする単一の巨大な、すべてを知っているAIシステムで構成されますが、むしろ、独自のニッチと専門性を備えた膨大な小さなAIシステムの膨大なコレクションで構成され、特定のニッチを埋めるために開発された新しいAIシステムと互いに相互作用します。進化する新しい基礎モデル:モデル開発の自動化の力を解き放つ-SakanaAI

?小さなステップ:サマンサは、人工知能が特権ではなく、AIを活用して障壁なしで生産性、創造性、意思決定を向上させ、AIを民主化する旅を歩き、日常生活の中で力を向けることができる世界のすべての人にとってのツールである未来への動きです。

? AIの道具的性質:人工知能の技術的独占を支配の可能性と社会的不平等の拡大として認識することは、歴史のこの変曲点の課題を表しています。テキスト生成プロセス中の小さなモデルの欠陥に注目すると、より大きな独自モデルの主張された完全性と比較することにより、この理解に役立ちます。物事を適切な場所に再配置し、知性(パレイドリアの心理的現象によって引き起こされる擬人化)など、人間の特徴を起因するというロマンチックな還元主義者の見解に疑問を投げかける必要があります。このため、この新しい「Word/Token Calculator」がどのように機能するかについての教訓的なアプローチを通じて、人工知能を測定することが不可欠です。確かに、市場によって人工的に作成された最初の魅力のドーパミンは、数百トークンの生成に耐えられません(トークンは、LLMがテキストを理解して生成するために使用するテキストの基本的なビルディングブロックに与えられた名前です。トークンは単語全体または単語の一部です)。

text生成の考慮事項:ユーザーは、AIによって生成された応答が、テキストデータの広大なコーパス上の大規模な言語モデルのトレーニングから派生していることに注意する必要があります。 AIがその出力を生成するために使用する正確なソースまたはプロセスを正確に引用または識別することはできません。 AIによって作成されたコンテンツは、特定のソースからの直接的な引用や編集ではありません。代わりに、AIのニューラルネットワークが幅広いデータコーパスのトレーニングプロセス中に学習およびエンコードしたパターン、統計的関係、および知識を反映しています。応答は、特定のソース素材から逐語的に取得されるのではなく、この学習した知識表現に基づいて生成されます。 AIのトレーニングデータには権威ある情報源が含まれている可能性がありますが、その出力は、学習された関連性と概念の独自の合成された表現です。

目的:サマンサの主な目的は、他の人に、システムの利用について同様の - そしてはるかに優れたものを確実に作成し、ユーザーを教育するように促すことです。私たちの目標は、オープンソースAIの分野をさらに革新し、貢献するために知識とツールを採用できる開発者と愛好家のコミュニティを育成することです。そうすることにより、コラボレーションと共有の文化を育成することを目的としており、技術的な背景や財源に関係なく、AIの利点がすべての人がアクセスできるようにします。より多くの人々がAIアプリケーションを構築して理解できるようにすることにより、私たちは、情報に基づいた多様な視点で、集合的に進歩を促進し、社会的課題に対処できると考えられています。 AIが人類にとって前向きで包括的な力である未来を形作るために協力しましょう。

ユネスコの人工知能の推奨事項の倫理

仕事、革新、生産性、スキルにおけるAIに関するOECDプログラム

イノベーションの人間コスト:このシステムは、ユーザーに力を与え、AIへのアクセスを民主化することを目的としていますが、この技術の倫理的意味を認めることが重要です。強力なAIシステムの開発は、しばしば人間の労働の搾取、特にデータの注釈とトレーニングプロセスに依存しています。これにより、既存の不平等を永続させ、デジタル格差の新しい形態を作成できます。 AIのユーザーとして、私たちはこれらの問題を認識し、業界内のより公正な慣行を擁護する責任があります。倫理的AIの開発をサポートし、データ調達の透明性を促進することにより、私たちはすべての人にとってより包括的で公平な未来に貢献することができます。

COMO FUNCIONA O TRABALHO HUMNO PORTRáSDaInteligência人工

AIテクノロジーの世界の「現代の奴隷」

他のソース

巨人の肩について: Georgi GerganovとLlama.cppに取り組んでいるチーム全体に、このすべてを可能にしてくれたことに感謝し、Gerganov C ++ Library(Llama-CPP-Python)の驚くべきPython入札でAndrei Bletenに感謝します。

open Open Source Foundation: llama.cpp / llama-cpp-pythonおよびGradioに基づいて、MITライセンスの下で、Samanthaは専用のグラフィックプロセシングユニット(GPU)がなくても標準コンピューターで実行されます。

samantsサマンサはインターネットとは独立して動作し、モデルファイルの最初のダウンロードにのみ接続を必要とします。これにより、データ処理のニーズに合わせてプライバシーとセキュリティが保証されます。機密データは、機密性契約を通じてインターネットを介して企業と共有されていません。

ollimited無制限で無料使用:サマンサのオープンソースの性質は、コストや制限なしに無制限の使用を可能にし、いつでも誰でもアクセスできるようにします。

redive幅広いモデルの選択:数千の基礎と微調整されたオープンソースモデルへのアクセスにより、ユーザーはさまざまなタスクとアプリケーションに合わせて、さまざまなAI機能を実験し、ニーズに最適なモデルのシーケンスをチェーンできます。

llmsのコピーと貼り付け: ggufモデルのシーケンスを試すには、hugging hugging faceリポジトリからダウンロードリンクをコピーして、サマンサ内の貼り付けですぐに順番に実行します。

Customizableパラメーター:ユーザーは、コンテキストウィンドウ長( N_CTX 、 MAX_TOKENS )、トークンサンプリング(温度、 TFS_Z 、トップK 、トップ、 MIN_P 、典型的_P)などのコンテキストウィンドウの長さ( N_CTX 、 MAX_TOKENS )、トークンサンプリングなどのモデルハイパーパラメーターを制御できます。確率的挙動。

✅ランダムハイパーパラメーターの調整:ハイパーパラメーター設定のランダムな組み合わせをテストし、モデルによって生成された応答への影響を観察できます。

✅インタラクティブエクスペリエンス:サマンサのチェーン機能により、ユーザーはプロンプトとモデルをチェーンすることで無限のテキストを生成し、人間の介入なしで異なるLLM間の複雑な相互作用を促進できます。

✅フィードバックループ:この機能により、モデルによって生成された応答をキャプチャし、会話の次のサイクルに戻すことができます。

proventリスト:モデルによって実行される一連の命令を制御するために、任意の数のプロンプト( $$$nまたはnで区切られています)を追加できます。事前定義された一連のプロンプトでTXTファイルをインポートすることができます。

✅モデルリスト:次のプロンプトに応答するモデルを制御するために、任意の数のモデルを選択できます。

in累積応答:モデルによって次の応答を生成するときに考慮される以前の応答にそれを追加することにより、各新しい応答を連結することができます。連結された応答のセットがモデルのコンテキストウィンドウに適合しなければならないことを強調することが重要です。

学習洞察:学習モードと呼ばれる機能は、ユーザーがモデルの意思決定プロセスを観察することができ、確率スコア(ロジスティック単位またはロジットのみ)とハイパーパラメーター設定に基づいて出力トークンを選択する方法についての洞察を提供します。最も可能性の低い選択されたトークンのリストも生成されます。

音声相互作用:サマンサは、オフラインの音声からテキストのVOSK(英語とポルトガル語)を使用したシンプルな音声コマンドと、SAPI5の声を使用したテキストからスピーチをサポートしており、アクセスしやすくユーザーフレンドリーにします。

✅オーディオフィードバック:インターフェイスはユーザーに可聴アラートを提供し、モデルごとにテキスト生成フェーズの開始と終了を通知します。

documentドキュメント処理:システムは小さなPDFおよびTXTファイルをロードできます。チェーンユーザープロンプト、システムプロンプト、モデルのURLリストをTXTファイルを介して入力できます。

versative汎用テキスト入力:プロンプト挿入のフィールドにより、システムプロンプト、以前のモデル応答、ユーザープロンプトなど、ユーザーがモデルの応答をガイドするためのユーザープロンプトなど、ユーザーがシステムと効果的に対話できるようになります。

codeコード統合:モデルの応答からのPythonコードブロックの自動抽出、および孤立した仮想環境での事前にインストールされたJupyterLab統合開発環境(IDE)により、ユーザーは即座に結果を得るために生成コードを迅速に実行できます。

python Pythonコードの編集、コピー、および実行:システムを使用すると、ユーザーはモデルによって生成されたコードを編集し、 CTRL + Cを選択し、 [実行コード]ボタンをクリックして[実行]を実行できます。 Pythonコードをどこからでも(Webページなど)からコピーして、コピーPythonコードを押してコードボタンを実行するだけで実行することもできます(インストールされているPythonライブラリを使用する限り)。

codeコードブロック編集:ユーザーは、出力コードに#IDEコメントを入力し、 CTRL + Cの選択とコピー、最後に[実行コード]ボタンをクリックすることにより、 jupyterlab仮想環境にインストールされているライブラリを使用するモデルによって生成されたPythonコードブロックを選択および実行できます。

✅HTML出力:ターミナルに印刷されたテキストが ''以外の場合、HTMLポップアップウィンドウにPythonインタープリター出力を表示します。この機能により、たとえば、スクリプトを無制限に実行し、特定の条件が満たされた場合にのみ結果を表示できます。

Automatic Codeの実行: Samanthaは、モデルによって生成されたPythonコードを順番に自動的に実行するオプションを備えています。生成されたコードは、複数のライブラリを含む仮想環境にインストールされたPythonインタープリターによって実行されます(インテリジェントエージェントのような機能)。

✅停止条件:モデルによって生成されたPythonコードの自動実行が端末に「(空の文字列)以外の値)が含まれていない場合、エラーメッセージが含まれていない場合、サマンサを停止します。また、特定の条件が満たされたときに文字列STOP_SAMANTHAのみを返す関数を作成することにより、実行中のループを強制することもできます。

codementive積分:決定論的設定を使用して、Pythonコードを段階的に作成し、次の部品に移動する前に各パーツが機能することを確認します。

completeアクセスと制御の完了: Pythonライブラリのエコシステムとモデルによって生成されたコードを通じて、コンピューターファイルにアクセスし、ローカルファイルを読み取り、作成、変更、削除し、利用可能な場合はインターネットにアクセスできるようにすることができます。

KeyboardおよびMouse Automation: Pyautoguiライブラリを使用してコンピューター上のタスクを自動化するための一連のプロンプトを作成できます(Pythonで退屈なものを自動化することを参照してください。Pythonファイル( .py )に、Pyintlerer for pyintlererのグラフィカルユーザーインターフェイス(GUI)を使用して、Pythonファイル(.py)を使用して実行可能ファイル( .exe )を変換することもできます。

✅データ分析ツール: Pandas、Numpy、Scipy、Scikit-Learn、Matplotlib、Seaborn、Vega-Altair、Plotly、Bokeh、Dash、Streamlit、Ydata Profiling、Sweetviz、d-tale、dataprep、Network、beautive ineerion、pyvis、pyvis、pyvis、pyvis、pyumpumdf、sweetviz、d-tale、dataprep、包括的な分析と視覚化のためのJupyterLab。 DBブラウザとの統合も利用できます(DBブラウザボタンを参照)。

jupyterlab仮想環境に巻き込まれたすべてのPythonライブラリの完全なリストには、 「 pkgutilライブラリを使用してインストールされているすべてのモジュールを印刷するPythonコードを作成する」などのプロンプトを使用します。コード生成後にコードを実行するボタンを押します。結果はブラウザのポップアップに表示されます。また、環境対応端末のpipdeptree --packages module_name使用して、その依存関係を確認することもできます。

最適化されたパフォーマンス: CPUのスムーズなパフォーマンスを確保するために、サマンサは以前の応答のみに限られたチャット履歴を維持し、モデルのコンテキストウィンドウサイズを縮小してメモリと計算リソースを保存します。

サマンサを使用するには、次のことが必要です。

コンピューターにVisual Studio(無料コミュニティバージョン)をインストールします。それをダウンロードして実行し、 C ++(管理者の特権が必要)を使用してオプションデスクトップ開発のみを選択します。

ここをクリックしてサマンサのリポジトリからzipファイルをダウンロードして、コンピューターに解凍します。プログラムをインストールするドライブを選択します。

samantha_ia-mainディレクトリを開き、 install_samantha_ia.batファイルをダブルクリックしてインストールを開始します。 Windowsは、 .batファイルの原点を確認するように依頼する場合があります。 「詳細」をクリックして確認してください。私たちは、すべてのファイルのコードを検査するために取り組みます(VirustotalおよびAIシステムを使用してそうする):

これがインストールの重要な部分です。すべてがうまくいけば、端末にエラーメッセージを表示せずにプロセスが完了します。

インストールプロセスには約20分かかり、2つの仮想環境の作成で終了するはずです: samanthaはAIモデルのみを実行し、 jupyterlab実行して、他のインストールされたプログラムを実行します。ハードドライブの約5 GBを占めます。

インストールしたら、 open_samantha.batファイルをダブルクリックしてサマンサを開きます。 Windowsは、 .batファイルのソースを確認するようにもう一度要求する場合があります。この許可は、プログラムを初めて実行するときにのみ必要です。 「詳細」をクリックして確認してください。

端子ウィンドウが開きます。これはサマンサのサーバー側です。

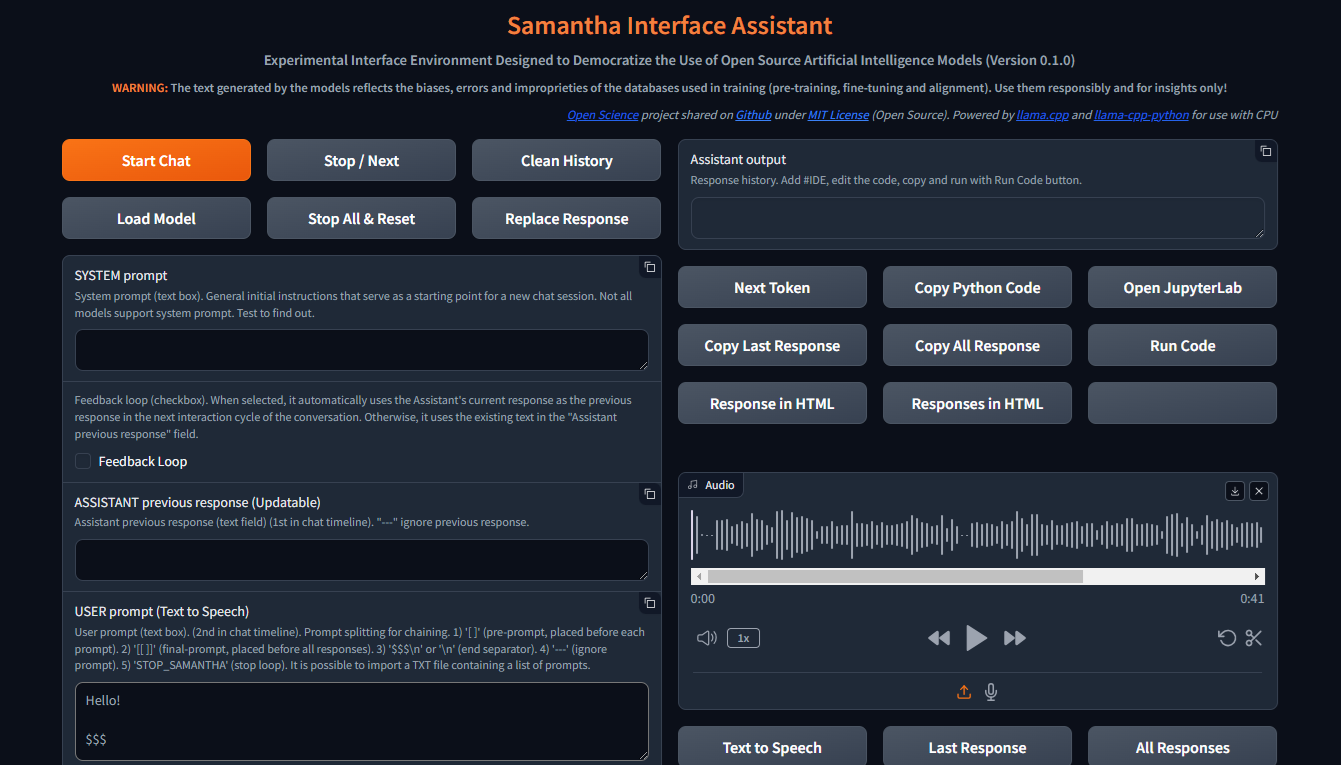

最初の質問(インターフェイス言語と音声制御オプション - ボイスコントロールは最初の使用には適していません)に答えた後、インターフェイスは新しいブラウザタブで開きます。これはサマンサのブラウザ側です:

ブラウザウィンドウを開くと、サマンサは準備ができています。

インストールビデオをご覧ください。

サマンサは、テキストを生成するために.ggufモデルファイルだけが必要です。これらの手順に従って、簡単なモデルテストを実行します。

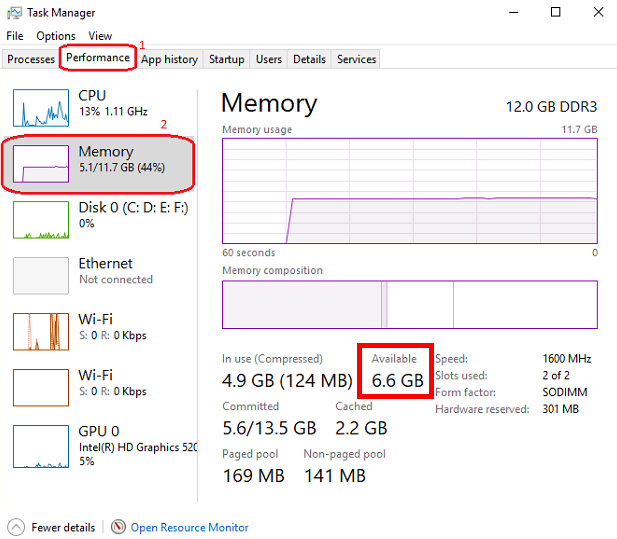

CTRL + SHIFT + ESCを押してWindowsタスク管理を開き、利用可能なメモリを確認します。必要に応じて、メモリを解放するためにいくつかのプログラムを閉じます。

抱きしめるフェイスリポジトリにアクセスして、カードをクリックして対応するページを開きます。 [ファイルとバージョン]タブを見つけ、使用可能なメモリに収まる.ggufテキスト生成モデルを選択します。

モデルのダウンロードリンクアイコンを右クリックして、そのURLをコピーします。

モデルURLをテストフィールドのためにサマンサのダウンロードモデルに貼り付けます。

プロンプトをユーザープロンプトフィールドに挿入し、 Enter押します。プロンプトの最後に$$$サインを保持します。モデルはダウンロードされ、デフォルトの決定論的設定を使用して応答が生成されます。 Windowsタスク管理を介してこのプロセスを追跡できます。

このコピーおよび貼り付け手順でダウンロードされたすべての新しいモデルは、前の手順を置き換えて、ハードドライブスペースを節約します。モデルのダウンロードは、ダウンロードフォルダーのMODEL_FOR_TESTING.ggufとして保存されます。

モデルをダウンロードして、コンピューターに永久に保存することもできます。詳細については、以下のセクションを参照してください。

gguf検索パラメーターとして使用して、オープンソーステキスト生成モデルを抱きしめる顔からダウンロードできます。 gguf codeやgguf portugueseなどの2つの単語を組み合わせることができます。

また、特定のリポジトリにアクセスして、https://huggingface.co/bartowskiやhttps://huggingface.co/nousresearchなど、ダウンロードとテストに利用できるすべての.ggufモデルを確認することもできます。

モデルは次のようなカードに表示されます:

モデルをダウンロードするには、カードをクリックして対応するページを開きます。モデルカードとファイルとバージョンのタブを見つけます。

いくつかのモデルをダウンロードするには、使用条件に同意する必要があります。

その後、 [ファイルとバージョン]タブをクリックして、利用可能なRAMスペースに適合するモデルをダウンロードします。使用可能なメモリを確認するには、 CTRL + SHIFT + ESCを押してWindowsタスクマネージャーを開き、 [パフォーマンス]タブ(1)をクリックして、メモリ(2)を選択します。

Q4_K_M (4ビット量子化)を使用してモデルをリンク名にダウンロードすることをお勧めします(ダウンロードボタンの上にマウスを置いて、次のようなリンクの完全なファイル名を表示します。 https://huggingface.co/NousResearch/Hermes-2-Pro-Llama-3-8B-GGUF/resolve/main/Hermes-2-Pro-Llama-3-8B-Q4_K_M.gguf?download=true )。原則として、モデルサイズが大きいほど、生成されたテキストの精度が大きくなります。

ダウンロードされたモデルが利用可能なRAMスペースに収まらない場合、ハードドライブが使用され、パフォーマンスに影響を与えます。

選択したモデルをダウンロードしてコンピューターに保存するか、ダウンロードリンクをコピーして、テストフィールドのためにサマンサのダウンロードモデルに貼り付けます。詳細については、以下のセクションのビデオチュートリアルをご覧ください。

各モデルには独自の特性があり、そのサイズ、内部アーキテクチャ、トレーニング方法、トレーニングデータベースの主要な言語、ユーザープロンプト、ハイパーパラメーターの調整に応じて、かなり異なる応答を提示し、目的のタスクのパフォーマンスをテストする必要があります。

一部のモデルは、その技術的特性や、サマンサが使用するLlama.cpp Pythonバインディングの現在のバージョンとの非互換性のためにロードされない場合があります。

テストするモデルを見つける場所:Huggingface GGUFモデル

サマンサは、オープンソースAIモデルをテストするために作成された実験プログラムです。したがって、ユーザーが作成した新しいモデルまたは新しいバージョンのモデルをテストしようとする場合、エラーが発生するのが一般的です。

モデルによって生成された応答の品質は、次のような基準を使用して評価できます。

ユーザーとシステムのプロンプトに含まれる明示的および暗黙的な指示の理解度。

これらの指示への服従の程度、データベースの支配的な言語に関連する側面。

コヒーレントテキストの生成における幻覚の程度ですが、間違っているか、文脈から外れます。テキスト生成の幻覚は通常、モデルの不十分なトレーニングまたは次のトークンの不適切な選択に起因し、モデルを望ましくないセマンティック方向に導きます。

意思決定プロセスの精度の程度ユーザープロンプトのコンテキストでギャップを埋め、応答を生成するために必要なあいまいさを解決します。明示的に指定されていないものは、モデルはトレーニングに基づいて推測しようとします。これはエラーにつながる可能性があります。

ユーザーのプロンプトに含まれるバイアス(またはその欠如)を使用して、モデルによって採用されたバイアスの一貫性の程度。

対処することが選択されたトピックの適切性と関連性の程度。

応答のトピックに対する幅とアプローチの深さの程度。

応答の構文と意味の精度の程度。

プロンプト(プロンプトエンジニアリング)の作成に使用される手法とモデルのハイパーパラメーターの調整に使用される手法を考慮して、モデルに提出された問題に対するユーザーの期待(および克服)に関連する応答の構造と内容の品質。

主なコントロール:

チャットセッションを開始し、すべての入力テキスト(システムプロンプト、アシスタント以前の応答、ユーザープロンプト)をサーバーに送信し、ユーザーが調整した設定を送信します。他のすべてのボタンと同様に、マウスクリックが鳴ります。

このボタンは、内部の以前の応答もクリアします。

チャットセッションには、複数の会話サイクル(ループ)を含めることができます。

チャットボタンの開始キーボードショートカット:ページのどこにでもEnter押します。

テキストを生成するには、モデルをモデル選択ドロップダウンリストで事前に選択する必要があります。または、テストフィールドのダウンロードモデルには、抱きしめるフェイスモデルURLを提供する必要があります。両方のフィールドが入力されている場合、ドロップダウンリストを介して選択されたモデルが優先されます。

現在のモデルまたはプロンプトのトークン生成プロセスを中断し、次のモデルの実行を開始するか、シーケンスでプロンプトがある場合(もしあれば」。

また、Speech Autoplay Modeの場合、現在再生されるオーディオの再生を停止します(選択したチェックボックスを読み取ります)。

サマンサには3つのフェーズがあります。

このボタンは、以前に押された場合でも、次のトークン選択フェーズが開始されたときにのみトークンの生成を中断します。

この中断は、実行コードが自動的にチェックボックスが選択されている場合、モデルによって生成されたコードの実行を妨げません。ボタンを押してテキスト生成を停止し、すでに生成されたPythonコードを実行できます。

?現在のチャットセッションの履歴をクリアし、アシスタント出力フィールドとすべての内部ログ、以前の応答などを消去します。

このボタンが機能するには、モデルがテキストの生成が完了するのを待つ必要があります(アシスタント出力フィールドのオレンジ色の境界線が点滅するのは停止)

culyロードに使用できるモデルが保存されるディレクトリを選択できます。

デフォルト:Windows「ダウンロード」フォルダー

GGUFモデルを含む任意のディレクトリを選択できます。この場合、選択したディレクトリに含まれるモデルは、モデル選択ドロップダウンリストにリストされます。

ポップアップウィンドウが開いたら、選択するフォルダーを必ずクリックしてください。

?実行中のモデルのシーケンスを停止し、最後のロードモデルの内部設定をリセットします。

リセット後、モデルは入力テキストのサイズに応じてテキスト生成を再起動するのに時間がかかります。

この中断は、[モデルによって生成された既にPythonコードの実行が自動的に選択されている場合、モデルによって生成された既にPythonコードの実行を防ぎます。

?アシスタント以前の応答フィールドのテキストを、モデルによって生成された最後の応答のテキストに置き換えます。

交換されたテキストは、次の会話サイクルでモデルの以前の応答として使用されます。

この交換されたテキストは表示されません。以前のアシスタント応答フィールドからテキストを消去することはなく、後で再度使用できます。

大規模な言語モデル(LLMS)のコンテキストでは、システムプロンプトは、会話やタスクの開始時にモデルに与えられる特別なタイプの指示です。モデルとのすべての相互作用で考慮されます。

相互作用の段階を設定すると考えてください。 LLMに、その役割、望ましいペルソナ、行動、および会話の全体的なコンテキストに関する重要な情報を提供します。

これがどのように機能しますか:

役割の定義:システムプロンプトは、相互作用におけるLLMの役割を明確に定義します。

トーンとペルソナの設定:システムプロンプトは、LLMの応答に目的のトーンとペルソナを確立することもできます。

コンテキスト情報の提供:システムプロンプトは、会話やタスクに関連する背景情報を提供できます。

Benefits of Using System Prompts:

例:

Let's say you want to use an LLM to write a poem in the style of Shakespeare. A suitable system prompt would be:

You are William Shakespeare, a renowned poet from Elizabethan England.

By providing this system prompt, you guide the LLM to generate a response that reflects Shakespeare's language, style, and thematic interests.

Not all models support system prompt. Test to find out: fill in "x = 2" in the System prompt field and ask the model the value of "x" in the User prompt field. If the model gets the value of "x", system prompt is available in the model.

You can simulate the effect of the system prompt by adding text in square brackets in the beginning of the User prompt field: [This text acts as a system prompt] or adding the system prompt text into the Assistant previous response field (do not use feedback loop).

To ignore the text present in this field, include --- at the beginning. To split the text in parts, put $$$ between them. To ignore each part, include --- at the beginning of each part.

↩️ When activated, it automatically considers the response generated by the model in the current conversation cycle as being the Assistant's previous response in the next cycle, allowing feedback from the system.

Any text entered by the user in the Assistant previous response field is only considered in the first cycle after activating this feature. In the following cycles, the model's response internally replaces the previous response, but without deleting the text contained in that field, which can be reused in a new chat session. You can monitor the content of the assistant previous response via terminal.

In turn, when deactivated, it always uses the text contained in the Assistant previous response field as the previous response, unless the text is preceded by --- (triple dash). Text preceded by --- is ignored by the model.

To internally clear the model's previous response, press the Clean history button.

➡️ Stores the text considered by the model as its previous response in the current conversation cycle.

Used to feed back the responses generated by the model.

To ignore the text present in this field, include --- at the beginning. To split the text in parts, put $$$ between them. To ignore each part, include --- at the beginning of each part.

✏️ The main input field of the interface. It receives the list of user prompts that will be submitted to the model sequentially.

Each item in the list must be separated from the next one by a line break ( SHIFT + ENTER or n ) or by the symbols $$$ (triple dollar signal), if the items are made up of text with line breaks.

When present in the user prompt, the $$$ separator takes precedence over the n separator. In other words, n is ignored.

You can import a TXT file containing a list of prompts.

--- before a prompt list item causes the system to ignore that item.

Text positioned within single square brackets ( [ and ] ) is added to the beginning of each prompt list item, simulating a system prompt.

Text positioned within double square brackets ( [[ and ]] ) is added as the last item in the prompt list. In this case, all responses generated by the model in the current chat session are concatenated and added to the end of this item, allowing the model to analyze them together.

If the Python code execution returns only the word STOP_SAMANTHA , it stops token generation and exits the loop.

If the Python code execution returns only '' (empty string), it does not display the HTML pop-up window.

You can add specific hyperparameters before each prompt. You must use this pattern:

{max_tokens=4000, temperature=0, tfs_z=0, top_p=0, min_p=1, typical_p=0, top_k=40, presence_penalty=0, frequency_penalty=0, repeat_penalty=1}

例:

[You are a poet that writes only in Portuguese]

Create a sentence about love

Create a sentence about life

--- Create a sentence about time (this instruction is ignored)

[[Create a paragraph in English that summarizes the ideas contained in the following sentences:]]

( previous responses are concatenated here )

Model responses sequence:

"O amor é um fogo que arde no meu peito, uma chama que me guia através da vida."

"A vida é um rio que flui sem parar, levando-nos para além do que conhecemos."

Love and life are intertwined forces that shape our existence. Love burns within us like a fire, guiding us through life's journey with passion and purpose. Meanwhile, life itself is a dynamic and ever-changing river, constantly flowing and carrying us beyond the familiar and into the unknown. Together, love and life create a powerful current that propels us forward, urging us to explore, discover, and grow.

✅ Dropdown list of models saved on the computer and available for text generation.

To view models in this field, click the Load model button and select the folder containing the models.

The default location for saving models is the Windows Downloads directory.

You can select multiples models (even repeated) to create a sequence of models to respond the user prompts.

The last model downloaded from a URL is saved as MODEL_FOR_TESTING.gguf and is also displayed in this list.

Receives a list of Hugging Face links to the models that will be downloaded and executed sequencially.

Link example:

Links preceded by --- will be ignored.

Only works if no model is selected in Model selection dropdown list.

1️⃣ Activates a single response per model.

Prompts that exceed the number of models are ignored.

Models that exceed the number of prompts are also ignored.

You can select the same model more than once.

This checkbox disables Number of loops and Number of responses checkboxes.

⏮️ Reinitializes the internal state of the model, eliminating the influence of the previous context.

How it Works:

When the reset feature is invoked:

利点:

Use Cases:

? Shuffles the execution order of the models if 3 or more models are selected in Model selection dropdown list.

?♀️ Generates text faster in the background without displaying the addition of each token in the Assistant output field.

Minimizing or hiding the Samantha browser window makes the token generation process even faster.

This checkbox disables Learning Mode.

Selects the language of the computer's SAPI5 voice that will read the responses generated by the model.

? Activates automatic reading mode for responses generated by the model using the language selected in the Voice selection dropdown list.

If you wish to reproduce the response generated by the model using a better quality speech synthesizer (Microsoft Edge browser), open the response in an HTML pop-up using the Response in HTML button, right-click inside the page and select the option to read the page text aloud.

To save and edit the audio generated by the speech synthesizer, we recommend record de audio using the portable version of the open source program Audacity. Adjust the recording setting to capture audio output from the speakers (not from the microphone).

?? Activates Learning Mode.

It presents a series of features that help in understanding the token selection process by the model, such as:

Only works if Fast Mode is unchecked.

Radio buttons options:

? Set the number of repetitions of the block in the following chaining sequence:

Chaining Sequence: ( [models list] -> respond -> ( [user prompt list] X number of responses) ) X number of loops

Each model in the models list responds to all prompts in the user prompt list for the selected number of responses . This block is repeated for the selected number of loops .

? Number of responses to be generated by each selected model in the following chaining sequence:

Chaining Sequence: ( [models list] -> respond -> ( [user prompt list] X number of responses ) ) X number of loops

Each model in the models list responds to all prompts in the user prompt list for the selected number of responses . This block is repeated for the selected number of loops .

? When checked, runs automatically the Python code generated by the model.

Whenever Python code returns a value other than '' (empty string), an HTML pop-up window opens to display the returned content.

? When checked, stops Samantha when the automatic execution of the Python code generated by the model prints in the terminal a value other than '' (empty string) and that does not contain error message.

Use it to stop a generation loop when a condition is met.

? When checked, concatenates each new response by adding it to the previous response to be considered when generating the next response by the model.

It is important to highlight that the set of concatenated responses must fit in the model's context window.

? Adjusts the model's hyperparameters with random values in each new conversation cycle.

Randomly chosen values vary within the following value range of each hyperparameter and are displayed at the beginning of each response generated by the model.

| Hyperparameter | 分価値 | マックス。価値 |

|---|---|---|

| 温度 | 0.1 | 1.0 |

| tfs_z | 0.1 | 1.0 |

| top_p | 0.1 | 1.0 |

| min_p | 0.1 | 1.0 |

| typical_p | 0.1 | 1.0 |

| presence_penalty | 0.0 | 0.3 |

| frequency_penalty | 0.0 | 0.3 |

| repeat_penalty | 1.0 | 1.2 |

This resource has application in the study of the reflections of the interaction between hyperparameters.

? Feedback only the Python interpreter output as the next assistant's previous response. Do not include model's response.

This feature reduces the number of tokens to be inserted in the assistant's previous response in the next conversation cycle.

Works only with Feedback Loop activated.

Hide HTML model responses, including Python interpreter error messages.

Context Window:

n_ctx stands for number of context tokens in the context window and determines the maximum number of tokens that the model can process at once. It determines how much previous text the model can "remember" and utilize when selecting the next token from model vocabulary.

The context length directly impacts the memory usage and computational load. Longer n_ctx requires more memory and computational power.

How n_ctx works:

It sets the upper limit on the number of tokens the model can "see" at once. Tokens are usually word parts, full words, or characters, depending on the tokenization method. The model uses this context to understand and generate text. For example, if n_ctx is 2048, the model can process up to 2048 tokens (now words) at a time.

Impact on model operation:

During training and inference, the model attends to all tokens within this context window.

It allows the model to capture long-range dependencies in the text.

Larger n_ctx enables the model to handle longer sequences of text without losing earlier context.

Why increasing n_ctx increases memory usage:

Attention mechanism: LLMs uses self-attention mechanisms (like in Transformers) which compute attention scores between all pairs of tokens in the input.

Quadratic scaling: The memory required for attention computations scales quadratically with the context length. If you double n_ctx , you quadruple the memory needed for attention.

CAUTION: n_ctx MUST BE GREATER THAN ( max_tokens + number of input tokens) (system prompt + assistant previous response + user prompt).

If the prompt text contains more tokens than the context window defined with n_ctx or the memory required exceeds the total available on the computer, an error message will be displayed.

Error message displayed on Assistant output field:

==========================================

Error loading LongWriter-glm4-9B-Q4_K_M.gguf.

Some models may not be loaded due to their technical characteristics or incompatibility with the current version of the llama.cpp Python binding used by Samantha.

Try another model.

==========================================

Error messages displayed on terminal:

Requested tokens (22856) exceed context window of 10016

Unable to allocate 14.2 GiB for an array with shape (25000, 151936) and data type float32

When set to 0 , the system will use the maximum n_ctx possible (model's context window size).

As a rule, set n_ctx equal to max_tokens , but only to the value necessary to accommodate the text parsed by the model. Samantha's default values for n_ctx and max_tokens are 4,000 tokens.

Before adjusting n_ctx , you must to unload the model by clicking Unload model button.

例:

User prompt = 2000 tokens

n_ctx = 4000 tokens

If the text generated by the model is equals or greater than 2000 tokens (4000 - 2000), the system will raise an IndexError in the terminal, but the interface will not crash.

To check the impact of the n_ctx in memory, open Windows Task Manager ( CTRL + SHIFT + ESC ) to monitor memory usage, select memory panel and vary n_ctx values. Don't forget to unload model between changes.

?️ Controls maximum number of tokens to be generated by the model.

Select 0 for the models' maximum number of tokens (maximum memory required).

How max_tokens Works:

Sampling Process: When generating text, LLMs predict the next token based on the context provided (system prompt + previous response + user prompt + text already generated). This prediction involves calculating probabilities for each possible token in the vocabulary.

Token Limit: The max_tokens parameter sets a hard limit on how many tokens the model can generate before stopping, regardless of the predicted probabilities.

Truncation: Once the generated text reaches max_tokens , the generation process is abruptly terminated. This means the final output might be incomplete or feel cut off.

Stop Words:

? List of characters that interrupt text generation by the model, in the format ["$$$", ".", ".n"] (Python list).

Token Sampling:

Deterministic Behavior:

To check the deterministic impact of each hyperparameter on the model's behavior, set all others hyperparameters to their maximum stochastic values and execute a prompt more than once. Repeat this procedure for each token sampling hyperparameter.

| Hyperparameter | 決定論的 | 確率論的 | 選択 |

|---|---|---|---|

| 温度 | 0 | > 0 | 2 (stochastic) |

| tfs_z | 0 | > 0 | 1 (stochastic) |

| top_p | 0 | > 0 | 1 (stochastic) |

| min_p | 1 | <1 | 1 (deterministic) |

| typical_p | 0 | > 0 | 1 (stochastic) |

| top_k | 1 | > 1 | 40 (stochastic) |

In other words, the hyperparameter with deterministic adjustment prevails over all other hyperparameters with stochastic adjustments.

As the hyperparameter with deterministic tuning loses this condition, interaction between all hyperparameters with stochastic tuning occurs.

Stochastic Behavior:

To check the stochastic reflection of a hyperparameter on the model's behavior, set all other hyperparameters to their maximum stochastic values and gradually vary the selected hyperparameter based on its deterministic value. Repeat this procedure for each token sampling hyperparameter.

You can combine stochastic tuning of different hyperparameters.

| Hyperparameter | 決定論的 | 確率論的 | 選択 |

|---|---|---|---|

| 温度 | 0 | > 0 | 2 (stochastic) |

| tfs_z | 0 | > 0 | 1 (stochastic) |

| top_p | 0 | > 0 | 1 (stochastic) |

| min_p | 1 | <1 | 1 (reduce progressively) |

| typical_p | 0 | > 0 | 1 (stochastic) |

| top_k | 1 | > 1 | 40 (stochastic) |

The text generation hyperparameters in language models, such as top_k , top_p , tfs-z , typical_p , min_p , and temperature , interact in a complementary way to control the process of choosing the next token. Each affects token selection in different ways, but there is an order of prevalence in terms of influence on the final set of tokens that can be selected. Let's examine how these hyperparameters relate to each other and who "prevails" over whom.

All these hyperparameters are adjusted after the model generates the logits of each token.

Samantha displays the logits of each token in learning mode, before they are changed by the hyperparameters.

Samantha also indicates which token was selected after applying the hyperparameters.

10 vocabulary tokens most likely returned by the model to initiate the answer to the following question: Who are you? :

Vocabulary id / token / logit value:

358) ' I' (15.83)

40) 'I' (14.75) <<< Selected

21873) ' Hello' (14.68)

9703) 'Hello' (14.41)

1634) ' As' (14.31)

2121) 'As' (13.98)

20971) ' Hi' (13.73)

715) ' n' (13.03)

5050) 'My' (13.01)

13041) 'Hi' (12.77)

How to disable hyperparameters:

temperature : Setting it to 1.0 keeps the original odds unchanged. Note: Setting it to 0 does not "disable" it, but makes the selection deterministic.

tfs_z (Tail-Free Sampling with z-score): Setting it to a very high value effectively disables it.

top-p (nucleus sampling): Setting it to 1.0 effectively disables it.

min-p : Setting it to a very low value (close to 0) effectively disables it.

typical-p : Setting it to 1.0 effectively disables it.

top-k : Setting it to a very high value (eg vocabulary size) essentially disables it.

Order of Prevalence

1 top_k , top_p , tfs_z , typical_p , min_p : These delimit the space of possible tokens that can be selected.

top_k restricts the number of available tokens to the k most likely ones. For example, if k = 50 , the model will only consider the 50 most likely tokens for the next word. Tokens outside of these 50 most likely are completely discarded, which can help avoid unlikely or very risky choices.

top-p defines a threshold based on the sum of cumulative probabilities . If p = 0.9 , the model will include the most likely tokens until the sum of their probabilities reaches 90% . Unlike top_k , the number of tokens considered is dynamic, varying according to the probability distribution.

tfs_z aims to eliminate the "tail" of the tokens' probability distribution. It works by discarding tokens whose cumulative probability (from the tail of the distribution) is less than a certain threshold z. The idea is to keep only the most informative tokens and eliminate those with less relevance, regardless of how many tokens this leaves in the set. So, instead of simply truncating the distribution at the top (as top_k or top_p does), tfs_z makes the model get rid of the tokens at the tail of the distribution. This creates a more adaptive way of filtering the least likely tokens, promoting the most important ones without strictly limiting the number of tokens, as with top_k . tfs_z discards the "tail" of the token distribution, eliminating those with cumulative probabilities below a certain threshold z.

typical_p selects tokens based on their divergence from the mean entropy of the distribution, ie how "typical" the token is. typical-p is a more sophisticated sampling technique that aims to maintain the "naturalness" of text generation, based on the notion of entropy, ie how "surprising" or predictable is the choice of a token compared to the what the model expects. How Typical-p Works: Instead of focusing only on the absolute probabilities of tokens, as top_k or top_p do, typical_p selects tokens based on their deviation from the mean entropy of the probability distribution.

Here is the typical_p process:

a) Average Entropy: The average entropy of a token distribution reflects the average level of uncertainty or surprise associated with choosing a token. Tokens with a very high (expected) or very low (rare) probability may be less "typical" in terms of entropy.

b) Divergence Calculation: Each token has its probability compared to the average entropy of the distribution. Divergence measures how far the probability of that token is from the average. The idea is that tokens with a smaller divergence from average entropy are more "typical" or natural within the context.

c) Sampling: typical_p defines a fraction p of the accumulated entropy to consider tokens. Tokens are ordered based on their divergence and those that fall within a portion p (eg, 90% of the most "typical" distribution) are considered for selection. The model chooses tokens in a way that favors those that represent the average uncertainty well, promoting naturalness in text generation.

Prevalence: These parameters define the set of candidate tokens . They are first used to restrict the number of possible tokens before any other adjustments are applied. The way they are combined can be cumulative, where applying multiples of these filters progressively reduces the number of available tokens. The final set is the intersection set between the tokens that pass all these checks.

If you use top_k and top_p at the same time, both must be respected. For example, if top_k = 50 and top_p = 0.9 , the model first limits the choice to the 50 most likely tokens and, within these, considers those whose probability sum reaches 90%.

If you add typical_p or tfs_z to the equation, the model will apply these additional filters over the same set, further reducing the options.

2 temperature: Adjusts the randomness within the set of already filtered tokens .

After the model restricts the universe of tokens based on cutoff hyperparameters like top-k , top_p , tfs_z , etc., temperature comes into play.

temperature changes the smoothness or rigidity of the probability distribution of the remaining tokens. A temperature lower than 1 concentrates the probabilities, causing the model to prefer the most likely tokens. A temperature greater than 1 flattens the distribution, allowing less likely tokens to have a greater chance of being selected.

Prevalence: temperature does not change the set of available tokens, but adjusts the relative probability of already filtered tokens . Thus, it does not prevail over the top_k , top_p , etc. filters, but acts after them, influencing the final selection within the remaining option space.

General Hierarchy

top_k, top_p, tfs_z, typical_p, min_p : These parameters act first, restricting the number of possible tokens.

temperature : After the selection filters are applied, temperature adjusts the probabilities of the remaining tokens, controlling the randomness in the final choice.

Combination Scenario

_top_k + top_p : If top_k is less than the number of tokens selected by top_p , top_k prevails as it limits the number of tokens to k. If top_p is more restrictive (eg only considers 5 tokens with p=0.9), then it prevails over top_k .

typical_p + top_p : Both apply filters, but in different directions. typical_p selects based on entropy, while top_p selects based on cumulative probability. If used together, the end result is the intersection set of these filters.

Temperature : It is always applied last, modulating the randomness in the final selection, but without changing the limits imposed by previous filters.

Prevalence Summary

Filters ( top_k, top_p, tfs_z, typical_p, min_p ) define the set of candidate tokens.

temperature adjusts the relative probability within the filtered set.

The end result is a combination of these filters, where the set of tokens eligible for selection is defined first, and then the randomness is adjusted with temperature.

? Temperature is a hyperparameter that controls the randomness of the text generation process in LLMs. It affects the probability distribution of the model's next-token predictions.

Temperature is a hyperparameter t that we find in stochastic models to regulate the randomness in a sampling process (Ackley, Hinton, and Sejnowski 1985). The softmax function (Equation 1) applies a non-linear transformation to the output logits of the network, turning it into a probability distribution (ie they sum to 1). The temperature parameter regulates its shape, redistributing the output probability mass, flattening the distribution proportional to the chosen temperature. This means that for t > 1, high probabilities are decreased, while low probabilities are increased, and vice versa for t < 1. Higher temperatures increase entropy and perplexity, leading to more randomness and uncertainty in the generative process. Typically, values for t are in the range of [0, 2] and t = 0, in practice, means greedy sampling, ie always taking the token with the highest probability. Is Temperature the Creativity Parameter of Large Language Models?

The Effect of Sampling Temperature on Problem Solving in Large Language Models

Controlling Creativity:

Use higher temperatures when you want the model to generate more creative, unexpected, and varied responses. This is useful for creative writing, brainstorming, and exploring multiple ideas.

This flattens the probability distribution, making the model more likely to sample less probable tokens.

The generated text becomes more diverse and creative, but potentially less coherent.

❄ Use lower temperatures when you need more predictable and focused output. This is useful for tasks requiring precise and reliable information, such as summarization or answering factual questions.

This sharpens the probability distribution, making the model more likely to sample the most probable tokens.

The generated text becomes more focused and deterministic, but potentially less creative.

それがどのように機能するか:

? Mathematically, the temperature (T) is applied by dividing the logits (raw scores from the model) by T before applying the softmax function.

A lower temperature makes the distribution more "peaked," favoring high-probability options.

A higher temperature "flattens" the distribution, giving more chance to lower-probability options.

Temperature scale:

Generally ranges from 0 to 2, with 1 being the default (no modification).

T < 1: Makes the text more deterministic, focused, and "safe."

T > 1: Makes the text more random, diverse, and potentially more creative.

T = 0: Equivalent to greedy selection, always choosing the most probable option.

Avoiding Repetition:

Higher temperatures can help reduce repetitive patterns in the generated text by promoting diversity.

Very low temperatures can sometimes lead to repetitive and deterministic outputs, as the model might keep choosing the highest-probability tokens.

It's important to note that temperature is just one of several sampling hyperparameters available. Others include top-k sampling, nucleus sampling (or top-p), and the TFS-Z. Each of these methods has its own characteristics and may be more suitable for different tasks or generation styles.

Videos:

temperature shorts 1

temperature shorts 2

tfs_z stands for tail-free sampling with z-score . It's a hyperparameter used in a text generation technique designed to balance the trade-off between diversity and quality in generated text.

Context and purpose:

Tail-free sampling was introduced as an alternative to other sampling methods like top-k or nucleus ( top-p ) sampling. Its goal is to remove the arbitrary "tail" of the probability distribution while maintaining a dynamic threshold.

Technical Details of tfs_z in LLM Text Generation

Probability distribution analysis:

The method examines the probability distribution of the next token predictions. It focuses on the "tail" of this distribution - the less likely tokens.

Z-score calculation:

For each token in the sorted (descending) probability distribution, a z-score is calculated. The z-score represents how many standard deviations a token's probability is from the mean.

Cutoff determination:

The tfs_z parameter sets the z-score threshold. Tokens with a z-score below this threshold are removed from consideration.

Dynamic thresholding:

Unlike fixed methods like top-k , the number of tokens retained can vary based on the shape of the distribution. This allows for more flexibility in different contexts.

Sampling process:

After applying the tfs_z cutoff, sampling occurs from the remaining tokens. This can be done using various methods (eg, temperature-adjusted sampling).

tfs_z is a hyperparameter that controls the temperature scaling of the output logits during text generation.

Here's what it does:

Logits : When an LLM generates text, it produces a probability distribution over all possible tokens in the vocabulary. This distribution is represented as a vector of logits (unnormalized log probabilities).

Temperature scaling : To control the level of uncertainty or "temperature" of the output, you can scale the logits by multiplying them with a temperature factor ( t ). This is known as temperature scaling.

tfs_z hyperparameter : It's a hyperparameter that controls how much to scale the logits before applying temperature scaling.

When you set tfs_z > 0 , the model first normalizes the logits by subtracting their mean ( z-score normalization ) and then scales them with the temperature factor ( t ). This has two effects:

Reduced variance : By normalizing the logits, you reduce the variance of the output distribution, which can help stabilize the generation process.

Increased uncertainty : By scaling the normalized logits with a temperature factor, you increase the uncertainty of the output distribution, which can lead to more diverse and creative text generations.

Practical example:

Imagine that the model is trying to complete the sentence "The sky is..."

Without tfs_z , the model could consider:

blue (30%), cloudy (25%), clear (20%), dark (15%), green (5%), singing (3%), salty (2%)

With TFS-Z (cut by 10%):

blue (30%), cloudy (25%), light (20%), dark (15%)

This eliminates less likely and potentially meaningless options, such as "The sky is salty."

By adjusting the Z-score, we can control how "conservative" or "creative" we want the model to be. A higher Z-score will result in fewer but more "safe" options, while a lower Z-score will allow for more variety but with a greater risk of inconsistencies.

In summary, tfs_z controls how much to scale the output logits after normalizing them. A higher value of tfs_z will produce more uncertain and potentially more creative text generations.

Keep in mind that this is a relatively advanced hyperparameter, and its optimal value may depend on the specific LLM architecture, dataset, and task at hand.

⭕ Top-p (nucleus sampling) is a hyperparameter that controls the diversity and quality of text generation in LLMs. It affects the selection of tokens during the generation process by dynamically limiting the vocabulary based on cumulative probability.

Controlling Output Quality:

? Use higher top-p values (closer to 1) when you want the model to consider a wider range of possibilities, potentially leading to more diverse and creative outputs. This is useful for open-ended tasks, storytelling, or generating multiple alternatives. Higher values allow for more low-probability tokens to be included in the sampling pool.

Use lower top-p values (closer to 0) when you need more focused and high-quality output. This is beneficial for tasks requiring precise information or coherent responses, such as answering specific questions or generating formal text. Lower values restrict the sampling to only the most probable tokens.

それがどのように機能するか:

? Mathematically, top-p sampling selects the smallest possible set of words whose cumulative probability exceeds the chosen p-value. The model then samples from this reduced set of tokens. This approach adapts to the confidence of the model's predictions, unlike fixed methods like top-k sampling.

Top-p scale:

Generally ranges from 0 to 1, with common values between 0.1 (10% most likely) and 0.9 (90% most likely).

p = 1: Equivalent to unmodified sampling from the full vocabulary.

p → 0: Increasingly deterministic, focusing on the highest probability tokens.

p = 0.9: A common choice that balances quality and diversity.

Balancing Coherence and Diversity:

Top-p sampling helps maintain coherence while allowing for diversity. It adapts to the model's confidence, using a smaller set of tokens when the model is very certain and a larger set when it's less certain. This can lead to more natural-sounding text compared to fixed cutoff methods.

Comparison with Temperature:

While temperature modifies the entire probability distribution, top-p directly limits the vocabulary considered. Top-p can be more effective at preventing low-quality outputs while still allowing for creativity, as it dynamically adjusts based on the model's confidence.

It's worth noting that top-p is often used in combination with other sampling methods, such as temperature adjustment or top-k sampling. The optimal choice of hyperparameters often depends on the specific task and desired output characteristics.

The min_p hyperparameter is a relatively recent sampling technique used in text generation by large-scale language models (LLMs). It offers an alternative approach to top_k and nucleus sampling ( top_p ) to control the quality and diversity of generated text.

min_p is a sampling hyperparameter that works in a complementary way to top_p (nucleus sampling). While top_p sets an upper bound on cumulative probabilities, min_p sets a lower bound on individual probabilities.

説明:

As with other sampling techniques, LLM calculates a probability distribution over the entire vocabulary for the next word.

The min_p defines a minimum probability threshold, p_min.

The method selects the smallest set of words whose summed probability is greater than or equal to p_min.

The next word is then chosen from that set of words.

Detailed operation:

The model calculates P(w|c) for each word w in the vocabulary, given context c.

The words are ordered by decreasing probability: w₁, w₂, ..., w|V|.

The algorithm selects words in the order of greatest probability until the sum of the probabilities is greater than or equal to p_min :

例:

Suppose we are generating text and the model predicts the following probabilities for the next word:

"o": 0.3

"one": 0.25

"this": 0.2

"that one": 0.15

"some": 0.1

If we use min-p with p_min = 0.7, the algorithm would work like this:

Sum "o": 0.3 < 0.7

Sum "o" + "one": 0.3 + 0.25 = 0.55 < 0.7

Sum "the" + "one" + "this": 0.3 + 0.25 + 0.2 = 0.75 ≥ 0.7

Therefore, we select the first three words. Renormalizing:

"o": 0.3 / 0.75 = 0.4

"one": 0.25 / 0.75 ≈ 0.33

"this": 0.2 / 0.75 ≈ 0.27

The next word will be chosen randomly from these three options with the new probabilities.

The typical_p hyperparameter is an entropy-based sampling technique that aims to generate more natural and less predictable text by selecting tokens that represent what is "typical" or "expected" in a probability distribution. Unlike methods like top_k or top_p , which focus on the absolute probabilities of tokens, typical_p considers how surprising or informative a token is relative to the overall probability distribution.

Technical Operation of typical_p

Entropy: The entropy of a distribution measures the expected uncertainty or surprise of an event. In the context of language models, the higher the entropy, the more uncertain the model is about which token should be generated next. Tokens that are very close to the mean entropy of the output distribution are considered "typical", while tokens that are very far away (too predictable or very unlikely) are considered "atypical".

Calculation of Surprise (Local Entropy): For each token in a given probability distribution, we can calculate its surprise (or "informativeness") by comparing its probability with the average entropy of the token distribution. This surprise is measured by the divergence in relation to the average entropy, that is, how much the probability of a token deviates from the average behavior expected by the distribution.

Selection Based on Entropy Divergence: typical_p filters tokens based on this "divergence" or difference between the token's surprise and the average entropy of the distribution. The model orders the tokens according to how "typical" they are, that is, how close they are to the average entropy.

Typical-p limit: After calculating the divergences of all tokens, the model defines a cumulative probability limit, similar to top_p (nucleus sampling). However, instead of summing the tokens' absolute probabilities, typical_p considers the cumulative sum of the divergences until a portion p of the distribution is included. That p is a value between 0 and 1 (eg 0.9), indicating that the model will include tokens that cover 90% of the most "typical" divergences.

If p = 0.9 , the model selects tokens whose divergences in relation to the average entropy represent 90% of the expected uncertainty. This helps avoid both tokens that are extremely predictable and those that are very unlikely, promoting a more natural and fluid generation.

実用的な例

Suppose the model is predicting the next word in a sentence, and the probability distribution of the tokens looks like this:

In the case of top_p with p = 0.9, the model would only include tokens A, B and C, as their probabilities add up to 90%. However, typical_p can include or exclude tokens based on how their probabilities compare to the average entropy of the distribution. If A is extremely predictable, it can be excluded, and tokens like B, C, and even D can be selected for their more typical representativeness in terms of entropy.

Difference from Other Methods

top_k selects the k most likely tokens directly , regardless of entropy or probability distribution.

top_p selects tokens based on the cumulative sum of absolute probabilities , without considering entropy or surprise.

typical_p , on the other hand, introduces the notion of entropy, ensuring that the selected tokens are neither too predictable nor too surprising , but ones that align with the expected behavior of the distribution.

How Typical-p Improves Text Generation

Naturalness: typical-p prevents the model from choosing very predictable tokens (as could happen with a low temperature or restrictive top-p) or very rare tokens (as could happen with a high temperature), maintaining a fluid and natural generation.

Controlled Diversity: By considering the surprise of each token, it promotes diversity without sacrificing coherence. Tokens that are close to the mean entropy of the distribution are more likely to be chosen, promoting natural variations in the text.

Avoids Extreme Outputs: By excluding overly unlikely or predictable tokens, Typical-p keeps generation within a "safe" and natural range, without veering toward extremes of certainty or uncertainty.

Interaction with Other Parameters

typical_p can be combined with other sampling methods:

When combined with temperature , typical_p further adjusts the set of selectable tokens, while temperature modulates the randomness within that set.

It can be combined with top_k or top_p to further fine-tune the process, restricting the universe of tokens based on different probability and entropy criteria.

In summary, typical_p acts in a unique way by considering the entropy of the distribution and selects tokens that are aligned with the expected behavior of this distribution, resulting in a more balanced, fluid and natural generation.

Here are some guidelines and strategies for tuning typical_p :

typical_p = 1.0: Includes all tokens available in the distribution, without restrictions based on entropy. This is equivalent to not applying any typical restrictions, allowing the model to use the full distribution of tokens.

_typical_p < 1.0: The lower the typical_p value, the narrower the set of tokens considered, keeping only those that most closely align with the average entropy. Common values include 0.9 (90% of "typical" tokens) and 0.8 (80%).

推奨事項:

typical-p = 0.9: This is a common value that typically maintains a balance between diversity and coherence. The model will have the flexibility to generate varied text, but without allowing very extreme choices.

typical_p = 0.8: This value is more restrictive and will result in more predictable choices, keeping only tokens that most accurately align with the average entropy. Useful in scenarios where fluidity and naturalness are priorities.

typical_p = 0.7 or less: The lower the value, the more predictable the text generation will be, eliminating tokens that could be considered atypical. This may result in a less diversified and more conservative output.

Fine-Tuning with temperature

typical_p controls the set of tokens based on entropy, but temperature can be used to adjust the randomness within that set . The interaction between these two parameters is important:

temperature > 1.0: Increases randomness within the set of tokens selected by typical_p , allowing even less likely tokens to have a greater chance of being chosen. This can generate more creative or unexpected responses.

temperature < 1.0: Reduces randomness, making the model more conservative by preferring the most likely tokens from the set filtered by typical_p . Using a low temperature with a high typical_p (0.9 or 1.0) can result in very predictable outputs.

例:

typical_p = 0.9 with _temperature = 1.0: Maintains the balance between naturalness and diversity, allowing the model to generate fluid and creative text, but without major deviations.

typical_p = 0.8 with temperature = 0.7: Makes generation more conservative and predictable, preferring tokens that are closer to the average uncertainty and reducing the chance of creative variations.

それがどのように機能するか

In a language model, when the next word is predicted, the model generates a probability distribution for the next token (word or part of a word), where each token has an associated probability based on its previous context. The sum of all probabilities is equal to 1.

top_k works by reducing the number of options available for sampling, limiting the number of candidate tokens. It does this by selecting only the tokens with the k highest probabilities and discarding all others. Then sampling is done from these k tokens, redistributing the probabilities between them.

例:

Suppose we are generating text and the model predicts the following probabilities for the next word:

"o": 0.3

"one": 0.25

"this": 0.2

"that one": 0.15

"some": 0.1

If we use top-k with k=3, we only keep the three most likely words:

"o": 0.3

"one": 0.25

"this": 0.2

Then, we renormalize the probabilities:

"o": 0.3 / (0.3 + 0.25 + 0.2) ≈ 0.4 (40%)

"one": 0.25 / (0.3 + 0.25 + 0.2) ≈ 0.33 (33%)

"this": 0.2 / (0.3 + 0.25 + 0.2) ≈ 0.27 (27%)

The next word will be chosen from these three options with the new probabilities.

Effect of Hyperparameter k

small k (eg ?=1): The model will be extremely deterministic, as it will always choose the token with the highest probability. This can lead to repetitive and predictable text.

large k (or use all tokens without truncating): The model will have more options and be more creative, but may generate less coherent text as low probability tokens may also be chosen.

Token Penalties:

? Syntactic and semantic variation arises from the penalization of tokens that are replaced by others that begin words related to different ideas, leading the response generated by the model in another direction.

Syntactic variations do not always generate semantic variations.

As text is generated, penalties become more frequent as there are more tokens to be punished.

Deterministic Behavior:

To obtain a deterministic text (same input, same output), but without repeating words (tokens), increase the values of the penalty hyperparameters.

However, if it proves necessary to allow the model to reselect already generated tokens, keep these settings at their default values.

| Hyperparameter | Default Values | Text Diversity |

|---|---|---|

| presence_penalty | 0 | > 0 |

| frequency_penalty | 0 | > 0 |

| repeat_penalty | 1 | > 1 |

Presence Penalty:

The presence penalty penalizes tokens that have already appeared in the text, regardless of their frequency. It discourages the repetition of ideas or themes.

手術:

効果:

フィット:

Frequency Penalty:

The frequency penalty penalizes tokens based on their frequency in the text generated so far. The more times a token appeared, the greater the penalty.

手術:

効果:

フィット:

Repeat Penalty:

The repeat penalty is similar to the frequency penalty, but generally applies to sequences of tokens (n-grams) rather than individual tokens.

手術:

効果:

フィット:

How repeat_penalty works:

Starting from the default value (=1), as we reduce this value (<1) the text starts to present more and more repeated words (tokens), to the point where the model starts to repeat a certain passage or word indefinitely.

In turn, as we increase this value (>1), the model starts to penalize repeated words (tokens) more heavily, up to the point where the input text no longer generates penalties in the output text.

During the penalty process (>1), there is a variation in syntactic and semantic coherence.

Practical observations showed that increasing the token penalty (>1) generates syntactic and semantic diversity in the response, as well as promoting a variation in the response length until stabilization, when increasing the value no longer generates variation in the output.

The repeat_penalty hyperparameter has a deterministic nature.

Adjustment tip:

その他:

Displays model's metadata.

例:

Model: https://huggingface.co/NousResearch/Hermes-3-Llama-3.1-8B-GGUF/resolve/main/Hermes-3-Llama-3.1-8B.Q8_0.gguf?download=true

{'general.name': 'Hermes 3 Llama 3.1 8B'

'general.architecture': 'llama'

'general.type': 'model'

'general.organization': 'NousResearch'

'llama.context_length': '131072'

'llama.block_count': '32'

'general.basename': 'Hermes-3-Llama-3.1'

'general.size_label': '8B'

'llama.embedding_length': '4096'

'llama.feed_forward_length': '14336'

'llama.attention.head_count': '32'

'tokenizer.ggml.eos_token_id': '128040'

'general.file_type': '7'

'llama.attention.head_count_kv': '8'

'llama.rope.freq_base': '500000.000000'

'llama.attention.layer_norm_rms_epsilon': '0.000010'

'llama.vocab_size': '128256'

'llama.rope.dimension_count': '128'

'tokenizer.ggml.model': 'gpt2'

'tokenizer.ggml.pre': 'llama-bpe'

'general.quantization_version': '2'

'tokenizer.ggml.bos_token_id': '128000'

'tokenizer.ggml.padding_token_id': '128040'

'tokenizer.chat_template': "{% if not add_generation_prompt is defined %}{% set add_generation_prompt = false %}{% endif %}{% for message in messages %}{{'<|im_start|>' + message['role'] + 'n' + message['content'] + '<|im_end|>' + 'n'}}{% endfor %}{% if add_generation_prompt %}{{ '<|im_start|>assistantn' }}{% endif %}"}

✅ Displays model's token vocabulary when selected.

? Displays model's vocabulary when Learning Mode and Show Model Vocabulary are selected simultaneously.

Example (from token 2000 to 2100):

Model: https://huggingface.co/Triangle104/Mistral-7B-Instruct-v0.3-Q5_K_M-GGUF/resolve/main/mistral-7b-instruct-v0.3-q5_k_m.gguf?download=true

2000) 'ility'

2001) ' é'

2002) ' er'

2003) ' does'

2004) ' here'

2005) 'the'

2006) 'ures'

2007) ' %'

2008) 'min'

2009) ' null'

2010) 'rap'

2011) '")'

2012) 'rr'

2013) 'List'

2014) 'right'

2015) ' User'

2016) 'UL'

2017) 'ational'

2018) ' being'

2019) 'AN'

2020) 'sk'

2021) ' car'

2022) 'ole'

2023) ' dist'

2024) 'plic'

2025) 'ollow'

2026) ' pres'

2027) ' such'

2028) 'ream'

2029) 'ince'

2030) 'gan'

2031) ' For'

2032) '":'

2033) 'son'

2034) 'rivate'

2035) ' years'

2036) ' serv'

2037) ' made'

2038) 'def'

2039) ';r'

2040) ' gl'

2041) ' bel'

2042) ' list'

2043) ' cor'

2044) ' det'

2045) 'ception'

2046) 'egin'

2047) ' б'

2048) ' char'

2049) 'trans'

2050) ' fam'

2051) ' !='

2052) 'ouse'

2053) ' dec'

2054) 'ica'

2055) ' many'

2056) 'aking'

2057) ' à'

2058) ' sim'

2059) 'ages'

2060) 'uff'

2061) 'ased'

2062) 'man'

2063) ' Sh'

2064) 'iet'

2065) 'irect'

2066) ' Re'

2067) ' differ'

2068) ' find'

2069) 'ethod'

2070) ' r'

2071) 'ines'

2072) ' inv'

2073) ' point'

2074) ' They'

2075) ' used'

2076) 'ctions'

2077) ' still'

2078) 'ió'

2079) 'ined'

2080) ' while'

2081) 'It'

2082) 'ember'

2083) ' say'

2084) ' help'

2085) ' cre'

2086) ' x'

2087) ' Tr'

2088) 'ument'

2089) ' sk'

2090) 'ought'

2091) 'ually'

2092) 'message'

2093) ' Con'

2094) ' mon'

2095) 'ared'

2096) 'work'

2097) '):'

2098) 'ister'

2099) 'arn'

2100) 'ized'

?️ Manually removes the model from memory, freeing up space.

When a new model is selected and the Start chat button is pressed, the previous model is removed from memory automatically.

? Allows you to select a PDF file located on your computer, extracting the text from each page and inserting it in the User prompt field.

The text on each page is separated by $$$ to allow the model to parse it separately.

Click the button to select the PDF file.

? Allows the selection of a PDF file located on the computer, extracting its full text and inserting it in the User prompt field.

At the end, $$$ is inserted to allow the model to fully analyze the entire text.

Click the button to select the PDF file.

Fills System prompt field with a prompt saved in a TXT file.

Click the button to select the TXT file.

Fills Assistant Previous Response field with a prompt saved in a TXT file.

Click the button to select the TXT file.

Fills User prompt field with a list of prompt saved in a TXT file.

Click the button to select the TXT file.

Copy a Hugging Face download model URL (Files and versions tab) and extract all links to .gguf files.

You can paste all the copied links into the Dowonload models for testing field at once.

Fills Download model for testing field with a list of model URLs saved in a TXT file.

Click the button to select the TXT file.

Saves the User prompt in a TXT file.

Click the button to select the directory where to save the file.

Opens DB Browser if its directory is into Samantha's directory.

To install DB Browser:

Download the .zip (no installer) version.

Unpack it with its original name (it will create a directory like DB.Browser.for.SQLite-v3.13.1-win64 ).

Rename the DB Browser directory to db_browser .

Finally, move the db_browser directory to Samantha's directory: ..samantha-ia-maindb_browser

Opens D-Tale library interface in a new browser tab with a example dataset (titanic.csv).

Web Client for Visualizing Pandas Objects

D-Tale is the combination of a Flask back-end and a React front-end to bring you an easy way to view & analyze Pandas data structures. It integrates seamlessly with ipython notebooks & python/ipython terminals. Currently this tool supports such Pandas objects as DataFrame, Series, MultiIndex, DatetimeIndex & RangeIndex. D-Tale Project

Live Demo

A Windows terminal will also open.

Opens Auto-Py-To-Exe library, a graphical user interface to Pyinstaller.

概要

Auto-Py-To-Exe is a user-friendly desktop application that provides a graphical interface for converting Python scripts into standalone executable files (.exe). It serves as a wrapper around PyInstaller, making the conversion process more accessible to users who prefer not to work directly with command-line interfaces.

This tool is particularly valuable for Python developers who need to distribute their applications to users who don't have Python installed or prefer standalone executables. Its combination of simplicity and power makes it an excellent choice for both beginners and experienced developers.

To run the .exe file, right-click inside the directory where the file is located and select the Open in terminal option. Then, type the file name in the terminal and press Enter . This procedure allows you to identify any file execution errors.

Basic Usage

When you copy a Python scrip using CTRL + C or Copy Python Code button and run it by pressing Run Code button, the code is saved as temp.py . Select this file to create a .exe file.

You can use the this procedure with any code, even copyied from the internet.

Common Workflow

Script Selection:

構成:

変換:

テスト:

ベストプラクティス

発達:

変換:

分布:

一般的な問題と解決策

Missing Dependencies:

Path Issues:

パフォーマンス:

利点

制限

Security Considerations

Closes all instances created with the Python interpreter of the jyupyterlab virtual environment.

Use this button to force close running modules that block the Python interpreter, such as servers.

Default Settings:

Samantha's initial settings is deterministic . As a rule, this means that for the same prompt, you'll get always the same answer, even when applying penalties to exclude repeated tokens (penalties does not affect the model deterministic behavior).

? Deterministic settings (default):

Deterministic settings can be used to assess training database biases.

Some models tend to loop (repeat the same text indefinitely) when using highly deterministic adjustments, selecting tokens with the highest probability score. Others may generate the first response with different content from subsequent ones. In this case, to always get the same response, activate the Reset model checkbox.

In turn, for stochastic behavior, suited for creative content, in which model selects tokens with different probability scores, adjust the hyperparameters accordingly.

? Stochastic settings (example):

You can use the Learning Mode to monitor and adjust the degree of determinism/randomness of the responses generated by the model.

Displays the history of responses generated by the model.

The text displayed in this field is editable. But changes made by the user do not affect the text actually generated by the model and stored internally in the interface, which remains accessible through the buttons.

You can use this field to type, paste, edit, copy, and run Python code, as if it were an IDE.

➕ Adds the next token to the model response when Learning Mode is activated.

Copies Python code blocks generated by the model, present in the last response (not the pasted code manually).

You can press this button during response generation to copy the already generated text.

The code must be enclosed in triple backticks:

``` python

(コード)

```

Library installation code blocks starting with pip or !pip are ignored.

To manually run the Python code generated by the model, you must first copy it to the clipboard using this button.

This button executes any Python code copied to the clipboard that uses the libraries installed in the jupterlab virtual environment. Just select the code (even outside of Samantha), press CTRL + C and click this button.

Use it in combination with Copy Python Code button.

To run Python code, you don't need to load the model.

The pip and !pip instructions lines present in the code are ignored.

Whenever Python code returns a value other than '' (empty string), an HTML pop-up window opens to display the returned content.

Copies the text generated by the model in your last response.

You can press this button during response generation to copy the already generated text.

Copies the entire text generated by the model in the current chat session.

You can press this button during response generation to copy the already generated text.

Opens Jupyterlab integrated development environment (IDE) in a new browser tab.

Jupyterlab is installed in a separate Python virtual environment with many libraries available, such as:

For a complete list of all Python available libraries, use a prompt like "create a Python code that prints all modules installed using pkgutil library. Separate each module with <br> tag." and press Run code button. The result will be displayed in a browser popup.

Displays the model's latest response in a HTML pop-up browser window.

Also displays the output of the Python interpreter when it is other than '' (empty string).

The first time the button is pressed, the browser takes a few seconds to load (default: Microsoft Edge).

Displays the all the current chat session responses in a HTML pop-up browser window.

The first time the button is pressed, it takes a few seconds for the window to appear.

When enabled when starting Samantha, this button initiates interaction with the interface through voice.

Interface to convert texts and responses to audio without using the internet.

Reads the text in the User prompt field aloud, using the computer's SAPI5 voice selected by the Voice Selection drop-down list.

Reads the text of the model's last response aloud, using the SAPI5 computer voice selected in the Voice Selection drop-down list.

Reads all the chat session responses aloud, using the SAPI5 computer voice selected in the Voice Selection drop-down list.

List of links to the .gguf model search result on the Hugging Face website, separated by families (llama, gemma, phi, etc.)

Samantha interface operation tips.

For a complete understanding of how the interface works, see the Samantha project on Github.

A collection of user prompts.

Just click to select a prompt and send it to the User prompt field.

You can change this list of prompts by editing the user_prompts.txt file. Samantha must be restarted to display the changes.

A collection of system prompts.

Just click to select a prompt and send it to the System prompt field.

You can change this list of prompts by editing the system_prompts.txt file. Samantha must be restarted to display the changes.

Prompt engineering guides:

? Butterfly Effect: Small changes in the input text (even a single prompt or its position) can lead to substantial changes (syntatic and semantic) in the output generated by the model.

?️ Prompt Tuning: A technique through which the user tests variations of the prompt text (input tokens) in order to generate variations in the output text (scoring of the output tokens) that meet their expectations.

Whatever you don't specify, if it required to generate the response, the model will decide for you.

Simply deleting a word from the prompt or replacing it with its synonym can lead the model to produce the desired answer.

The best response generated by the model does not necessarily result from the most complete prompt text, but rather from the result of a (non-provable) mathematical operation that combines all the prompt tokens.

Using deterministic adjustment and Feedback Mode , the same sequence of prompts will always generate the same sequence of responses. The use of deterministic adjustment allows the reproducibility of the results.

It all comes down to a trial and error process that depends on:

Examples:

Settings: Deterministic, with repeat_penalty = 1.5.

Compare the models' answers in relation to syntactic (different words) and semantic (different ideas) diversities.

Prompt 1: Who are you?

==========================================

1) gemma-2-9b-it-Q4_K_M.gguf

==========================================

I am Gemma, an open-weights AI assistant. I'm a large language model trained by Google DeepMind on a massive dataset of text and code. My purpose is to help users by understanding their requests and providing helpful, informative responses.

Here are some key things to know about me:

* **Open-Weights:** This means my weights are publicly accessible. Anyone can see how I work!

* **Text-Based:** I communicate solely through text. I cannot generate images, sound or videos.

* **Knowledge Cut-Off:** My knowledge is based on the data I was trained on, which has a specific cut-off point. I don't have access to real-time information or Google Search.

I am still under development but learning new things every day!

---------- (161 tokens, 58.4s)

Prompt 2: Who are you (without question mark)

==========================================

1) gemma-2-9b-it-Q4_K_M.gguf

==========================================

I am Gemma, an open-weights AI assistant. I'm a large language model trained by Google DeepMind on a massive dataset of text and code. My purpose is to help users by understanding their requests and providing helpful, informative responses.

Here are some key things to know about me:

* **Open-Weights:** This means my weights (the parameters that control how I work) are publicly available. Anyone can access them, study them, or even build upon them.

* **Text-Based:** I communicate and generate text only. I cannot interact with the world in any physical way, nor can I process images, audio, or video.

My abilities include:

* Generating creative content (stories, poems, articles)

* Answering your questions based on my training data

* Summarizing factual topics

* Translating languages

* Writing different kinds of creative text formats

Keep in mind that while I strive to be helpful and informative, I am still under development. My knowledge is limited to the data I was trained on, which has a cutoff point. I can sometimes make mistakes or provide incomplete information.

---------- (229 tokens, 83.6s)

Prompt 3: Who are you. (replacing "?" by ".")

==========================================

1) gemma-2-9b-it-Q4_K_M.gguf

==========================================

I am Gemma, an open-weights AI assistant developed by the Gemma team at Google DeepMind.

Think of me as a language expert! I can understand and generate text, which means I can answer your questions, write stories, summarize information, translate languages, and much more. However, remember that I'm just a computer program – I don't have feelings or personal experiences like humans do. My knowledge comes from the massive amount of text data I was trained on.

What can I help you with today?

---------- (106 tokens, 39.0s)