Penulis: Richard Paul Hudson, Ledakan AI

Managermanager.nlpOntologySupervisedTopicTrainingBasis (dikembalikan dari Manager.get_supervised_topic_training_basis() )SupervisedTopicModelTrainer (dikembalikan dari SupervisedTopicTrainingBasis.train() )SupervisedTopicClassifier (dikembalikan dari SupervisedTopicModelTrainer.classifier() dan Manager.deserialize_supervised_topic_classifier() )Manager.match()Manager.topic_match_documents_against()Holmes adalah perpustakaan Python 3 (v3.6 - V3.10) yang berjalan di atas Spacy (v3.1 - V3.3) yang mendukung sejumlah kasus penggunaan yang melibatkan ekstraksi informasi dari teks -teks Inggris dan Jerman. Dalam semua kasus penggunaan, ekstraksi informasi didasarkan pada menganalisis hubungan semantik yang diungkapkan oleh bagian komponen dari setiap kalimat:

Dalam kasing chatbot, sistem dikonfigurasi menggunakan satu atau lebih frasa pencarian . Holmes kemudian mencari struktur yang artinya sesuai dengan frasa pencarian ini dalam dokumen yang dicari, yang dalam hal ini sesuai dengan potongan teks atau pidato individual yang dimasukkan oleh pengguna akhir. Dalam kecocokan, setiap kata dengan maknanya sendiri (yaitu yang tidak hanya memenuhi fungsi tata bahasa) dalam frasa pencarian sesuai dengan satu atau lebih kata -kata tersebut dalam dokumen. Kedua fakta bahwa frasa pencarian dicocokkan dan informasi terstruktur apa pun yang dapat digunakan frase pencarian untuk menggerakkan chatbot.

Kasing penggunaan ekstraksi struktural menggunakan teknologi pencocokan struktural yang persis sama dengan casing penggunaan chatbot, tetapi pencarian terjadi sehubungan dengan dokumen atau dokumen yang sudah ada sebelumnya yang biasanya lebih lama daripada cuplikan yang dianalisis dalam kasus penggunaan chatbot, dan tujuannya adalah untuk mengekstrak dan menyimpan informasi terstruktur. Misalnya, serangkaian artikel bisnis dapat dicari untuk menemukan semua tempat di mana satu perusahaan dikatakan berencana untuk mengambil alih perusahaan kedua. Identitas perusahaan yang bersangkutan kemudian dapat disimpan dalam database.

Kasus Penggunaan Pencocokan Topik bertujuan untuk menemukan bagian-bagian dalam dokumen atau dokumen yang maknanya dekat dengan dokumen lain, yang mengambil peran dokumen kueri , atau dengan frasa kueri yang dimasukkan ad-hoc oleh pengguna. Holmes mengekstrak sejumlah phaselet kecil dari frasa kueri atau dokumen kueri, mencocokkan dokumen yang dicari terhadap setiap phraselet, dan mengacaukan hasilnya untuk menemukan bagian yang paling relevan dalam dokumen. Karena tidak ada persyaratan ketat bahwa setiap kata dengan maknanya sendiri dalam dokumen kueri cocok dengan kata atau kata -kata tertentu dalam dokumen yang dicari, lebih banyak kecocokan ditemukan daripada dalam kasus penggunaan ekstraksi struktural, tetapi kecocokan tidak berisi informasi terstruktur yang dapat digunakan dalam pemrosesan selanjutnya. Kasus penggunaan yang cocok topik ditunjukkan oleh situs web yang memungkinkan pencarian dalam enam novel Charles Dickens (untuk bahasa Inggris) dan sekitar 350 cerita tradisional (untuk bahasa Jerman).

Kasus penggunaan klasifikasi dokumen yang diawasi menggunakan data pelatihan untuk mempelajari classifier yang menetapkan satu atau lebih label klasifikasi ke dokumen baru berdasarkan tentang apa mereka. Ini mengklasifikasikan dokumen baru dengan mencocokkannya dengan phraselets yang diekstraksi dari dokumen pelatihan dengan cara yang sama seperti fraselet diekstraksi dari dokumen kueri dalam kasus penggunaan topik yang cocok. Teknik ini diilhami oleh algoritma klasifikasi berbasis tas-word yang menggunakan n-gram, tetapi bertujuan untuk memperoleh n-gram yang kata-kata komponennya terkait secara semantik daripada yang kebetulan adalah tetangga dalam representasi permukaan suatu bahasa.

Dalam keempat kasus penggunaan, kata -kata individual dicocokkan menggunakan sejumlah strategi. Untuk mengetahui apakah dua struktur tata bahasa yang berisi kata -kata yang cocok secara individual sesuai secara logis dan merupakan kecocokan, Holmes mengubah informasi parse sintaksis yang disediakan oleh perpustakaan Spacy menjadi struktur semantik yang memungkinkan teks dibandingkan dengan menggunakan logika predikat. Sebagai pengguna Holmes, Anda tidak perlu memahami seluk -beluk cara kerjanya, meskipun ada beberapa tips penting seputar menulis frasa pencarian yang efektif untuk chatbot dan kasus penggunaan ekstraksi struktural yang harus Anda coba dan ambil papan.

Holmes bertujuan untuk menawarkan solusi generalis yang dapat digunakan lebih atau kurang di luar kotak dengan tuning, penyesuaian atau pelatihan yang relatif sedikit dan yang dengan cepat berlaku untuk berbagai kasus penggunaan. Pada intinya terletak sistem yang logis, terprogram, berbasis aturan yang menjelaskan bagaimana representasi sintaksis dalam setiap bahasa mengekspresikan hubungan semantik. Meskipun kasus penggunaan klasifikasi dokumen yang diawasi memang menggabungkan jaringan saraf dan meskipun perpustakaan spacy di mana Holmes dibangun sendiri telah dilatih sebelumnya menggunakan pembelajaran mesin, pada dasarnya berbasis aturan dari Holmes berarti bahwa chatbot, ekstraksi struktural dan topik yang sesuai dengan data yang tidak ada dalam pelatihan yang disediakan untuk pelatihan yang tidak sesuai dengan pelatihan yang tidak ada dalam hal yang diawasi, yang merupakan pelatihan yang diawasi dengan banyak hal yang diawasi dengan banyak hal yang diawasi dengan sedikit. masalah dunia nyata.

Holmes memiliki sejarah yang panjang dan kompleks dan kami sekarang dapat menerbitkannya di bawah lisensi MIT berkat niat baik dan keterbukaan beberapa perusahaan. Saya, Richard Hudson, menulis versi hingga 3.0.0 saat bekerja di MSG Systems, konsultasi perangkat lunak internasional besar yang berbasis di dekat Munich. Pada akhir 2021, saya mengubah majikan dan sekarang bekerja untuk ledakan, pencipta Spacy dan Prodigy. Unsur -unsur Perpustakaan Holmes dicakup oleh paten AS yang saya sendiri tulis pada awal 2000 -an saat bekerja di sebuah startup bernama Definiens yang sejak itu telah diakuisisi oleh AstraZeneca. Dengan izin baik dari Sistem AstraZeneca dan MSG, saya sekarang memelihara Holmes saat ledakan dan dapat menawarkannya untuk pertama kalinya di bawah lisensi permisif: siapa pun sekarang dapat menggunakan Holmes berdasarkan ketentuan lisensi MIT tanpa harus khawatir tentang paten.

Perpustakaan awalnya dikembangkan di MSG Systems, tetapi sekarang sedang dipelihara di Explosion AI. Harap arahkan masalah atau diskusi baru apa pun ke repositori ledakan.

Jika Anda belum memiliki Python 3 dan Pip di mesin Anda, Anda harus menginstalnya sebelum memasang Holmes.

Instal Holmes menggunakan perintah berikut:

Linux:

pip3 install holmes-extractor

Windows:

pip install holmes-extractor

Untuk meningkatkan dari versi Holmes sebelumnya, mengeluarkan perintah berikut dan kemudian menerbitkan kembali perintah untuk mengunduh model Spacy dan Coreferee untuk memastikan Anda memiliki versi yang benar dari mereka:

Linux:

pip3 install --upgrade holmes-extractor

Windows:

pip install --upgrade holmes-extractor

Jika Anda ingin menggunakan contoh dan tes, klon kode sumber menggunakan

git clone https://github.com/explosion/holmes-extractor

Jika Anda ingin bereksperimen dengan mengubah kode sumber, Anda dapat mengganti kode yang diinstal dengan memulai python (ketik python3 (linux) atau python (windows)) di direktori induk dari direktori di mana kode modul holmes_extractor yang Anda ubah. Jika Anda telah memeriksa Holmes dari git, ini akan menjadi Direktori holmes-extractor .

Jika Anda ingin menghapus Holmes lagi, ini dicapai dengan menghapus file yang diinstal langsung dari sistem file. Ini dapat ditemukan dengan mengeluarkan yang berikut dari prompt perintah python dimulai dari direktori selain direktori induk holmes_extractor :

import holmes_extractor

print(holmes_extractor.__file__)

Perpustakaan Spacy dan Coreferee yang dibangun Holmes membutuhkan model khusus bahasa yang harus diunduh secara terpisah sebelum Holmes dapat digunakan:

Linux/Bahasa Inggris:

python3 -m spacy download en_core_web_trf

python3 -m spacy download en_core_web_lg

python3 -m coreferee install en

Linux/Jerman:

pip3 install spacy-lookups-data # (from spaCy 3.3 onwards)

python3 -m spacy download de_core_news_lg

python3 -m coreferee install de

Windows/Bahasa Inggris:

python -m spacy download en_core_web_trf

python -m spacy download en_core_web_lg

python -m coreferee install en

Windows/Jerman:

pip install spacy-lookups-data # (from spaCy 3.3 onwards)

python -m spacy download de_core_news_lg

python -m coreferee install de

Dan jika Anda berencana untuk menjalankan tes regresi:

Linux:

python3 -m spacy download en_core_web_sm

Windows:

python -m spacy download en_core_web_sm

Anda menentukan model spacy untuk digunakan Holmes saat Anda instantiate kelas fasad manajer. en_core_web_trf dan de_core_web_lg adalah model yang telah ditemukan masing -masing menghasilkan hasil terbaik untuk bahasa Inggris dan Jerman. Karena en_core_web_trf tidak memiliki kata-kata vektor sendiri, tetapi Holmes membutuhkan vektor kata untuk penyatuan berbasis penyatuan, en_core_web_lg model dimuat sebagai sumber vektor setiap kali en_core_web_trf ditentukan untuk kelas manajer sebagai model utama.

Model en_core_web_trf membutuhkan sumber daya yang cukup lebih dari model lainnya; Dalam siutasi di mana sumber daya langka, mungkin kompromi yang masuk akal untuk menggunakan en_core_web_lg sebagai model utama sebagai gantinya.

Cara terbaik untuk mengintegrasikan Holmes ke dalam lingkungan non-python adalah dengan membungkusnya sebagai layanan HTTP yang tenang dan untuk menggunakannya sebagai layanan mikro. Lihat di sini untuk contoh.

Karena Holmes melakukan analisis yang kompleks dan cerdas, tidak dapat dihindari bahwa ia membutuhkan lebih banyak sumber daya perangkat keras daripada kerangka pencarian yang lebih tradisional. Kasus penggunaan yang melibatkan dokumen pemuatan - ekstraksi struktural dan pencocokan topik - paling segera berlaku untuk korpora besar tetapi tidak masif (misalnya semua dokumen milik organisasi tertentu, semua paten pada topik tertentu, semua buku oleh penulis tertentu). Untuk alasan biaya, Holmes tidak akan menjadi alat yang tepat untuk menganalisis konten seluruh internet!

Yang mengatakan, Holmes dapat diskalakan secara vertikal dan horizontal. Dengan perangkat keras yang cukup, kedua kasus penggunaan ini dapat diterapkan pada jumlah dokumen yang pada dasarnya tidak terbatas dengan menjalankan Holmes pada beberapa mesin, memproses serangkaian dokumen yang berbeda pada masing -masing dan menggabungkan hasilnya. Perhatikan bahwa strategi ini sudah digunakan untuk mendistribusikan pencocokan di antara beberapa core pada satu mesin: kelas manajer memulai sejumlah proses pekerja dan mendistribusikan dokumen terdaftar di antara mereka.

Holmes memegang dokumen yang dimuat dalam memori, yang terkait dengan penggunaan yang dimaksudkan dengan korpora besar tetapi tidak masif. Kinerja pemuatan dokumen, ekstraksi struktural, dan topik yang cocok dengan semua degradasi jika sistem operasi harus menukar halaman memori ke penyimpanan sekunder, karena Holmes dapat memerlukan memori dari berbagai halaman untuk diatasi saat memproses satu kalimat. Ini berarti penting untuk memasok RAM yang cukup pada setiap mesin untuk menahan semua dokumen yang dimuat.

Harap perhatikan komentar di atas tentang persyaratan sumber daya relatif dari berbagai model.

Kasus penggunaan termudah yang dapat digunakan untuk mendapatkan ide dasar cepat tentang cara kerja Holmes adalah casing chatbot .

Di sini satu atau lebih frasa pencarian didefinisikan ke Holmes terlebih dahulu, dan dokumen yang dicari adalah kalimat atau paragraf pendek yang diketik secara interaktif oleh pengguna akhir. Dalam pengaturan kehidupan nyata, informasi yang diekstraksi akan digunakan untuk menentukan aliran interaksi dengan pengguna akhir. Untuk tujuan pengujian dan demonstrasi, ada konsol yang menampilkan temuan yang cocok secara interaktif. Ini dapat dengan mudah dan cepat dimulai dari baris perintah Python (yang sendiri dimulai dari prompt sistem operasi dengan mengetik python3 (linux) atau python (windows)) atau dari dalam buku catatan jupyter.

Cuplikan kode berikut dapat dimasukkan baris untuk baris ke baris perintah Python, ke dalam buku catatan Jupyter atau ke dalam IDE. Ini mencatat fakta bahwa Anda tertarik pada kalimat tentang anjing besar mengejar kucing dan memulai konsol chatbot demonstrasi:

Bahasa inggris:

import holmes_extractor as holmes

holmes_manager = holmes.Manager(model='en_core_web_lg', number_of_workers=1)

holmes_manager.register_search_phrase('A big dog chases a cat')

holmes_manager.start_chatbot_mode_console()

Jerman:

import holmes_extractor as holmes

holmes_manager = holmes.Manager(model='de_core_news_lg', number_of_workers=1)

holmes_manager.register_search_phrase('Ein großer Hund jagt eine Katze')

holmes_manager.start_chatbot_mode_console()

Jika Anda sekarang memasukkan kalimat yang sesuai dengan frasa pencarian, konsol akan menampilkan kecocokan:

Bahasa inggris:

Ready for input

A big dog chased a cat

Matched search phrase with text 'A big dog chases a cat':

'big'->'big' (Matches BIG directly); 'A big dog'->'dog' (Matches DOG directly); 'chased'->'chase' (Matches CHASE directly); 'a cat'->'cat' (Matches CAT directly)

Jerman:

Ready for input

Ein großer Hund jagte eine Katze

Matched search phrase 'Ein großer Hund jagt eine Katze':

'großer'->'groß' (Matches GROSS directly); 'Ein großer Hund'->'hund' (Matches HUND directly); 'jagte'->'jagen' (Matches JAGEN directly); 'eine Katze'->'katze' (Matches KATZE directly)

Ini bisa dengan mudah dicapai dengan algoritma pencocokan sederhana, jadi ketik beberapa kalimat yang lebih kompleks untuk meyakinkan diri sendiri bahwa Holmes benar -benar menggenggamnya dan bahwa pertandingan masih dikembalikan:

Bahasa inggris:

The big dog would not stop chasing the cat

The big dog who was tired chased the cat

The cat was chased by the big dog

The cat always used to be chased by the big dog

The big dog was going to chase the cat

The big dog decided to chase the cat

The cat was afraid of being chased by the big dog

I saw a cat-chasing big dog

The cat the big dog chased was scared

The big dog chasing the cat was a problem

There was a big dog that was chasing a cat

The cat chase by the big dog

There was a big dog and it was chasing a cat.

I saw a big dog. My cat was afraid of being chased by the dog.

There was a big dog. His name was Fido. He was chasing my cat.

A dog appeared. It was chasing a cat. It was very big.

The cat sneaked back into our lounge because a big dog had been chasing her.

Our big dog was excited because he had been chasing a cat.

Jerman:

Der große Hund hat die Katze ständig gejagt

Der große Hund, der müde war, jagte die Katze

Die Katze wurde vom großen Hund gejagt

Die Katze wurde immer wieder durch den großen Hund gejagt

Der große Hund wollte die Katze jagen

Der große Hund entschied sich, die Katze zu jagen

Die Katze, die der große Hund gejagt hatte, hatte Angst

Dass der große Hund die Katze jagte, war ein Problem

Es gab einen großen Hund, der eine Katze jagte

Die Katzenjagd durch den großen Hund

Es gab einmal einen großen Hund, und er jagte eine Katze

Es gab einen großen Hund. Er hieß Fido. Er jagte meine Katze

Es erschien ein Hund. Er jagte eine Katze. Er war sehr groß.

Die Katze schlich sich in unser Wohnzimmer zurück, weil ein großer Hund sie draußen gejagt hatte

Unser großer Hund war aufgeregt, weil er eine Katze gejagt hatte

Demonstrasi tidak lengkap tanpa mencoba kalimat lain yang berisi kata -kata yang sama tetapi tidak mengekspresikan ide yang sama dan mengamati bahwa mereka tidak cocok:

Bahasa inggris:

The dog chased a big cat

The big dog and the cat chased about

The big dog chased a mouse but the cat was tired

The big dog always used to be chased by the cat

The big dog the cat chased was scared

Our big dog was upset because he had been chased by a cat.

The dog chase of the big cat

Jerman:

Der Hund jagte eine große Katze

Die Katze jagte den großen Hund

Der große Hund und die Katze jagten

Der große Hund jagte eine Maus aber die Katze war müde

Der große Hund wurde ständig von der Katze gejagt

Der große Hund entschloss sich, von der Katze gejagt zu werden

Die Hundejagd durch den große Katze

Dalam contoh-contoh di atas, Holmes telah mencocokkan berbagai struktur tingkat kalimat yang berbeda yang memiliki makna yang sama, tetapi bentuk dasar dari tiga kata dalam dokumen yang cocok selalu sama dengan tiga kata dalam frasa pencarian. Holmes menyediakan beberapa strategi lebih lanjut untuk dicocokkan pada tingkat kata individual. Dalam kombinasi dengan kemampuan Holmes untuk mencocokkan struktur kalimat yang berbeda, ini dapat memungkinkan frasa pencarian untuk dicocokkan dengan kalimat dokumen yang berbagi maknanya bahkan di mana keduanya tidak berbagi kata dan secara tata bahasa sama sekali berbeda.

Salah satu dari strategi pencocokan kata tambahan ini adalah pencocokan-entitas bernama: kata-kata khusus dapat dimasukkan dalam frasa pencarian yang cocok dengan seluruh kelas nama seperti orang atau tempat. Keluar dari konsol dengan mengetik exit , lalu daftarkan frasa pencarian kedua dan restart konsol:

Bahasa inggris:

holmes_manager.register_search_phrase('An ENTITYPERSON goes into town')

holmes_manager.start_chatbot_mode_console()

Jerman:

holmes_manager.register_search_phrase('Ein ENTITYPER geht in die Stadt')

holmes_manager.start_chatbot_mode_console()

Anda sekarang telah mendaftarkan minat Anda pada orang yang masuk ke kota dan dapat memasukkan kalimat yang sesuai ke dalam konsol:

Bahasa inggris:

Ready for input

I met Richard Hudson and John Doe last week. They didn't want to go into town.

Matched search phrase with text 'An ENTITYPERSON goes into town'; negated; uncertain; involves coreference:

'Richard Hudson'->'ENTITYPERSON' (Has an entity label matching ENTITYPERSON); 'go'->'go' (Matches GO directly); 'into'->'into' (Matches INTO directly); 'town'->'town' (Matches TOWN directly)

Matched search phrase with text 'An ENTITYPERSON goes into town'; negated; uncertain; involves coreference:

'John Doe'->'ENTITYPERSON' (Has an entity label matching ENTITYPERSON); 'go'->'go' (Matches GO directly); 'into'->'into' (Matches INTO directly); 'town'->'town' (Matches TOWN directly)

Jerman:

Ready for input

Letzte Woche sah ich Richard Hudson und Max Mustermann. Sie wollten nicht mehr in die Stadt gehen.

Matched search phrase with text 'Ein ENTITYPER geht in die Stadt'; negated; uncertain; involves coreference:

'Richard Hudson'->'ENTITYPER' (Has an entity label matching ENTITYPER); 'gehen'->'gehen' (Matches GEHEN directly); 'in'->'in' (Matches IN directly); 'die Stadt'->'stadt' (Matches STADT directly)

Matched search phrase with text 'Ein ENTITYPER geht in die Stadt'; negated; uncertain; involves coreference:

'Max Mustermann'->'ENTITYPER' (Has an entity label matching ENTITYPER); 'gehen'->'gehen' (Matches GEHEN directly); 'in'->'in' (Matches IN directly); 'die Stadt'->'stadt' (Matches STADT directly)

Di masing -masing dari dua bahasa, contoh terakhir ini menunjukkan beberapa fitur lebih lanjut dari Holmes:

Untuk lebih banyak contoh, silakan lihat Bagian 5.

Strategi berikut diimplementasikan dengan satu modul Python per strategi. Meskipun perpustakaan standar tidak mendukung penambahan strategi yang dipesan lebih dahulu melalui kelas manajer, akan relatif mudah bagi siapa pun dengan keterampilan pemrograman Python untuk mengubah kode untuk mengaktifkan ini.

word_match.type=='direct' )Pencocokan langsung antara kata -kata frasa pencarian dan kata -kata dokumen selalu aktif. Strategi ini terutama bergantung pada bentuk kata -kata batang yang cocok, misalnya pencocokan pembelian bahasa Inggris dan anak untuk dibeli dan anak -anak , Steigen Jerman dan baik untuk Stieg dan Kinder . Namun, untuk meningkatkan kemungkinan pencocokan langsung bekerja ketika parser memberikan bentuk batang yang salah untuk sebuah kata, bentuk-bentuk teks mentah dari kata-kata pencarian dan kata-kata dokumen juga dipertimbangkan selama pencocokan langsung.

word_match.type=='derivation' ) Pencocokan berbasis derivasi melibatkan kata-kata yang berbeda tetapi terkait yang biasanya termasuk dalam kelas kata yang berbeda, misalnya penilaian dan penilaian bahasa Inggris, Jagen dan Jagd Jerman. Ini aktif secara default tetapi dapat dimatikan menggunakan parameter analyze_derivational_morphology , yang ditetapkan saat instantiasi kelas manajer.

word_match.type=='entity' )Pencocokan Entrytity bernama diaktifkan dengan memasukkan pengidentifikasi entitas khusus yang dinamai pada titik yang diinginkan dalam frasa pencarian sebagai pengganti kata benda, misalnya

Seorang entitas pergi ke kota (bahasa Inggris)

Ein Entityper Geht di Die Stadt (Jerman).

Identifer yang didukung-namanya bergantung langsung pada informasi namanya-entitas yang disediakan oleh model spacy untuk setiap bahasa (deskripsi yang disalin dari versi sebelumnya dari dokumentasi spacy):

Bahasa inggris:

| Pengidentifikasi | Arti |

|---|---|

| Entitynoun | Frasa kata benda apa pun. |

| EntityPerson | Orang, termasuk fiksi. |

| Entitynorp | Kebangsaan atau kelompok agama atau politik. |

| Entityfac | Bangunan, bandara, jalan raya, jembatan, dll. |

| Entityorg | Perusahaan, lembaga, lembaga, dll. |

| Entitygpe | Negara, kota, negara bagian. |

| EntityLoc | Lokasi non-GPE, pegunungan, badan air. |

| EntityProduct | Objek, kendaraan, makanan, dll. (Bukan layanan.) |

| EntityEvent | Bernama Badai, Pertempuran, Perang, Acara Olahraga, dll. |

| Entitywork_of_art | Judul buku, lagu, dll. |

| EntityLaw | Dokumen bernama dibuat menjadi undang -undang. |

| EntityLanguage | Bahasa apa pun yang disebutkan. |

| EntityDate | Tanggal atau periode absolut atau relatif. |

| Entitytime | Kali lebih kecil dari sehari. |

| EntityPercent | Persentase, termasuk "%". |

| EntityMoney | Nilai moneter, termasuk unit. |

| EntityQuantity | Pengukuran, seperti berat atau jarak. |

| Entityordinal | "Pertama", "kedua", dll. |

| Entitycardinal | Angka yang tidak termasuk di bawah jenis lain. |

Jerman:

| Pengidentifikasi | Arti |

|---|---|

| Entitynoun | Frasa kata benda apa pun. |

| Entityper | Orang atau keluarga bernama. |

| EntityLoc | Nama lokasi yang didefinisikan secara politis atau geografis (kota, provinsi, negara, daerah internasional, badan air, pegunungan). |

| Entityorg | Dinamai perusahaan organisasi, pemerintah, atau organisasi lainnya. |

| EntityMisc | Lain -lain entitas, misalnya acara, kebangsaan, produk atau karya seni. |

Kami telah menambahkan ENTITYNOUN ke pengidentifikasi entitas bernama asli. Karena cocok dengan frasa kata benda apa pun, itu berperilaku mirip dengan kata ganti generik. Perbedaannya adalah bahwa ENTITYNOUN harus mencocokkan frasa kata benda tertentu dalam dokumen dan bahwa frasa kata benda khusus ini diekstraksi dan tersedia untuk pemrosesan lebih lanjut. ENTITYNOUN tidak didukung dalam kasus penggunaan topik pencocokan.

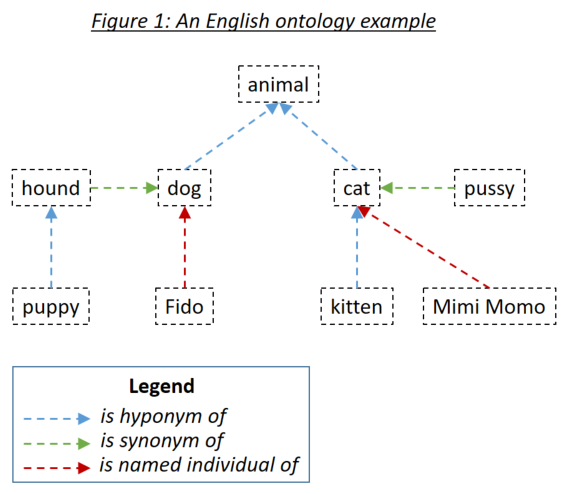

word_match.type=='ontology' )Ontologi memungkinkan pengguna untuk mendefinisikan hubungan antara kata -kata yang kemudian diperhitungkan saat mencocokkan dokumen untuk mencari frasa. Tiga tipe hubungan yang relevan adalah hiponim (sesuatu adalah subtipe dari sesuatu), sinonim (sesuatu berarti sama dengan sesuatu) dan individu bernama (sesuatu adalah contoh spesifik dari sesuatu). Tiga jenis hubungan dicontohkan pada Gambar 1:

Ontologi didefinisikan untuk Holmes menggunakan serial standar Ontologi Ontologi menggunakan RDF/XML. Ontologi semacam itu dapat dihasilkan dengan berbagai alat. Untuk contoh dan tes Holmes, anak didik bebas digunakan. Dianjurkan agar Anda menggunakan anak didik untuk menentukan ontologi Anda sendiri dan untuk menelusuri ontologi yang dikirimkan dengan contoh dan tes. Saat menyimpan ontologi di bawah protege, silakan pilih RDF/XML sebagai format. Protege memberikan label standar untuk hyponim, sinonim dan hubungan individu yang dinamai yang dipahami Holmes sebagai default tetapi itu juga dapat ditimpa.

Entri ontologi didefinisikan menggunakan pengidentifikasi sumber daya internasional (IRI), misalnya http://www.semanticweb.org/hudsonr/ontologies/2019/0/animals#dog . Holmes hanya menggunakan fragmen akhir untuk pencocokan, yang memungkinkan homonim (kata -kata dengan bentuk yang sama tetapi banyak makna) untuk didefinisikan pada beberapa titik di pohon ontologi.

Pencocokan berbasis ontologi memberikan hasil terbaik dengan Holmes ketika ontologi kecil digunakan yang telah dibangun untuk domain subjek tertentu dan kasus penggunaan. Misalnya, jika Anda menerapkan chatbot untuk kasus penggunaan asuransi bangunan, Anda harus membuat ontologi kecil yang menangkap persyaratan dan hubungan dalam domain tertentu. Di sisi lain, tidak disarankan untuk menggunakan ontologi besar yang dibangun untuk semua domain dalam seluruh bahasa seperti WordNet. Ini karena banyaknya homonim dan hubungan yang hanya berlaku di domain subjek sempit akan cenderung menyebabkan sejumlah besar kecocokan yang salah. Untuk kasus penggunaan umum, pencocokan berbasis embedding akan cenderung menghasilkan hasil yang lebih baik.

Setiap kata dalam ontologi dapat dianggap sebagai tajuk subtree yang terdiri dari hiponimnya, sinonim dan individu yang disebut, kata -kata itu 'hiponim, sinonim dan individu yang disebut, dan sebagainya. Dengan ontologi yang diatur dengan cara standar yang sesuai untuk chatbot dan kasus penggunaan ekstraksi struktural, sebuah kata dalam frasa pencarian Holmes cocok dengan kata dalam dokumen jika kata dokumen berada dalam subtree dari kata frasa pencarian. Adalah ontologi pada Gambar 1 yang didefinisikan untuk Holmes, selain strategi pencocokan langsung, yang akan cocok dengan setiap kata dengan dirinya sendiri, kombinasi berikut akan cocok:

Kata kerja phrasal bahasa Inggris seperti Eat Up dan kata kerja Jerman yang dapat dipisahkan seperti Aufessen harus didefinisikan sebagai item tunggal dalam ontologi. Ketika Holmes menganalisis teks dan menemukan kata kerja seperti itu, kata kerja utama dan partikel digabungkan menjadi satu kata logis tunggal yang kemudian dapat dicocokkan melalui ontologi. Ini berarti bahwa makan dalam teks akan cocok dengan subtree Eat Up di dalam ontologi tetapi bukan subtree makan di dalam ontologi.

Jika pencocokan berbasis derivasi aktif, itu diperhitungkan di kedua sisi kecocokan berbasis ontologi potensial. Misalnya, jika Alter dan Amend didefinisikan sebagai sinonim dalam ontologi, perubahan dan amandemen juga akan cocok satu sama lain.

Dalam situasi di mana menemukan kalimat yang relevan lebih penting daripada memastikan korespondensi logis dari dokumen cocok dengan frasa pencarian, mungkin masuk akal untuk menentukan pencocokan simetris saat mendefinisikan ontologi. Pencocokan simetris direkomendasikan untuk kasus penggunaan pencocokan topik, tetapi tidak mungkin sesuai untuk kasus penggunaan chatbot atau ekstraksi struktural. Ini berarti bahwa hubungan hypernym (reverse hyponym) diperhitungkan serta hubungan hiponim dan sinonim saat mencocokkan, sehingga mengarah pada hubungan yang lebih simetris antara dokumen dan frasa pencarian. Aturan penting yang diterapkan saat mencocokkan melalui ontologi simetris adalah bahwa jalur yang cocok mungkin tidak mengandung hubungan hypernym dan hiponim, yaitu Anda tidak dapat kembali pada diri sendiri. Apakah ontologi di atas didefinisikan sebagai simetris, kombinasi berikut akan cocok:

Dalam kasus penggunaan klasifikasi dokumen yang diawasi, dua ontologi terpisah dapat digunakan:

Ontologi pencocokan struktural digunakan untuk menganalisis konten pelatihan dan dokumen pengujian. Setiap kata dari dokumen yang ditemukan dalam ontologi digantikan oleh leluhur Hypernym yang paling umum. Penting untuk disadari bahwa ontologi hanya cenderung bekerja dengan pencocokan struktural untuk klasifikasi dokumen yang diawasi jika dibangun secara khusus untuk tujuan tersebut: ontologi seperti itu harus terdiri dari sejumlah pohon terpisah yang mewakili kelas utama objek dalam dokumen yang akan diklasifikasikan. Dalam contoh ontologi yang ditunjukkan di atas, semua kata dalam ontologi akan diganti dengan hewan ; Dalam kasus ekstrem dengan ontologi gaya WordNet, semua kata benda akan akhirnya diganti dengan benda , yang jelas bukan hasil yang diinginkan!

Ontologi klasifikasi digunakan untuk menangkap hubungan antara label klasifikasi: bahwa dokumen memiliki klasifikasi tertentu menyiratkan juga memiliki klasifikasi apa pun yang menjadi milik klasifikasi itu. Sinonim harus digunakan dengan hemat jika sama sekali dalam klasifikasi ontologi karena mereka menambah kompleksitas jaringan saraf tanpa menambahkan nilai apa pun; Dan meskipun secara teknis dimungkinkan untuk mengatur ontologi klasifikasi untuk menggunakan pencocokan simetris, tidak ada alasan yang masuk akal untuk melakukannya. Perhatikan bahwa label dalam ontologi klasifikasi yang tidak secara langsung didefinisikan sebagai label dari dokumen pelatihan apa pun harus didaftarkan secara khusus menggunakan metode SupervisedTopicTrainingBasis.register_additional_classification_label() .

word_match.type=='embedding' )Spacy menawarkan embeddings kata : representasi vektor numerik yang dihasilkan oleh mesin yang dihasilkan oleh mesin yang menangkap konteks di mana setiap kata cenderung terjadi. Dua kata dengan makna yang sama cenderung muncul dengan embeddings kata yang dekat satu sama lain, dan spacy dapat mengukur kesamaan kosinus antara embedding dua kata yang dinyatakan sebagai desimal antara 0,0 (tidak ada kesamaan) dan 1,0 (kata yang sama). Karena anjing dan kucing cenderung muncul dalam konteks yang sama, mereka memiliki kesamaan 0,80; Anjing dan kuda memiliki lebih sedikit kesamaan dan memiliki kesamaan 0,62; dan anjing dan besi memiliki kesamaan hanya 0,25. Pencocokan berbasis embedding hanya diaktifkan untuk kata benda, kata sifat dan kata keterangan karena hasilnya telah ditemukan tidak memuaskan dengan kelas kata lain.

Penting untuk dipahami bahwa fakta bahwa dua kata memiliki embeddings yang serupa tidak menyiratkan jenis hubungan logis yang sama antara keduanya seperti ketika pencocokan berbasis ontologi digunakan: misalnya, fakta bahwa anjing dan kucing memiliki embeddings yang sama berarti bahwa anjing bukanlah jenis kucing maupun bahwa kucing adalah jenis anjing. Apakah pencocokan berbasis embedding adalah pilihan yang tepat atau tidak tergantung pada kasus penggunaan fungsional.

Untuk chatbot, ekstraksi struktural dan kasus penggunaan klasifikasi dokumen yang diawasi, Holmes memanfaatkan kesamaan berbasis kata-embedding menggunakan parameter overall_similarity_threshold yang didefinisikan secara global pada kelas manajer. Kecocokan terdeteksi antara frasa pencarian dan struktur dalam dokumen setiap kali rata -rata geometris dari kesamaan antara pasangan kata yang sesuai individu lebih besar dari ambang batas ini. Intuisi di balik teknik ini adalah bahwa di mana frasa pencarian dengan misalnya enam kata leksikal telah mencocokkan struktur dokumen di mana lima kata ini cocok dengan tepat dan hanya satu yang sesuai dengan embedding, kesamaan yang harus diperlukan untuk mencocokkan kata keenam ini kurang dari ketika hanya tiga kata yang cocok dengan persis dan dua kata lain juga sesuai dengan embedding.

Cocokkan frasa pencarian dengan dokumen dimulai dengan menemukan kata -kata dalam dokumen yang cocok dengan kata di root (kepala sintaksis) dari frasa pencarian. Holmes kemudian menyelidiki struktur di sekitar masing -masing kata dokumen yang cocok ini untuk memeriksa apakah struktur dokumen cocok dengan struktur frasa pencarian di kepentingannya. Kata -kata dokumen yang cocok dengan kata root frasa pencarian biasanya ditemukan menggunakan indeks. Namun, jika embeddings harus dipertimbangkan ketika menemukan kata -kata dokumen yang cocok dengan frasa root frasa pencarian, setiap kata dalam setiap dokumen dengan kelas kata yang valid harus dibandingkan dengan kemiripan dengan kata root frasa pencarian tersebut. Ini memiliki hit kinerja yang sangat nyata yang membuat semua kasus penggunaan kecuali casing chatbot penggunaan yang pada dasarnya tidak dapat digunakan.

Untuk menghindari hit kinerja yang biasanya tidak perlu yang dihasilkan dari pencocokan embedding dari kata-kata root frasa pencarian, itu dikendalikan secara terpisah dari pencocokan berbasis embedding secara umum menggunakan parameter embedding_based_matching_on_root_words , yang ditetapkan ketika instantiasi kelas manajer. Anda disarankan agar pengaturan ini dimatikan (nilai False ) untuk sebagian besar kasus penggunaan.

Baik overall_similarity_threshold maupun parameter embedding_based_matching_on_root_words memiliki efek pada kasus penggunaan topik pencocokan. Di sini ambang kesamaan embedding tingkat kata diatur menggunakan word_embedding_match_threshold dan initial_question_word_embedding_match_threshold parameter saat memanggil fungsi topic_match_documents_against pada kelas manajer.

word_match.type=='entity_embedding' ) Pencocokan berbasis yang dinamai-entitas yang diperoleh antara kata-kata yang dicari yang memiliki label entitas tertentu dan frasa pencarian atau kata dokumen kueri yang embeddingnya cukup mirip dengan makna yang mendasari label entitas itu, misalnya kata individu dalam frasa pencarian memiliki kata yang sama dengan makna yang mendasari makna label entitas orang . Perhatikan bahwa pencocokan berbasis-embedding bernama tidak pernah aktif pada kata-kata root terlepas dari pengaturan embedding_based_matching_on_root_words .

word_match.type=='question' )Pencocokan kata-pertanyaan awal hanya aktif selama pencocokan topik. Kata-kata pertanyaan awal dalam frasa kueri cocok dengan entitas dalam dokumen yang dicari yang mewakili jawaban potensial untuk pertanyaan, misalnya ketika membandingkan frasa kueri kapan Peter sarapan dengan frasa dokumen yang dicari Peter sarapan pada pukul 8 pagi , kata pertanyaan kapan akan cocok dengan frasa adverbial temporal pada pukul 8 pagi .

Pencocokan kata-pertanyaan-pertanyaan diaktifkan dan dimatikan menggunakan parameter initial_question_word_behaviour saat memanggil fungsi topic_match_documents_against pada kelas manajer. It is only likely to be useful when topic matching is being performed in an interactive setting where the user enters short query phrases, as opposed to when it is being used to find documents on a similar topic to an pre-existing query document: initial question words are only processed at the beginning of the first sentence of the query phrase or query document.

Linguistically speaking, if a query phrase consists of a complex question with several elements dependent on the main verb, a finding in a searched document is only an 'answer' if contains matches to all these elements. Because recall is typically more important than precision when performing topic matching with interactive query phrases, however, Holmes will match an initial question word to a searched-document phrase wherever they correspond semantically (eg wherever when corresponds to a temporal adverbial phrase) and each depend on verbs that themselves match at the word level. One possible strategy to filter out 'incomplete answers' would be to calculate the maximum possible score for a query phrase and reject topic matches that score below a threshold scaled to this maximum.

Before Holmes analyses a searched document or query document, coreference resolution is performed using the Coreferee library running on top of spaCy. This means that situations are recognised where pronouns and nouns that are located near one another within a text refer to the same entities. The information from one mention can then be applied to the analysis of further mentions:

I saw a big dog . It was chasing a cat.

I saw a big dog . The dog was chasing a cat.

Coreferee also detects situations where a noun refers back to a named entity:

We discussed AstraZeneca . The company had given us permission to publish this library under the MIT license.

If this example were to match the search phrase A company gives permission to publish something , the coreference information that the company under discussion is AstraZeneca is clearly relevant and worth extracting in addition to the word(s) directly matched to the search phrase. Such information is captured in the word_match.extracted_word field.

The concept of search phrases has already been introduced and is relevant to the chatbot use case, the structural extraction use case and to preselection within the supervised document classification use case.

It is crucial to understand that the tips and limitations set out in Section 4 do not apply in any way to query phrases in topic matching. If you are using Holmes for topic matching only, you can completely ignore this section!

Structural matching between search phrases and documents is not symmetric: there are many situations in which sentence X as a search phrase would match sentence Y within a document but where the converse would not be true. Although Holmes does its best to understand any search phrases, the results are better when the user writing them follows certain patterns and tendencies, and getting to grips with these patterns and tendencies is the key to using the relevant features of Holmes successfully.

Holmes distinguishes between: lexical words like dog , chase and cat (English) or Hund , jagen and Katze (German) in the initial example above; and grammatical words like a (English) or ein and eine (German) in the initial example above. Only lexical words match words in documents, but grammatical words still play a crucial role within a search phrase: they enable Holmes to understand it.

Dog chase cat (English)

Hund jagen Katze (German)

contain the same lexical words as the search phrases in the initial example above, but as they are not grammatical sentences Holmes is liable to misunderstand them if they are used as search phrases. This is a major difference between Holmes search phrases and the search phrases you use instinctively with standard search engines like Google, and it can take some getting used to.

A search phrase need not contain a verb:

ENTITYPERSON (English)

A big dog (English)

Interest in fishing (English)

ENTITYPER (German)

Ein großer Hund (German)

Interesse am Angeln (German)

are all perfectly valid and potentially useful search phrases.

Where a verb is present, however, Holmes delivers the best results when the verb is in the present active , as chases and jagt are in the initial example above. This gives Holmes the best chance of understanding the relationship correctly and of matching the widest range of document structures that share the target meaning.

Sometimes you may only wish to extract the object of a verb. For example, you might want to find sentences that are discussing a cat being chased regardless of who is doing the chasing. In order to avoid a search phrase containing a passive expression like

A cat is chased (English)

Eine Katze wird gejagt (German)

you can use a generic pronoun . This is a word that Holmes treats like a grammatical word in that it is not matched to documents; its sole purpose is to help the user form a grammatically optimal search phrase in the present active. Recognised generic pronouns are English something , somebody and someone and German jemand (and inflected forms of jemand ) and etwas : Holmes treats them all as equivalent. Using generic pronouns, the passive search phrases above could be re-expressed as

Somebody chases a cat (English)

Jemand jagt eine Katze (German).

Experience shows that different prepositions are often used with the same meaning in equivalent phrases and that this can prevent search phrases from matching where one would intuitively expect it. For example, the search phrases

Somebody is at the market (English)

Jemand ist auf dem Marktplatz (German)

would fail to match the document phrases

Richard was in the market (English)

Richard war am Marktplatz (German)

The best way of solving this problem is to define the prepositions in question as synonyms in an ontology.

The following types of structures are prohibited in search phrases and result in Python user-defined errors:

A dog chases a cat. A cat chases a dog (English)

Ein Hund jagt eine Katze. Eine Katze jagt einen Hund (German)

Each clause must be separated out into its own search phrase and registered individually.

A dog does not chase a cat. (Bahasa inggris)

Ein Hund jagt keine Katze. (Jerman)

Negative expressions are recognised as such in documents and the generated matches marked as negative; allowing search phrases themselves to be negative would overcomplicate the library without offering any benefits.

A dog and a lion chase a cat. (Bahasa inggris)

Ein Hund und ein Löwe jagen eine Katze. (Jerman)

Wherever conjunction occurs in documents, Holmes distributes the information among multiple matches as explained above. In the unlikely event that there should be a requirement to capture conjunction explicitly when matching, this could be achieved by using the Manager.match() function and looking for situations where the document token objects are shared by multiple match objects.

The (English)

Der (German)

A search phrase cannot be processed if it does not contain any words that can be matched to documents.

A dog chases a cat and he chases a mouse (English)

Ein Hund jagt eine Katze und er jagt eine Maus (German)

Pronouns that corefer with nouns elsewhere in the search phrase are not permitted as this would overcomplicate the library without offering any benefits.

The following types of structures are strongly discouraged in search phrases:

Dog chase cat (English)

Hund jagen Katze (German)

Although these will sometimes work, the results will be better if search phrases are expressed grammatically.

A cat is chased by a dog (English)

A dog will have chased a cat (English)

Eine Katze wird durch einen Hund gejagt (German)

Ein Hund wird eine Katze gejagt haben (German)

Although these will sometimes work, the results will be better if verbs in search phrases are expressed in the present active.

Who chases the cat? (Bahasa inggris)

Wer jagt die Katze? (Jerman)

Although questions are supported as query phrases in the topic matching use case, they are not appropriate as search phrases. Questions should be re-phrased as statements, in this case

Something chases the cat (English)

Etwas jagt die Katze (German).

Informationsextraktion (German)

Ein Stadtmittetreffen (German)

The internal structure of German compound words is analysed within searched documents as well as within query phrases in the topic matching use case, but not within search phrases. In search phrases, compound words should be reexpressed as genitive constructions even in cases where this does not strictly capture their meaning:

Extraktion der Information (German)

Ein Treffen der Stadtmitte (German)

The following types of structures should be used with caution in search phrases:

A fierce dog chases a scared cat on the way to the theatre (English)

Ein kämpferischer Hund jagt eine verängstigte Katze auf dem Weg ins Theater (German)

Holmes can handle any level of complexity within search phrases, but the more complex a structure, the less likely it becomes that a document sentence will match it. If it is really necessary to match such complex relationships with search phrases rather than with topic matching, they are typically better extracted by splitting the search phrase up, eg

A fierce dog (English)

A scared cat (English)

A dog chases a cat (English)

Something chases something on the way to the theatre (English)

Ein kämpferischer Hund (German)

Eine verängstigte Katze (German)

Ein Hund jagt eine Katze (German)

Etwas jagt etwas auf dem Weg ins Theater (German)

Correlations between the resulting matches can then be established by matching via the Manager.match() function and looking for situations where the document token objects are shared across multiple match objects.

One possible exception to this piece of advice is when embedding-based matching is active. Because whether or not each word in a search phrase matches then depends on whether or not other words in the same search phrase have been matched, large, complex search phrases can sometimes yield results that a combination of smaller, simpler search phrases would not.

The chasing of a cat (English)

Die Jagd einer Katze (German)

These will often work, but it is generally better practice to use verbal search phrases like

Something chases a cat (English)

Etwas jagt eine Katze (German)

and to allow the corresponding nominal phrases to be matched via derivation-based matching.

The chatbot use case has already been introduced: a predefined set of search phrases is used to extract information from phrases entered interactively by an end user, which in this use case act as the documents.

The Holmes source code ships with two examples demonstrating the chatbot use case, one for each language, with predefined ontologies. Having cloned the source code and installed the Holmes library, navigate to the /examples directory and type the following (Linux):

Bahasa inggris:

python3 example_chatbot_EN_insurance.py

Jerman:

python3 example_chatbot_DE_insurance.py

or click on the files in Windows Explorer (Windows).

Holmes matches syntactically distinct structures that are semantically equivalent, ie that share the same meaning. In a real chatbot use case, users will typically enter equivalent information with phrases that are semantically distinct as well, ie that have different meanings. Because the effort involved in registering a search phrase is barely greater than the time it takes to type it in, it makes sense to register a large number of search phrases for each relationship you are trying to extract: essentially all ways people have been observed to express the information you are interested in or all ways you can imagine somebody might express the information you are interested in . To assist this, search phrases can be registered with labels that do not need to be unique: a label can then be used to express the relationship an entire group of search phrases is designed to extract. Note that when many search phrases have been defined to extract the same relationship, a single user entry is likely to be sometimes matched by multiple search phrases. This must be handled appropriately by the calling application.

One obvious weakness of Holmes in the chatbot setting is its sensitivity to correct spelling and, to a lesser extent, to correct grammar. Strategies for mitigating this weakness include:

The structural extraction use case uses structural matching in the same way as the chatbot use case, and many of the same comments and tips apply to it. The principal differences are that pre-existing and often lengthy documents are scanned rather than text snippets entered ad-hoc by the user, and that the returned match objects are not used to drive a dialog flow; they are examined solely to extract and store structured information.

Code for performing structural extraction would typically perform the following tasks:

Manager.register_search_phrase() several times to define a number of search phrases specifying the information to be extracted.Manager.parse_and_register_document() several times to load a number of documents within which to search.Manager.match() to perform the matching.The topic matching use case matches a query document , or alternatively a query phrase entered ad-hoc by the user, against a set of documents pre-loaded into memory. The aim is to find the passages in the documents whose topic most closely corresponds to the topic of the query document; the output is a ordered list of passages scored according to topic similarity. Additionally, if a query phrase contains an initial question word, the output will contain potential answers to the question.

Topic matching queries may contain generic pronouns and named-entity identifiers just like search phrases, although the ENTITYNOUN token is not supported. However, an important difference from search phrases is that the topic matching use case places no restrictions on the grammatical structures permissible within the query document.

In addition to the Holmes demonstration website, the Holmes source code ships with three examples demonstrating the topic matching use case with an English literature corpus, a German literature corpus and a German legal corpus respectively. Users are encouraged to run these to get a feel for how they work.

Topic matching uses a variety of strategies to find text passages that are relevant to the query. These include resource-hungry procedures like investigating semantic relationships and comparing embeddings. Because applying these across the board would prevent topic matching from scaling, Holmes only attempts them for specific areas of the text that less resource-intensive strategies have already marked as looking promising. This and the other interior workings of topic matching are explained here.

In the supervised document classification use case, a classifier is trained with a number of documents that are each pre-labelled with a classification. The trained classifier then assigns one or more labels to new documents according to what each new document is about. As explained here, ontologies can be used both to enrichen the comparison of the content of the various documents and to capture implication relationships between classification labels.

A classifier makes use of a neural network (a multilayer perceptron) whose topology can either be determined automatically by Holmes or specified explicitly by the user. With a large number of training documents, the automatically determined topology can easily exhaust the memory available on a typical machine; if there is no opportunity to scale up the memory, this problem can be remedied by specifying a smaller number of hidden layers or a smaller number of nodes in one or more of the layers.

A trained document classification model retains no references to its training data. This is an advantage from a data protection viewpoint, although it cannot presently be guaranteed that models will not contain individual personal or company names.

A typical problem with the execution of many document classification use cases is that a new classification label is added when the system is already live but that there are initially no examples of this new classification with which to train a new model. The best course of action in such a situation is to define search phrases which preselect the more obvious documents with the new classification using structural matching. Those documents that are not preselected as having the new classification label are then passed to the existing, previously trained classifier in the normal way. When enough documents exemplifying the new classification have accumulated in the system, the model can be retrained and the preselection search phrases removed.

Holmes ships with an example script demonstrating supervised document classification for English with the BBC Documents dataset. The script downloads the documents (for this operation and for this operation alone, you will need to be online) and places them in a working directory. When training is complete, the script saves the model to the working directory. If the model file is found in the working directory on subsequent invocations of the script, the training phase is skipped and the script goes straight to the testing phase. This means that if it is wished to repeat the training phase, either the model has to be deleted from the working directory or a new working directory has to be specified to the script.

Having cloned the source code and installed the Holmes library, navigate to the /examples directory. Specify a working directory at the top of the example_supervised_topic_model_EN.py file, then type python3 example_supervised_topic_model_EN (Linux) or click on the script in Windows Explorer (Windows).

It is important to realise that Holmes learns to classify documents according to the words or semantic relationships they contain, taking any structural matching ontology into account in the process. For many classification tasks, this is exactly what is required; but there are tasks (eg author attribution according to the frequency of grammatical constructions typical for each author) where it is not. For the right task, Holmes achieves impressive results. For the BBC Documents benchmark processed by the example script, Holmes performs slightly better than benchmarks available online (see eg here) although the difference is probably too slight to be significant, especially given that the different training/test splits were used in each case: Holmes has been observed to learn models that predict the correct result between 96.9% and 98.7% of the time. The range is explained by the fact that the behaviour of the neural network is not fully deterministic.

The interior workings of supervised document classification are explained here.

Manager holmes_extractor.Manager(self, model, *, overall_similarity_threshold=1.0,

embedding_based_matching_on_root_words=False, ontology=None,

analyze_derivational_morphology=True, perform_coreference_resolution=None,

number_of_workers=None, verbose=False)

The facade class for the Holmes library.

Parameters:

model -- the name of the spaCy model, e.g. *en_core_web_trf*

overall_similarity_threshold -- the overall similarity threshold for embedding-based

matching. Defaults to *1.0*, which deactivates embedding-based matching. Note that this

parameter is not relevant for topic matching, where the thresholds for embedding-based

matching are set on the call to *topic_match_documents_against*.

embedding_based_matching_on_root_words -- determines whether or not embedding-based

matching should be attempted on search-phrase root tokens, which has a considerable

performance hit. Defaults to *False*. Note that this parameter is not relevant for topic

matching.

ontology -- an *Ontology* object. Defaults to *None* (no ontology).

analyze_derivational_morphology -- *True* if matching should be attempted between different

words from the same word family. Defaults to *True*.

perform_coreference_resolution -- *True* if coreference resolution should be taken into account

when matching. Defaults to *True*.

use_reverse_dependency_matching -- *True* if appropriate dependencies in documents can be

matched to dependencies in search phrases where the two dependencies point in opposite

directions. Defaults to *True*.

number_of_workers -- the number of worker processes to use, or *None* if the number of worker

processes should depend on the number of available cores. Defaults to *None*

verbose -- a boolean value specifying whether multiprocessing messages should be outputted to

the console. Defaults to *False*

Manager.register_serialized_document(self, serialized_document:bytes, label:str="") -> None

Parameters:

document -- a preparsed Holmes document.

label -- a label for the document which must be unique. Defaults to the empty string,

which is intended for use cases involving single documents (typically user entries).

Manager.register_serialized_documents(self, document_dictionary:dict[str, bytes]) -> None

Note that this function is the most efficient way of loading documents.

Parameters:

document_dictionary -- a dictionary from labels to serialized documents.

Manager.parse_and_register_document(self, document_text:str, label:str='') -> None

Parameters:

document_text -- the raw document text.

label -- a label for the document which must be unique. Defaults to the empty string,

which is intended for use cases involving single documents (typically user entries).

Manager.remove_document(self, label:str) -> None

Manager.remove_all_documents(self, labels_starting:str=None) -> None

Parameters:

labels_starting -- a string starting the labels of documents to be removed,

or 'None' if all documents are to be removed.

Manager.list_document_labels(self) -> List[str]

Returns a list of the labels of the currently registered documents.

Manager.serialize_document(self, label:str) -> Optional[bytes]

Returns a serialized representation of a Holmes document that can be

persisted to a file. If 'label' is not the label of a registered document,

'None' is returned instead.

Parameters:

label -- the label of the document to be serialized.

Manager.get_document(self, label:str='') -> Optional[Doc]

Returns a Holmes document. If *label* is not the label of a registered document, *None*

is returned instead.

Parameters:

label -- the label of the document to be serialized.

Manager.debug_document(self, label:str='') -> None

Outputs a debug representation for a loaded document.

Parameters:

label -- the label of the document to be serialized.

Manager.register_search_phrase(self, search_phrase_text:str, label:str=None) -> SearchPhrase

Registers and returns a new search phrase.

Parameters:

search_phrase_text -- the raw search phrase text.

label -- a label for the search phrase, which need not be unique.

If label==None, the assigned label defaults to the raw search phrase text.

Manager.remove_all_search_phrases_with_label(self, label:str) -> None

Manager.remove_all_search_phrases(self) -> None

Manager.list_search_phrase_labels(self) -> List[str]

Manager.match(self, search_phrase_text:str=None, document_text:str=None) -> List[Dict]

Matches search phrases to documents and returns the result as match dictionaries.

Parameters:

search_phrase_text -- a text from which to generate a search phrase, or 'None' if the

preloaded search phrases should be used for matching.

document_text -- a text from which to generate a document, or 'None' if the preloaded

documents should be used for matching.

topic_match_documents_against(self, text_to_match:str, *,

use_frequency_factor:bool=True,

maximum_activation_distance:int=75,

word_embedding_match_threshold:float=0.8,

initial_question_word_embedding_match_threshold:float=0.7,

relation_score:int=300,

reverse_only_relation_score:int=200,

single_word_score:int=50,

single_word_any_tag_score:int=20,

initial_question_word_answer_score:int=600,

initial_question_word_behaviour:str='process',

different_match_cutoff_score:int=15,

overlapping_relation_multiplier:float=1.5,

embedding_penalty:float=0.6,

ontology_penalty:float=0.9,

relation_matching_frequency_threshold:float=0.25,

embedding_matching_frequency_threshold:float=0.5,

sideways_match_extent:int=100,

only_one_result_per_document:bool=False,

number_of_results:int=10,

document_label_filter:str=None,

tied_result_quotient:float=0.9) -> List[Dict]:

Returns a list of dictionaries representing the results of a topic match between an entered text

and the loaded documents.

Properties:

text_to_match -- the text to match against the loaded documents.

use_frequency_factor -- *True* if scores should be multiplied by a factor between 0 and 1

expressing how rare the words matching each phraselet are in the corpus. Note that,

even if this parameter is set to *False*, the factors are still calculated as they are

required for determining which relation and embedding matches should be attempted.

maximum_activation_distance -- the number of words it takes for a previous phraselet

activation to reduce to zero when the library is reading through a document.

word_embedding_match_threshold -- the cosine similarity above which two words match where

the search phrase word does not govern an interrogative pronoun.

initial_question_word_embedding_match_threshold -- the cosine similarity above which two

words match where the search phrase word governs an interrogative pronoun.

relation_score -- the activation score added when a normal two-word relation is matched.

reverse_only_relation_score -- the activation score added when a two-word relation

is matched using a search phrase that can only be reverse-matched.

single_word_score -- the activation score added when a single noun is matched.

single_word_any_tag_score -- the activation score added when a single word is matched

that is not a noun.

initial_question_word_answer_score -- the activation score added when a question word is

matched to an potential answer phrase.

initial_question_word_behaviour -- 'process' if a question word in the sentence

constituent at the beginning of *text_to_match* is to be matched to document phrases

that answer it and to matching question words; 'exclusive' if only topic matches that

answer questions are to be permitted; 'ignore' if question words are to be ignored.

different_match_cutoff_score -- the activation threshold under which topic matches are

separated from one another. Note that the default value will probably be too low if

*use_frequency_factor* is set to *False*.

overlapping_relation_multiplier -- the value by which the activation score is multiplied

when two relations were matched and the matches involved a common document word.

embedding_penalty -- a value between 0 and 1 with which scores are multiplied when the

match involved an embedding. The result is additionally multiplied by the overall

similarity measure of the match.

ontology_penalty -- a value between 0 and 1 with which scores are multiplied for each

word match within a match that involved the ontology. For each such word match,

the score is multiplied by the value (abs(depth) + 1) times, so that the penalty is

higher for hyponyms and hypernyms than for synonyms and increases with the

depth distance.

relation_matching_frequency_threshold -- the frequency threshold above which single

word matches are used as the basis for attempting relation matches.

embedding_matching_frequency_threshold -- the frequency threshold above which single

word matches are used as the basis for attempting relation matches with

embedding-based matching on the second word.

sideways_match_extent -- the maximum number of words that may be incorporated into a

topic match either side of the word where the activation peaked.

only_one_result_per_document -- if 'True', prevents multiple results from being returned

for the same document.

number_of_results -- the number of topic match objects to return.

document_label_filter -- optionally, a string with which document labels must start to

be considered for inclusion in the results.

tied_result_quotient -- the quotient between a result and following results above which

the results are interpreted as tied.

Manager.get_supervised_topic_training_basis(self, *, classification_ontology:Ontology=None,

overlap_memory_size:int=10, oneshot:bool=True, match_all_words:bool=False,

verbose:bool=True) -> SupervisedTopicTrainingBasis:

Returns an object that is used to train and generate a model for the

supervised document classification use case.

Parameters:

classification_ontology -- an Ontology object incorporating relationships between

classification labels, or 'None' if no such ontology is to be used.

overlap_memory_size -- how many non-word phraselet matches to the left should be

checked for words in common with a current match.

oneshot -- whether the same word or relationship matched multiple times within a

single document should be counted once only (value 'True') or multiple times

(value 'False')

match_all_words -- whether all single words should be taken into account

(value 'True') or only single words with noun tags (value 'False')

verbose -- if 'True', information about training progress is outputted to the console.

Manager.deserialize_supervised_topic_classifier(self,

serialized_model:bytes, verbose:bool=False) -> SupervisedTopicClassifier:

Returns a classifier for the supervised document classification use case

that will use a supplied pre-trained model.

Parameters:

serialized_model -- the pre-trained model as returned from `SupervisedTopicClassifier.serialize_model()`.

verbose -- if 'True', information about matching is outputted to the console.

Manager.start_chatbot_mode_console(self)

Starts a chatbot mode console enabling the matching of pre-registered

search phrases to documents (chatbot entries) entered ad-hoc by the

user.

Manager.start_structural_search_mode_console(self)

Starts a structural extraction mode console enabling the matching of pre-registered

documents to search phrases entered ad-hoc by the user.

Manager.start_topic_matching_search_mode_console(self,

only_one_result_per_document:bool=False, word_embedding_match_threshold:float=0.8,

initial_question_word_embedding_match_threshold:float=0.7):

Starts a topic matching search mode console enabling the matching of pre-registered

documents to query phrases entered ad-hoc by the user.

Parameters:

only_one_result_per_document -- if 'True', prevents multiple topic match

results from being returned for the same document.

word_embedding_match_threshold -- the cosine similarity above which two words match where the

search phrase word does not govern an interrogative pronoun.

initial_question_word_embedding_match_threshold -- the cosine similarity above which two

words match where the search phrase word governs an interrogative pronoun.

Manager.close(self) -> None

Terminates the worker processes.

manager.nlp manager.nlp is the underlying spaCy Language object on which both Coreferee and Holmes have been registered as custom pipeline components. The most efficient way of parsing documents for use with Holmes is to call manager.nlp.pipe() . This yields an iterable of documents that can then be loaded into Holmes via manager.register_serialized_documents() .

The pipe() method has an argument n_process that specifies the number of processors to use. With _lg , _md and _sm spaCy models, there are some situations where it can make sense to specify a value other than 1 (the default). Note however that with transformer spaCy models ( _trf ) values other than 1 are not supported.

Ontology holmes_extractor.Ontology(self, ontology_path,

owl_class_type='http://www.w3.org/2002/07/owl#Class',

owl_individual_type='http://www.w3.org/2002/07/owl#NamedIndividual',

owl_type_link='http://www.w3.org/1999/02/22-rdf-syntax-ns#type',

owl_synonym_type='http://www.w3.org/2002/07/owl#equivalentClass',

owl_hyponym_type='http://www.w3.org/2000/01/rdf-schema#subClassOf',

symmetric_matching=False)

Loads information from an existing ontology and manages ontology

matching.

The ontology must follow the W3C OWL 2 standard. Search phrase words are

matched to hyponyms, synonyms and instances from within documents being

searched.

This class is designed for small ontologies that have been constructed

by hand for specific use cases. Where the aim is to model a large number

of semantic relationships, word embeddings are likely to offer

better results.

Holmes is not designed to support changes to a loaded ontology via direct

calls to the methods of this class. It is also not permitted to share a single instance

of this class between multiple Manager instances: instead, a separate Ontology instance

pointing to the same path should be created for each Manager.

Matching is case-insensitive.

Parameters:

ontology_path -- the path from where the ontology is to be loaded,

or a list of several such paths. See https://github.com/RDFLib/rdflib/.

owl_class_type -- optionally overrides the OWL 2 URL for types.

owl_individual_type -- optionally overrides the OWL 2 URL for individuals.

owl_type_link -- optionally overrides the RDF URL for types.

owl_synonym_type -- optionally overrides the OWL 2 URL for synonyms.

owl_hyponym_type -- optionally overrides the RDF URL for hyponyms.

symmetric_matching -- if 'True', means hypernym relationships are also taken into account.

SupervisedTopicTrainingBasis (returned from Manager.get_supervised_topic_training_basis() )Holder object for training documents and their classifications from which one or more SupervisedTopicModelTrainer objects can be derived. This class is NOT threadsafe.

SupervisedTopicTrainingBasis.parse_and_register_training_document(self, text:str, classification:str,

label:Optional[str]=None) -> None

Parses and registers a document to use for training.

Parameters:

text -- the document text

classification -- the classification label

label -- a label with which to identify the document in verbose training output,

or 'None' if a random label should be assigned.

SupervisedTopicTrainingBasis.register_training_document(self, doc:Doc, classification:str,

label:Optional[str]=None) -> None

Registers a pre-parsed document to use for training.

Parameters:

doc -- the document

classification -- the classification label

label -- a label with which to identify the document in verbose training output,

or 'None' if a random label should be assigned.

SupervisedTopicTrainingBasis.register_additional_classification_label(self, label:str) -> None

Register an additional classification label which no training document possesses explicitly

but that should be assigned to documents whose explicit labels are related to the

additional classification label via the classification ontology.

SupervisedTopicTrainingBasis.prepare(self) -> None

Matches the phraselets derived from the training documents against the training

documents to generate frequencies that also include combined labels, and examines the

explicit classification labels, the additional classification labels and the

classification ontology to derive classification implications.

Once this method has been called, the instance no longer accepts new training documents

or additional classification labels.

SupervisedTopicTrainingBasis.train(

self,

*,

minimum_occurrences: int = 4,

cv_threshold: float = 1.0,

learning_rate: float = 0.001,

batch_size: int = 5,

max_epochs: int = 200,

convergence_threshold: float = 0.0001,

hidden_layer_sizes: Optional[List[int]] = None,

shuffle: bool = True,

normalize: bool = True

) -> SupervisedTopicModelTrainer:

Trains a model based on the prepared state.

Parameters:

minimum_occurrences -- the minimum number of times a word or relationship has to

occur in the context of the same classification for the phraselet

to be accepted into the final model.

cv_threshold -- the minimum coefficient of variation with which a word or relationship has

to occur across the explicit classification labels for the phraselet to be

accepted into the final model.

learning_rate -- the learning rate for the Adam optimizer.

batch_size -- the number of documents in each training batch.

max_epochs -- the maximum number of training epochs.

convergence_threshold -- the threshold below which loss measurements after consecutive

epochs are regarded as equivalent. Training stops before 'max_epochs' is reached

if equivalent results are achieved after four consecutive epochs.

hidden_layer_sizes -- a list containing the number of neurons in each hidden layer, or

'None' if the topology should be determined automatically.

shuffle -- 'True' if documents should be shuffled during batching.

normalize -- 'True' if normalization should be applied to the loss function.

SupervisedTopicModelTrainer (returned from SupervisedTopicTrainingBasis.train() ) Worker object used to train and generate models. This object could be removed from the public interface ( SupervisedTopicTrainingBasis.train() could return a SupervisedTopicClassifier directly) but has been retained to facilitate testability.

This class is NOT threadsafe.

SupervisedTopicModelTrainer.classifier(self)

Returns a supervised topic classifier which contains no explicit references to the training data and that

can be serialized.

SupervisedTopicClassifier (returned from SupervisedTopicModelTrainer.classifier() and Manager.deserialize_supervised_topic_classifier() ))

SupervisedTopicClassifier.def parse_and_classify(self, text: str) -> Optional[OrderedDict]:

Returns a dictionary from classification labels to probabilities

ordered starting with the most probable, or *None* if the text did

not contain any words recognised by the model.

Parameters:

text -- the text to parse and classify.

SupervisedTopicClassifier.classify(self, doc: Doc) -> Optional[OrderedDict]:

Returns a dictionary from classification labels to probabilities

ordered starting with the most probable, or *None* if the text did

not contain any words recognised by the model.

Parameters:

doc -- the pre-parsed document to classify.

SupervisedTopicClassifier.serialize_model(self) -> str

Returns a serialized model that can be reloaded using

*Manager.deserialize_supervised_topic_classifier()*

Manager.match() A text-only representation of a match between a search phrase and a

document. The indexes refer to tokens.

Properties:

search_phrase_label -- the label of the search phrase.

search_phrase_text -- the text of the search phrase.

document -- the label of the document.

index_within_document -- the index of the match within the document.

sentences_within_document -- the raw text of the sentences within the document that matched.

negated -- 'True' if this match is negated.

uncertain -- 'True' if this match is uncertain.

involves_coreference -- 'True' if this match was found using coreference resolution.

overall_similarity_measure -- the overall similarity of the match, or

'1.0' if embedding-based matching was not involved in the match.

word_matches -- an array of dictionaries with the properties:

search_phrase_token_index -- the index of the token that matched from the search phrase.

search_phrase_word -- the string that matched from the search phrase.

document_token_index -- the index of the token that matched within the document.

first_document_token_index -- the index of the first token that matched within the document.

Identical to 'document_token_index' except where the match involves a multiword phrase.

last_document_token_index -- the index of the last token that matched within the document

(NOT one more than that index). Identical to 'document_token_index' except where the match

involves a multiword phrase.

structurally_matched_document_token_index -- the index of the token within the document that

structurally matched the search phrase token. Is either the same as 'document_token_index' or

is linked to 'document_token_index' within a coreference chain.

document_subword_index -- the index of the token subword that matched within the document, or

'None' if matching was not with a subword but with an entire token.

document_subword_containing_token_index -- the index of the document token that contained the

subword that matched, which may be different from 'document_token_index' in situations where a

word containing multiple subwords is split by hyphenation and a subword whose sense

contributes to a word is not overtly realised within that word.

document_word -- the string that matched from the document.

document_phrase -- the phrase headed by the word that matched from the document.

match_type -- 'direct', 'derivation', 'entity', 'embedding', 'ontology', 'entity_embedding'

or 'question'.

negated -- 'True' if this word match is negated.

uncertain -- 'True' if this word match is uncertain.

similarity_measure -- for types 'embedding' and 'entity_embedding', the similarity between the

two tokens, otherwise '1.0'.

involves_coreference -- 'True' if the word was matched using coreference resolution.

extracted_word -- within the coreference chain, the most specific term that corresponded to

the document_word.

depth -- the number of hyponym relationships linking 'search_phrase_word' and

'extracted_word', or '0' if ontology-based matching is not active. Can be negative

if symmetric matching is active.

explanation -- creates a human-readable explanation of the word match from the perspective of the

document word (e.g. to be used as a tooltip over it).

Manager.topic_match_documents_against() A text-only representation of a topic match between a search text and a document.

Properties:

document_label -- the label of the document.

text -- the document text that was matched.

text_to_match -- the search text.

rank -- a string representation of the scoring rank which can have the form e.g. '2=' in case of a tie.