Inglés | Italiano | Español | Français | বাংলা | தமிழ் | ગુજરાતી | Portugués | हिंदी | తెలుగు | Română | العر sil hac. | Nepalí | 简体中文

Si está interesado en contribuir a este proyecto, tómese un momento para revisar contribuyentes. MD para obtener instrucciones detalladas sobre cómo comenzar. ¡Sus contribuciones son muy apreciadas!

La informática es el estudio de las computadoras y la computación y sus aplicaciones teóricas y prácticas. La informática aplica los principios de las matemáticas, la ingeniería y la lógica a una gran cantidad de problemas. Estos incluyen formulación de algoritmos, desarrollo de software/hardware e inteligencia artificial.

Una computadora es un dispositivo diseñado para realizar operaciones matemáticas, lógicas o de procesamiento de datos de alta velocidad. Es una máquina electrónica programable que puede ensamblar, almacenar, correlacionar y procesar información de manera eficiente.

La lógica booleana es una rama de las matemáticas centrada en los valores de la verdad, específicamente verdadero y falso. Funciona con un sistema binario donde 0 representa falso y 1 representa verdadero. Conocido como álgebra booleana, este sistema fue introducido por primera vez por George Boole en 1854.

| Operador | Nombre | Descripción |

|---|---|---|

| ! | NO | Niega (invertir) el valor del operando. |

| && | Y | Devuelve verdadero si ambos operandos son verdaderos. |

| || | O | Devuelve verdadero si al menos un operando es verdadero. |

| Operador | Nombre | Descripción |

|---|---|---|

| () | Paréntesis | Le permite agrupar palabras clave y controlar el orden en que se buscarán los términos. |

| "" | Comillas | Proporciona resultados con la frase exacta. |

| * | Asterisco | Proporciona resultados que contienen una variación de palabras clave. |

| ⊕ | Xor | Devuelve verdadero si los operandos son diferentes |

| ⊽ | NI | Devuelve verdadero si todos los operandos son falsos. |

| ⊼ | Noble | Devuelve falsos solo si ambos valores de sus dos entradas son verdaderos. |

Los circuitos digitales tratan con señales booleanas (1 y 0). Son los bloques de construcción financieros de una computadora. Son los componentes y circuitos utilizados para crear unidades de procesador y unidades de memoria esenciales para un sistema informático.

Las tablas de verdad son tablas matemáticas utilizadas en el diseño lógico y de circuito digital. Ayudan a mapear la funcionalidad de un circuito. Podemos usarlos para ayudar a diseñar circuitos digitales complejos.

Las tablas de verdad tienen 1 columna para cada variable de entrada y 1 columna final que muestra todos los resultados posibles de la operación lógica que representa la tabla.

Hay 2 tipos de circuitos digitales: combinacionales y secuenciales

Al diseñar un circuito digital, especialmente los complejos. Es importante utilizar herramientas de álgebra booleana para ayudar con el proceso de diseño (ejemplo: mapa de Karnaugh). También es importante dividir todo en circuitos más pequeños y examinar la tabla de verdad necesaria para ese circuito más pequeño. No intentes abordar todo el circuito a la vez, descomponenlo y junte gradualmente las piezas.

Los sistemas de números son sistemas matemáticos para expresar números. Un sistema numérico consiste en un conjunto de símbolos que se utilizan para representar números y un conjunto de reglas para manipular esos símbolos. Los símbolos utilizados en un sistema numérico se denominan números.

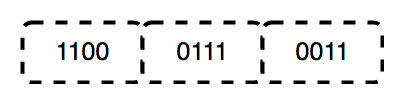

El binario es un sistema de números Base-2, inventado por Gottfried Leibniz, que consta de solo dos dígitos: 0 y 1. Este sistema es fundamental para todo el código binario, que se utiliza para codificar datos digitales, incluidas las instrucciones del procesador de computadora. En binario, los dígitos representan estados: 0 corresponde a "apagado", y 1 corresponde a "on".

En los transistores, un "0" no indica un flujo de electricidad, mientras que un "1" significa que la electricidad está fluyendo. Esta representación física de los números permite a las computadoras realizar cálculos y operaciones de manera eficiente.

El binario sigue siendo el lenguaje central de las computadoras y se usa en electrónica y hardware por varias razones clave:

Una unidad de procesamiento central (CPU) es la parte más importante de cualquier computadora. La CPU envía señales para controlar las otras partes de la computadora, casi como cómo un cerebro controla un cuerpo. La CPU es una máquina electrónica que funciona en una lista de cosas de la computadora que hacer, llamadas instrucciones. Se lee la lista de instrucciones y ejecuta (ejecuta) cada una en orden. Una lista de instrucciones que una CPU puede ejecutar es un programa de computadora. Una CPU puede procesar más de una instrucción a la vez en las secciones llamadas "núcleos". Una CPU con cuatro núcleos puede procesar cuatro programas a la vez. La CPU en sí está hecha de tres componentes principales. Ellos son:

Los registros son pequeñas cantidades de memoria de alta velocidad contenida dentro de la CPU. Los registros son una colección de "chanclas" (un circuito utilizado para almacenar 1 bit de memoria). El procesador utiliza para almacenar pequeñas cantidades de datos que se necesitan durante el procesamiento. Una CPU puede tener varios conjuntos de registros que se llaman "núcleos". Registrar también ayuda en operaciones aritméticas y lógicas.

Las operaciones aritméticas son cálculos matemáticos realizados por la CPU en datos numéricos almacenados en registros. Estas operaciones incluyen suma, resta, multiplicación y división. Las operaciones lógicas son cálculos booleanos realizados por la CPU en datos binarios almacenados en registros. Estas operaciones incluyen comparaciones (por ejemplo, pruebas si dos valores son iguales) y operaciones lógicas (por ejemplo, o, o no).

Los registros son esenciales para realizar estas operaciones porque permiten que la CPU acceda a acceder y manipular rápidamente pequeñas cantidades de datos. Al almacenar datos de acceso frecuente en registros, la CPU puede evitar el proceso más lento de recuperar datos de la memoria.

Se pueden almacenar mayores cantidades de datos en caché (pronunciado como "efectivo"), una memoria muy rápida ubicada en el mismo circuito integrado que los registros. El caché se utiliza para los datos a los que se accede con frecuencia a medida que se ejecuta el programa. Incluso grandes cantidades de datos pueden almacenarse en RAM. RAM significa memoria de acceso aleatorio, que es un tipo de memoria que contiene datos e instrucciones que se han movido desde el almacenamiento en disco hasta que el procesador lo necesite.

La memoria de caché es un componente de computadora basado en chips que hace que recuperar datos de la memoria de la computadora sea más eficiente. Actúa como un área de almacenamiento temporal para que el procesador de la computadora pueda recuperar datos fácilmente. Esta área de almacenamiento temporal, conocida como caché, está más fácilmente disponible para el procesador que la fuente de memoria principal de la computadora, generalmente alguna forma de DRAM.

La memoria de caché a veces se denomina memoria CPU (unidad de procesamiento central) porque generalmente se integra directamente en el chip de la CPU o se coloca en un chip separado que tiene una interconexión de bus separada con la CPU. Por lo tanto, es más accesible para el procesador y puede aumentar la eficiencia porque está físicamente cerca del procesador.

Para estar cerca del procesador, la memoria de caché debe ser mucho más pequeña que la memoria principal. En consecuencia, tiene menos espacio de almacenamiento. También es más caro que la memoria principal, ya que es un chip más complejo que produce un mayor rendimiento.

Lo que sacrifica en tamaño y precio, lo compensa en velocidad. La memoria de caché funciona de 10 a 100 veces más rápido que la RAM, lo que requiere solo unos pocos nanosegundos para responder a una solicitud de CPU.

El nombre del hardware real que se usa para la memoria de caché es la memoria de acceso aleatorio estático de alta velocidad (SRAM). El nombre del hardware que se usa en la memoria principal de una computadora es la memoria dinámica de acceso aleatorio (DRAM).

La memoria de caché no debe confundirse con el caché de término más amplio. Los cachés son tiendas de datos temporales que pueden existir tanto en hardware como en software. La memoria de caché se refiere al componente de hardware específico que permite a las computadoras crear cachés en varios niveles de la red. Un caché es un hardware o software que se utiliza para almacenar algo, típicamente datos, temporalmente en un entorno informático.

RAM (memoria de acceso aleatorio) es una forma de memoria de la computadora que se puede leer y cambiar en cualquier orden, generalmente usado para almacenar datos de trabajo y código de máquina. A random access memory device allows data items to be read or written in almost the same amount of time regardless of the physical location of data inside the memory, in contrast with other direct-access data storage media (such as hard disks, CD-RWS, DVD-RWs and the older magnetic tapes and drum memory), where the time required to read and write data items varies significantly depending on their physical locations on the recording medium, due to mechanical limitations such as media rotation speeds and arm movimiento.

En informática, una instrucción es una operación única de un procesador definido por el conjunto de instrucciones del procesador. Un programa de computadora es una lista de instrucciones que le dicen a una computadora qué hacer. Todo lo que hace una computadora se logra utilizando un programa de computadora. Los programas que se almacenan en la memoria de una computadora ("programación interna") permiten que la computadora haga una cosa tras otra, incluso con descansos en el medio.

Un lenguaje de programación es cualquier conjunto de reglas que conviertan cadenas o elementos gráficos del programa en el caso de los lenguajes de programación visual, a varios tipos de salida del código de máquina. Los lenguajes de programación son un tipo de lenguaje informático utilizado en la programación de computadoras para implementar algoritmos.

Los lenguajes de programación a menudo se dividen en dos amplias categorías:

También hay varios paradigmas de programación diferentes. Los paradigmas de programación son diferentes formas o estilos en los que se puede organizar un programa o lenguaje de programación determinado. Cada paradigma consiste en ciertas estructuras, características y opiniones sobre cómo se deben abordar los problemas de programación comunes.

Los paradigmas de programación no son lenguajes o herramientas. No puedes "construir" nada con un paradigma. Son más como un conjunto de ideales y pautas que muchas personas han acordado, seguido y expandido. Los lenguajes de programación no siempre están vinculados a un paradigma particular. Hay idiomas que se han construido con un cierto paradigma en mente y tienen características que facilitan ese tipo de programación más que otros (Haskell y la programación funcional es un buen ejemplo). Pero también hay lenguajes de "paradigma múltiple" en los que puede adaptar su código para que se ajuste a un cierto paradigma u otro (JavaScript y Python son buenos ejemplos).

Un tipo de datos, en la programación, es una clasificación que especifica qué tipo de valor tiene una variable y qué tipo de operaciones matemáticas, relacionales o lógicas se pueden aplicar sin causar un error.

Los tipos de datos primitivos son los tipos de datos más básicos en un lenguaje de programación. Son los bloques de construcción de tipos de datos más complejos. Los tipos de datos primitivos están predefinidos por el lenguaje de programación y son nombrados por una palabra clave reservada.

Los tipos de datos no primitivos también se conocen como tipos de datos de referencia. Son creados por el programador y no están definidos por el lenguaje de programación. Los tipos de datos no ejercicios también se denominan tipos de datos compuestos porque están compuestos por otros tipos.

En la programación de computadoras, una declaración es una unidad sintáctica de un lenguaje de programación imperativo que expresa alguna acción que se llevará a cabo. Un programa escrito en dicho lenguaje se forma una secuencia de una o más declaraciones. Una declaración puede tener componentes internos (por ejemplo, expresiones). Hay dos tipos principales de declaraciones en cualquier lenguaje de programación que sea necesario para construir la lógica de un código.

Hay dos tipos de declaraciones condicionales principalmente:

Hay tres tipos de bucles principalmente:

Una función es un bloque de declaraciones que realiza una tarea específica. Las funciones aceptan datos, procesarlos y devolver un resultado o ejecutarlos. Las funciones se escriben principalmente para apoyar el concepto de reutilización. Una vez que se escribe una función, se puede llamar fácilmente sin tener que repetir el mismo código.

Diferentes idiomas funcionales usan diferentes sintaxis para escribir funciones.

Lea más sobre las funciones aquí

En informática, una estructura de datos es una organización de datos, gestión y formato de almacenamiento que permite un acceso y modificación eficientes. Más precisamente, una estructura de datos es una colección de valores de datos, las relaciones entre ellos y las funciones u operaciones que pueden aplicarse a los datos.

Los algoritmos son los conjuntos de pasos necesarios para completar el cálculo. Están en el corazón de lo que hacen nuestros dispositivos, y este no es un concepto nuevo. Desde el desarrollo de las matemáticas en sí, se han necesitado algoritmos para ayudarnos a completar las tareas de manera más eficiente, pero hoy vamos a echar un vistazo a un par de problemas informáticos modernos como la clasificación y la búsqueda de gráficos y mostrar cómo las hemos hecho más eficientes, por lo que puede encontrar más fácilmente la tarifa aérea o las instrucciones de mapa baratas a las instrucciones de Invierno o un restaurante o algo.

La complejidad del tiempo de un algoritmo estima cuánto tiempo usará el algoritmo para alguna entrada. La idea es representar la eficiencia como una función cuyo parámetro es el tamaño de entrada. Al calcular la complejidad del tiempo, podemos determinar si el algoritmo es lo suficientemente rápido sin implementarlo.

La complejidad del espacio se refiere a la cantidad total de espacio de memoria que utiliza un algoritmo/programa, incluido el espacio de los valores de entrada para la ejecución. Calcule el espacio ocupado por variables en un algoritmo/programa para determinar la complejidad del espacio.

La clasificación es el proceso de organizar una lista de elementos en un orden particular. Por ejemplo, si tuviera una lista de nombres, es posible que desee ordenarlos alfabéticamente. Alternativamente, si tenía una lista de números, es posible que desee ponerlos en orden de más pequeño a más grande. La clasificación es una tarea común, y es una que podemos hacer de muchas maneras diferentes.

La búsqueda es un algoritmo para encontrar un cierto elemento objetivo dentro de un contenedor. Los algoritmos de búsqueda están diseñados para verificar un elemento o recuperar un elemento de cualquier estructura de datos donde se almacene.

Las cadenas son una de las estructuras de datos más utilizadas y más importantes en la programación, este repositorio contiene algunos de los algoritmos más utilizados que ayudan a buscar tiempo de búsqueda más rápido para mejorar nuestro código.

La búsqueda de gráficos es el proceso de búsqueda a través de un gráfico para encontrar un nodo particular. Un gráfico es una estructura de datos que consiste en un conjunto finito (y posiblemente mutable) de vértices o nodos o puntos, junto con un conjunto de pares no ordenados de estos vértices para un gráfico no dirigido o un conjunto de pares ordenados para un gráfico dirigido. Estos pares se conocen como bordes, arcos o líneas para un gráfico no dirigido, y como flechas, bordes dirigidos, arcos dirigidos o líneas dirigidas para un gráfico dirigido. Los vértices pueden ser parte de la estructura gráfica o pueden ser entidades externas representadas por índices o referencias enteras. Los gráficos son una de las estructuras de datos más útiles para muchas aplicaciones del mundo real. Los gráficos se utilizan para modelar relaciones por pares entre objetos. Por ejemplo, la red de ruta de la aerolínea es un gráfico en el que las ciudades son los vértices, y las rutas de vuelo son los bordes. Los gráficos también se utilizan para representar redes. Internet se puede modelar como un gráfico en el que las computadoras son los vértices, y los enlaces entre las computadoras son los bordes. Los gráficos también se usan en redes sociales como LinkedIn y Facebook. Los gráficos se utilizan para representar muchas aplicaciones del mundo real: redes informáticas, diseño de circuitos y programación aeronáutica, por nombrar solo algunas.

La programación dinámica es un método de optimización matemática y un método de programación de computadoras. Richard Bellman desarrolló el método en la década de 1950 y ha encontrado aplicaciones en numerosos campos, desde ingeniería aeroespacial hasta economía. En ambos contextos, se refiere a simplificar un problema complicado al dividirlo en subproblemas más simples de manera recursiva. Si bien algunos problemas de decisión no se pueden desarmar de esta manera, las decisiones que abarcan varios puntos en el tiempo a menudo se separan de manera recursiva. Del mismo modo, en la informática, si un problema se puede resolver de manera óptima dividiéndolo en subproblemas y luego encontrar recursivamente las soluciones óptimas a los subproblemas, entonces se dice que tiene una subestructura óptima. La programación dinámica es una forma de resolver problemas con estas propiedades. El proceso de romper un problema complicado en subproblemas más simples se llama "dividir y conquistar".

Los algoritmos codiciosos son una clase simple e intuitiva de algoritmos que se pueden usar para encontrar la solución óptima para algunos problemas de optimización. Se llaman codiciosos porque, en cada paso, toman la decisión que parece mejor en ese momento. Esto significa que los algoritmos codiciosos no garantizan devolver la solución globalmente óptima, sino que toman decisiones localmente óptimas con la esperanza de encontrar un óptimo global. Los algoritmos codiciosos se utilizan para problemas de optimización. Un problema de optimización se puede resolver usando codicioso si el problema tiene la siguiente propiedad: en cada paso, podemos elegir una decisión que se vea mejor en el momento, y obtenemos la solución óptima al problema completo.

El retroceso es una técnica algorítmica para resolver problemas de forma recursiva al tratar de construir una solución incrementalmente, una pieza a la vez, eliminando esas soluciones que no satisfacen las limitaciones del problema en cualquier momento (por el tiempo, aquí, se refiere al tiempo transcurrido hasta que alcanza cualquier nivel del árbol de búsqueda).

Branch and Bound es una técnica general para resolver problemas de optimización combinatoria. Es una técnica de enumeración sistemática que reduce el número de soluciones candidatas mediante el uso de la estructura del problema para eliminar las soluciones candidatas que no pueden ser óptimas.

Complejidad del tiempo : se define como el número de veces que se espera que se ejecute un conjunto de instrucciones en particular en lugar del tiempo total tomado. Dado que el tiempo es un fenómeno dependiente, la complejidad del tiempo puede variar en algunos factores externos como la velocidad del procesador, el compilador utilizado, etc.

Complejidad del espacio : es el espacio de memoria total consumido por el programa para su ejecución.

Ambos se calculan como la función del tamaño de entrada (n). La complejidad del tiempo de un algoritmo se expresa en la notación grande.

La eficiencia de un algoritmo depende de estos dos parámetros.

Tipos de complejidad del tiempo:

Algunas complejidades de tiempo comunes son:

O (1) : Esto denota el tiempo constante. O (1) generalmente significa que un algoritmo tendrá un tiempo constante independientemente del tamaño de entrada. Los mapas hash son ejemplos perfectos de tiempo constante.

O (log n) : esto denota tiempo logarítmico. O (log n) significa disminuir con cada instancia para las operaciones. Buscar elementos en árboles de búsqueda binarios (BST) es un buen ejemplo de tiempo logarítmico.

O (n) : esto denota tiempo lineal. O (n) significa que el rendimiento es directamente proporcional al tamaño de la entrada. En términos simples, el número de entradas y el tiempo tomado para ejecutar esas entradas será proporcional. La búsqueda lineal en las matrices es un gran ejemplo de complejidad del tiempo lineal.

O (n*n) : esto denota tiempo cuadrático. O (n^2) significa que el rendimiento es directamente proporcional al cuadrado de la entrada tomada. En simple, el tiempo tomado para la ejecución tomará tiempos cuadrados aproximadamente el tamaño de la entrada. Los bucles anidados son ejemplos perfectos de complejidad del tiempo cuadrático.

O (n log n) : esto denota complejidad del tiempo polinomial. O (n log n) significa que el rendimiento es N veces el de O (log n) (que es la peor complejidad). Un buen ejemplo se dividiría y conquistaría algoritmos como la clasificación de fusiones. Este algoritmo primero divide el conjunto, que toma el tiempo O (log n), luego conquista y clasifica a través del conjunto, que toma o (n) tiempo, por lo tanto, la clasificación de fusión toma o (n log n) tiempo.

| Algoritmo | Complejidad del tiempo | Complejidad espacial | ||

|---|---|---|---|---|

| Mejor | Promedio | El peor | El peor | |

| Clasificación de selección | Ω (n^2) | θ (n^2) | O (n^2) | O (1) |

| Burbuja | Ω (n) | θ (n^2) | O (n^2) | O (1) |

| Clasificación de inserción | Ω (n) | θ (n^2) | O (n^2) | O (1) |

| Sort de montón | Ω (n log (n)) | θ (n log (n)) | O (n log (n)) | O (1) |

| Clasificación rápida | Ω (n log (n)) | θ (n log (n)) | O (n^2) | En) |

| Fusionar | Ω (n log (n)) | θ (n log (n)) | O (n log (n)) | En) |

| Clasificación de cubos | Ω (N +K) | θ (n +k) | O (n^2) | En) |

| Radix Sort | Ω (NK) | θ (NK) | O (NK) | O (N + K) |

| Clasificar | Ω (N +K) | θ (n +k) | O (N +K) | De acuerdo) |

| Clasificar con cáscara | Ω (n log (n)) | θ (n log (n)) | O (n^2) | O (1) |

| Tim sort | Ω (n) | θ (n log (n)) | O (n log (n)) | En) |

| Ordena de árboles | Ω (n log (n)) | θ (n log (n)) | O (n^2) | En) |

| Orden de cubo | Ω (n) | θ (n log (n)) | O (n log (n)) | En) |

| Algoritmo | Complejidad del tiempo | ||

|---|---|---|---|

| Mejor | Promedio | El peor | |

| Búsqueda lineal | O (1) | EN) | EN) |

| Búsqueda binaria | O (1) | O (logn) | O (logn) |

Alan Turing (nacido el 23 de junio de 1912, Londres, Eng. - Fundado el 7 de junio de 1954, Wilmslow, Cheshire) fue un matemático y lógico inglés. Estudió en la Universidad de Cambridge y el Instituto de Estudios Avanzados de Princeton. En su artículo seminal de 1936 "Sobre números computables", demostró que no podría existir ningún método algorítmico universal para determinar la verdad en las matemáticas y que las matemáticas siempre contendrán proposiciones indecidibles (en oposición a desconocidas). Ese documento también introdujo la máquina Turing. Él creía que las computadoras eventualmente serían capaces de pensar que no se podían distinguir de la de un humano y propusieron una prueba simple (ver prueba de Turing) para evaluar esta capacidad. Sus documentos sobre el tema son ampliamente reconocidos como la base de la investigación en inteligencia artificial. Hizo un valioso trabajo en criptografía durante la Segunda Guerra Mundial, jugando un papel importante en la ruptura del código de enigma utilizado por Alemania para las comunicaciones de la radio. Después de la guerra, enseñó en la Universidad de Manchester y comenzó a trabajar en lo que ahora se conoce como inteligencia artificial. En medio de este trabajo innovador, Turing fue encontrado muerto en su cama, envenenado por cianuro. Su muerte siguió su arresto por un acto homosexual (entonces un delito) y una sentencia de 12 meses de terapia hormonal.

Después de una campaña pública en 2009, el primer ministro británico, Gordon Brown, hizo una disculpa pública oficial en nombre del gobierno británico por la terrible forma en que se trató a Turing. La reina Isabel II otorgó un indulto póstumo en 2013. El término "Ley de Alan Turing" ahora se usa informalmente para referirse a una ley de 2017 en el Reino Unido que perdonó retroactivamente a los hombres advirtidos o condenados bajo la legislación histórica que prohibió los actos homosexuales.

Turing tiene un amplio legado con estatuas de él y muchas cosas que llevan su nombre, incluido un premio anual a las innovaciones de la informática. Aparece en la actual nota de £ 50 del Banco de Inglaterra, que se lanzó el 23 de junio de 2021 para coincidir con su cumpleaños. Una serie de la BBC de 2019, votada por la audiencia, lo nombró la persona más grande del siglo XX.

La ingeniería de software es la rama de la informática que se ocupa del diseño, desarrollo, pruebas y mantenimiento de aplicaciones de software. Los ingenieros de software aplican principios de ingeniería y conocimiento de los lenguajes de programación para crear soluciones de software para usuarios finales.

Veamos las diversas definiciones de ingeniería de software:

Los ingenieros exitosos saben cómo usar los lenguajes de programación correctos, las plataformas y las arquitecturas para desarrollar todo, desde juegos de computadora hasta sistemas de control de red. Además de construir sus sistemas, los ingenieros de software también prueban, mejoran y mantienen el software creado por otros ingenieros.

En este rol, sus tareas diarias pueden incluir lo siguiente:

El proceso de ingeniería de software involucra varias fases, incluidas la recopilación de requisitos, el diseño, la implementación, las pruebas y el mantenimiento. Siguiendo un enfoque disciplinado para el desarrollo de software, los ingenieros de software pueden crear software de alta calidad que satisfaga las necesidades de sus usuarios.

La primera fase de la ingeniería de software es la recopilación de requisitos. En esta fase, el ingeniero de software trabaja con el cliente para determinar los requisitos funcionales y no funcionales del software. Los requisitos funcionales describen lo que debe hacer el software, mientras que los requisitos no funcionales describen qué tan bien debe hacerlo. La recopilación de requisitos es una fase crítica, ya que sienta las bases para todo el proceso de desarrollo de software.

Después de reunir los requisitos, la siguiente fase es el diseño. En esta fase, el ingeniero de software crea un plan detallado para la arquitectura y la funcionalidad del software. Este plan incluye un documento de diseño de software que especifica la estructura, el comportamiento y las interacciones del software con otros sistemas. El documento de diseño de software es esencial, ya que sirve como un plan para la fase de implementación.

La fase de implementación es donde el ingeniero de software crea el código real para el software. Aquí es donde el documento de diseño se transforma en software de trabajo. La fase de implementación implica escribir código, compilarlo y probarlo para garantizar que cumpla con los requisitos especificados en el documento de diseño.

Las pruebas son una fase crítica en la ingeniería de software. En esta fase, el ingeniero de software verifica para garantizar que el software funcione correctamente, sea confiable y fácil de usar. Esto implica varios tipos de pruebas, incluidas pruebas unitarias, pruebas de integración y pruebas de sistema. Las pruebas aseguran que el software cumpla con los requisitos y funciones como se esperaba.

La fase final de la ingeniería de software es el mantenimiento. En esta fase, el ingeniero de software realiza cambios en el software para corregir errores, agregar nuevas funciones o mejorar su rendimiento. El mantenimiento es un proceso continuo que continúa durante toda la vida del software.

Data Science extrae ideas valiosas de datos a menudo desordenados mediante la aplicación de la informática, las estadísticas y el conocimiento del dominio bajo consideración. Los ejemplos del uso de la ciencia de datos incluyen derivar el sentimiento del cliente de los registros de llamadas o los sistemas de recomendación de compra derivados de los registros de ventas.

Un circuito integrado o un circuito integrado monolítico (también denominado IC, un chip o un microchip) es un conjunto de circuitos electrónicos en una pequeña pieza plana (o "chip") de material semiconductor, generalmente silicio. Muchos pequeños MOSFET (metal-óxido-transistores de efecto de campo semiconductor) se integran en un chip pequeño. Esto da como resultado circuitos que son órdenes de magnitud más pequeños, más rápidos y menos costosos que los construidos con componentes electrónicos discretos. La capacidad de producción en masa, la confiabilidad y el enfoque de bloqueo de edificios del CI para el diseño integrado de circuitos han asegurado la rápida adopción de IC estandarizados en lugar de transistores discretos. Los IC ahora se usan en prácticamente todos los equipos electrónicos y han revolucionado el mundo de la electrónica. Computers, mobile phones, and other home appliances are now inextricable parts of the structure of modern societies, made possible by the small size and low cost of ICs such as modern computer processors and microcontrollers.

Very-large-scale integration was made practical by technological advancements in metal–oxide–silicon (MOS) semiconductor device fabrication. Since their origins in the 1960s, the size, speed, and capacity of chips have progressed enormously, driven by technical advances that fit more and more MOS transistors on chips of the same size – a modern chip may have many billions of MOS transistors in an area the size of a human fingernail. These advances, roughly following Moore's law, make today's computer chips possess millions of times the capacity and thousands of times the speed of the computer chips of the early 1970s.

ICs have two main advantages over discrete circuits: cost and performance. The cost is low because the chips, with all their components, are printed as a unit by photolithography rather than being constructed one transistor at a time. Furthermore, packaged ICs use much less material than discrete circuits. Performance is high because the IC's components switch quickly and consume comparatively little power because of their small size and proximity. The main disadvantage of ICs is the high cost of designing them and fabricating the required photomasks. This high initial cost means ICs are only commercially viable when high production volumes are anticipated.

Modern electronic component distributors often further sub-categorize integrated circuits:

Object Oriented Programming is a fundamental programming paradigm that is based on the concepts of objects and data.

It is the standard way of code that every programmer has to abide by for better readability and reusability of the code.

Read more about these concepts of OOP here

In computer science, functional programming is a programming paradigm where programs are constructed by applying and composing functions. It is a declarative programming paradigm in which function definitions are trees of expressions that map values to other values, rather than a sequence of imperative statements which update the running state of the program.

In functional programming, functions are treated as first-class citizens, meaning that they can be bound to names (including local identifiers), passed as arguments, and returned from other functions, just as any other data type can. This allows programs to be written in a declarative and composable style, where small functions are combined in a modular manner.

Functional programming is sometimes treated as synonymous with purely functional programming, a subset of functional programming which treats all functions as deterministic mathematical functions, or pure functions. When a pure function is called with some given arguments, it will always return the same result, and cannot be affected by any mutable state or other side effects. This is in contrast with impure procedures, common in imperative programming, which can have side effects (such as modifying the program's state or taking input from a user). Proponents of purely functional programming claim that by restricting side effects, programs can have fewer bugs, be easier to debug and test, and be more suited to formal verification procedures.

Functional programming has its roots in academia, evolving from the lambda calculus, a formal system of computation based only on functions. Functional programming has historically been less popular than imperative programming, but many functional languages are seeing use today in industry and education.

Some examples of functional programming languages are:

Functional programming is derived historically from the lambda calculus . Lambda calculus is a framework developed by Alonzo Church to study computations with functions. It is often called "the smallest programming language in the world." It provides a definition of what is computable and what is not. It is equivalent to a Turing machine in its computational ability and anything computable by the lambda calculus, just like anything computable by a Turing machine, is computable. It provides a theoretical framework for describing functions and their evaluations.

Some essential concepts of functional programming are:

Pure functions : These functions have two main properties. First, they always produce the same output for the same arguments irrespective of anything else. Secondly, they have no side effects. ie they do not modify any arguments or local/global variables or input/output streams. The latter property is called immutability . The pure function's only result is the value it returns. They are deterministic. Programs done using functional programming are easy to debug because they have no side effects or hidden I/O. Pure functions also make it easier to write parallel/concurrent applications. When code is written in this style, a smart compiler can do many things- it can parallelize the instructions, wait to evaluate results until needed and memorize the results since the results never change as long as the input doesn't change. Here is a simple example of a pure function in Python:

def sum ( x , y ): # sum is a function taking x and y as arguments

return x + y # returns x + y without changing the valueRecursion : There are no "for" or "while" loops in pure functional programming languages. Iteration is implemented through recursion. Recursive functions repeatedly call themselves until a base case is reached. Here is a simple example of a recursion function in C:

int fib ( n ) {

if ( n <= 1 )

return 1 ;

else

return ( fib ( n - 1 ) + fib ( n - 2 ));

}Referential transparency : In functional programs, variables once defined do not change their value throughout the program. Functional programs do not have assignment statements. If we have to store some value, we define a new variable instead. This eliminates any chance of side effects because any variable can be replaced with its actual value at any point of the execution. The state of any variable is constant at any instant. Ejemplo:

x = x + 1 # this changed the value assigned to the variable x

# Therefore, the expression is NOT referentially transparentFunctions are first-class and can be higher order : First class functions are treated as first-class variables. The first class variables can be passed to functions as parameters, can be returned from functions or stored in data structures.

A combination of function applications may be defined using a LISP form called funcall , which takes as arguments a function and a series of arguments and applies that function to those arguments:

( defun filter (list-of-elements test)

( cond (( null list-of-elements) nil )

(( funcall test ( car list-of-elements))

( cons ( car list-of-elements)

(filter ( cdr list-of-elements)

test)))

( t (filter ( cdr list-of-elements)

test))))The function filter applies the test to the first element of the list. If the test returns non-nil, it conses the element onto the result of filter applied to the cdr of the list; otherwise, it just returns the filtered cdr. This function may be used with different predicates passed in as parameters to perform a variety of filtering tasks:

> (filter ' ( 1 3 -9 5 -2 -7 6 ) #' plusp ) ; filter out all negative numbers output: (1 3 5 6)

> (filter ' ( 1 2 3 4 5 6 7 8 9 ) #' evenp ) ; filter out all odd numbersoutput: (2 4 6 8)

etcétera.

Variables are immutable : In functional programming, we can't modify a variable after it's been initialized. We can create new variables- but we can't modify existing variables, and this really helps to maintain the state throughout the runtime of a program. Once we create a variable and set its value, we can have full confidence knowing that the value of that variable will never change.

An operating system (or OS for short) acts as an intermediary between a computer user and computer hardware. The purpose of an operating system is to provide an environment in which a user can execute programs conveniently and efficiently. An operating system is software that manages computer hardware. The hardware must provide appropriate mechanisms to ensure the correct operation of the computer system and to prevent user programs from interfering with the proper operation of the system. An even more common definition is that the operating system is the one program running at all times on the computer (usually called the kernel), with all else being application programs.

Operating systems can be viewed from two viewpoints: resource managers and extended machines. In the resource-manager view, the operating system's job is to manage the different parts of the system efficiently. In the extended-machine view, the job of the system is to provide the users with abstractions that are more con- convenient to use than the actual machine. These include processes, address spaces, and files. Operating systems have a long history, from when they replaced the operator to modern multiprogramming systems. Highlights include early batch systems, multiprogramming systems, and personal computer systems. Since operating systems interact closely with the hardware, some knowledge of computer hardware is useful for understanding them. Computers are built up of processors, memories, and I/O devices. These parts are connected by buses. The basic concepts on which all operating systems are built are processes, memory management, I/O management, the file system, and security. The heart of any operating system is the set of system calls that it can handle. These tell what the operating system does.

The operating system manages all the pieces of a complex system. Modern computers consist of processors, memories, timers, disks, mice, network interfaces, printers, and a wide variety of other devices. In the bottom-up view, the job of the operating system is to provide for an orderly and controlled allocation of the processors, memories, and I/O devices among the various programs wanting them. Modern operating systems allow multiple programs to be in memory and run simultaneously. Imagine what would happen if three programs running on some computer all tried to print their output simultaneously on the same printer. The result would be utter chaos. The operating system can bring order to the potential chaos by buffering all the output destined for the printer on the disk. When one program is finished, the operating system can then copy its output from the disk file where it has been stored for the printer, while at the same time, the other program can continue generating more output, oblivious to the fact that the output is not going to the printer (yet). When a computer (or network) has more than one user, the need to manage and protect the memory, I/O devices, and other resources even more since the users might otherwise interfere with one another. In addition, users often need to share not only hardware but also information (files, databases, etc.). In short, this view of the operating system holds that its primary task is to keep track of which programs are using which resource, to grant resource requests, to account for usage and to mediate conflicting requests from different programs and users.

The architecture of most computers at the machine-language level is primitive and awkward to program, especially for input/output. To make this point more concrete, consider modern SATA (Serial ATA) hard disks used on most computers. What a programmer would have to know to use the disk. Since then, the interface has been revised multiple times and is more complicated than it was in 2007. No sane programmer would want to deal with this disk at the hardware level. Instead, a piece of software called a disk driver deals with the hardware and provides an interface to read and write disk blocks, without getting into the details. Operating systems contain many drivers for controlling I/O devices. But even this level is much too low for most applications. For this reason, all operating systems provide yet another layer of abstraction for using disks: files. Using this abstraction, programs can create, write, and read files without dealing with the messy details of how the hardware works. This abstraction is the key to managing all this complexity. Good abstractions turn a nearly impossible task into two manageable ones. The first is defining and implementing the abstractions. The second is using these abstractions to solve the problem at hand.

First Generation (1945-55) : Little progress was achieved in building digital computers after Babbage's disastrous efforts until the World War II era. At Iowa State University, Professor John Atanasoff and his graduate student Clifford Berry created what is today recognized as the first operational digital computer. Konrad Zuse in Berlin constructed the Z3 computer using electromechanical relays around the same time. The Mark I was created by Howard Aiken at Harvard, the Colossus by a team of scientists at Bletchley Park in England, and the ENIAC by William Mauchley and his doctoral student J. Presper Eckert at the University of Pennsylvania in 1944.

Second Generation (1955-65) : The transistor's invention in the middle of the 1950s drastically altered the situation. Computers became dependable enough that they could be manufactured and sold to paying customers with the assumption that they would keep working long enough to conduct some meaningful job. Mainframes, as these machines are now known, were kept locked up in huge, particularly air-conditioned computer rooms, with teams of qualified operators to manage them. Only huge businesses, significant government entities, or institutions could afford the price tag of several million dollars.

Third Generation (1965-80) : In comparison to second-generation computers, which were constructed from individual transistors, the IBM 360 was the first major computer line to employ (small-scale) ICs (Integrated Circuits). As a result, it offered a significant price/performance benefit. It was an instant hit, and all the other big manufacturers quickly embraced the concept of a family of interoperable computers. All software, including the OS/360 operating system, was supposed to be compatible with all models in the original design. It had to run on massive systems, which frequently replaced 7094s for heavy computation and weather forecasting, and tiny systems, which frequently merely replaced 1401s for transferring cards to tape. Both systems with few peripherals and systems with many peripherals needed to function well with it. It had to function both in professional and academic settings. Above all, it had to be effective for each of these many applications.

Fourth Generation (1980-Present) : The personal computer era began with the creation of LSI (Large Scale Integration) circuits, processors with thousands of transistors on a square centimeter of silicon. Although personal computers, originally known as microcomputers, did not change significantly in architecture from minicomputers of the PDP-11 class, they did differ significantly in price.

Fifth Generation (1990-Present) : People have yearned for a portable communication gadget ever since detective Dick Tracy in the 1940s comic strip began conversing with his "two-way radio wristwatch." In 1946, a real mobile phone made its debut, and it weighed about 40 kilograms. The first real portable phone debuted in the 1970s and was incredibly lightweight at about one kilogram. It was jokingly referred to as "the brick." Soon, everyone was clamoring for one.

Mainframe OS : At the high end are the operating systems for mainframes, those room-sized computers still found in major corporate data centres. These computers differ from personal computers in terms of their I/O capacity. A mainframe with 1000 disks and millions of gigabytes of data is not unusual; a personal computer with these specifications would be the envy of its friends. Mainframes are also making some- a thing of a comeback as high-end Web servers, servers for large-scale electronic commerce sites, and servers for business-to-business transactions. The operating systems for mainframes are heavily oriented toward processing many jobs at once, most of which need prodigious amounts of I/O. They typically offer three kinds of services: batch, transaction processing, and timesharing

Server OS : One level down is the server operating systems. They run on servers, which are either very large personal computers, workstations, or even mainframes. They serve multiple users at once over a network and allow the users to share hardware and software resources. Servers can provide print service, file service, or Web service. Internet providers run many server machines to support their customers, and Websites use servers to store Web pages and handle incoming requests. Typical server operating systems are Solaris, FreeBSD, Linux, and Windows Server 201x.

Multiprocessor OS : An increasingly common way to get major-league computing power is to connect multiple CPUs into a single system. Depending on precisely how they are connected and what is shared, these systems are called parallel computers, multi-computers, or multiprocessors. They need special operating systems, but often these are variations on the server operating systems, with special features for communication, connectivity, and consistency.

Personal Computer OS : The next category is the personal computer operating system. Modern ones all support multiprogramming, often with dozens of programs started up at boot time. Their job is to provide good support to a single user. They are widely used for word processing, spreadsheets, games, and Internet access. Common examples are Linux, FreeBSD, Windows 7, Windows 8, and Apple's OS X. Personal computer operating systems are so widely known that probably little introduction is needed. Many people are not even aware that other kinds exist.

Embedded OS : Embedded systems run on computers that control devices that are not generally considered computers and do not accept user-installed software. Typical examples are microwave ovens, TV sets, cars, DVD recorders, traditional phones, and MP3 players. The main property distinguishing embedded systems from handhelds is the certainty that no untrusted software will ever run on them. You cannot download new applications to your microwave oven—all the software is in ROM. This means there is no need for protection between applications, simplifying design. Systems such as Embedded Linux, QNX and VxWorks is popular in this domain.

Smart Card OS : The smallest operating systems run on credit-card-sized smart card devices with CPU chips. They have very severe processing power and memory constraints. Some are powered by contacts in the reader into which they are inserted, but contactless smart cards are inductively powered, greatly limiting what they can do. Some can handle only a single function, such as electronic payments, but others can handle multiple functions. Often these are proprietary systems. Some smart cards are Java oriented. This means that the ROM on the smart card holds an interpreter for the Java Virtual Machine (JVM). Java applets (small programs) are downloaded to the card and are interpreted by the JVM interpreter. Some of these cards can handle multiple Java applets at the same time, leading to multiprogramming and the need to schedule them. Resource management and protection also become an issue when two or more applets are present simultaneously. These issues must be handled by the (usually extremely primitive) operating system present on the card.

The term memory refers to the component within your computer that allows short-term data access. You may recognize this component as DRAM or dynamic random-access memory. Your computer performs many operations by accessing data stored in its short-term memory. Some examples of such operations include editing a document, loading applications, and browsing the Internet. The speed and performance of your system depend on the amount of memory that is installed on your computer.

If you have a desk and a filing cabinet, the desk represents your computer's memory. Items you need to use immediately are kept on your desk for easy access. However, not much can be stored on a desk due to its size limitations.

Whereas memory refers to the location of short-term data, storage is the component within your computer that allows you to store and access data long-term. Usually, storage comes in the form of a solid-state drive or a hard drive. Storage houses your applications, operating system, and files indefinitely. Computers need to read and write information from the storage system, so the storage speed determines how fast your system can boot up, load, and access what you've saved.

While the desk represents the computer's memory, the filing cabinet represents your computer's storage. It holds items that need to be saved and stored but is not necessarily needed for immediate access. The size of the filing cabinet means that it can hold many things.

An important distinction between memory and storage is that memory clears when the computer is turned off. On the other hand, storage remains intact no matter how often you shut off your computer. Therefore, in the desk and filing cabinet analogy, any files left on your desk will be thrown away when you leave the office. Everything in your filing cabinet will remain.

At the heart of computer systems lies memory, the space where programs run and data is stored. But what happens when the programs you're running and the data you're working with exceed the physical capacity of your computer's memory? This is where virtual memory steps in, acting as a smart extension to your computer's memory and enhancing its capabilities.

Definition and Purpose of Virtual Memory:

Virtual memory is a memory management technique employed by operating systems to overcome the limitations of physical memory (RAM). It creates an illusion for software applications that they have access to a larger amount of memory than what is physically installed on the computer. In essence, it enables programs to utilize memory space beyond the confines of the computer's physical RAM.

The primary purpose of virtual memory is to enable efficient multitasking and the execution of larger programs, all while maintaining the responsiveness of the system. It achieves this by creating a seamless interaction between the physical RAM and secondary storage devices, like the hard drive or SSD.

How Virtual Memory Extends Available Physical Memory:

Think of virtual memory as a bridge that connects your computer's RAM and its secondary storage (disk drives). When you run a program, parts of it are loaded into the faster physical memory (RAM). However, not all parts of the program may be used immediately.

Virtual memory exploits this situation by moving sections of the program that aren't actively being used from RAM to the secondary storage, creating more room in RAM for the parts that are actively in use. This process is transparent to the user and the running programs. When the moved parts are needed again, they are swapped back into RAM, while other less active parts may be moved to the secondary storage.

This dynamic swapping of data in and out of physical memory is managed by the operating system. It allows programs to run even if they're larger than the available RAM, as the operating system intelligently decides what data needs to be in RAM for optimal performance.

In summary, virtual memory acts as a virtualization layer that extends the available physical memory by temporarily transferring parts of programs and data between the RAM and secondary storage. This process ensures that the computer can handle larger tasks and numerous programs simultaneously, all while maintaining efficient performance and responsiveness.

In computing, a file system or filesystem (often abbreviated to fs) is a method and data structure the operating system uses to control how data is stored and retrieved. Without a file system, data placed in a storage medium would be one large body of data with no way to tell where one piece of data stopped and the next began or where any piece of data was located when it was time to retrieve it. By separating the data into pieces and giving each piece a name, the data is easily isolated and identified. Taking its name from how a paper-based data management system is named, each data group is called a "file". The structure and logic rules used to manage the groups of data and their names are called a "file system."

There are many kinds of file systems, each with unique structure and logic, properties of speed, flexibility, security, size, and more. Some file systems have been designed to be used for specific applications. For example, the ISO 9660 file system is designed specifically for optical discs.

File systems can be used on many types of storage devices using various media. As of 2019, hard disk drives have been key storage devices and are projected to remain so for the foreseeable future. Other kinds of media that are used include SSDs, magnetic tapes, and optical discs. In some cases, such as with tmpfs, the computer's main memory (random-access memory, RAM) creates a temporary file system for short-term use.

Some file systems are used on local data storage devices; others provide file access via a network protocol (for example, NFS, SMB, or 9P clients). Some file systems are "virtual", meaning that the supplied "files" (called virtual files) are computed on request (such as procfs and sysfs) or are merely a mapping into a different file system used as a backing store. The file system manages access to both the content of files and the metadata about those files. It is responsible for arranging storage space; reliability, efficiency, and tuning with regard to the physical storage medium are important design considerations.

A file system stores and organizes data and can be thought of as a type of index for all the data contained in a storage device. These devices can include hard drives, optical drives, and flash drives.

File systems specify conventions for naming files, including the maximum number of characters in a name, which characters can be used, and, in some systems, how long the file name suffix can be. In many file systems, file names are not case-sensitive.

Along with the file itself, file systems contain information such as the file's size and its attributes, location, and hierarchy in the directory in the metadata. Metadata can also identify free blocks of available storage on the drive and how much space is available.

A file system also includes a format to specify the path to a file through the structure of directories. A file is placed in a directory -- or a folder in Windows OS -- or subdirectory at the desired place in the tree structure. PC and mobile OSes have file systems in which files are placed in a hierarchical tree structure.

Before files and directories are created on the storage medium, partitions should be put into place. A partition is a region of the hard disk or other storage that the OS manages separately. One file system is contained in the primary partition, and some OSes allow for multiple partitions on one disk. In this situation, if one file system gets corrupted, the data in a different partition will be safe.

There are several types of file systems, all with different logical structures and properties, such as speed and size. The type of file system can differ by OS and the needs of that OS. Microsoft Windows, Mac OS X, and Linux are the three most common PC operating systems. Mobile OSes include Apple iOS and Google Android.

Major file systems include the following:

File allocation table (FAT) is supported by Microsoft Windows OS. FAT is considered simple and reliable and modeled after legacy file systems. FAT was designed in 1977 for floppy disks but was later adapted for hard disks. While efficient and compatible with most current OSes, FAT cannot match the performance and scalability of more modern file systems.

Global file system (GFS) is a file system for the Linux OS, and it is a shared disk file system. GFS offers direct access to shared block storage and can be used as a local file system.

GFS2 is an updated version with features not included in the original GFS, such as an updated metadata system. Under the GNU General Public License terms, both the GFS and GFS2 file systems are available as free software.

Hierarchical file system (HFS) was developed for use with Mac operating systems. HFS can also be called Mac OS Standard, succeeded by Mac OS Extended. Originally introduced in 1985 for floppy and hard disks, HFS replaced the original Macintosh file system. It can also be used on CD-ROMs.

The NT file system -- also known as the New Technology File System (NTFS) -- is the default file system for Windows products from Windows NT 3.1 OS onward. Improvements from the previous FAT file system include better metadata support, performance, and use of disk space. NTFS is also supported in the Linux OS through a free, open-source NTFS driver. Mac OSes have read-only support for NTFS.

Universal Disk Format (UDF) is a vendor-neutral file system for optical media and DVDs. UDF replaces the ISO 9660 file system and is the official file system for DVD video and audio, as chosen by the DVD Forum.

Cloud computing is a type of Internet-based computing that provides shared computer processing resources and data to computers and other devices on demand. It also allows authorized users and systems to access applications and data from any location with an internet connection.

It is a model for enabling ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (eg, networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.

Cloud computing is a big shift from how businesses think about IT resources. Here are seven common reasons organizations are turning to cloud computing services:

Cloud computing eliminates the capital expense of buying hardware and software and setting up and running on-site data centers—the racks of servers, the round-the-clock electricity for power and cooling, and the IT experts for managing the infrastructure. It adds up fast.

Most cloud computing services are provided self-service and on demand, so even vast amounts of computing resources can be provisioned in minutes, typically with just a few mouse clicks, giving businesses a lot of flexibility and taking the pressure off capacity planning.

The benefits of cloud computing services include the ability to scale elastically. In cloud speak, that means delivering the right amount of IT resources—for example, more or less computing power, storage, and bandwidth—right when it is needed and from the right geographic location.

On-site data centers typically require a lot of "racking and stacking"—hardware setup, software patching, and other time-consuming IT management chores. Cloud computing removes the need for many of these tasks, so IT teams can spend time on achieving more important business goals.

The biggest cloud computing services run on a worldwide network of secure data centers, which are regularly upgraded to the latest generation of fast and efficient computing hardware. This offers several benefits over a single corporate data center, including reduced network latency for applications and greater economies of scale.

Cloud computing makes data backup, disaster recovery, and business continuity easier and less expensive because data can be mirrored at multiple redundant sites on the cloud provider's network.

Many cloud providers offer a broad set of policies, technologies, and controls that strengthen your security posture overall, helping protect your data, apps, and infrastructure from potential threats.

Machine learning is the practice of teaching a computer to learn. The concept uses pattern recognition, as well as other forms of predictive algorithms, to make judgments on incoming data. This field is closely related to artificial intelligence and computational statistics.

In this, machine learning models are trained with labelled data sets, which allow the models to learn and grow more accurately over time. For example, an algorithm would be trained with pictures of dogs and other things, all labelled by humans, and the machine would learn ways to identify pictures of dogs on its own. Supervised machine learning is the most common type used today.

Practical applications of Supervised Learning –

In Unsupervised machine learning, a program looks for patterns in unlabeled data. Unsupervised machine learning can find patterns or trends that people aren't explicitly looking for. For example, an unsupervised machine learning program could look through online sales data and identify different types of clients making purchases.

Practical applications of unsupervised Learning

The disadvantage of supervised learning is that it requires hand-labelling by ML specialists or data scientists and requires a high cost to process. Unsupervised learning also has a limited spectrum for its applications. To overcome these drawbacks of supervised learning and unsupervised learning algorithms, the concept of Semi-supervised learning is introduced. Typically, this combination contains a very small amount of labelled data and a large amount of unlabelled data. The basic procedure involved is that first, the programmer will cluster similar data using an unsupervised learning algorithm and then use the existing labelled data to label the rest of the unlabelled data.

Practical applications of Semi-Supervised Learning –

This trains machines through trial and error to take the best action by establishing a reward system. Reinforcement learning can train models to play games or train autonomous vehicles to drive by telling the machine when it made the right decisions, which helps it learn over time what actions it should take.

Practical applications of Reinforcement Learning -

Natural language processing is a field of machine learning in which machines learn to understand natural language as spoken and written by humans instead of the data and numbers normally used to program computers. This allows machines to recognize the language, understand it, and respond to it, as well as create new text and translate between languages. Natural language processing enables familiar technology like chatbots and digital assistants like Siri or Alexa.

Practical applications of NLP:

Neural networks are a commonly used, specific class of machine learning algorithms. Artificial neural networks are modelled on the human brain, in which thousands or millions of processing nodes are interconnected and organized into layers.

In an artificial neural network, cells, or nodes, are connected, with each cell processing inputs and producing an output that is sent to other neurons. Labeled data moves through the nodes or cells, with each cell performing a different function. In a neural network trained to identify whether a picture contains a cat or not, the different nodes would assess the information and arrive at an output that indicates whether a picture features a cat.

Practical applications of Neural Networks:

Deep learning networks are advanced neural networks with multiple layers. These layered networks can process vast amounts of data and adjust the "weights" of each connection within the network. For example, in an image recognition system, certain layers of the neural network might detect individual facial features like eyes, nose, or mouth. Another layer would then analyze whether these features are arranged in a way that identifies a face.

Practical applications of Deep Learning:

Web Technology refers to the various tools and techniques that are utilized in the process of communication between different types of devices over the Internet. A web browser is used to access web pages. Web browsers can be defined as programs that display text, data, pictures, animation, and video on the Internet. Hyperlinked resources on the World Wide Web can be accessed using software interfaces provided by Web browsers.

The part of a website where the user interacts directly is termed the front end. It is also referred to as the 'client side' of the application.

The backend is the server side of a website. It is part of the website that users cannot see and interact with. It is the portion of software that does not come in direct contact with the users. It is used to store and arrange data.

A computer network is a set of computers sharing resources located on or provided by network nodes. Computers use common communication protocols over digital interconnections to communicate with each other. These interconnections are made up of telecommunication network technologies based on physically wired, optical, and wireless radio-frequency methods that may be arranged in a variety of network topologies.

The nodes of a computer network can include personal computers, servers, networking hardware, or other specialized or general-purpose hosts. They are identified by network addresses and may have hostnames. Hostnames serve as memorable labels for the nodes, rarely changed after the initial assignment. Network addresses serve for locating and identifying the nodes by communication protocols such as the Internet Protocol.

Computer networks may be classified by many criteria, including the transmission medium used to carry signals, bandwidth, communications protocols to organize network traffic, the network size, the topology, traffic control mechanism, and organizational intent.

There are two primary types of computer networking:

OSI stands for Open Systems Interconnection . It was developed by ISO – ' International Organization for Standardization 'in the year 1984. It is a 7-layer architecture with each layer having specific functionality to perform. All these seven layers work collaboratively to transmit the data from one person to another across the globe.

The lowest layer of the OSI reference model is the physical layer. It is responsible for the actual physical connection between the devices. The physical layer contains information in the form of bits. It is responsible for transmitting individual bits from one node to the next. When receiving data, this layer will get the signal received and convert it into 0s and 1s and send them to the Data Link layer, which will put the frame back together.

The functions of the physical layer are as follows:

The data link layer is responsible for the node-to-node delivery of the message. The main function of this layer is to make sure data transfer is error-free from one node to another over the physical layer. When a packet arrives in a network, it is the responsibility of the DLL to transmit it to the host using its MAC address.

The Data Link Layer is divided into two sublayers:

The packet received from the Network layer is further divided into frames depending on the frame size of the NIC(Network Interface Card). DLL also encapsulates the Sender and Receiver's MAC address in the header.

The Receiver's MAC address is obtained by placing an ARP(Address Resolution Protocol) request onto the wire asking, “Who has that IP address?” and the destination host will reply with its MAC address.

The functions of the Data Link layer are :

The network layer works for the transmission of data from one host to the other located in different networks. It also takes care of packet routing, ie, the selection of the shortest path to transmit the packet from the number of routes available. The sender & receiver's IP addresses are placed in the header by the network layer.

The functions of the Network layer are :

The Internet is a global system of interconnected computer networks that use the standard Internet protocol suite (TCP/IP) to serve billions of users worldwide. It is a network of networks that consists of millions of private, public, academic, business, and government networks of local to global scope that is linked by a broad array of electronic, wireless, and optical networking technologies. The Internet carries an extensive range of information resources and services, such as the interlinked hypertext documents and applications of the World Wide Web (WWW) and the infrastructure to support email.

The World Wide Web (WWW) is an information space where documents and other web resources are identified by Uniform Resource Locators (URLs), interlinked by hypertext links, and accessible via the Internet. English scientist Tim Berners-Lee invented the World Wide Web in 1989. He wrote the first web browser in 1990 while employed at CERN in Switzerland. The browser was released outside CERN in 1991, first to other research institutions starting in January 1991 and to the general public on the Internet in August 1991.

The Internet Protocol (IP) is a protocol, or set of rules, for routing and addressing packets of data so that they can travel across networks and arrive at the correct destination. Data traversing the Internet is divided into smaller pieces called packets.

A database is a collection of related data that represents some aspect of the real world. A database system is designed to be built and populated with data for a certain task.

Database Management System (DBMS) is software for storing and retrieving users' data while considering appropriate security measures. It consists of a group of programs that manipulate the database. The DBMS accepts the request for data from an application and instructs the operating system to provide the specific data. In large systems, a DBMS helps users and other third-party software store and retrieve data.

DBMS allows users to create their databases as per their requirements. The term "DBMS" includes the use of a database and other application programs. It provides an interface between the data and the software application.

Let us see a simple example of a university database. This database maintains information concerning students, courses, and grades in a university environment. The database is organized into five files:

To define DBMS:

Here are the important landmarks from history:

Here are the characteristics and properties of a Database Management System:

Here is the list of some popular DBMS systems:

Cryptography is a technique to secure data and communication. It is a method of protecting information and communications through the use of codes so that only those for whom the information is intended can read and process it. Cryptography is used to protect data in transit, at rest, and in use. The prefix crypt means "hidden" or "secret", and the suffix graphy means "writing".

There are two types of cryptography:

Cryptocurrency is a digital currency in which encryption techniques are used to regulate the generation of units of currency and verify the transfer of funds, operating independently of a central bank. Cryptocurrencies use decentralized control as opposed to centralized digital currency and central banking systems. The decentralized control of each cryptocurrency works through distributed ledger technology, typically a blockchain, that serves as a public financial transaction database. A defining feature of a cryptocurrency, and arguably its most endearing allure, is its organic nature; it is not issued by any central authority, rendering it theoretically immune to government interference or manipulation.

In theoretical computer science and mathematics, the theory of computation is the branch that deals with what problems can be solved on a model of computation using an algorithm, how efficiently they can be solved, or to what degree (eg, approximate solutions versus precise ones). The field is divided into three major branches: automata theory and formal languages, computability theory, and computational complexity theory, which are linked by the question: "What are the fundamental capabilities and limitations of computers?".

Automata theory is the study of abstract machines and automata, as well as the computational problems that can be solved using them. It is a theory in theoretical computer science. The word automata comes from the Greek word αὐτόματος, which means "self-acting, self-willed, self-moving". An automaton (automata in plural) is an abstract self-propelled computing device that follows a predetermined sequence of operations automatically. An automaton with a finite number of states is called a Finite Automaton (FA) or Finite-State Machine (FSM). The figure on the right illustrates a finite-state machine, which is a well-known type of automaton. This automaton consists of states (represented in the figure by circles) and transitions (represented by arrows). As the automaton sees a symbol of input, it makes a transition (or jump) to another state, according to its transition function, which takes the previous state and current input symbol as its arguments.

In logic, mathematics, computer science, and linguistics, a formal language consists of words whose letters are taken from an alphabet and are well-formed according to a specific set of rules.

The alphabet of a formal language consists of symbols, letters, or tokens that concatenate into strings of the language. Each string concatenated from symbols of this alphabet is called a word, and the words that belong to a particular formal language are sometimes called well-formed words or well-formed formulas. A formal language is often defined using formal grammar, such as regular grammar or context-free grammar, which consists of its formation rules.

In computer science, formal languages are used, among others, as the basis for defining the grammar of programming languages and formalized versions of subsets of natural languages in which the words of the language represent concepts that are associated with particular meanings or semantics. In computational complexity theory, decision problems are typically defined as formal languages and complexity classes are defined as the sets of formal languages that can be parsed by machines with limited computational power. In logic and the foundations of mathematics, formal languages are used to represent the syntax of axiomatic systems, and mathematical formalism is the philosophy that all mathematics can be reduced to the syntactic manipulation of formal languages in this way.

Computability theory, also known as recursion theory, is a branch of mathematical logic and computer science that began in the 1930s with the study of computable functions and Turing degrees. Since its inception, the field has expanded to encompass the study of generalized computability and definability. In these areas, computability theory intersects with proof theory and effective descriptive set theory, reflecting its broad and interdisciplinary nature.

In theoretical computer science and mathematics, computational complexity theory focuses on classifying computational problems according to their resource usage and relating these classes to each other. A computational problem is a task solved by a computer. A computation problem is solvable by a mechanical application of mathematical steps, such as an algorithm.

A problem is regarded as inherently difficult if its solution requires significant resources, whatever the algorithm used. The theory formalizes this intuition by introducing mathematical models of computation to study these problems and quantifying their computational complexity, ie, the number of resources needed to solve them, such as time and storage. Other measures of complexity are also used, such as the amount of communication (used in communication complexity), the number of gates in a circuit (used in circuit complexity), and the number of processors (used in parallel computing). One of the roles of computational complexity theory is to determine the practical limits on what computers can and cannot do. The P versus NP problem, one of the seven Millennium Prize Problems, is dedicated to the field of computational complexity.