Machine learning metrics for distributed, scalable PyTorch applications.


Simple installation from PyPI

```bash
pip install torchmetrics
```

Install using conda

```bash
conda install -c conda-forge torchmetrics
```

Pip from source

```bash
# with git
pip install git+https://github.com/Lightning-AI/torchmetrics.git@release/stable
```

Pip from archive

```bash
pip install https://github.com/Lightning-AI/torchmetrics/archive/refs/heads/release/stable.zip
```

Extra dependencies for specialized metrics:

```bash
pip install torchmetrics[audio]
pip install torchmetrics[image]
pip install torchmetrics[text]
pip install torchmetrics[all]  # install all of the above
```

Install the latest developer version

```bash
pip install https://github.com/Lightning-AI/torchmetrics/archive/master.zip
```

TorchMetrics is a collection of 100+ PyTorch metrics implementations and an easy-to-use API to create custom metrics. It offers:

You can use TorchMetrics with any PyTorch model or with PyTorch Lightning to enjoy additional features such as:

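Module-based metrics contain internal metric states (similar to the parameters of a PyTorch module) that automate accumulation and synchronization across devices!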

This can be run on CPU, a single GPU, or multiple GPUs!

For the single GPU/CPU case:

```python
import torch

# import our library
import torchmetrics

# initialize metric
metric = torchmetrics.classification.Accuracy(task="multiclass", num_classes=5)

# move the metric to the device you want computations to take place on
device = "cuda" if torch.cuda.is_available() else "cpu"
metric.to(device)

n_batches = 10
for i in range(n_batches):
    # simulate a classification problem
    preds = torch.randn(10, 5).softmax(dim=-1).to(device)
    target = torch.randint(5, (10,)).to(device)

    # metric on current batch
    acc = metric(preds, target)
    print(f"Accuracy on batch {i}: {acc}")

# metric on all batches using custom accumulation
acc = metric.compute()
print(f"Accuracy on all data: {acc}")
```

Module metric usage remains the same when using multiple GPUs or multiple nodes.

```python
import os

import torch
import torch.distributed as dist
import torch.multiprocessing as mp
from torch import nn
from torch.nn.parallel import DistributedDataParallel as DDP

import torchmetrics


def metric_ddp(rank, world_size):
    os.environ["MASTER_ADDR"] = "localhost"
    os.environ["MASTER_PORT"] = "12355"

    # create default process group
    dist.init_process_group("gloo", rank=rank, world_size=world_size)

    # initialize metric
    metric = torchmetrics.classification.Accuracy(task="multiclass", num_classes=5)

    # define a model and append your metric to it
    # this allows metric states to be placed on correct accelerators when
    # .to(device) is called on the model
    model = nn.Linear(10, 10)
    model.metric = metric
    model = model.to(rank)

    # initialize DDP
    model = DDP(model, device_ids=[rank])

    n_epochs = 5
    # this shows iteration over multiple training epochs
    for n in range(n_epochs):
        # this will be replaced by a DataLoader with a DistributedSampler
        n_batches = 10
        for i in range(n_batches):
            # simulate a classification problem; inputs must live on the
            # same device as the metric states
            preds = torch.randn(10, 5).softmax(dim=-1).to(rank)
            target = torch.randint(5, (10,)).to(rank)

            # metric on current batch
            acc = metric(preds, target)
            if rank == 0:  # print only for rank 0
                print(f"Accuracy on batch {i}: {acc}")

        # metric on all batches and all accelerators using custom accumulation
        # accuracy is the same across both accelerators
        acc = metric.compute()
        print(f"Accuracy on all data: {acc}, accelerator rank: {rank}")

        # reset internal state so the metric is ready for new data
        metric.reset()

    # cleanup
    dist.destroy_process_group()


if __name__ == "__main__":
    world_size = 2  # number of gpus to parallelize over
    mp.spawn(metric_ddp, args=(world_size,), nprocs=world_size, join=True)
```

Implementing your own metric is as easy as subclassing a `torch.nn.Module`. Simply subclass `torchmetrics.Metric` and implement the `update` and `compute` methods:

```python
import torch
from torchmetrics import Metric


class MyAccuracy(Metric):
    def __init__(self):
        # remember to call super
        super().__init__()
        # call `self.add_state` for every internal state that is needed for the metric's computations
        # dist_reduce_fx indicates the function that should be used to reduce
        # state from multiple processes
        self.add_state("correct", default=torch.tensor(0), dist_reduce_fx="sum")
        self.add_state("total", default=torch.tensor(0), dist_reduce_fx="sum")

    def update(self, preds: torch.Tensor, target: torch.Tensor) -> None:
        # extract predicted class index for computing accuracy
        preds = preds.argmax(dim=-1)
        assert preds.shape == target.shape
        # update metric states
        self.correct += torch.sum(preds == target)
        self.total += target.numel()

    def compute(self) -> torch.Tensor:
        # compute final result
        return self.correct.float() / self.total


my_metric = MyAccuracy()
preds = torch.randn(10, 5).softmax(dim=-1)
target = torch.randint(5, (10,))
print(my_metric(preds, target))
```

Similar to `torch.nn`, most metrics have both a module-based and a functional version. The functional versions are simple Python functions that take `torch.Tensor`s as input and return the corresponding metric as a `torch.Tensor`.

```python
import torch

# import our library
import torchmetrics

# simulate a classification problem
preds = torch.randn(10, 5).softmax(dim=-1)
target = torch.randint(5, (10,))

acc = torchmetrics.functional.classification.multiclass_accuracy(
    preds, target, num_classes=5
)
```

In total, TorchMetrics contains 100+ metrics, covering the following domains:

Each domain may require some additional dependencies, which can be installed with `pip install torchmetrics[audio]`, `pip install torchmetrics[image]`, etc.

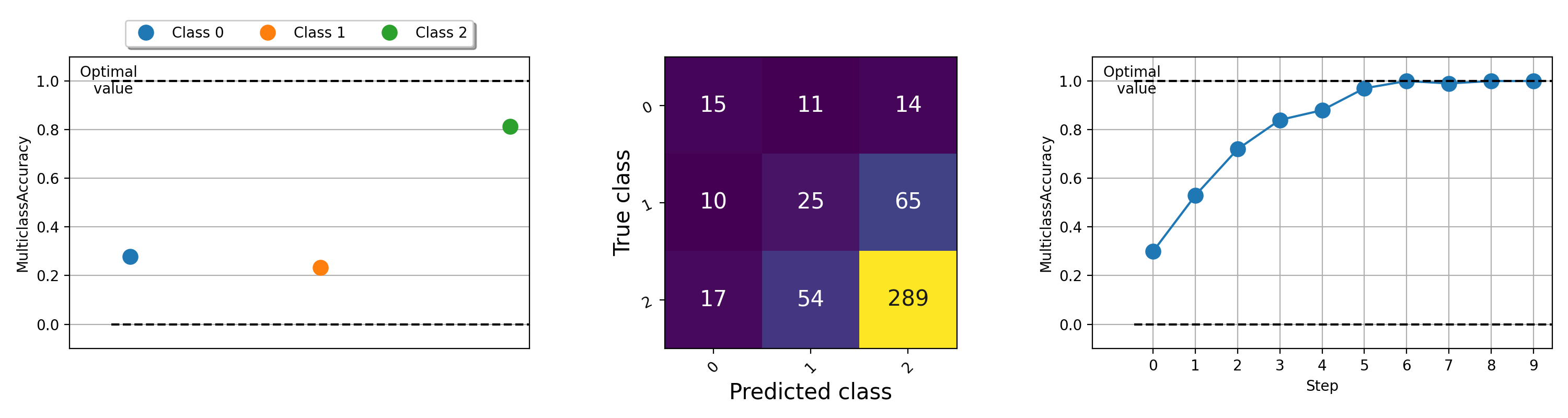

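Visualization of metrics can be important to help understand what is going on with your machine learning algorithms. TorchMetrics has built-in plotting support (install the dependencies with `pip install torchmetrics[visual]`) for nearly all modular metrics through the `.plot` method. Simply call the method to get a simple visualization of any metric!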

```python
import torch
from torchmetrics.classification import MulticlassAccuracy, MulticlassConfusionMatrix

num_classes = 3

# this will generate two distributions that become more similar as iterations increase
w = torch.randn(num_classes)
target = lambda it: torch.multinomial((it * w).softmax(dim=-1), 100, replacement=True)
preds = lambda it: torch.multinomial((it * w).softmax(dim=-1), 100, replacement=True)

acc = MulticlassAccuracy(num_classes=num_classes, average="micro")
acc_per_class = MulticlassAccuracy(num_classes=num_classes, average=None)
confmat = MulticlassConfusionMatrix(num_classes=num_classes)

# plot single value
for i in range(5):
    acc_per_class.update(preds(i), target(i))
    confmat.update(preds(i), target(i))
fig1, ax1 = acc_per_class.plot()
fig2, ax2 = confmat.plot()

# plot multiple values
values = []
for i in range(10):
    values.append(acc(preds(i), target(i)))
fig3, ax3 = acc.plot(values)
```

For examples of plotting different metrics, try running this example file.

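The Lightning + TorchMetrics team is hard at work adding even more metrics. But we're looking for incredible contributors like you to submit new metrics and improve existing ones!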

Join our Discord to get help with becoming a contributor!

For help or questions, join our huge community on Discord!

We're excited to continue the strong legacy of open source software and have been inspired over the years by Caffe, Theano, Keras, PyTorch, torchbearer, ignite, sklearn and fast.ai.

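If you want to cite this framework, feel free to use GitHub's built-in citation option to generate a BibTeX or APA-style citation based on this file (but only if you loved it).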

Please observe the Apache 2.0 license that is listed in this repository. In addition, the Lightning framework is Patent Pending.