This repository is

(1) a PyTorch library that provides classical knowledge distillation algorithms on mainstream CV benchmarks,

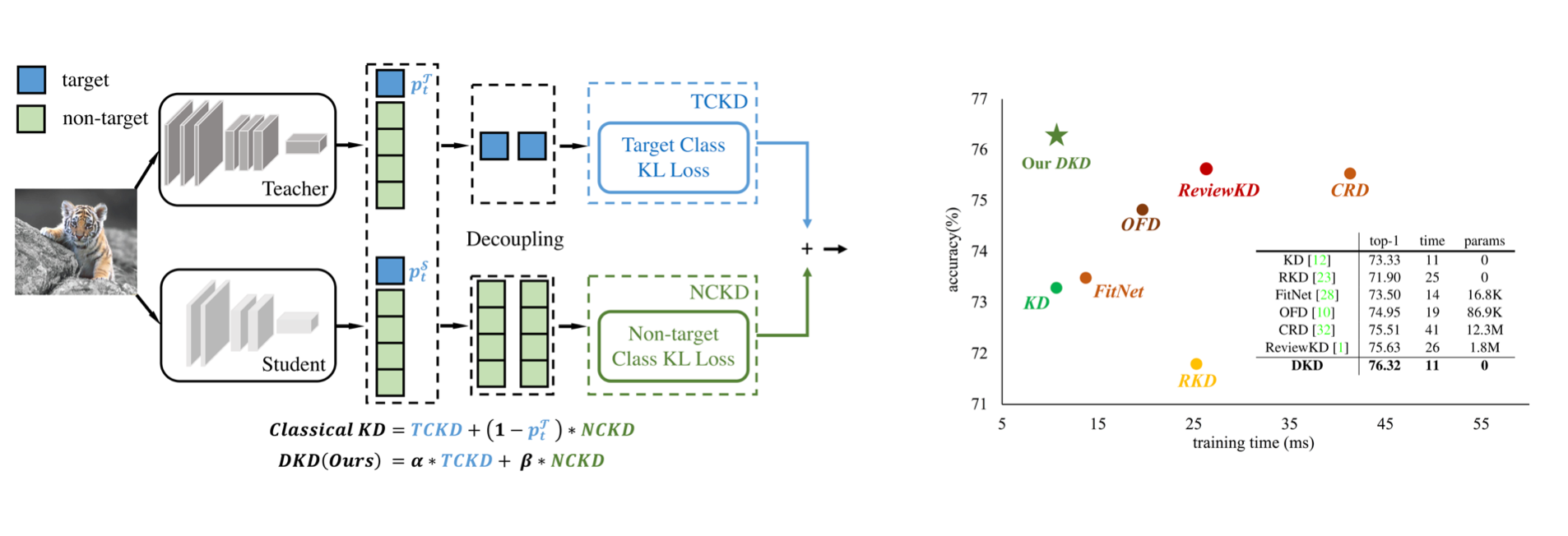

(2) the official implementation of the CVPR-2022 paper: Decoupled Knowledge Distillation.

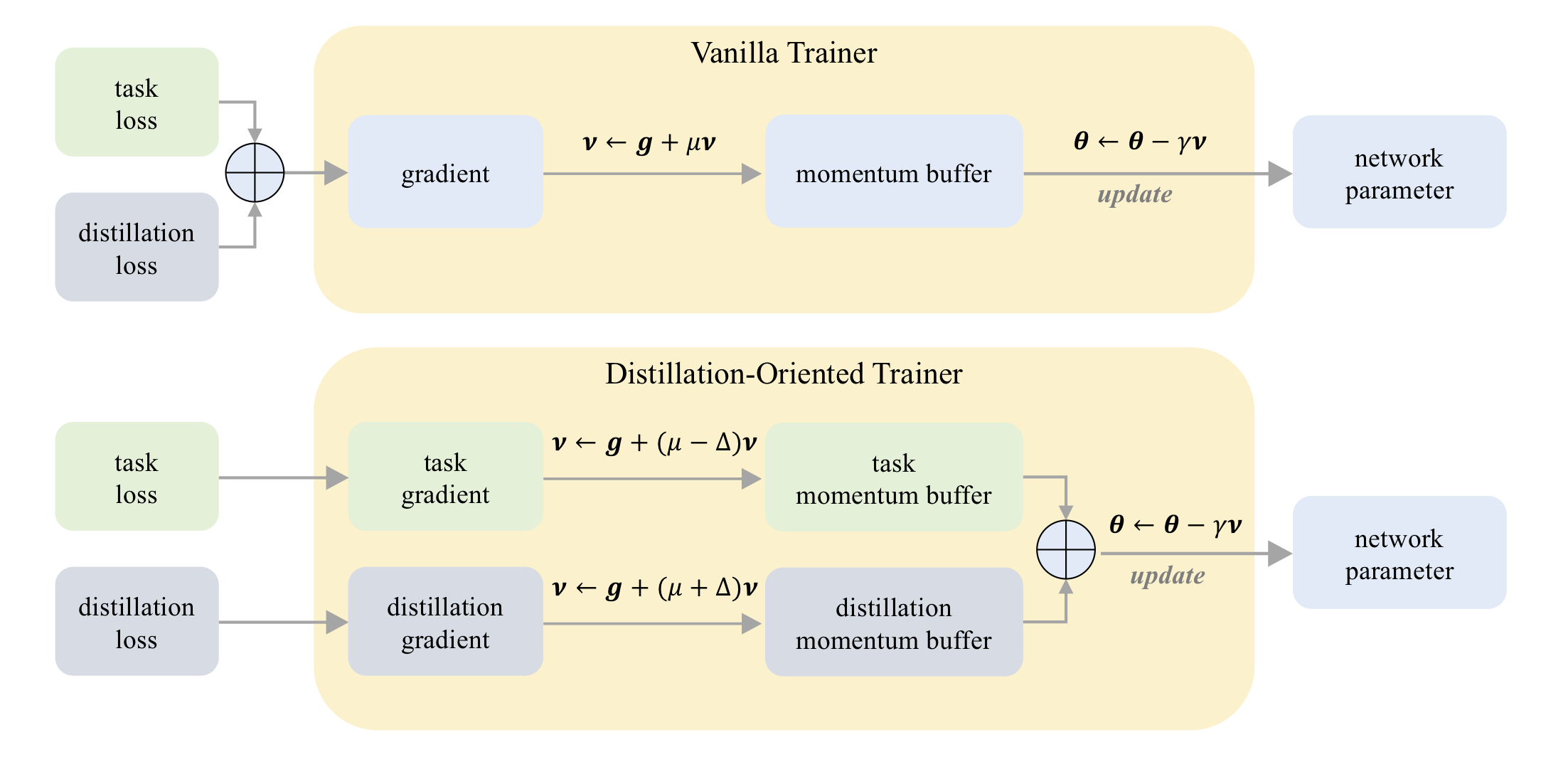

(3) the official implementation of the ICCV-2023 paper: DOT: A Distillation-Oriented Trainer.

DOT results on CIFAR-100:

| Teacher / Student | ResNet32x4 / ResNet8x4 | VGG13 / VGG8 | ResNet32x4 / ShuffleNet-V2 |
|---|---|---|---|
| KD | 73.33 | 72.98 | 74.45 |
| KD+DOT | 75.12 | 73.77 | 75.55 |

DOT results on Tiny-ImageNet:

| Teacher / Student | ResNet18 / MobileNet-V2 | ResNet18 / ShuffleNet-V2 |
|---|---|---|
| KD | 58.35 | 62.26 |
| KD+DOT | 64.01 | 65.75 |

DOT results on ImageNet:

| Teacher / Student | ResNet34 / ResNet18 | ResNet50 / MobileNet-V1 |
|---|---|---|
| KD | 71.03 | 70.50 |
| KD+DOT | 71.72 | 73.09 |

DKD results on CIFAR-100:

| Teacher / Student | ResNet56 / ResNet20 | ResNet110 / ResNet32 | ResNet32x4 / ResNet8x4 | WRN-40-2 / WRN-16-2 | WRN-40-2 / WRN-40-1 | VGG13 / VGG8 |
|---|---|---|---|---|---|---|
| KD | 70.66 | 73.08 | 73.33 | 74.92 | 73.54 | 72.98 |
| DKD | 71.97 | 74.11 | 76.32 | 76.23 | 74.81 | 74.68 |

| Teacher / Student | ResNet32x4 / ShuffleNet-V1 | WRN-40-2 / ShuffleNet-V1 | VGG13 / MobileNet-V2 | ResNet50 / MobileNet-V2 | ResNet32x4 / MobileNet-V2 |
|---|---|---|---|---|---|
| KD | 74.07 | 74.83 | 67.37 | 67.35 | 74.45 |
| DKD | 76.45 | 76.70 | 69.71 | 70.35 | 77.07 |

DKD results on ImageNet:

| Teacher / Student | ResNet34 / ResNet18 | ResNet50 / MobileNet-V1 |
|---|---|---|
| KD | 71.03 | 70.50 |
| DKD | 71.70 | 72.05 |

MDistiller supports the following distillation methods on CIFAR-100, ImageNet and MS-COCO:

| Method | Paper Link | CIFAR-100 | ImageNet | MS-COCO |
|---|---|---|---|---|
| KD | https://arxiv.org/abs/1503.02531 | ✓ | ✓ | |
| FitNet | https://arxiv.org/abs/1412.6550 | ✓ | | |
| AT | https://arxiv.org/abs/1612.03928 | ✓ | ✓ | |
| NST | https://arxiv.org/abs/1707.01219 | ✓ | | |
| PKT | https://arxiv.org/abs/1803.10837 | ✓ | | |
| KDSVD | https://arxiv.org/abs/1807.06819 | ✓ | | |
| OFD | https://arxiv.org/abs/1904.01866 | ✓ | ✓ | |
| RKD | https://arxiv.org/abs/1904.05068 | ✓ | | |
| VID | https://arxiv.org/abs/1904.05835 | ✓ | | |
| SP | https://arxiv.org/abs/1907.09682 | ✓ | | |
| CRD | https://arxiv.org/abs/1910.10699 | ✓ | ✓ | |
| ReviewKD | https://arxiv.org/abs/2104.09044 | ✓ | ✓ | ✓ |
| DKD | https://arxiv.org/abs/2203.08679 | ✓ | ✓ | ✓ |

Environments:

Install the package:

    sudo pip3 install -r requirements.txt
    sudo python3 setup.py develop

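To disable Wandb logging, set `CFG.LOG.WANDB` to `False` in `mdistiller/engine/cfg.py`.

You can evaluate the performance of our models or of models you trained yourself.

Our models are at https://github.com/megvii-research/mdistiller/releases/tag/checkpoints; please download the checkpoints to `./download_ckpts`.

To test models on ImageNet, download the dataset at https://image-net.org/ and put it in `./data/imagenet`.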

    # evaluate teachers
    python3 tools/eval.py -m resnet32x4 # resnet32x4 on cifar100
    python3 tools/eval.py -m ResNet34 -d imagenet # ResNet34 on imagenet

    # evaluate students
    python3 tools/eval.py -m resnet8x4 -c download_ckpts/dkd_resnet8x4 # dkd-resnet8x4 on cifar100
    python3 tools/eval.py -m MobileNetV1 -c download_ckpts/imgnet_dkd_mv1 -d imagenet # dkd-mv1 on imagenet
    python3 tools/eval.py -m model_name -c output/your_exp/student_best # your checkpoints

To train on CIFAR-100, download `cifar_teachers.tar` at https://github.com/megvii-research/mdistiller/releases/tag/checkpoints and untar it into `./download_ckpts` via `tar xvf cifar_teachers.tar`.

    # for instance, our DKD method.
    python3 tools/train.py --cfg configs/cifar100/dkd/res32x4_res8x4.yaml

    # you can also change settings at command line
    python3 tools/train.py --cfg configs/cifar100/dkd/res32x4_res8x4.yaml SOLVER.BATCH_SIZE 128 SOLVER.LR 0.1

To train on ImageNet, download the dataset at https://image-net.org/ and put it in `./data/imagenet`.

    # for instance, our DKD method.
    python3 tools/train.py --cfg configs/imagenet/r34_r18/dkd.yaml

To add a custom distillation method, create a Python file in `mdistiller/distillers/` and define the distiller:

    from ._base import Distiller


    class MyDistiller(Distiller):
        def __init__(self, student, teacher, cfg):
            super(MyDistiller, self).__init__(student, teacher)
            self.hyper1 = cfg.MyDistiller.hyper1
            ...

        def forward_train(self, image, target, **kwargs):
            # return the output logits and a Dict of losses
            ...

        # rewrite the get_learnable_parameters function if there are more nn modules for distillation.
        # rewrite the get_extra_parameters if you want to obtain the extra cost.
        ...

Register the distiller in `distiller_dict` in `mdistiller/distillers/__init__.py`.

Register the corresponding hyper-parameters in `mdistiller/engine/cfg.py`.

Create a new config file and test it.
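The steps above can be sketched end to end without the library installed. The snippet below is a minimal, framework-free illustration and not mdistiller's actual code. `Distiller` is a stand-in for the base class in `mdistiller/distillers/_base.py` (the real one is assumed to wrap PyTorch modules), `SimpleNamespace` stands in for the config object, and the registry key `"MY_DISTILLER"`, the toy `student`/`teacher` functions, and the use of `hyper1` as a softmax temperature are all hypothetical choices made for the example. The loss is a standard temperature-softened KD objective, used here only to give `forward_train` something concrete to return.

```python
import math
from types import SimpleNamespace


class Distiller:
    """Stand-in for the library's base class (assumed interface, not the real one)."""

    def __init__(self, student, teacher):
        self.student = student
        self.teacher = teacher


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a plain list of floats."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


class MyDistiller(Distiller):
    def __init__(self, student, teacher, cfg):
        super().__init__(student, teacher)
        # Hyper-parameter read from the config, as in the template above.
        # Assumption for this sketch: hyper1 acts as the softmax temperature.
        self.hyper1 = cfg.MyDistiller.hyper1

    def forward_train(self, image, target, **kwargs):
        student_logits = self.student(image)
        teacher_logits = self.teacher(image)
        # Cross-entropy of the student against the ground-truth class index.
        loss_ce = -math.log(softmax(student_logits)[target])
        # Temperature-softened KL(teacher || student), scaled by T^2.
        t = self.hyper1
        p_teacher = softmax(teacher_logits, t)
        p_student = softmax(student_logits, t)
        loss_kd = t * t * sum(
            pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student)
        )
        # Return the output logits and a dict of losses.
        return student_logits, {"loss_ce": loss_ce, "loss_kd": loss_kd}


# Registration step: map a name to the class (the key is a made-up example).
distiller_dict = {"MY_DISTILLER": MyDistiller}

# Hyper-parameter step: SimpleNamespace stands in for the real config object.
cfg = SimpleNamespace(MyDistiller=SimpleNamespace(hyper1=4.0))


def student(image):
    return [2.0, 1.0, 0.1]  # toy "network": fixed logits for three classes


def teacher(image):
    return [3.0, 0.5, 0.2]  # toy "network": fixed logits for three classes


distiller = distiller_dict["MY_DISTILLER"](student, teacher, cfg)
logits, losses = distiller.forward_train(image=None, target=0)
print(logits, losses)
```

In a real distiller the student and teacher would be network modules and the losses would be tensors; the point of the sketch is only the shape of the contract: read hyper-parameters from `cfg`, return the student logits together with a dictionary of named losses, and make the class reachable through `distiller_dict`.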

If this repo is helpful for your research, please consider citing the papers:

    @article{zhao2022dkd,
      title={Decoupled Knowledge Distillation},
      author={Zhao, Borui and Cui, Quan and Song, Renjie and Qiu, Yiyu and Liang, Jiajun},
      journal={arXiv preprint arXiv:2203.08679},
      year={2022}
    }

    @article{zhao2023dot,
      title={DOT: A Distillation-Oriented Trainer},
      author={Zhao, Borui and Cui, Quan and Song, Renjie and Liang, Jiajun},
      journal={arXiv preprint arXiv:2307.08436},
      year={2023}
    }

MDistiller is released under the MIT license. See LICENSE for details.

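Thanks to CRD and ReviewKD. We built this library on top of CRD's codebase and ReviewKD's codebase.

Thanks to Yiyu Qiu and Yi Shi for their code contributions during their internship at MEGVII Technology.

Thanks to Xin Jin for the discussion about DKD.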