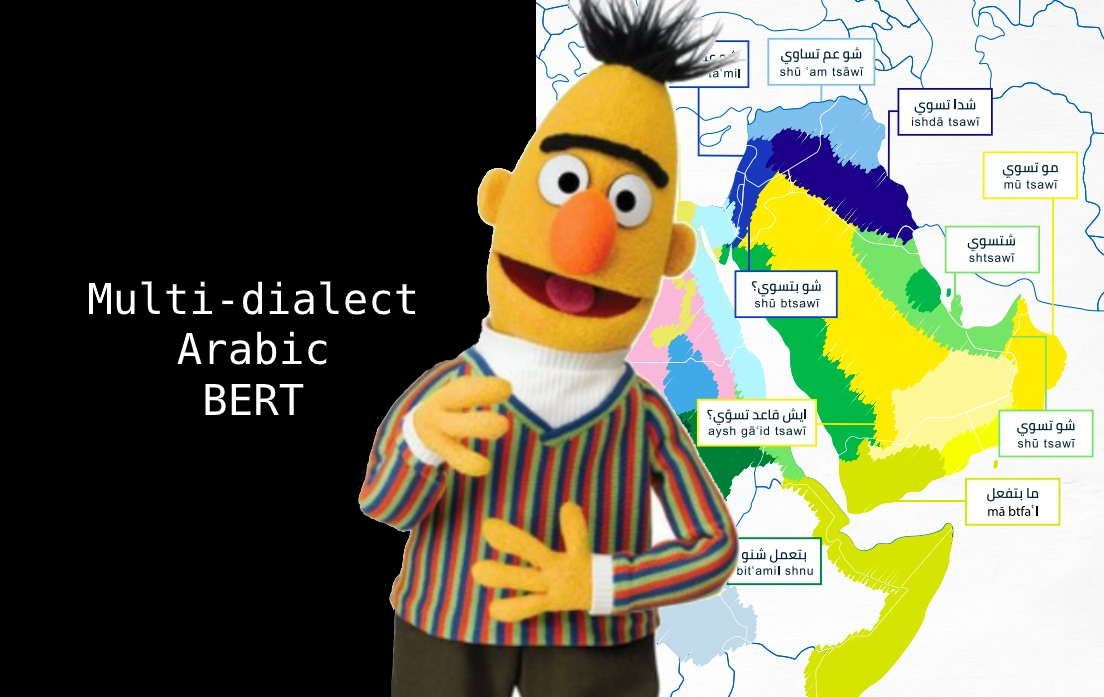

Multi-dialect Arabic BERT

1.0.0

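This is the repository for the Multi-dialect Arabic BERT model.

By Mawdoo3-AI.

Instead of training the Multi-dialect Arabic BERT model from scratch, we initialized the model's weights from Arabic-BERT and then trained it on 10M Arabic tweets taken from the unlabeled data of the Nuanced Arabic Dialect Identification (NADI) shared task.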

@misc{talafha2020multidialect,
    title={Multi-Dialect Arabic BERT for Country-Level Dialect Identification},
    author={Bashar Talafha and Mohammad Ali and Muhy Eddin Za'ter and Haitham Seelawi and Ibraheem Tuffaha and Mostafa Samir and Wael Farhan and Hussein T. Al-Natsheh},
    year={2020},
    eprint={2007.05612},
    archivePrefix={arXiv},
    primaryClass={cs.CL}
}

The model weights can be loaded using the `transformers` library by Hugging Face.

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("bashar-talafha/multi-dialect-bert-base-arabic")
model = AutoModel.from_pretrained("bashar-talafha/multi-dialect-bert-base-arabic")

Example using `pipeline`:

from transformers import pipeline

fill_mask = pipeline(
    "fill-mask",
    model="bashar-talafha/multi-dialect-bert-base-arabic",
    tokenizer="bashar-talafha/multi-dialect-bert-base-arabic"
)

fill_mask(" سافر الرحالة من مطار [MASK] ")

Output:

[{'sequence': '[CLS] سافر الرحالة من مطار الكويت [SEP]', 'score': 0.08296813815832138, 'token': 3226},
{'sequence': '[CLS] سافر الرحالة من مطار دبي [SEP]', 'score': 0.05123933032155037, 'token': 4747},
{'sequence': '[CLS] سافر الرحالة من مطار مسقط [SEP]', 'score': 0.046838656067848206, 'token': 13205},
{'sequence': '[CLS] سافر الرحالة من مطار القاهرة [SEP]', 'score': 0.03234650194644928, 'token': 4003},
{'sequence': '[CLS] سافر الرحالة من مطار الرياض [SEP]', 'score': 0.02606341242790222, 'token': 2200}]
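
The result list above can be post-processed with plain Python. The following is a minimal sketch that assumes the output format shown here (dictionaries with `sequence`, `score`, and `token` keys, with `[CLS]` and `[SEP]` markers in the sequence); other `transformers` versions may return a different shape. The helper name `strip_special_tokens` is hypothetical, and the sample data is copied from the first three results above.

```python
# Sample results copied from the fill-mask output shown above (top three entries).
results = [
    {"sequence": "[CLS] سافر الرحالة من مطار الكويت [SEP]", "score": 0.08296813815832138, "token": 3226},
    {"sequence": "[CLS] سافر الرحالة من مطار دبي [SEP]", "score": 0.05123933032155037, "token": 4747},
    {"sequence": "[CLS] سافر الرحالة من مطار مسقط [SEP]", "score": 0.046838656067848206, "token": 13205},
]


def strip_special_tokens(sequence: str) -> str:
    """Remove the [CLS] and [SEP] markers and surrounding whitespace (hypothetical helper)."""
    return sequence.replace("[CLS]", "").replace("[SEP]", "").strip()


# Sort by score, highest first, in case the list is not already ordered.
ranked = sorted(results, key=lambda r: r["score"], reverse=True)

# The best completion as a clean sentence.
best_sentence = strip_special_tokens(ranked[0]["sequence"])

# Clean sentences paired with their scores, for display or filtering.
cleaned = [(strip_special_tokens(r["sequence"]), r["score"]) for r in ranked]

print(best_sentence)
```

The scores are probabilities over the vocabulary for the masked position, so low absolute values such as 0.08 are expected when many completions are plausible.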

| Parameter | Value |
|---|---|
| architectures | BertForMaskedLM |
| hidden_size | 768 |
| max_position_embeddings | 512 |
| num_attention_heads | 12 |
| num_hidden_layers | 12 |
| vocab_size | 32000 |
| Total number of parameters | 110M |
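
As a rough sanity check on the table, the total parameter count can be estimated from the listed dimensions. The sketch below assumes the standard BERT-base layout; `intermediate_size=3072` and `type_vocab_size=2` are assumptions that do not appear in the table, and the function name `estimate_bert_params` is hypothetical. It counts the embeddings and encoder layers only, leaving out the pooler and the masked-LM head.

```python
def estimate_bert_params(
    vocab_size=32000,             # from the table
    hidden_size=768,              # from the table
    max_position_embeddings=512,  # from the table
    num_hidden_layers=12,         # from the table
    intermediate_size=3072,       # assumption: standard BERT-base value
    type_vocab_size=2,            # assumption: standard BERT-base value
):
    """Estimate the embedding + encoder parameter count of a BERT model.

    num_attention_heads is not needed: the heads split hidden_size between
    them, so the number of heads does not change the parameter count.
    """
    h, i = hidden_size, intermediate_size
    layer_norm = 2 * h  # scale and bias vectors

    # Word, position, and token-type embeddings, followed by a LayerNorm.
    embeddings = (vocab_size + max_position_embeddings + type_vocab_size) * h + layer_norm

    # Query, key, value, and output projections, each with weights and bias.
    attention = 4 * (h * h + h)

    # Two dense layers: hidden -> intermediate and intermediate -> hidden.
    feed_forward = (h * i + i) + (i * h + h)

    # Each encoder layer has one LayerNorm after attention and one after the feed-forward block.
    per_layer = attention + layer_norm + feed_forward + layer_norm

    return embeddings + num_hidden_layers * per_layer


total = estimate_bert_params()
print(f"{total / 1e6:.1f}M parameters")
```

Under these assumptions the estimate comes to roughly 110M, in line with the total given in the table.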