Semantic Segmentation

v0.2.6

Easy-to-use and customizable SOTA semantic segmentation models with abundant datasets in PyTorch

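A lot has changed since 2022: there are now even open-world segmentation models (Segment Anything). However, traditional segmentation models are still in demand for high accuracy and custom use cases. This repo will be updated for new PyTorch versions, with updated models and documentation on how to use it with custom datasets.

Expected release date -> May 2024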

Planned features:

Features to be dropped:

Supported backbones:

Supported heads/methods:

Supported standalone models:

Supported modules:

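Refer to Models for benchmarks and available pretrained models.

And check Backbones for the supported backbones.

Note: Most methods do not have pretrained models. Combining different models with pretrained weights in a single repository is very difficult, and resources to retrain them myself are limited.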

Scene parsing:

Human parsing:

Face parsing:

Others:

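For more details and dataset preparation, refer to Datasets.

Check the notebook here to test the augmentation effects.

Pixel-level transforms:

Spatial-level transforms: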
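The split between pixel-level and spatial-level transforms matters for segmentation because a spatial transform (flip, crop, rotate) must move the label mask together with the image, whereas a pixel-level transform (brightness, contrast, blur) only changes image intensities and leaves the mask alone. The sketch below illustrates that rule in plain Python on nested lists; the function names, the flip probability and the brightness range are hypothetical and are not this repo's augmentation API.

```python
import random
from typing import List, Tuple

Image = List[List[float]]  # H x W grayscale intensities in [0, 1]
Mask = List[List[int]]     # H x W class indices

def random_hflip(img: Image, mask: Mask, p: float,
                 rng: random.Random) -> Tuple[Image, Mask]:
    """Spatial-level (hypothetical helper): flip image AND mask together."""
    if rng.random() < p:
        return [row[::-1] for row in img], [row[::-1] for row in mask]
    return img, mask

def random_brightness(img: Image, mask: Mask, max_delta: float,
                      rng: random.Random) -> Tuple[Image, Mask]:
    """Pixel-level (hypothetical helper): shift image intensities only."""
    delta = rng.uniform(-max_delta, max_delta)
    bright = [[min(1.0, max(0.0, v + delta)) for v in row] for row in img]
    return bright, mask  # the mask is returned untouched

def augment(img: Image, mask: Mask, rng: random.Random) -> Tuple[Image, Mask]:
    # Assumed pipeline order: spatial first, then pixel-level.
    img, mask = random_hflip(img, mask, p=0.5, rng=rng)
    img, mask = random_brightness(img, mask, max_delta=0.2, rng=rng)
    return img, mask
```

Passing a seeded `random.Random` keeps the image and mask decisions reproducible, which helps when checking augmentations visually.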

Then, clone the repo and install the project with:

$ git clone https://github.com/sithu31296/semantic-segmentation

$ cd semantic-segmentation

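$ pip install -e .

Create a configuration file in configs. A sample configuration for the ADE20K dataset can be found here. Then edit the fields you need to change. This configuration file is required by all training, evaluation and prediction scripts.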
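Once the YAML file is parsed (for example with PyYAML's `yaml.safe_load`, which returns nested dicts), editing a field amounts to walking the nested sections. The sketch below assumes upper-case sections such as `MODEL`, `DATASET`, `TRAIN` and `TEST`, following the field names used in this README; the `set_field` helper, the dotted-key syntax, the placement of `DDP` under `TRAIN` and all values shown are hypothetical illustrations, not options the scripts accept.

```python
import copy
from typing import Any, Dict

def set_field(cfg: Dict[str, Any], dotted_key: str, value: Any) -> Dict[str, Any]:
    """Return a copy of cfg with e.g. 'TEST.MODEL_PATH' set to value.

    Raises KeyError if a section or field does not already exist,
    so a typo fails loudly instead of silently adding a new key.
    """
    new_cfg = copy.deepcopy(cfg)
    *sections, field = dotted_key.split(".")
    node = new_cfg
    for name in sections:
        node = node[name]  # KeyError on an unknown section
    if field not in node:
        raise KeyError(dotted_key)
    node[field] = value
    return new_cfg

# Hypothetical parsed config; the real layout is defined by the files in configs/.
cfg = {
    "SAVE_DIR": "output",
    "MODEL": {"NAME": "SegFormer", "BACKBONE": "MiT-B2"},
    "DATASET": {"NAME": "ADE20K"},
    "TRAIN": {"DDP": False},
    "TEST": {"MODEL_PATH": "", "FILE": ""},
}

# e.g. switch on distributed training before a multi-GPU run
ddp_cfg = set_field(cfg, "TRAIN.DDP", True)
```

Copying instead of mutating in place keeps the original config available, which is convenient when deriving several variants (training, evaluation, inference) from one file.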

Train with a single GPU:

$ python tools/train.py --cfg configs/CONFIG_FILE.yaml

To train with multiple GPUs, set the DDP field in the configuration file to true and run:

$ python -m torch.distributed.launch --nproc_per_node=2 --use_env tools/train.py --cfg configs/<CONFIG_FILE_NAME>.yaml

Make sure to set MODEL_PATH in the configuration file to your trained model directory.

$ python tools/val.py --cfg configs/<CONFIG_FILE_NAME>.yaml

To evaluate with multi-scale and flip, set the ENABLE field under MSF to true and run the same command as above.
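Multi-scale and flip (MSF) evaluation is a form of test-time augmentation: the image is passed through the model at several scales, optionally also horizontally flipped, and the per-pixel class probabilities are averaged before taking the argmax. The sketch below shows the idea with plain Python lists and a toy `model` callable. The scale list, the nearest-neighbour resizing (real pipelines usually interpolate bilinearly) and all function names are assumptions for illustration, not the code behind the MSF config fields.

```python
import math
from typing import Callable, List, Optional, Sequence

Grid = List[List[float]]  # H x W
Logits = List[Grid]       # C x H x W per-class scores

def resize_nearest(grid: Grid, out_h: int, out_w: int) -> Grid:
    """Nearest-neighbour resize of an H x W grid."""
    in_h, in_w = len(grid), len(grid[0])
    return [[grid[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
            for y in range(out_h)]

def hflip(grid: Grid) -> Grid:
    return [row[::-1] for row in grid]

def softmax_over_classes(logits: Logits) -> Logits:
    """Per-pixel softmax across the class dimension."""
    c, h, w = len(logits), len(logits[0]), len(logits[0][0])
    probs = [[[0.0] * w for _ in range(h)] for _ in range(c)]
    for y in range(h):
        for x in range(w):
            m = max(logits[k][y][x] for k in range(c))  # for numerical stability
            exps = [math.exp(logits[k][y][x] - m) for k in range(c)]
            total = sum(exps)
            for k in range(c):
                probs[k][y][x] = exps[k] / total
    return probs

def msf_predict(model: Callable[[Grid], Logits], image: Grid,
                scales: Sequence[float] = (0.75, 1.0, 1.25),
                flip: bool = True) -> List[List[int]]:
    """Average class probabilities over scaled (and flipped) views, then argmax."""
    if not scales:
        raise ValueError("need at least one scale")
    h, w = len(image), len(image[0])
    acc: Optional[Logits] = None
    for s in scales:
        sh, sw = max(1, round(h * s)), max(1, round(w * s))
        scaled = resize_nearest(image, sh, sw)
        views = [(scaled, False)] + ([(hflip(scaled), True)] if flip else [])
        for view, flipped in views:
            logits = model(view)
            if flipped:  # undo the flip so predictions align with the original
                logits = [hflip(ch) for ch in logits]
            probs = [resize_nearest(ch, h, w) for ch in softmax_over_classes(logits)]
            if acc is None:
                acc = probs
            else:
                acc = [[[a + p for a, p in zip(ra, rp)] for ra, rp in zip(ca, cp)]
                       for ca, cp in zip(acc, probs)]
    c = len(acc)
    return [[max(range(c), key=lambda k: acc[k][y][x]) for x in range(w)]
            for y in range(h)]
```

Averaging probabilities rather than hard labels lets confident views outweigh uncertain ones; the cost is one forward pass per scale (doubled with flip), which is why MSF is usually reserved for evaluation.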

To run inference, edit the following parameters in the configuration file:

MODEL >> NAME和BACKBONE更改为您所需的预处理模型。DATASET >> NAME更改为数据集名称。TEST >> MODEL_PATH设置为测试模型的预处理重量。TEST >> FILE更改为您要测试的文件或图像文件夹路径。SAVE_DIR中。 # # example using ade20k pretrained models

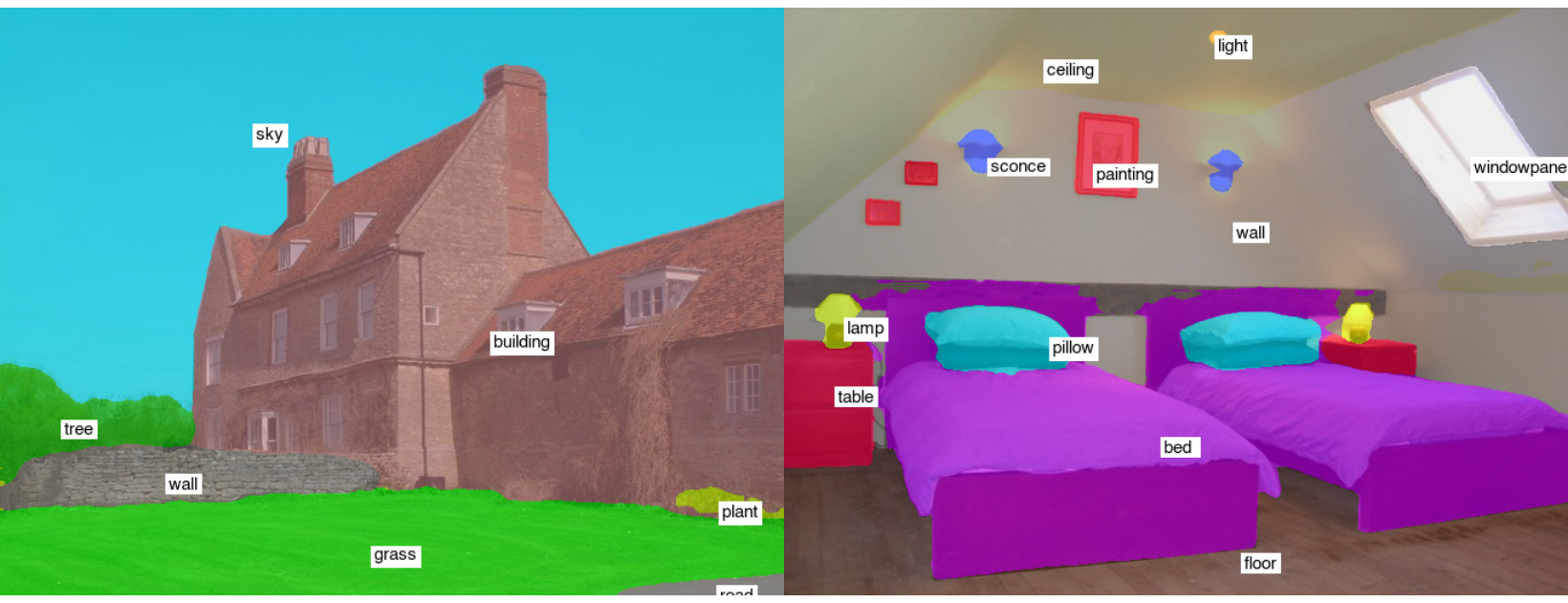

$ python tools/infer.py --cfg configs/ade20k.yaml示例测试结果(segformer-b2):
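A common way to visualize a predicted label map is to map each class index to a palette colour and alpha-blend it with the input image. This README does not state exactly what tools/infer.py writes to SAVE_DIR, so treat the following as a hypothetical sketch: the `overlay` function, the palette and the `alpha` value are illustrative assumptions.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]

def overlay(image: List[List[RGB]], labels: List[List[int]],
            palette: Sequence[RGB], alpha: float = 0.5) -> List[List[RGB]]:
    """Blend each pixel with its class colour: out = (1 - alpha) * pixel + alpha * colour."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    out = []
    for img_row, lab_row in zip(image, labels):
        out_row = []
        for (r, g, b), cls in zip(img_row, lab_row):
            pr, pg, pb = palette[cls]  # colour assigned to this class index
            out_row.append((round((1 - alpha) * r + alpha * pr),
                            round((1 - alpha) * g + alpha * pg),
                            round((1 - alpha) * b + alpha * pb)))
        out.append(out_row)
    return out
```

With `alpha=1.0` the result is the pure colour-coded label map; lower values keep more of the original image visible underneath.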

To convert to ONNX and CoreML, run:

$ python tools/export.py --cfg configs/<CONFIG_FILE_NAME>.yaml

To convert to OpenVINO and TFLite, see torch_optimize.

ONNX inference:

$ python scripts/onnx_infer.py --model <ONNX_MODEL_PATH> --img-path <TEST_IMAGE_PATH>
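An exported segmentation model typically expects a normalized float tensor in N x C x H x W layout and returns per-class scores of shape 1 x C x H x W. The sketch below shows that pre- and post-processing with plain Python lists; the ImageNet mean/std values, the channel order and the output shape are assumptions about the exported model, not a description of what scripts/onnx_infer.py does. With ONNX Runtime, the preprocessed array (converted to a float32 NumPy array) would be fed through `InferenceSession.run` under the name reported by `session.get_inputs()[0].name`.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]
Tensor4D = List[List[List[List[float]]]]  # N x C x H x W

# ImageNet statistics: an assumption about how the exported model was trained.
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)

def preprocess(image: List[List[RGB]], mean: Sequence[float] = MEAN,
               std: Sequence[float] = STD) -> Tensor4D:
    """H x W RGB uint8 -> 1 x 3 x H x W floats, scaled to [0, 1] then normalized."""
    chw = [[[(px[c] / 255.0 - mean[c]) / std[c] for px in row] for row in image]
           for c in range(3)]
    return [chw]  # add the batch dimension

def postprocess(logits: Tensor4D) -> List[List[int]]:
    """1 x C x H x W scores -> H x W class indices via per-pixel argmax."""
    scores = logits[0]
    c, h, w = len(scores), len(scores[0]), len(scores[0][0])
    return [[max(range(c), key=lambda k: scores[k][y][x]) for x in range(w)]
            for y in range(h)]
```

If the predictions look shifted or noisy, a mismatch in normalization or channel order (RGB vs BGR) between export and inference is a likely first suspect.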

OpenVINO inference:

$ python scripts/openvino_infer.py --model <OpenVINO_MODEL_PATH> --img-path <TEST_IMAGE_PATH>

TFLite inference:

$ python scripts/tflite_infer.py --model <TFLite_MODEL_PATH> --img-path <TEST_IMAGE_PATH>

Citations:

@article{xie2021segformer,

title={SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers},

author={Xie, Enze and Wang, Wenhai and Yu, Zhiding and Anandkumar, Anima and Alvarez, Jose M and Luo, Ping},

journal={arXiv preprint arXiv:2105.15203},

year={2021}

}

@misc{xiao2018unified,

title={Unified Perceptual Parsing for Scene Understanding},

author={Tete Xiao and Yingcheng Liu and Bolei Zhou and Yuning Jiang and Jian Sun},

year={2018},

eprint={1807.10221},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@article{hong2021deep,

title={Deep Dual-resolution Networks for Real-time and Accurate Semantic Segmentation of Road Scenes},

author={Hong, Yuanduo and Pan, Huihui and Sun, Weichao and Jia, Yisong},

journal={arXiv preprint arXiv:2101.06085},

year={2021}

}

@misc{zhang2021rest,

title={ResT: An Efficient Transformer for Visual Recognition},

author={Qinglong Zhang and Yubin Yang},

year={2021},

eprint={2105.13677},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@misc{huang2021fapn,

title={FaPN: Feature-aligned Pyramid Network for Dense Image Prediction},

author={Shihua Huang and Zhichao Lu and Ran Cheng and Cheng He},

year={2021},

eprint={2108.07058},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@misc{wang2021pvtv2,

title={PVTv2: Improved Baselines with Pyramid Vision Transformer},

author={Wenhai Wang and Enze Xie and Xiang Li and Deng-Ping Fan and Kaitao Song and Ding Liang and Tong Lu and Ping Luo and Ling Shao},

year={2021},

eprint={2106.13797},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@article{Liu2021PSA,

title={Polarized Self-Attention: Towards High-quality Pixel-wise Regression},

author={Huajun Liu and Fuqiang Liu and Xinyi Fan and Dong Huang},

journal={arXiv preprint arXiv:2107.00782},

year={2021}

}

@misc{chao2019hardnet,

title={HarDNet: A Low Memory Traffic Network},

author={Ping Chao and Chao-Yang Kao and Yu-Shan Ruan and Chien-Hsiang Huang and Youn-Long Lin},

year={2019},

eprint={1909.00948},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@inproceedings{sfnet,

title={Semantic Flow for Fast and Accurate Scene Parsing},

author={Li, Xiangtai and You, Ansheng and Zhu, Zhen and Zhao, Houlong and Yang, Maoke and Yang, Kuiyuan and Tong, Yunhai},

booktitle={ECCV},

year={2020}

}

@article{Li2020SRNet,

title={Towards Efficient Scene Understanding via Squeeze Reasoning},

author={Xiangtai Li and Xia Li and Ansheng You and Li Zhang and Guang-Liang Cheng and Kuiyuan Yang and Y. Tong and Zhouchen Lin},

journal={arXiv preprint arXiv:2011.03308},

year={2020}

}

@article{Yucondnet21,

author={Yu, Changqian and Shao, Yuanjie and Gao, Changxin and Sang, Nong},

journal={IEEE Signal Processing Letters},

title={CondNet: Conditional Classifier for Scene Segmentation},

year={2021},

volume={28},

pages={758-762},

doi={10.1109/LSP.2021.3070472}

}

@misc{yan2022lawin,

title={Lawin Transformer: Improving Semantic Segmentation Transformer with Multi-Scale Representations via Large Window Attention},

author={Haotian Yan and Chuang Zhang and Ming Wu},

year={2022},

eprint={2201.01615},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@misc{yu2021metaformer,

title={MetaFormer is Actually What You Need for Vision},

author={Weihao Yu and Mi Luo and Pan Zhou and Chenyang Si and Yichen Zhou and Xinchao Wang and Jiashi Feng and Shuicheng Yan},

year={2021},

eprint={2111.11418},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@misc{wightman2021resnet,

title={ResNet strikes back: An improved training procedure in timm},

author={Ross Wightman and Hugo Touvron and Hervé Jégou},

year={2021},

eprint={2110.00476},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@misc{liu2022convnet,

title={A ConvNet for the 2020s},

author={Zhuang Liu and Hanzi Mao and Chao-Yuan Wu and Christoph Feichtenhofer and Trevor Darrell and Saining Xie},

year={2022},

eprint={2201.03545},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@misc{li2022uniformer,

title={UniFormer: Unifying Convolution and Self-attention for Visual Recognition},

author={Kunchang Li and Yali Wang and Junhao Zhang and Peng Gao and Guanglu Song and Yu Liu and Hongsheng Li and Yu Qiao},

year={2022},

eprint={2201.09450},

archivePrefix={arXiv},

primaryClass={cs.CV}

}