Zhendong Wang, Xiaodong Cun, Jianmin Bao, Wengang Zhou, Jianzhuang Liu, Houqiang Li

Paper link: [Arxiv] [CVPR]

The project is built with PyTorch 1.9.0, Python 3.7, and CUDA 11.1. Install the package dependencies with:

pip install -r requirements.txt

For SIDD training data, download the SIDD-Medium dataset from the official URL. Then generate the training patches with:

python3 generate_patches_SIDD.py --src_dir ../SIDD_Medium_Srgb/Data --tar_dir ../datasets/denoising/sidd/train

For evaluation on SIDD and DND, you can download data from here.
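To illustrate what a patch-generation step of this kind typically involves, here is a minimal sketch that samples random crop boxes for one image. It is not the code in generate_patches_SIDD.py, whose sampling strategy and patch size may differ; the function name `sample_patch_boxes` and all parameter values are hypothetical. The important point for denoising is that the same box should be applied to the noisy image and to its ground truth so the pair stays aligned.

```python
import random


def sample_patch_boxes(height, width, patch_size, num_patches, seed=0):
    """Return random (top, left, bottom, right) crop boxes inside an image.

    Hypothetical helper for illustration only; not taken from the repository.
    """
    if patch_size > height or patch_size > width:
        raise ValueError("patch_size must not exceed the image dimensions")
    rng = random.Random(seed)  # seeded so the same boxes can be reproduced
    boxes = []
    for _ in range(num_patches):
        # randint is inclusive on both ends, so the patch always fits.
        top = rng.randint(0, height - patch_size)
        left = rng.randint(0, width - patch_size)
        boxes.append((top, left, top + patch_size, left + patch_size))
    return boxes


if __name__ == "__main__":
    # Assumed example sizes; apply each box to both the noisy and clean image.
    for box in sample_patch_boxes(height=512, width=768, patch_size=256, num_patches=3):
        print(box)
```

Seeding the generator makes the patch set reproducible, which helps when comparing training runs.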

For training on GoPro and evaluation on GoPro, HIDE, RealBlur-J, and RealBlur-R, you can download the data from here.

Then put all the denoising data into ../datasets/denoising, and all the deblurring data into ../datasets/deblurring.
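Before training, it can help to confirm that the directories named above exist. The following is a small sketch, not part of the repository: the helper name `missing_dataset_dirs` is hypothetical, and the list contains only the paths mentioned in this README. The sub-folders inside the downloaded archives are not listed here, so they are not checked.

```python
from pathlib import Path

# Directories named in this README, relative to the parent of the repository.
EXPECTED_DIRS = (
    "datasets/denoising",
    "datasets/denoising/sidd/train",
    "datasets/deblurring",
)


def missing_dataset_dirs(base_dir):
    """Return the expected dataset directories that do not exist under base_dir."""
    base = Path(base_dir)
    return [rel for rel in EXPECTED_DIRS if not (base / rel).is_dir()]


if __name__ == "__main__":
    # ".." matches the ../datasets paths used above when run from the repo root.
    for rel in missing_dataset_dirs(".."):
        print(f"missing: ../{rel}")
```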

To train Uformer on SIDD, run:

sh script/train_denoise.sh

To train Uformer on GoPro, run:

sh script/train_motiondeblur.sh

To evaluate Uformer, run:

sh script/test.sh

To evaluate on a specific dataset, uncomment the corresponding line in script/test.sh.

We provide a simple script in model.py that calculates the FLOPs manually. You can change the configuration and run:

python3 model.py

The manually calculated GMACs in this repo differ slightly from those in the main paper, but the difference does not affect the conclusions. We will correct the paper later.
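As a rough illustration of what a manual MAC count looks like, the sketch below applies the attention complexity formulas from the Swin Transformer paper, which this code borrows from. It is not the calculation in model.py. It counts only the q/k/v/output projections and the two attention matrix products, ignoring softmax, biases, normalization, and feed-forward layers, and the function names and example sizes are assumptions.

```python
def window_attention_macs(height, width, channels, window_size):
    """MACs of one window-based self-attention layer on a height x width map."""
    tokens = height * width
    # Four linear projections (query, key, value, output), each tokens x C x C.
    projection_macs = 4 * tokens * channels * channels
    # Q @ K^T and attention @ V, each restricted to window_size**2 tokens.
    attention_macs = 2 * window_size * window_size * tokens * channels
    return projection_macs + attention_macs


def global_attention_macs(height, width, channels):
    """MACs of one global self-attention layer, for comparison."""
    tokens = height * width
    return 4 * tokens * channels * channels + 2 * tokens * tokens * channels


if __name__ == "__main__":
    # Assumed example: 128 x 128 feature map, 32 channels, 8 x 8 windows.
    windowed = window_attention_macs(128, 128, 32, 8)
    full = global_attention_macs(128, 128, 32)
    print(f"window attention: {windowed / 1e9:.3f} GMACs")
    print(f"global attention: {full / 1e9:.3f} GMACs")
```

The attention term grows linearly with the number of tokens in the windowed case and quadratically in the global case, which is why small accounting choices (for example, whether projections or the feed-forward layers are included) can shift a reported GMACs figure.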

If you find this project useful in your research, please consider citing:

@InProceedings{Wang_2022_CVPR,

author = {Wang, Zhendong and Cun, Xiaodong and Bao, Jianmin and Zhou, Wengang and Liu, Jianzhuang and Li, Houqiang},

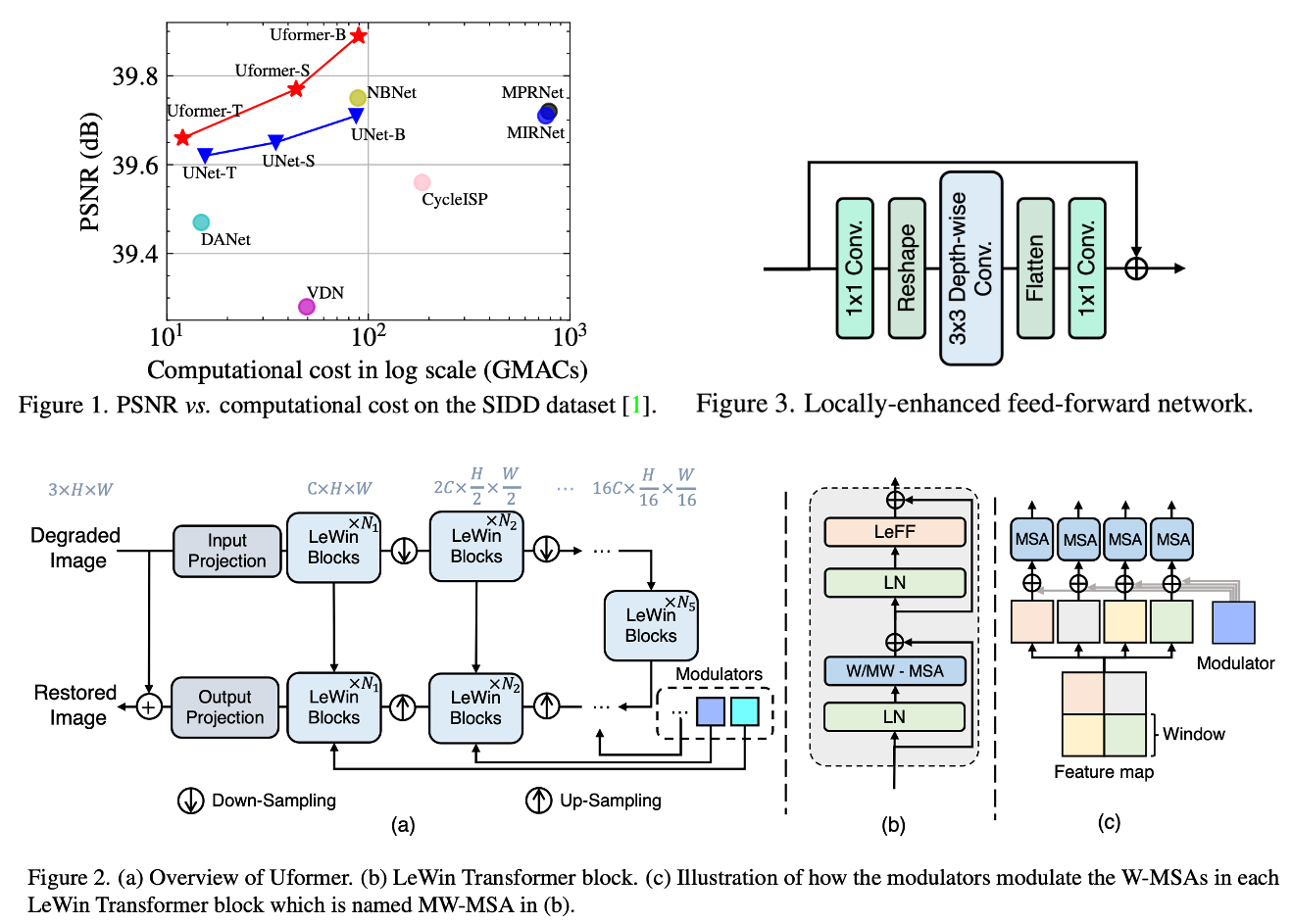

title = {Uformer: A General U-Shaped Transformer for Image Restoration},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {17683-17693}

}

This code borrows heavily from MIRNet and SwinTransformer.

Please contact us if you have any questions or suggestions (Zhendong Wang [email protected], Xiaodong Cun [email protected]).