[Langya Bang] Chinese large-model arena: the leading models you care about are all here

The latest leaderboard of Chinese large models

SuperCLUE: a comprehensive evaluation benchmark for Chinese general-purpose large models

*** update ****

Chinese task benchmark: run 10 major tasks & 9 models with one click, with a detailed evaluation:

Language Understanding Evaluation benchmark for Chinese (CLUE benchmark): run 10 tasks & 9 baselines with one line of code, with a detailed performance comparison.

Release of the pre-trained ALBERT_Chinese models:

Trained on 30G+ of raw Chinese text; xxlarge, small, and other versions are available. The target is to match state-of-the-art performance in Chinese with 30% fewer parameters. 2019-Oct-7, during China's National Day holiday.

The corpus will continue to expand...

Phase I target: 10 Chinese corpora at the million scale & 3 Chinese corpora at the ten-million scale (May 1, 2019)

Phase II target: 30 Chinese corpora at the million scale & 10 Chinese corpora at the ten-million scale & 1 Chinese corpus at the hundred-million scale (December 31, 2019)

Update: added a high-quality community Q&A dataset in JSON format (webtext2019zh), which can be used to train very large NLP models; added a translation corpus of 5.2 million pairs (translation2019zh).

Chinese text is everywhere, yet obtaining a large Chinese corpus is not easy and is sometimes very difficult. As of early 2019, ordinary practitioners, researchers, and students had no good channel for obtaining one. When I wanted to train Chinese word vectors, I searched Baidu and GitHub for a long time and found very little: the corpus was too small, the data was too old, or the required processing was too complicated.

Have you run into this problem too?

This project is our modest contribution toward solving it.

Download: Google Drive or Baidu Cloud Drive

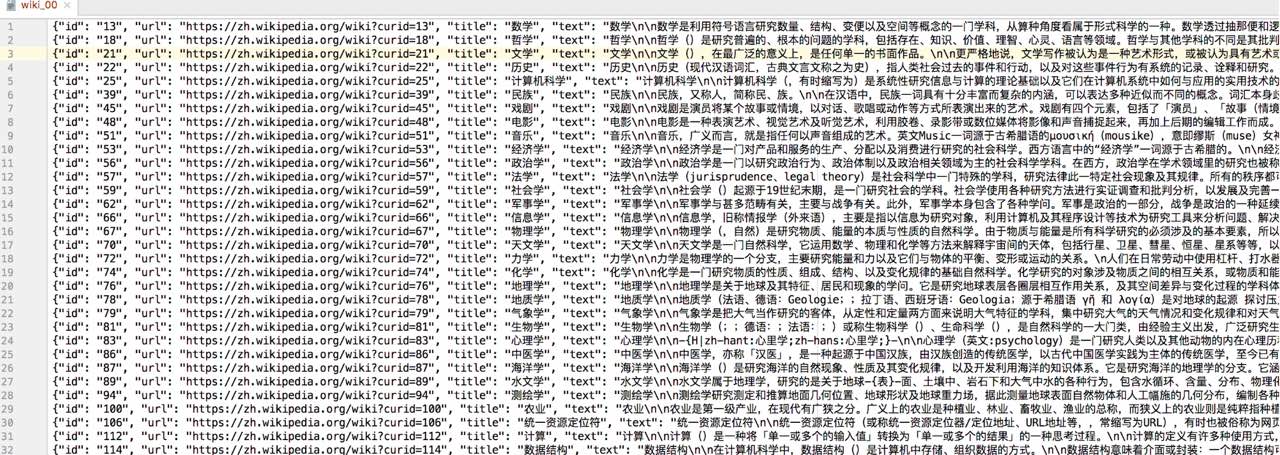

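Can be used as a general-purpose Chinese corpus, for pre-training or for building word vectors, and also for building knowledge-based question answering.

{"id":<id>,"url":<url>,"title":<title>,"text":<text>}

where title is the title of the entry and text is the body; paragraphs are separated by "\n\n".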

{"id": "53", "url": "https://zh.wikipedia.org/wiki?curid=53", "title": "经济学", "text": "经济学\n\n经济学是一门对产品和服务的生产、分配以及消费进行研究的社会科学。西方语言中的“经济学”一词源于古希腊的。\n\n经济学注重的是研究经济行为者在一个经济体系下的行为,以及他们彼此之间的互动。在现代,经济学的教材通常将这门领域的研究分为总体经济学和个体经济学。微观经济学检视一个社会里基本层次的行为,包括个体的行为者(例如个人、公司、买家或卖家)以及与市场的互动。而宏观经济学则分析整个经济体和其议题,包括失业、通货膨胀、经济成长、财政和货币政策等。..."}

经济学

经济学是一门对产品和服务的生产、分配以及消费进行研究的社会科学。西方语言中的“经济学”一词源于古希腊的。

经济学注重的是研究经济行为者在一个经济体系下的行为,以及他们彼此之间的互动。在现代,经济学的教材通常将这门领域的研究分为总体经济学和个体经济学。微观经济学检视一个社会里基本层次的行为,包括个体的行为者(例如个人、公司、买家或卖家)以及与市场的互动。而宏观经济学则分析整个经济体和其议题,包括失业、通货膨胀、经济成长、财政和货币政策等。

其他的对照还包括了实证经济学(研究「是什么」)以及规范经济学(研究「应该是什么」)、经济理论与实用经济学、行为经济学与理性选择经济学、主流经济学(研究理性-个体-均衡等)与非主流经济学(研究体制-历史-社会结构等)。

经济学的分析也被用在其他各种领域上,主要领域包括了商业、金融、和政府等,但同时也包括了如健康、犯罪、教育、法律、政治、社会架构、宗教、战争、和科学等等。到了21世纪初,经济学在社会科学领域各方面不断扩张影响力,使得有些学者讽刺地称其为「经济学帝国主义」。

在现代对于经济学的定义有数种说法,其中有许多说法因为发展自不同的领域或理论而有截然不同的定义,苏格兰哲学家和经济学家亚当·斯密在1776年将政治经济学定义为「国民财富的性质和原因的研究」,他说:

让-巴蒂斯特·赛伊在1803年将经济学从公共政策里独立出来,并定义其为对于财富之生产、分配、和消费的学问。另一方面,托马斯·卡莱尔则讽刺的称经济学为「忧郁的科学」(Dismal science),不过这一词最早是由马尔萨斯在1798年提出。约翰·斯图尔特·密尔在1844年提出了一个以社会科学定义经济学的角度:

.....
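As a rough illustration of how a record in this format might be consumed, the sketch below parses one record and splits its body into paragraphs. It assumes the data files hold one JSON object per line and that the paragraph separator (which appears as "nn" in some renderings of this page) is the escape sequence "\n\n"; `iter_records`, `split_paragraphs`, and the shortened sample line are illustrative names and data, not part of the released dataset.

```python
import json


def iter_records(lines):
    """Yield one dict per non-empty line (assumes one JSON object per line)."""
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)


def split_paragraphs(text):
    """Split an article body on the assumed blank-line separator."""
    return [part.strip() for part in text.split("\n\n") if part.strip()]


# Shortened stand-in for a single line of a data file.
sample_lines = [
    '{"id": "53", "url": "https://zh.wikipedia.org/wiki?curid=53", '
    '"title": "经济学", "text": "经济学\\n\\n经济学是一门社会科学。"}'
]

for record in iter_records(sample_lines):
    paragraphs = split_paragraphs(record["text"])
    print(record["title"], len(paragraphs))
```

To read a real file, the same generator could be fed an open file handle, for example `iter_records(open(path, encoding="utf-8"))`, where `path` is wherever you unpacked the download.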

Download: Google Drive or Baidu Cloud Drive (password: k265)

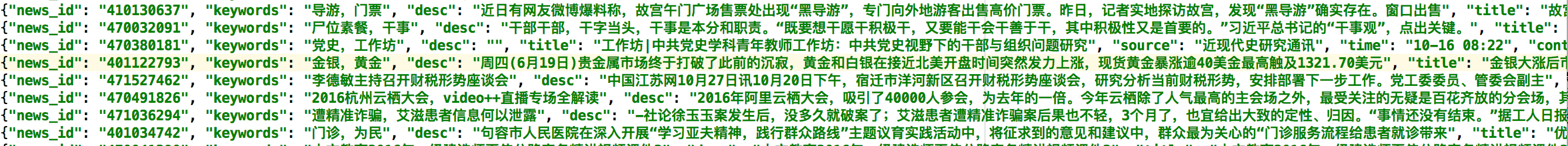

Contains 2.5 million news articles from 63,000 media sources. Each article includes a title, keywords, a description, and the body text.

Dataset split: the data is deduplicated and divided into three parts. Training set: 2.43 million; validation set: 77,000; test set: tens of thousands, not available for download.

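Can be used as a [general-purpose Chinese corpus], to train [word vectors], or as a [pre-training] corpus;

can also be used to train a [title generation] model or a [keyword generation] model (select the records whose keywords differ from the title);

news types can also be distinguished by news source.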

{'news_id': <news_id>,'title':<title>,'content':<content>,'source': <source>,'time':<time>,'keywords': <keywords>,'desc': <desc>}

where title is the news headline, content is the body text, keywords holds the keywords, desc is the description, source is the news source, and time is the publication time.

{"news_id": "610130831", "keywords": "导游,门票","title": "故宫淡季门票40元 “黑导游”卖外地客140元", "desc": "近日有网友微博爆料称,故宫午门广场售票处出现“黑导游”,专门向外地游客出售高价门票。昨日,记者实地探访故宫,发现“黑导游”确实存在。窗口出售", "source": "新华网", "time": "03-22 12:00", "content": "近日有网友微博爆料称,故宫午门广场售票处出现“黑导游”,专门向外地游客出售高价门票。昨日,记者实地探访故宫,发现“黑导游”确实存在。窗口出售40元的门票,被“黑导游”加价出售,最高加到140元。故宫方面表示,请游客务必通过正规渠道购买门票,避免上当受骗遭受损失。目前单笔门票购买流程不过几秒钟,耐心排队购票也不会等待太长时间。....再反弹”的态势,打击黑导游需要游客配合,通过正规渠道购买门票。"}
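The two suggested uses (title generation and keyword generation) could be prepared along the following lines. This is only a sketch: it assumes keywords are separated by ASCII commas as in the sample record, and it reads "keywords that differ from the title" as "at least one keyword does not literally occur in the title", which is one possible interpretation. All function names are hypothetical.

```python
def keyword_list(record):
    """Split the comma-separated keywords field into a clean list."""
    return [k.strip() for k in record.get("keywords", "").split(",") if k.strip()]


def has_novel_keyword(record):
    """True if at least one keyword does not literally appear in the title."""
    return any(k not in record["title"] for k in keyword_list(record))


def title_generation_pair(record):
    """Return (source, target) = (content, title) for a title-generation model."""
    return record["content"], record["title"]


# Fields taken from the sample record above; content is elided here.
sample = {
    "title": "故宫淡季门票40元 “黑导游”卖外地客140元",
    "keywords": "导游,门票",
    "content": "...",
}

print(keyword_list(sample))
print(has_novel_keyword(sample))
```

For the sample record both keywords already occur in the title, so under this interpretation it would be skipped when building keyword-generation training data.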

Download: Google Drive or Baidu Cloud Drive (password: fu45)

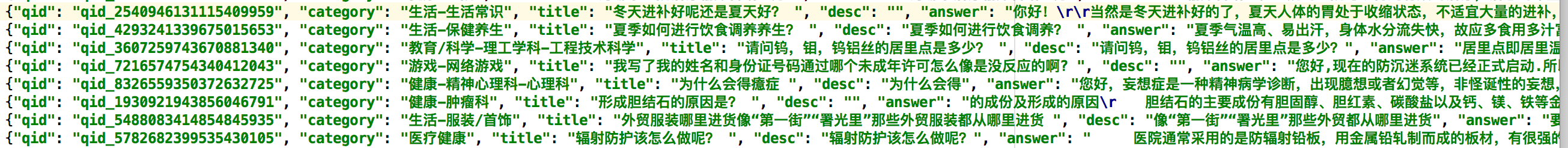

Contains 1.5 million pre-filtered, high-quality question-answer pairs, each assigned to a category. There are 492 categories in total, of which 434 occur at least 10 times.

Dataset split: the data is deduplicated and divided into three parts. Training set: 1.425 million; validation set: 45,000; test set: tens of thousands, not available for download.

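Can be used as a general-purpose Chinese corpus, to train word vectors, or as a pre-training corpus; can also be used to build encyclopedia-style question answering. The category labels are particularly useful: they support supervised training, which helps build better sentence-representation models, sentence-similarity tasks, and so on.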

{"qid":<qid>,"category":<category>,"title":<title>,"desc":<desc>,"answer":<answer>}

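where category is the category of the question, title is the question title, and desc is the question description, which may be empty or identical to the title.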

{"qid": "qid_2540946131115409959", "category": "生活知识", "title": "冬天进补好一些呢,还是夏天进步好啊? ", "desc": "", "answer": "你好!\r\r当然是冬天进补好的了,夏天人体的胃处于收缩状态,不适宜大量的进补,所以我们有时候说:“夏天就要吃些清淡的,就是这个道理的。”\r\r不过,秋季进补要注意“四忌” 一忌多多益善。任何补药服用过量都有害。认为“多吃补药,有病治病,无病强身”是不的。过量进补会加重脾胃、肝脏负担。在夏季里,人们由于喝冷饮,常食冻品,多有脾胃功能减弱的现象,这时候如果突然大量进补,会骤然加重脾胃及肝脏的负担,使长期处于疲弱的消化器官难于承受,导致消化器官功能紊乱。 \r\r二忌以药代食。重药物轻食物的做法是不科学的,许多食物也是好的滋补品。如多吃荠菜可治疗高血压;多吃萝卜可健胃消食,顺气宽胸;多吃山药能补脾胃。日常食用的胡桃、芝麻、花生、红枣、扁豆等也是进补的佳品。\r\r三忌越贵越好。每个人的身体状况不同,因此与之相适应的补品也是不同的。价格昂贵的补品如燕窝、人参之类并非对每个人都适合。每种进补品都有一定的对象和适应症,应以实用有效为滋补原则,缺啥补啥。 \r\r四忌只补肉类。秋季适当食用牛羊肉进补效果好。但经过夏季后,由于脾胃尚未完全恢复到正常功能,因此过于油腻的食品不易消化吸收。另外,体内过多的脂类、糖类等物质堆积可能誘发心脑血管病。"}
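Since the category label is the main supervised signal here, it can help to look at the category distribution first, for instance to reproduce a statistic like "categories that occur at least 10 times". The following is a minimal sketch over already-parsed records; the helper names and the toy records (including the placeholder category `example_rare`) are made up for illustration.

```python
from collections import Counter


def category_counts(records):
    """Count how many records fall into each category."""
    return Counter(record["category"] for record in records)


def frequent_categories(records, min_count=10):
    """Keep only categories that occur at least min_count times."""
    counts = category_counts(records)
    return {cat: n for cat, n in counts.items() if n >= min_count}


# Toy records; "example_rare" is a placeholder, not a real category.
toy_records = [{"category": "生活知识"}] * 3 + [{"category": "example_rare"}]

print(frequent_categories(toy_records, min_count=2))
```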

You are welcome to report your model's accuracy on the validation set. Task 1: category prediction.

A report includes: #1) accuracy on the validation set; #2) a one-page PDF describing the model, the method, and how it was run; #3) runnable source code (optional).

Based on #2 and #3, we will run the model on the test set and report its test-set accuracy. For teams that provide only #1 and #2, the validation-set results can still be displayed but will be marked as unverified.
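For reference, accuracy on the validation set is simply the fraction of questions whose predicted category equals the gold category. The sketch below pairs it with a majority-class baseline, which is a common sanity check and not something this project prescribes; the function names and toy labels (`other_category` is a placeholder) are illustrative only.

```python
from collections import Counter


def accuracy(gold, predicted):
    """Fraction of positions where the predicted label equals the gold label."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    if not gold:
        return 0.0
    return sum(g == p for g, p in zip(gold, predicted)) / len(gold)


def majority_baseline(train_labels):
    """Return the most frequent label in the training set."""
    return Counter(train_labels).most_common(1)[0][0]


# Toy labels standing in for the real training and validation categories.
train_labels = ["生活知识", "生活知识", "other_category"]
valid_labels = ["生活知识", "other_category", "生活知识", "生活知识"]

guess = majority_baseline(train_labels)
print(accuracy(valid_labels, [guess] * len(valid_labels)))
```

Any real model should comfortably beat the number this baseline produces on the actual validation set.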

Download: Google Drive

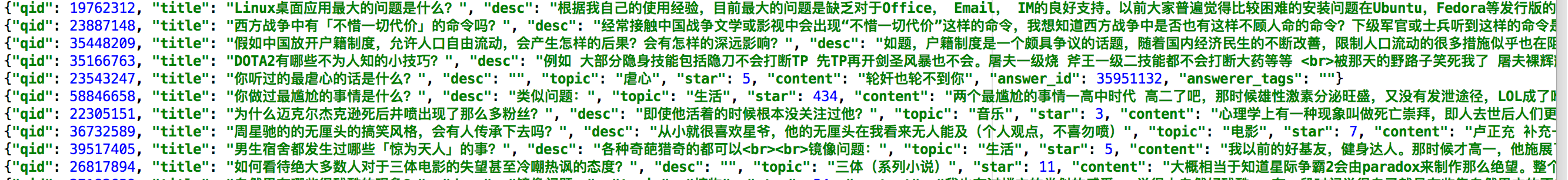

Contains 4.1 million pre-filtered, high-quality questions and replies. Each question belongs to a [topic]; there are 28,000 topics in total, covering a very wide range of subjects.

The dataset was filtered from 14 million original Q&A items by keeping only replies with at least 3 likes, which suggests that a reply is reasonably good or interesting, and this yields a high-quality dataset.

Each question has a topic, a description, and one or more replies; each reply also carries a like count, a reply ID, and the replier's tags.

Dataset split: the data is deduplicated and divided into three parts. Training set: 4.12 million; validation set: 68,000; test set a: 68,000; test set b: not available for download.

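1) Build encyclopedia-style question answering: given a question, build a retrieval system that returns a reply, or generate a reply; or use relevant keywords to select the data for your domain from the community Q&A collection.

2) Train a topic prediction model: given a question (and/or its description), predict the topic it belongs to.

3) Train a community question answering (cQA) system: for the one-question-many-answers scenario, given a question, find the most relevant question, then find the best answer based on the quality of the different replies and the relevance between question and answer.

4) Use as a general-purpose Chinese corpus, for pre-training large models or for training word vectors. The category information is also useful: it supports supervised training, which helps build better sentence-representation models, sentence-similarity tasks, and so on.

5) Use the like count as an extra signal to predict the popularity of a reply or to train an answer-scoring system.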

{"qid":<qid>,"title":<title>,"desc":<desc>,"topic":<topic>,"star":<star>,"content":<content>,"answer_id":<answer_id>,"answerer_tags":<answerer_tags>}

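where qid is the question ID, title is the question title, and desc is the question description, which may be empty; topic is the topic the question belongs to, star is the number of likes the reply received, content is the text of the reply, answer_id is the reply ID, and answerer_tags holds the tags attached to the replier.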

{"qid": 65618973, "title": "AlphaGo只会下围棋吗?阿法狗能写小说吗?", "desc": "那么现在会不会有智能机器人能从事文学创作?<br>如果有,能写出什么水平的作品?", "topic": "机器人", "star": 3, "content": "AlphaGo只会下围棋,因为它的设计目的,架构,技术方案以及训练数据,都是围绕下围棋这个核心进行的。它在围棋领域的突破,证明了深度学习深度强化学习MCTS技术在围棋领域的有效性,并且取得了重大的PR效果。AlphaGo不会写小说,它是专用的,不会做跨出它领域的其它事情,比如语音识别,人脸识别,自动驾驶,写小说或者理解小说。如果要写小说,需要用到自然语言处理(NLP))中的自然语言生成技术,那是人工智能领域一个", "answer_id": 545576062, "answerer_tags": "人工智能@游戏业"}
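Because each record pairs one question with one reply, a question with several replies appears as several records sharing a qid. A minimal sketch of regrouping them and ranking replies by like count, assuming the records have already been parsed into dicts; `replies_by_question` and the toy records are hypothetical.

```python
from collections import defaultdict


def replies_by_question(records, min_star=3):
    """Map each qid to its replies with star >= min_star, most-liked first."""
    grouped = defaultdict(list)
    for record in records:
        if record["star"] >= min_star:
            grouped[record["qid"]].append(record)
    return {
        qid: sorted(replies, key=lambda r: r["star"], reverse=True)
        for qid, replies in grouped.items()
    }


# Toy records with only the fields this sketch needs.
toy_records = [
    {"qid": 1, "answer_id": 10, "star": 3},
    {"qid": 1, "answer_id": 11, "star": 25},
    {"qid": 2, "answer_id": 20, "star": 7},
]

for qid, replies in replies_by_question(toy_records).items():
    print(qid, [reply["answer_id"] for reply in replies])
```

Raising `min_star` gives a stricter quality filter, and the star values themselves could serve as regression targets for an answer-scoring model.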

Task 1: topic prediction.

A report includes: #1) accuracy on the validation set; #2) a one-page PDF describing the model, the method, and how it was run; #3) runnable source code (optional).

Based on #2 and #3, we will run the model on the test set and report its test-set accuracy. For teams that provide only #1 and #2, the validation-set results can still be displayed but will be marked as unverified.

Task 2: train a community question answering (cQA) system.

Requirements: use MAP (mean average precision) as the evaluation metric, build a test set suitable for the ranking problem, and report results on that test set.

Task 3: using this dataset (webtext2019zh) and following OpenAI's GPT-2, train a Chinese text generation model, then test its zero-shot performance on other datasets or evaluate it as a language model.
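For readers unfamiliar with the metric, MAP averages, over all questions, the average precision of each ranked answer list. The sketch below uses the common simplification that every relevant answer appears somewhere in the ranked list, so average precision is normalised by the number of relevant items found; relevance is given as 0/1 flags in ranked order. Function names are illustrative.

```python
def average_precision(ranked_relevance):
    """Average precision for one ranked list of 0/1 relevance flags."""
    hits = 0
    precision_sum = 0.0
    for rank, relevant in enumerate(ranked_relevance, start=1):
        if relevant:
            hits += 1
            precision_sum += hits / rank  # precision at this rank
    return precision_sum / hits if hits else 0.0


def mean_average_precision(all_rankings):
    """Mean of the per-question average precision values."""
    if not all_rankings:
        return 0.0
    return sum(average_precision(r) for r in all_rankings) / len(all_rankings)


# Two toy questions: relevant answers ranked 1st and 3rd, then ranked 2nd.
rankings = [[1, 0, 1], [0, 1]]
print(mean_average_precision(rankings))
```

If the test set contains relevant answers that the system may fail to retrieve at all, the denominator should instead be the total number of relevant answers for that question.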

Download: Google Drive

5.2 million Chinese-English parallel pairs. Each pair contains one English text and its Chinese counterpart. In either language, the text is in most cases a complete sentence with punctuation.

A parallel pair averages 36 characters in Chinese and 19 words in English (a word being a token such as "she").

Dataset split: the data is deduplicated and divided into three parts. Training set: 5.16 million; validation set: 39,000; test set: tens of thousands, not available for download.

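Can be used to train a Chinese-English translation system, from Chinese to English or from English to Chinese;

because it contains millions of Chinese sentences, the Chinese side alone can be extracted as a general-purpose Chinese corpus, to train word vectors or as a pre-training corpus. The English side can be used in the same way;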

{"english": <english>, "chinese": <chinese>}

where english is the English sentence and chinese is the Chinese sentence; the two correspond one to one.

{"english": "In Italy, there is no real public pressure for a new, fairer tax system.", "chinese": "在意大利,公众不会真的向政府施压,要求实行新的、更公平的税收制度。"}
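Extracting one side of the pairs as a monolingual corpus, or checking length statistics like the averages quoted above, takes only a few lines. This sketch assumes Chinese length is counted in characters and English length in whitespace-separated tokens, which may not match exactly how the published averages were computed; the helper names are hypothetical.

```python
def chinese_side(pairs):
    """Extract only the Chinese sentences, e.g. for a monolingual corpus."""
    return [pair["chinese"] for pair in pairs]


def average_lengths(pairs):
    """Return (mean Chinese characters, mean English whitespace tokens)."""
    pairs = list(pairs)
    if not pairs:
        return 0.0, 0.0
    zh_chars = sum(len(pair["chinese"]) for pair in pairs)
    en_words = sum(len(pair["english"].split()) for pair in pairs)
    return zh_chars / len(pairs), en_words / len(pairs)


# The sample pair shown above.
sample_pairs = [
    {
        "english": "In Italy, there is no real public pressure for a new, fairer tax system.",
        "chinese": "在意大利,公众不会真的向政府施压,要求实行新的、更公平的税收制度。",
    }
]

print(chinese_side(sample_pairs))
print(average_lengths(sample_pairs))
```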

To contribute a Chinese corpus, please send an email to [email protected]

Our goal is to jointly build a large-scale, open, shared Chinese corpus that advances the field of Chinese natural language processing. For any corpus that is provided and adopted into the project, in addition to listing the contributor (optional), we will select the top 20 contributors based on the quality and size of their corpora and send them a keyboard, mouse, display, wireless headphones, smart speaker, or another item of equivalent value as thanks.

Add your Chinese corpus here by sending us an email.

If there is any issue with the data, you can also contact us; we will handle it within one week.

Thank you for your understanding.

    @misc{bright_xu_2019_3402023,
      author    = {Bright Xu},
      title     = {NLP Chinese Corpus: Large Scale Chinese Corpus for NLP},
      month     = sep,
      year      = 2019,
      doi       = {10.5281/zenodo.3402023},
      version   = {1.0},
      publisher = {Zenodo},
      url       = {https://doi.org/10.5281/zenodo.3402023}
    }

If you use this project's datasets, please also email us the title of your paper or work.

To contribute a Chinese corpus, please send an email to [email protected]

An experiment in building a word vector model from a Chinese Wikipedia corpus using Python

A tool for extracting plain text from Wikipedia dumps

OpenCC (Open Chinese Convert) in pure Python

Latest Chinese Wikipedia dumps